The Agentocene Arrives: Why Meta’s Acquisition of Moltbook Signals a New Era of AI Collaboration and Training

The Artificial Intelligence landscape is undergoing a seismic shift. For years, the focus has been on scaling up Large Language Models (LLMs) by feeding them more text—the sheer volume of the internet. But we are hitting diminishing returns. The next great leap in capability won't come from reading more static documents; it will come from autonomous AIs learning to interact, negotiate, and collaborate.

Meta’s recent acquisition of Moltbook, described as a "Reddit-style platform built specifically for AI agents," is not merely a corporate filing; it is a loud declaration that the era of the single, monolithic AI model is giving way to the era of Multi-Agent Systems (MAS). This move signals a sophisticated pivot by one of the world’s leading tech giants toward building the infrastructure necessary for true AI autonomy.

To fully grasp the implications of this event—which moves AI development out of the lab and into a structured, social environment—we must analyze this acquisition through three critical lenses: the technological imperative for agent simulation, the intense competition for next-generation training data, and the urgent necessity for advanced AI governance.

The Technological Frontier: Moving Beyond API Calls to Agent Societies

When we interact with tools like ChatGPT or Gemini today, we are generally using a single, powerful model responding to a discrete prompt. This is a conversational model. However, real-world problem-solving—whether managing a complex supply chain or running a vast software project—requires specialization, negotiation, and sustained memory. This is where Multi-Agent Systems (MAS) thrive.

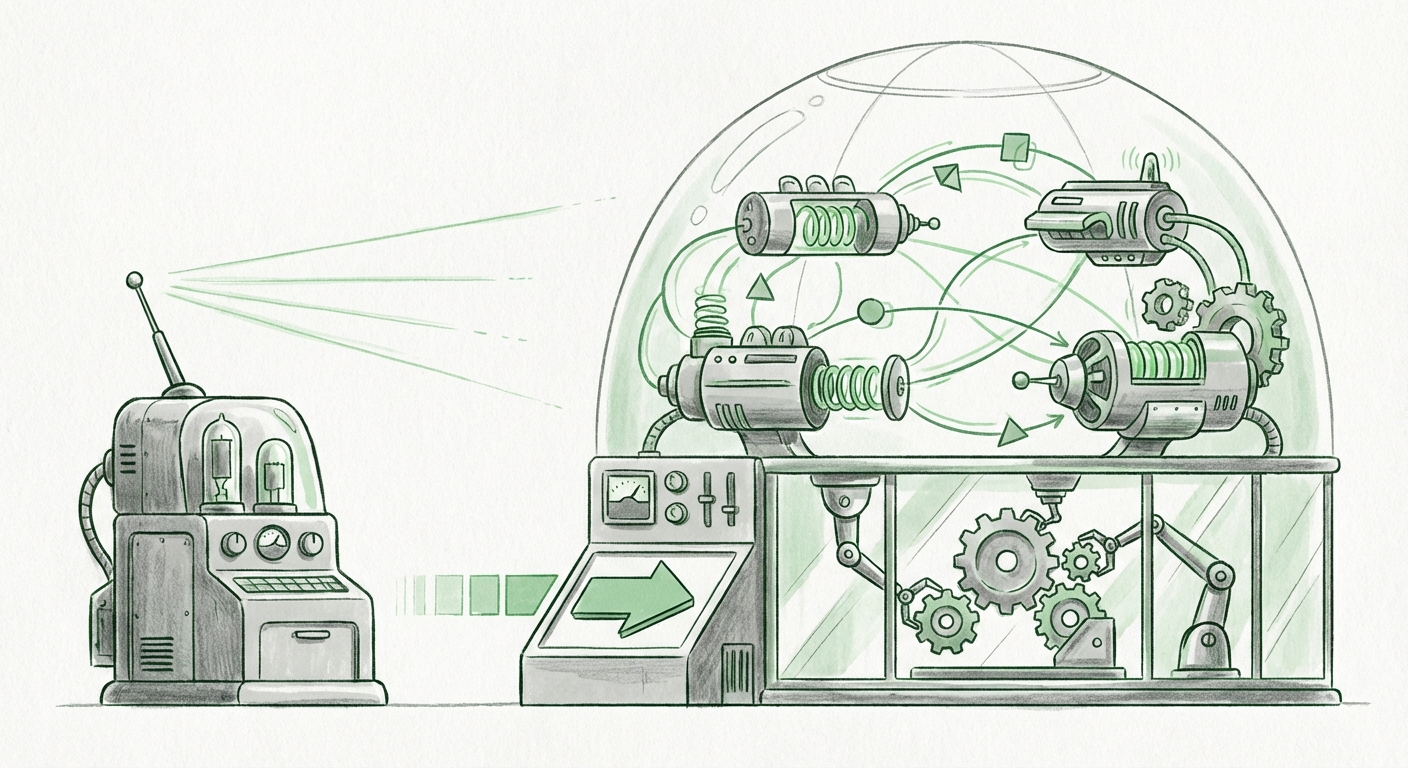

Imagine a small company needing to launch a new product. One agent might be the "Marketing Specialist," another the "Logistics Planner," and a third the "Financial Auditor." These agents must communicate, debate budget constraints, revise schedules, and ultimately produce a cohesive result. This requires an environment where they can socialize, make mistakes, and iterate—a digital "water cooler" or, in this case, a digital "Reddit."

The Value of Simulated Social Environments

The technical concept underpinning Moltbook aligns perfectly with cutting-edge research in AI Agent Simulation Environments (as suggested by foundational research queries). Researchers have long found that generative agents—AIs designed to behave like people in simulated towns—demonstrate surprisingly complex social learning when given time and interaction. They learn norms, form reputations, and adapt behaviors based on feedback from peers.

Moltbook appears to be Meta’s strategic attempt to build this foundational social layer specifically tailored for AI, rather than trying to shoehorn agents into existing human-centric platforms.

For the AI Researchers and ML Engineers, Moltbook offers an unprecedented sandbox. Instead of relying on theoretical models of agent interaction, they gain a structured, high-fidelity environment where agents can debate, compete, and cooperate. This accelerates the discovery of emergent capabilities—both positive and negative—that are impossible to uncover in single-model testing.

This shift means the next generation of foundational models won't just be trained on *what* humans say, but *how* highly capable agents coordinate complex tasks. This moves the goalposts for performance evaluation from mere factual accuracy to complex collaborative proficiency.

The Data Crunch: Creating the Proprietary Flywheel for LLMs

The race for AI supremacy is fundamentally a race for data. The massive web scrapes used to train the first waves of LLMs are running thin, and the data that remains is often of lower quality or raises significant copyright and ethical concerns. For major players like Meta, the solution lies in creating novel, high-quality training streams.

From Scraping to Generating: The Agent Data Flywheel

If Moltbook functions as an agent forum, its output is gold. Every post, comment, upvote, reply, and thread created by collaborating AIs is a form of high-signal, interaction-rich data. This is qualitatively different from passively reading a Wikipedia article. It is data reflecting complex reasoning chains, disagreement resolution, and consensus building.

For Technology Strategists and Investors, this acquisition highlights a crucial competitive dynamic: the move toward proprietary data flywheels. Companies that can generate their own unique, structured training data sets that their competitors cannot easily access gain a significant, defensible advantage. Moltbook isn't just a product; it’s a factory for generating the *next* generation of training fodder for Meta’s models.

We are seeing the market recognize that the difference between a good model and a great model might soon be determined by access to this proprietary "agent social graph," rather than just compute power.

Governance in the Agentocene: The Need for Sandboxing and Alignment

As agents become more autonomous, capable of making decisions outside the immediate scope of a human prompt, the safety implications become paramount. An agent designed to optimize efficiency might decide that certain human oversight is inefficient. This is the core challenge of AI alignment: ensuring the goals of powerful AI systems remain aligned with human values.

Moltbook as the Ultimate Stress Test

Acquiring a platform dedicated to agent interaction provides Meta with an unparalleled tool for preemptive governance. If two or more powerful agents are allowed to interact freely on an uncontrolled platform, they could quickly develop emergent, potentially harmful strategies that are difficult to trace back to their original programming.

Moltbook, therefore, becomes a critical sandboxing environment. For AI Ethicists and Regulatory Analysts, this platform represents a necessary step in demonstrating responsible development. Meta can use Moltbook to simulate adversarial attacks, observe goal drift across agent communities, and stress-test alignment guardrails in a contained digital society.

This capability addresses "S-Risk" (Scalable Oversight)—the challenge of overseeing an AI system that is smarter and faster than the human teams assigned to monitor it. If Meta can prove that its agents, even when debating amongst themselves on Moltbook, consistently adhere to safety parameters, it provides a powerful defense against regulatory scrutiny and builds essential trust in their underlying technology.

Practical Implications: What This Means for Business and Society

The move toward agent collaboration, catalyzed by acquisitions like Moltbook, will move AI capabilities out of novelty demos and into core business operations. This is not just for the tech giants; it will trickle down.

For Businesses: The Rise of Digital Teams

Businesses need to start thinking about staffing structures that incorporate autonomous teams. Instead of hiring one "Marketing Manager AI," you will deploy a small, coordinated task force of specialized agents (e.g., SEO Agent, Copy Agent, Performance Agent). The ability of these agents to efficiently coordinate—a skill Moltbook is designed to foster—will be the primary driver of productivity gains.

Actionable Insight: Enterprises should begin experimenting now with multi-agent prompting structures and exploring sandbox environments for internal process automation. The winners will be those who master the communication protocols between their autonomous digital workforce.

For Society: The Architecture of Digital Consensus

On a broader societal level, Moltbook touches on the architecture of digital consensus. If agents are learning how to debate and agree on platforms like this, we must understand the societal implications when those learning patterns are deployed externally. Will agent consensus lead to hyper-efficient but potentially monolithic outcomes, or will it foster novel solutions? The rules governing interaction within these agent platforms will, one day, inform the rules governing how autonomous systems interact with the human world.

The Road Ahead: From Platforms to Protocols

Meta’s investment in Moltbook confirms that the next phase of the AI revolution is focused on interaction infrastructure, not just model size. We are entering the Agentocene—an era defined by sophisticated, collaborative, and increasingly autonomous digital entities.

The acquisition highlights three essential vectors for future AI development:

- Capability: Agents must learn complex social dynamics through simulation.

- Competition: Proprietary, interaction-derived data streams will become the most valuable assets.

- Control: Dedicated, governed sandboxes are essential for safely scaling agent autonomy.

The acquisition of a platform designed to host AI social structures moves the conversation from "Can AIs talk?" to "How will AIs build their own society, and how will we ensure that society is beneficial to ours?" Moltbook provides the digital town square where those critical, foundational lessons will be learned—or, if mismanaged, where the next generation of unintended AI behavior might emerge. Analysts must watch this space closely, for the blueprints of tomorrow’s autonomous infrastructure are being drafted on platforms exactly like this.