The GPU Cartel and the New Frontier: Why Nvidia's Partnership with Mira Murati's Lab Signals a Major AI Shift

In the high-stakes world of artificial intelligence, foundational breakthroughs these days depend less on clever algorithms and more on access to sheer computational power: the silicon backbone known as the GPU. When news broke that Nvidia, the undisputed titan of AI hardware, had entered a long-term partnership with Thinking Machines Lab, the startup founded by former OpenAI executive Mira Murati, the industry took notice. This isn't just another collaboration; it’s a strategic signal about the future structure of AI development, the economics of training frontier models, and the enduring dominance of Nvidia’s ecosystem.

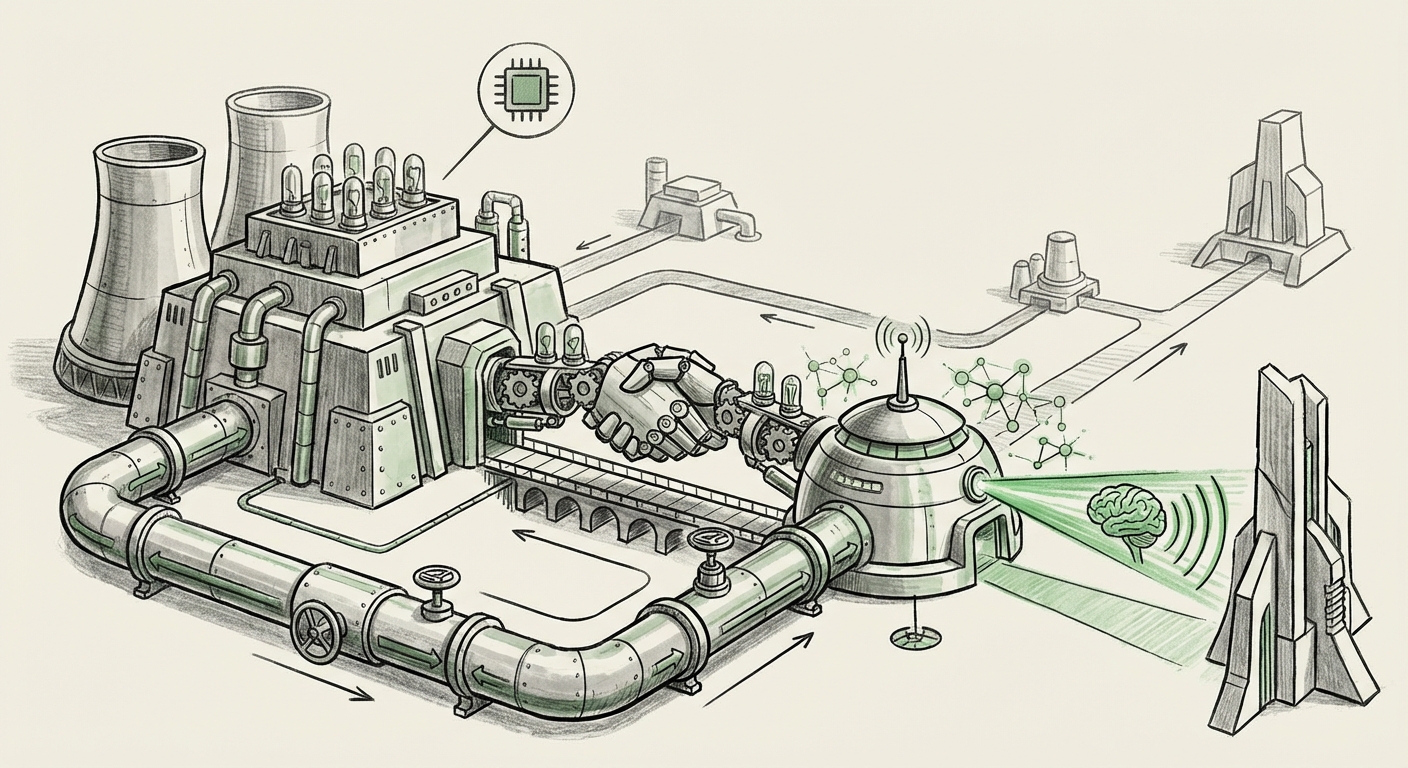

To understand the weight of this announcement, we must move beyond the headline and examine the three pillars shaping the modern AI landscape: the central role of compute, the rise of well-funded independent talent, and the need for deep hardware integration.

The New Gatekeeper: Nvidia’s Unshakeable Control Over Compute

If AI models are the new oil, Nvidia’s Graphics Processing Units (GPUs), especially the A100 and H100 series and the newer Blackwell architecture, are the refineries. Developing a cutting-edge Large Language Model (LLM) or multimodal system requires staggering amounts of specialized compute, with training runs alone often costing tens or even hundreds of millions of dollars. This reality has created an intense bottleneck, making access to Nvidia hardware the primary limiting factor for innovation outside the largest tech corporations.

The partnership between Nvidia and Thinking Machines Lab underscores a critical reality: Nvidia is strategically choosing who gets to play at the frontier.

Nvidia's approach to allocating GPUs to AI startups looks like a deliberate effort to foster an ecosystem that is both dependent on and deeply integrated with its stack. For Nvidia, funding or partnering with a promising new lab like Murati’s serves multiple purposes:

- Early Adopter Feedback: Thinking Machines Lab becomes a crucial, high-level testbed for new hardware features, software updates (like CUDA improvements), and performance metrics before a wider rollout.

- Ecosystem Lock-in: Long-term commitments ensure that Murati’s team builds its foundational workflows around Nvidia’s proprietary tools, making it extremely costly to switch to competitors (like AMD or custom silicon) down the line.

- Strategic Hedging: By supplying compute to high-profile, independent labs, Nvidia ensures that it benefits from potential disruptive breakthroughs, regardless of which major tech giant ultimately deploys the final product.

For any business relying on AI infrastructure, this means that the pipeline for securing next-generation silicon is intensely managed. Access is not just purchased; it is earned through strategic alignment with the hardware provider.

The Talent Exodus: Why Independent Labs Are the Next Disruptors

The last few years have witnessed a significant migration of elite AI talent from established powerhouses like Google DeepMind and OpenAI. Mira Murati’s move is emblematic of this trend. Top researchers, fueled by massive venture capital infusions, are increasingly choosing to build their own ventures in search of the agility and mission focus that large organizations often lack.

This shift suggests that the next major architectural breakthrough may not come from the entities that currently dominate the conversation. As startups like Mistral AI have shown by challenging established norms, independent labs offer fresh perspectives unburdened by legacy codebases or conflicting corporate mandates. (Other labs founded by former OpenAI and DeepMind executives show the same pattern of intellectual capital flowing outward.)

For the business community, this decentralization of talent is both a threat and an opportunity:

- The Opportunity: Businesses might find that these lean, focused labs develop niche, superior models for specific enterprise tasks faster than generalized models from incumbents.

- The Threat: The speed of innovation is increasing across the board. A competitor that partners with an agile, well-resourced lab could leapfrog established AI strategies overnight.

Murati’s partnership with Nvidia effectively equips this independent powerhouse with the necessary fuel. It suggests that the barrier to entry for competing with the largest labs is no longer just securing funding, but securing the right compute relationship.

Beyond the Chip: Long-Term Integration and Architectural Co-Development

The phrase "long-term partnership" is the key here. This is unlikely to be a simple cloud computing contract. It implies deep integration, particularly around future hardware generations beyond the Blackwell architecture. This is where the technical implications become profound.

When labs cooperate long-term with hardware manufacturers, they are often involved in designing the software layers that best exploit the hardware's strengths. This is critical for efficiency. Training a massive model is like trying to fill an Olympic swimming pool with a garden hose; optimization is everything. Because a cluster rarely sustains anything close to its peak throughput, gains in compute efficiency and specialized architectures can determine whether a training run takes six months or a year, a difference that at frontier scale can be worth hundreds of millions of dollars.
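
To make that arithmetic concrete, here is a rough back-of-envelope sketch in Python. It assumes the commonly used rule of thumb that training a dense transformer costs about 6 floating-point operations per parameter per token. Every number in it (model size, token count, cluster size, peak throughput, hourly price) is a hypothetical placeholder chosen for illustration, not a figure from Nvidia or Thinking Machines Lab.

```python
SECONDS_PER_DAY = 86400.0


def training_days(params, tokens, n_gpus, peak_flops_per_gpu, utilization):
    """Estimate wall-clock training time in days.

    Assumption: total training compute is roughly 6 * params * tokens FLOPs,
    a rule of thumb for dense transformers rather than an exact figure.
    `utilization` is the fraction of peak throughput actually sustained.
    """
    total_flops = 6.0 * params * tokens
    sustained_flops_per_second = n_gpus * peak_flops_per_gpu * utilization
    return total_flops / sustained_flops_per_second / SECONDS_PER_DAY


def training_cost_usd(days, n_gpus, usd_per_gpu_hour):
    """Cost of keeping the whole cluster busy for `days` days."""
    return days * 24.0 * n_gpus * usd_per_gpu_hour


if __name__ == "__main__":
    # Hypothetical run: every value below is an illustrative assumption.
    run = dict(params=5e11, tokens=1e13, n_gpus=8000, peak_flops_per_gpu=1e15)
    for utilization in (0.2, 0.4):
        days = training_days(utilization=utilization, **run)
        cost = training_cost_usd(days, run["n_gpus"], usd_per_gpu_hour=3.0)
        print(f"utilization={utilization:.0%}  days={days:.0f}  cost=${cost:,.0f}")
```

In this simple model, doubling sustained utilization halves both the duration and the bill, which is why low-level software optimization matters as much as the number of chips a lab can secure.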

What Co-Development Looks Like for the Future

This collaboration likely involves:

- Custom Kernels and Libraries: Thinking Machines Lab might be fine-tuning low-level code to exploit specific tensor core configurations on future Nvidia chips, leading to performance gains unavailable to general users.

- Novel Model Architectures: If Murati’s team is exploring non-standard AI structures—perhaps highly sparse models or radically different transformer designs—Nvidia needs that data to ensure their next GPUs efficiently support those designs.

- Democratizing Breakthroughs (Selectively): By ensuring these breakthrough models run optimally on Nvidia hardware, the company reinforces its stack as the de facto standard for the rest of the industry.

This technical alignment keeps the innovation cycle tightly coupled. If Thinking Machines Lab manages to produce a paradigm-shifting model, it will most likely run best on the hardware that helped birth it.

Practical Implications for Business and Innovation

What does this consolidation of talent and hardware power mean for the average enterprise or the next wave of AI builders?

1. The Widening Compute Divide

The reality is becoming starker: the gap between those who can access the best compute (like Murati's lab, backed by Nvidia) and those who cannot is growing. Businesses must decide whether to build models in-house using scarce resources or focus entirely on fine-tuning and deploying models created by these heavily resourced entities.

2. The Importance of Cloud Ecosystems

For companies that cannot secure direct hardware deals, the partnership reinforces the strategic importance of Nvidia’s cloud partners (AWS, Azure, GCP). These platforms host the hardware, and the terms set by Nvidia cascade directly down to cloud pricing and availability for enterprises.

3. Focus on Data Moats, Not Model Moats

If the compute advantage is increasingly concentrated, competitive advantage shifts elsewhere. Businesses should prioritize building proprietary, high-quality data sets (the 'data moat') that can make even a slightly less powerful, commercially available model perform exceptionally well within their specific domain. You might not be able to train the next frontier model, but you can fine-tune a GPT-4-class model for your industry on proprietary knowledge.

Actionable Insights for Navigating the New AI Map

For those looking to thrive in this new reality defined by strategic hardware alignments, here are three actionable insights:

- Audit Your Compute Risk Profile: If your competitive AI strategy relies on training large models from scratch, you must immediately assess your long-term access to H100/Blackwell capacity. Consider multi-cloud strategies or alternative accelerators, even if they require extra software engineering effort.

- Invest in MLOps for Efficiency: Since compute is expensive, prioritize MLOps tools and talent that ensure every GPU hour is used optimally. This means mastering quantization, pruning, and efficient inference serving. Every percentage point of efficiency gained translates directly to operational savings.

- Track Talent Clusters: Keep a close watch on which former employees of major labs are founding new ventures. These founders often carry crucial architectural insights and strong hardware relationships (like Murati’s with Nvidia). These partnerships are often precursors to the next major technological inflection point.
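
For readers unfamiliar with the efficiency techniques named in the MLOps point above, the following is a minimal Python sketch of two of them: symmetric int8 quantization and magnitude pruning. It is a toy illustration on a plain list of weights, with hypothetical function names, and not how any particular framework implements these methods; production systems typically work per channel or per block and rely on optimized kernels.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to the int8 range [-127, 127].

    Returns the integer codes and the scale needed to recover approximate
    floating-point values. Storing 1 byte instead of 4 per weight cuts the
    memory footprint roughly fourfold relative to float32.
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights, fraction):
    """Zero out the `fraction` of weights with the smallest magnitude."""
    n_to_prune = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_to_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]  # hypothetical toy weights
    codes, scale = quantize_int8(weights)
    print(codes, dequantize(codes, scale))
    print(prune_by_magnitude(weights, 0.5))
```

The trade-off in both cases is a small loss of precision in exchange for less memory and, on hardware with fast low-precision or sparse arithmetic, cheaper inference.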

The collaboration between Nvidia and Thinking Machines Lab is more than a simple press release; it’s a blueprint for how frontier AI will be developed over the next half-decade. It confirms that the path to the most advanced artificial intelligence remains heavily paved, and guarded, by the silicon infrastructure giant.