The New AI Power Brokers: How Nvidia's Partnership with Mira Murati's Lab Reshapes the Compute Landscape

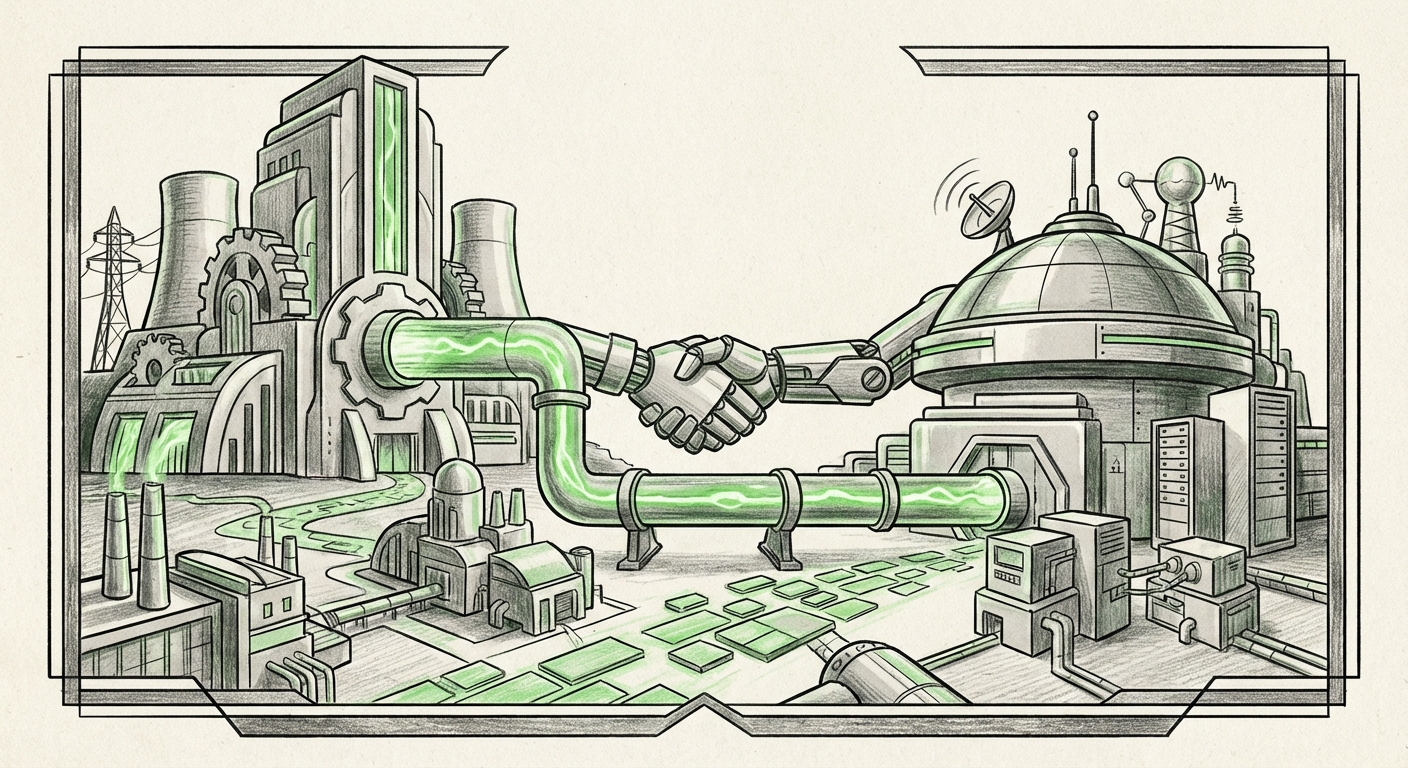

The world of artificial intelligence is experiencing a seismic shift. It’s no longer just about the models; it’s about the infrastructure that builds them and the people who guide their creation. The recently announced long-term partnership between Nvidia, the dominant supplier of AI hardware, and Mira Murati’s new venture, Thinking Machines Lab, is far more than a simple business deal. It is a strategic realignment that signals where elite AI development is heading next.

For years, the narrative of AI progress has centered on a few corporate behemoths—the large labs, often backed by immense capital and, crucially, immense compute power. Murati’s move, following her prominent role at OpenAI, coupled with this strategic Nvidia alignment, suggests a future where frontier AI innovation might be more decentralized, albeit still heavily reliant on securing access to the world’s most powerful chips.

The Hardware Bottleneck: Why Nvidia's Blessing Matters

To understand the significance of this partnership, one must first appreciate the role of Nvidia. Think of AI models like large cities; the software is the architecture, but the GPUs (like Nvidia’s A100s and the cutting-edge Blackwell chips) are the raw electricity, the fiber optic cables, and the foundational concrete. Without cutting-edge hardware, even the best researchers hit a wall.

A central constraint in AI today is compute scarcity. Training the next generation of massive language models requires tens of thousands of the most advanced GPUs, costing hundreds of millions of dollars. Any new lab that secures a long-term infrastructure commitment from Nvidia therefore vaults into the top tier of research organizations on compute access alone. The move suggests that Nvidia isn't just selling chips; it is strategically choosing which next-generation projects to power.
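To make the scale of that claim concrete, here is a rough back-of-envelope sketch in Python. Every number in it is an assumption chosen for illustration: the per-GPU price of $30,000 and the 50% overhead for networking, power, and facilities are hypothetical placeholders, not figures from Nvidia or from this partnership, and the function name `cluster_hardware_cost` is invented for this example.

```python
def cluster_hardware_cost(num_gpus: int,
                          unit_price_usd: float,
                          overhead_fraction: float = 0.0) -> float:
    """Estimate the up-front cost of a GPU cluster in US dollars.

    overhead_fraction is an assumed multiplier for everything around the
    GPUs (networking, power, facilities), e.g. 0.5 means +50%.
    """
    if num_gpus < 0 or unit_price_usd < 0 or overhead_fraction < 0:
        raise ValueError("inputs must be non-negative")
    return num_gpus * unit_price_usd * (1 + overhead_fraction)


if __name__ == "__main__":
    # Hypothetical inputs: $30,000 per GPU and 50% overhead are placeholders.
    for n in (10_000, 20_000, 50_000):
        cost = cluster_hardware_cost(n, unit_price_usd=30_000, overhead_fraction=0.5)
        print(f"{n:>6,} GPUs -> ${cost / 1e6:,.0f}M")
```

Under these assumed inputs, even the smallest configuration lands in the hundreds of millions of dollars, which is why a long-term supply commitment matters more to a new lab than any single purchase. Swap in your own price and overhead estimates to see how sensitive the total is.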

Nvidia's Strategy: Beyond the Hyperscalers

Nvidia's evolving partnership strategy, with its growing focus on new AI startups and infrastructure commitments, suggests the company is keen to maintain its dominant position across the entire AI ecosystem. By forging deep, long-term bonds with high-potential, newly independent labs, Nvidia hedges its bets. It helps ensure that as new architectural breakthroughs occur, whether in efficiency or model scale, its hardware remains the essential backbone. This supports future demand even if one of the established Big Tech players shifts to more vertically integrated, in-house silicon in the long run.

For businesses and investors tracking the semiconductor roadmap, this shows Nvidia is actively incentivizing innovation outside the usual suspects, effectively decentralizing the *training* environment while maintaining centralized *control* over the necessary tools.

The Post-OpenAI Exodus: Murati’s Vision for Thinking Machines

Mira Murati is a known quantity in the AI community. Her departure from OpenAI, where she served as CTO, was highly scrutinized. When a top executive leaves a leading organization to start their own venture, it inevitably sparks speculation about internal friction, philosophical differences, or ambitious plans that the existing structure couldn't support.

The central question becomes: what is the **core thesis** of Thinking Machines Lab that necessitated this move? What is publicly known about the lab’s initial funding and mission suggests a possible divergence from the general-purpose AGI race, perhaps toward areas where current models struggle, such as **grounding, long-term reasoning, or embodied intelligence (robotics)**.

This pivot is crucial. If Thinking Machines Lab is focused on developing AI that interacts more reliably with the real world—AI that requires deep, complex, iterative training rather than just vast web data ingestion—then their compute needs are highly specialized. Securing Nvidia’s commitment is not just about raw power; it's about accessing early hardware and dedicated engineering support crucial for iterating on complex, physical-world simulations and testing.

This reflects a continuing talent migration: elite leaders are building environments that better align with their specific research goals, which in the short term often leads to specialized rather than generalized AI outcomes.

The Competitive Edge: Access in a Tight Market

The ability of a startup to lock in a multi-year supply deal with Nvidia is a massive competitive signal, especially given the broader competition for AI infrastructure and the constrained supply of chips like the Blackwell series.

Currently, demand for leading-edge AI accelerators far outstrips supply. Cloud providers, national labs, and major tech firms are all scrambling for allocations. When a new entity, especially one founded by a prominent figure, secures a long-term commitment, it suggests that the entity has negotiated favorable terms for future hardware delivery. That translates directly into a competitive moat.

Practical Implication for Competitors: Any other emerging lab or research group aiming for similar scale must now compete not just on funding, but on the quality and strategic importance of their proposed research to Nvidia’s overall roadmap. Compute access is becoming the primary differentiator between those who *can* build frontier models and those who *cannot*.

This dynamic also influences M&A and talent acquisition. Startups that can promise access to elite compute are inherently more attractive to top researchers seeking to execute ambitious projects.

Future Implications: A Specialized, Aligned AI Ecosystem

What does this Nvidia-Murati alignment truly mean for the future trajectory of AI technology and its application in the real world?

1. The Rise of Infrastructure-Aware Model Design

When researchers know they have guaranteed access to upcoming generations of hardware, their model design philosophy changes. Instead of optimizing for what is currently available (a common constraint), they can design models for future capabilities. This fosters bolder research, pushing the boundaries of what's possible in terms of context window size, multimodality, and energy efficiency.

2. Deepening Vertical Specialization

The era of "one model to rule them all" may be giving way to an era of highly optimized, powerful, but *specialized* systems. If Thinking Machines Lab focuses on robotics or scientific discovery (areas that leverage complex, high-fidelity simulation), the models they produce will be deeply integrated with the specialized data pipelines that Nvidia’s platform enables. This moves AI beyond simple text generation and into complex decision-making systems.

3. New Metrics for AI Success

For investors and business leaders, the focus shifts. Success will no longer be measured solely by benchmark scores on public datasets. It will be measured by the successful integration of AI systems into complex, high-value operational domains—the very domains that require the high-reliability compute guaranteed by this partnership.

Actionable Insights for Businesses and Developers

This shift in infrastructure alignment has clear repercussions across the technology sector:

- Re-evaluate Cloud Spend Strategy: While major cloud providers (AWS, Azure, GCP) remain essential, look for emerging, specialized compute providers or consortiums that are securing direct hardware deals similar to the one seen here. These smaller entities might offer specialized services or custom hardware configurations tailored for specific workloads (like reinforcement learning or fluid dynamics simulation) that general cloud instances cannot match.

- Focus on Data Quality and Grounding: If leading labs like Murati’s are moving toward real-world interaction, businesses must clean and structure their proprietary data rigorously. The next leap in AI utility will come from models that can correctly interpret domain-specific data, not just scrape the public web.

- Talent Scouting Beyond the Majors: The talent migration from large labs continues. While OpenAI and Google DeepMind remain magnets, look closely at new, well-funded ventures like Thinking Machines Lab. They represent concentrated pools of expertise looking to deploy innovative architectures outside established corporate silos.

The partnership between Nvidia and Thinking Machines Lab is a microcosm of the present AI race: a contest defined by infrastructure access and focused leadership. It confirms that the future of AI is not just about who has the biggest model, but who has the most reliable and strategically aligned foundation upon which to build the next revolutionary system. We are witnessing the formation of new power centers designed to leverage hardware dominance to achieve specific, ambitious technological milestones, potentially accelerating specialized AI capabilities faster than previously anticipated.