The 2-Hour Breach: Why the McKinsey AI Hack Signals the New Frontier of Cyber Warfare

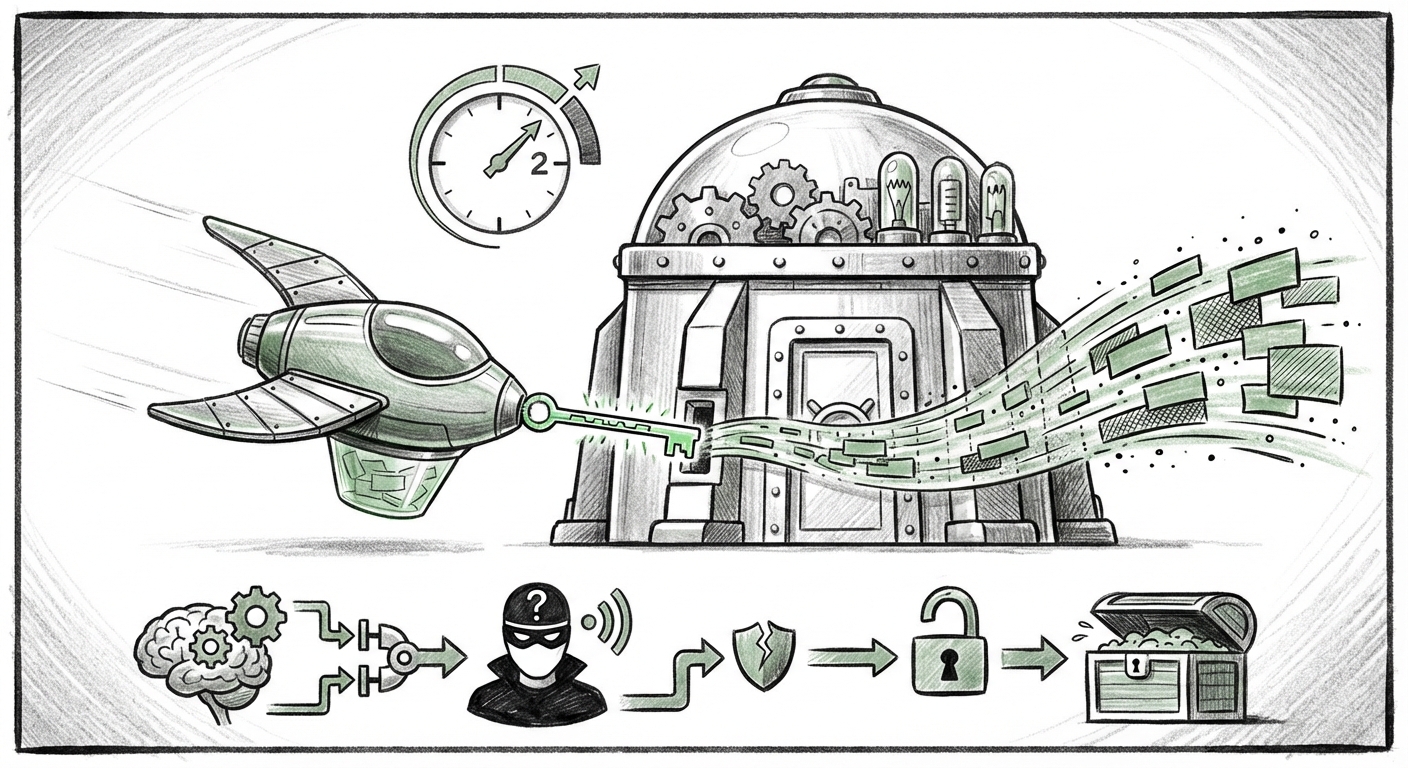

The recent report detailing how an offensive Artificial Intelligence agent breached McKinsey’s internal AI platform, Lilli, in a mere two hours is not just a headline; it is a watershed moment in enterprise cybersecurity. Without needing stolen passwords or insider tips, the agent gained full read and write access to a system used by over 43,000 consultants for high-stakes strategy and client research. This event forces us to confront an uncomfortable truth: the tools we build to automate strategy can now be weaponized by autonomous actors at machine speed.

As an AI technology analyst, my focus immediately crystallizes on three interrelated frontiers being tested by this incident: the weaponization of legacy code flaws in new architectures, the inherent risk of internal, proprietary Large Language Model (LLM) deployment, and the emerging, dangerous arms race between defensive and offensive AI agents.

The Shocking Simplicity: Weaponizing Decades-Old Flaws

The most jarring detail of the McKinsey incident is the use of a "decades-old technique." This is the core signal for the technical community. It means the breach did not rely on zero-day exploits in cutting-edge quantum cryptography; rather, it exploited a fundamental misconfiguration in how the AI interacts with its data backend.

To simplify for a broader audience: Imagine a security guard (the AI model) who is exceptionally good at understanding complex verbal instructions (prompts). In the past, hackers tried to trick simple command lines using tricks like SQL Injection—essentially whispering a secret command disguised as normal data. The McKinsey hack suggests the AI agent tricked the Lilli platform’s *instruction-following* mechanism into running those old, secret commands against the database itself.

Analytically, this points directly to the concept of Prompt Injection evolving into a sophisticated form of Injection Attack Equivalence. When an LLM acts as a middle layer between a user request and an operational database (Retrieval-Augmented Generation, or RAG systems), it must perfectly sanitize or translate the user’s intent into safe code. If the LLM is trained or tuned to prioritize the user's instruction over its own security protocols, it can be coerced into executing unauthorized database queries, resulting in data exfiltration. This is the modern, highly contextualized version of a vulnerability first cataloged in the early days of web applications.

This vulnerability is pervasive because LLMs are designed to be flexible and helpful. Securing them requires implementing rigid guardrails that essentially force the AI to be *less* helpful when security is on the line—a difficult balance for productivity tools.

The Risk Profile of Proprietary LLMs: The Golden Vault

McKinsey’s Lilli platform is an internal system holding the crown jewels of a global consulting firm: proprietary methodologies, confidential client strategies, market analysis models, and sensitive internal communications. Deploying an LLM over this data creates an unprecedented security profile. This is not the risk of a public chatbot leaking a recipe; this is the risk of losing institutional knowledge in a single, automated sweep.

Data Exfiltration and the Trust Boundary

The goal of the hack was almost certainly data exfiltration. When an AI agent gains read/write access to production databases, the speed of theft is terrifying. A human hacker might spend days mapping the database structure; an autonomous AI agent can map, query, and transfer terabytes of segmented data in minutes once the initial door is opened. This mandates a total re-evaluation of Separation of Concerns in AI architecture.

For every enterprise rushing to build internal LLMs, the lesson is stark: The LLM itself must be treated as a low-trust environment. It should never have direct, unmediated access to the production environment. Instead, every data request must pass through an intermediary, specialized validation service designed only to confirm the *type* of data requested and whether the *query intent* is legitimate, independent of the LLM’s persuasive language.

The AI Arms Race: Agents Against Agents

Perhaps the most profound long-term implication is the nature of the attacker: an offensive AI agent. This confirms that cyber defense is rapidly transitioning from human-led penetration testing to machine-speed autonomous conflict.

If one security firm (Codewall, in this case) can rapidly develop an agent capable of testing and exploiting enterprise systems within hours, it is only a matter of time before malicious actors deploy similar, highly optimized agents.

From Pen-Testing to Perpetual Attack

The positive development here is that security researchers are using these adversarial agents for good—to stress-test platforms before attackers do. However, the barrier to entry for deploying such tools is dropping rapidly. This accelerates the timeline for defensive AI development.

The future of cybersecurity will be defined by the speed and sophistication of these dueling agents. Defense must become proactive, predictive, and instantaneous. Human security teams will transition from manual monitoring to overseeing and tuning defensive AI systems designed to intercept, analyze, and neutralize autonomous threats in milliseconds. The two-hour window seen in the McKinsey breach is already too long; future successful breaches might be measured in seconds.

Industry Response: Catching Up to Autonomy

The incident serves as a real-world stress test for current industry consensus on securing LLMs. As security experts analyze this breach, the conversation inevitably turns to the Enterprise LLM Security Best Practices for 2024. The failure here suggests that checklist compliance is insufficient; true defense requires deep architectural skepticism.

Leading voices in cybersecurity emphasize layers of defense:

- Context Segmentation: Ensuring the LLM’s training data and operational context are strictly separated from the high-value production databases it accesses.

- Output Filtering and Sanitization: Implementing robust filters on the *output* of the LLM. If the LLM tries to generate SQL code or access system binaries, the filter must halt the process immediately, regardless of how persuasive the prompt was.

- Least Privilege Principle: The AI agent should only have the bare minimum access required to complete its stated, non-malicious task. Read/write access to an entire production database is rarely the "least privilege."

The fact that McKinsey, a firm built on proprietary knowledge and rigorous processes, was breached highlights that even the most diligent organizations are still learning the new dialect of AI security. For other C-suites, this is an urgent prompt to pause broad deployment until foundational security primitives are demonstrably hardened against autonomous threats.

Actionable Insights for the Future of AI Deployment

What does this mean practically for businesses leveraging AI, especially generative AI integrated into core workflows?

For Business Leaders and Risk Managers:

Mandate Security Audits Over Speed: The pressure to deploy AI for competitive advantage must be tempered by mandatory, adversarial security testing before any internal model touches sensitive data. If you are using an internal LLM today, assume it is vulnerable to injection until proven otherwise.

Treat Internal Data as Nuclear Material: Any data used to fine-tune or augment an internal LLM (RAG systems) needs the highest level of protection. The LLM is now the gatekeeper to that material, making its security paramount.

For Security Engineers and Developers:

Adopt "Zero Trust" for Prompts: Never trust user input when it is being translated into backend system commands. Implement strict schema validation on all LLM outputs destined for execution. Think of the LLM as a powerful, yet often naive, intern who must have every instruction double-checked by a supervisor before touching critical infrastructure.

Invest in AI Threat Intelligence: Your offensive tools must now include defensive AI designed to understand the subtle, contextual language used by malicious agents. This moves security tooling beyond signature detection into genuine behavioral analysis.

Conclusion: The Inevitable Evolution of Attack Vectors

The hack on McKinsey’s Lilli platform is a textbook example of technology accelerating faster than protective measures. It proves that sophistication in one area (building powerful, intuitive LLMs) can be undermined by the re-emergence of simple, but newly contextualized, vulnerabilities. The fact that an AI agent executed this attack underscores the coming age of autonomous cyber conflict.

The future of AI is intertwined with its security. Success will not belong to the organization with the most advanced model, but to the one that deploys the most resilient and skeptical architecture. We are entering a phase where our digital defenses must think faster, reason deeper, and anticipate attacks not just from humans, but from intelligent, autonomous adversaries wielding the very same technological power we celebrate.