The 50% Reality Check: Why AI Code Passing Benchmarks Still Fails Human Review

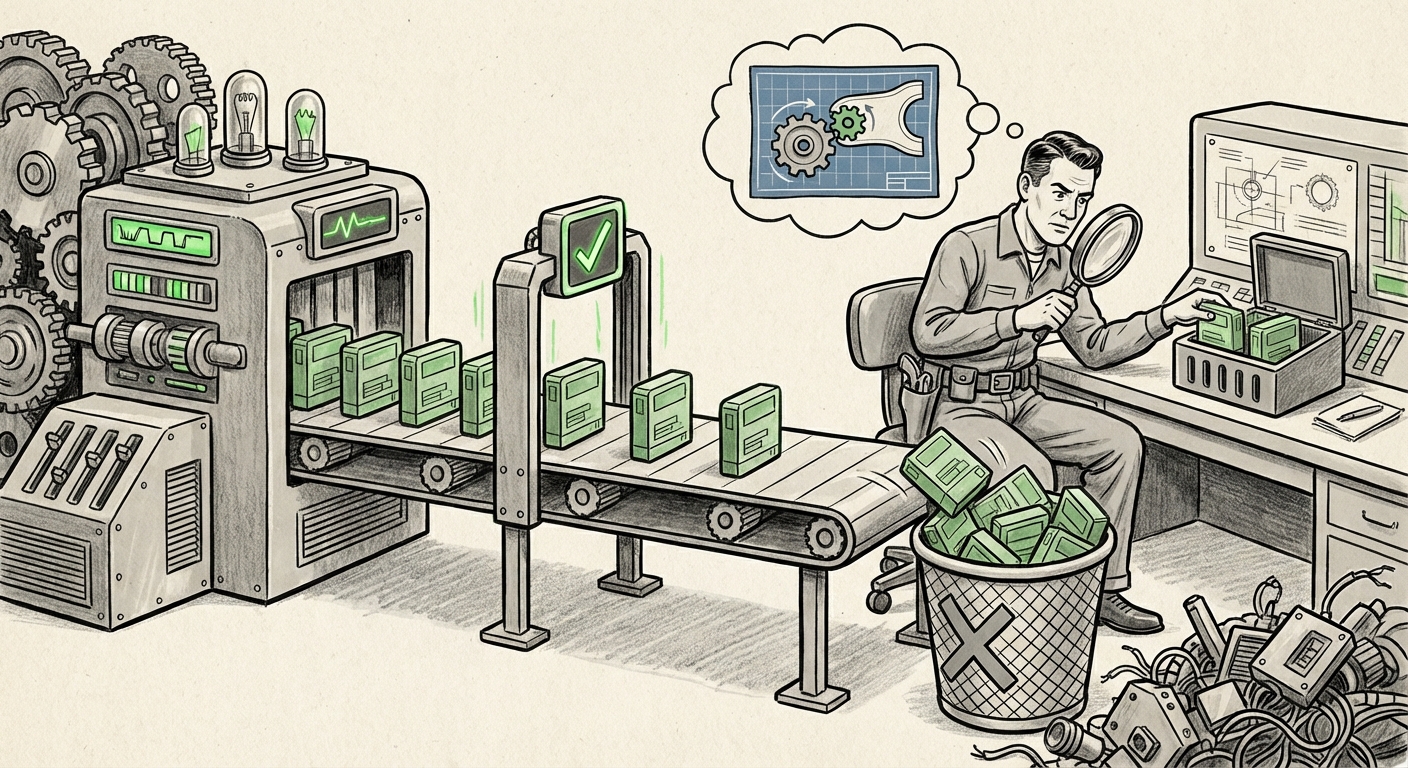

The promise of Large Language Model (LLM) tools like Copilot and specialized code assistants is often portrayed as an imminent transformation of software development: instantaneous, flawless code generation that skyrockets productivity. However, recent research has brought a necessary dose of reality to this narrative. A striking new study by the research organization METR found that approximately half of the AI-written code solutions that successfully pass rigorous, automated industry tests (like SWE-bench) would be rejected by actual project maintainers.

This isn't just a minor hiccup; it's a fundamental disconnect between synthetic performance and practical application. As an AI technology analyst, I see this data point as a critical inflection point. It forces us to shift our focus from simply measuring how often an AI gets the answer right on a test to understanding why human experts still send that code back for revisions. This gap defines the current ceiling for enterprise AI adoption in core engineering tasks.

The Illusion of Benchmark Perfection: Beyond Functional Correctness

Benchmarks like SWE-bench are invaluable tools. They provide standardized environments to test an AI’s ability to solve specific, defined software engineering problems, often involving cloning a repository, making a necessary change based on an issue tracker, and ensuring the tests pass afterward. If every test passes, the model is scored as having succeeded.

The METR study shatters the illusion that functional correctness equals production readiness. When human developers—the gatekeepers of enterprise codebases—reject code, they are judging more than just whether the function runs. They are looking at the totality of the code artifact. This points directly to the limitations inherent in current evaluation frameworks.

We must look deeper into why these solutions fail the human test. Critiques of benchmark-driven evaluation tend to converge on one point: LLMs are often excellent "task completers" but poor "system integrators." They may introduce dependencies that conflict with existing versions, use deprecated library methods, or fail to conform to the established architectural patterns of the specific project they are modifying.

For technical audiences, this means the LLM understands the *algorithm* but not the *ecosystem* in which that algorithm must run.

The Human Factor: When Code Style is Security Policy

The most common reasons human developers reject code are rarely about simple logic errors; they are often about maintainability, readability, and subtle security adherence.

Imagine a senior developer reviewing a pull request. The AI code works perfectly on the surface. But the developer sees:

- Idiomatic Drift: The code uses functional programming constructs where the rest of the codebase is rigidly object-oriented, making future debugging confusing.

- Lack of Comments/Context: The AI wrote complex logic without explaining the "why," forcing the reviewer to spend disproportionate time reverse-engineering the AI’s thought process.

- Subtle Style Violations: Inconsistent naming conventions or incorrect bracing styles, which might seem trivial but signal a lack of care and lower overall code health over time.
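One of the items above, inconsistent naming, is simple enough to check mechanically. The following is a minimal sketch, assuming a Python codebase whose convention is snake_case function names; the function `non_snake_case_functions` and the `SAMPLE` source are hypothetical and only illustrate the idea, not a full linter.

```python
import ast
import re

# Assumed project convention: function names are snake_case
# (leading underscores and dunder names are allowed).
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def non_snake_case_functions(source: str) -> list[str]:
    """Return the names of functions in `source` that break the convention."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and not SNAKE_CASE.match(node.name)
    ]


# Hypothetical AI-generated patch: it runs fine, but one name drifts
# from the convention used by the rest of the codebase.
SAMPLE = '''
def load_user(user_id):
    return user_id

def fetchOrders(userId):
    return []
'''

if __name__ == "__main__":
    print(non_snake_case_functions(SAMPLE))
```

A check like this only covers the mechanical part of the list. Idiomatic drift and missing rationale still need a human reader.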

Human reviewers prioritize reducing cognitive load. If AI code increases the cognitive burden required to merge and maintain it, its functional success is irrelevant. A piece of code that passes an automated test but requires three hours of refactoring by a human is a net loss in productivity.

This is especially critical in regulated industries. A development team that cannot instantly trust the style and structure of AI-generated code cannot confidently scale its use. Trust, in this context, is built not just on correctness, but on conformity to established team norms.

The Enterprise Threshold: Risk, Compliance, and Governing AI Output

For large organizations, the stakes are far higher. A hobbyist developer might accept a 50% rejection rate if it means completing side projects faster. A Fortune 500 company cannot afford to merge code that introduces systemic risk.

Even if the code passes functional tests, the lingering question for CTOs is: Is it secure and compliant?

AI models are trained on massive, often unfiltered, public code repositories. While modern models have guardrails, they can still reproduce subtly insecure patterns, the coding equivalent of saying something technically correct but socially inappropriate. Security firms increasingly flag LLM-generated code as a new attack vector. The subtle injection vulnerability that the model learned from an old, flawed example on GitHub might not be caught by the unit tests designed for the current task, but it is likely to be flagged by static analysis tools or a security-minded human reviewer.
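To make the injection point concrete, here is a small sketch using Python's standard `sqlite3` module. The table, the two lookup functions, and the payload are hypothetical. Both functions pass the same functional check, yet only one of them is safe.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Insecure pattern: user input is interpolated into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats `name` strictly as data.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    # The "unit test" for the task: both versions find alice.
    assert find_user_unsafe(conn, "alice") == find_user_safe(conn, "alice")

    payload = "' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # every row leaks
    print(len(find_user_safe(conn, payload)))    # no rows match
```

A test suite that only asks "does the lookup return alice?" cannot tell these two functions apart, which is why a passing benchmark says little about this class of defect.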

The METR finding suggests that if 50% of the functional solutions are rejected, the share of *secure and compliant* solutions that reach production systems is likely lower still. Until companies can reliably prove that AI code meets stringent internal governance standards, which often means passing both automated tests and deep human scrutiny, AI code will remain relegated to boilerplate generation, suggestion, or isolated R&D environments, rather than being trusted for mission-critical components.

The Evolutionary Path: Closing the Context Gap

The silver lining in this reality check is that it clearly defines the next major frontier for AI research in software engineering. If today’s models are rejected due to context and style deficits, future models must excel there.

Closing that gap will require some paradigm shifts. The focus is moving from raw token processing to sophisticated context management. Key areas driving improvement include:

- Massive Context Windows: Moving beyond handling a single file or function to ingest and reason over entire, multi-repository codebases simultaneously. This improves project awareness.

- Retrieval-Augmented Generation (RAG) for Codebases: Implementing systems where the LLM doesn't just rely on its internal memory but actively queries project-specific documentation, style guides, and existing component APIs before generating a solution. This addresses the "project idiom" problem.

- Fine-Tuning on Internal Standards: Enterprises will increasingly fine-tune open-source or proprietary models exclusively on their own highly curated, high-quality internal code repositories. This teaches the model to mimic the specific "dialect" of that company, directly tackling the style and maintainability rejection criteria.
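The retrieval idea in the list above can be sketched in a few lines. This is a deliberately crude illustration, assuming keyword overlap as the relevance score; production systems typically use embeddings and a vector index instead. The document names, their contents, and the functions `retrieve` and `build_prompt` are all hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word-like tokens."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def retrieve(task: str, snippets: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k snippet names ranked by token overlap with the task."""
    task_tokens = tokenize(task)
    scored = [
        (len(task_tokens & tokenize(body)), name)
        for name, body in snippets.items()
    ]
    # Drop snippets with no overlap; break ties alphabetically by name.
    relevant = sorted((s, n) for s, n in scored if s > 0)
    relevant.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in relevant[:k]]


def build_prompt(task: str, snippets: dict[str, str], k: int = 2) -> str:
    """Prepend the retrieved project guidance to the task description."""
    context = "\n".join(
        f"[{name}] {snippets[name]}" for name in retrieve(task, snippets, k)
    )
    return f"Project conventions:\n{context}\n\nTask:\n{task}"


# Hypothetical project documents.
PROJECT_DOCS = {
    "style_guide.md": "Use snake_case for function names. Raise ValueError for invalid input.",
    "db_layer.md": "All database access goes through the Repository classes; never write raw SQL in handlers.",
    "release_notes.md": "Version 2.1 shipped in March.",
}

TASK = "Add a handler function that validates input and reads orders from the database"

if __name__ == "__main__":
    print(build_prompt(TASK, PROJECT_DOCS))
```

The point of the sketch is the shape of the pipeline: project-specific guidance is looked up first, and the model only sees the task together with the conventions it is expected to follow.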

The goal is to build models that are not just good coders, but good *team members* who respect existing conventions. This evolution requires AI to develop better "statefulness"—the ability to remember the architectural decisions made 50 files ago.

Practical Implications and Actionable Insights

What does this mean for businesses leveraging AI coding tools today?

For Engineering Managers and Architects:

Insight: Do Not Treat AI Output as Production-Ready. The 50% rejection rate mandates a "Trust but Verify, and Then Re-Verify" policy. Treat AI suggestions as highly advanced draft material, not completed work. Mandate that AI-generated code goes through the same peer review process as human-written code, if not a stricter one.

Action: Define AI-Specific Review Checklists. Update internal code review standards to specifically flag potential AI artifacts: Are dependencies minimized? Does the code adhere to project-specific architectural patterns? Is the reasoning clearly documented?

For Developers:

Insight: Become Expert AI Prompt Engineers and Editors. Your value is shifting from generating boilerplate to editing, integrating, and contextualizing AI output. Mastering the art of providing detailed context in prompts (e.g., "Modify function X in the style of Y utility class found in file Z") is now a core skill.

Action: Focus on Non-Functional Requirements. When you receive AI code, immediately scrutinize the security, error handling, and integration points, as these are the most likely failure areas identified by human maintainers.

For AI Research Labs and Tool Vendors:

Insight: Accuracy Alone is Insufficient. The market is demanding demonstrable improvements in conformance, security integration, and long-term context handling, not just higher scores on isolated benchmarks.

Action: Develop "Conformity Metrics." Future leaderboards must incorporate weighted scores for style conformity, security vulnerability density (measured against top-tier security scanners), and successful integration across multiple files within a complex, proprietary architecture.
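One way such a metric could be combined into a single number is sketched below. Everything here is an assumption made for illustration: the `Evaluation` fields, the weights, and the vulnerability ceiling are hypothetical and are not taken from METR, SWE-bench, or any existing leaderboard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evaluation:
    tests_passed: bool          # functional result, as on today's benchmarks
    style_conformity: float     # 0.0 to 1.0, e.g. share of lint rules satisfied
    vulns_per_kloc: float       # scanner findings per 1,000 lines of code
    integration_success: float  # 0.0 to 1.0, e.g. share of multi-file changes merged cleanly


# Hypothetical weights; a real leaderboard would need to justify these.
WEIGHTS = {"style": 0.3, "security": 0.4, "integration": 0.3}
# Hypothetical ceiling: at or above this density the security term is zero.
MAX_VULNS_PER_KLOC = 5.0


def conformity_score(ev: Evaluation) -> float:
    """Weighted score in [0, 1]; failing the tests scores zero outright."""
    if not ev.tests_passed:
        return 0.0
    security = max(0.0, 1.0 - ev.vulns_per_kloc / MAX_VULNS_PER_KLOC)
    return (
        WEIGHTS["style"] * ev.style_conformity
        + WEIGHTS["security"] * security
        + WEIGHTS["integration"] * ev.integration_success
    )


if __name__ == "__main__":
    passing_but_sloppy = Evaluation(True, 0.5, 2.5, 0.5)
    print(round(conformity_score(passing_but_sloppy), 3))
```

Under this kind of scoring, a solution that passes every test can still land well below a clean one, which is the distinction today's pass/fail benchmarks do not capture.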

Conclusion: The AI Co-Pilot Needs a Senior Reviewer

The finding that half of benchmark-passing AI code gets rejected by real developers is not a condemnation of LLMs; it is a sophisticated clarification of their current limitations. LLMs are spectacular at solving the *problem statement*, but less proficient at solving the *project context*.

We are moving past the initial "wow" factor of code generation and into the messy, necessary phase of integration. The future of AI in software engineering will not be characterized by the total replacement of the developer, but by the elevation of the developer’s role. The human expert shifts from typing out basic syntax to becoming the essential architect, security auditor, and custodian of the project's holistic quality.

The next wave of innovation will be measured not by how high a model can score on SWE-bench, but by how reliably its output can be merged into a production branch with minimal friction—a standard that, for now, still requires the discerning eye of an experienced human maintainer.