The 50% Code Rejection Rate: Why AI Benchmarks Are Failing the Real World Test

The promise of Large Language Models (LLMs) in software development has been revolutionary, offering the allure of vastly accelerated coding speed. Tools like GitHub Copilot are rapidly becoming standard issue for developers worldwide. Yet, a recent, sobering finding casts a harsh light on this rapid adoption: A new study by the research organization METR suggests that approximately half of the AI-written code solutions that successfully pass the popular SWE-bench benchmark would be immediately rejected by actual project maintainers.

For technology leaders, this 50% rejection rate is not just a statistical curiosity; it is a loud signal that our current methods for evaluating AI proficiency are fundamentally misaligned with the demands of production-grade software engineering. This gap between synthetic success and real-world applicability defines the next major hurdle in the age of AI-assisted development.

Deconstructing the Benchmark Illusion: Function vs. Form

SWE-bench is designed to test an AI’s ability to solve real-world issues found in GitHub repositories, often requiring modifications to existing codebases to fix bugs or implement features. On the surface, passing this test implies a high level of competency. If half of the successful submissions are still rejected by human eyes, we must ask: What are the human reviewers rejecting?

This situation highlights a core tension in current LLM evaluation. Benchmarks often reward functional correctness: does the code compile and pass the provided unit tests? Real development demands far more. Research on benchmark limitations commonly makes the same point: AI excels at solving isolated problems but struggles with the peripheral requirements of large systems. Human reviewers are trained to look beyond the immediate function and assess:

- Contextual Integration: Does the code fit seamlessly with the existing project’s architecture, patterns, and dependency structures?

- Maintainability: Is the code overly complex, poorly documented, or written in a non-standard style that will incur technical debt for the next engineer?

- Idiomatic Quality: While functional, is the solution the "right" way to solve the problem within that specific language ecosystem?

In essence, LLMs currently generate solutions that are functionally correct against the provided tests but architecturally, stylistically, or contextually deficient for a live codebase. The benchmark rewards the answer; the maintainer rewards the integration.

The Human Filter: Developer Perception and Real-World Friction

The human maintainer acts as the essential quality gatekeeper. Understanding their perspective is key to interpreting the 50% rejection rate. When developers are asked to merge AI code, they are effectively signing off on its long-term liability. Where developer surveys on tool adoption show skepticism, that skepticism is consistent with the METR finding.

Developers are not just looking for code that works once; they are looking for code that works reliably for years with minimal surprise. An AI suggestion that looks plausible but introduces subtle coupling issues, or requires significant refactoring to align with team standards, is often rejected outright. Why? Because the time saved by the initial generation is instantly lost (and often exceeded) by the time required to clean it up, debug the integration, or explain the deviation to colleagues.
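The trade-off can be made concrete with a little arithmetic. The sketch below is only an illustration: the `net_minutes_saved` function and every figure passed to it are hypothetical, chosen to show how cleanup time can flip the sign of the saving, not measured from any study.

```python
def net_minutes_saved(writing_saved: float, review: float, cleanup: float) -> float:
    """Minutes saved by generation minus minutes spent reviewing and cleaning up."""
    return writing_saved - (review + cleanup)


# Hypothetical figures, chosen only to show how the sign can flip.
clean_fit = net_minutes_saved(writing_saved=30, review=10, cleanup=0)
poor_fit = net_minutes_saved(writing_saved=30, review=10, cleanup=45)
print(clean_fit, poor_fit)
```

With these made-up inputs the suggestion that fits the codebase yields a net gain, while the one needing heavy cleanup yields a net loss, which is the situation in which a maintainer rejects outright.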

This friction creates a trust deficit. If a tool is only right half the time in a controlled setting, adoption stalls when developers realize they must scrutinize every line, negating the promised productivity gains.

The Unseen Risk: Security and Debt

The most critical reason for rejection, often hidden beneath functional tests, lies in security and long-term debt. If an LLM produces a functional solution that fails security audits—perhaps by inadvertently leaving sensitive data exposed or using outdated cryptographic methods—it must be rejected, regardless of how quickly it solved the problem.

Studies examining security vulnerabilities in LLM output often find patterns where models produce code that is insecure by default unless explicitly prompted otherwise. For a project maintainer, merging code that introduces a high-severity CVE is catastrophic. This risk factor alone forces a default posture of high skepticism toward unverified AI contributions.
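As a minimal sketch of what an automated check for insecure defaults might look like, the following uses Python's standard `ast` module to flag two illustrative patterns: calls to `hashlib.md5` or `hashlib.sha1`, and calls to the built-in `eval`. The rule set and the `find_insecure_calls` name are assumptions made for illustration; a real audit would rely on a dedicated static analysis tool with a far broader rule set.

```python
import ast

# Illustrative rule set (an assumption, not an exhaustive policy).
WEAK_HASHES = {"md5", "sha1"}


def find_insecure_calls(source: str) -> list[tuple[int, str]]:
    """Return (line, message) pairs for calls matching the illustrative rules."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Matches attribute calls such as hashlib.md5(...)
        if (
            isinstance(func, ast.Attribute)
            and isinstance(func.value, ast.Name)
            and func.value.id == "hashlib"
            and func.attr in WEAK_HASHES
        ):
            findings.append((node.lineno, f"weak hash: hashlib.{func.attr}"))
        # Matches direct calls to the built-in eval(...)
        elif isinstance(func, ast.Name) and func.id == "eval":
            findings.append((node.lineno, "use of eval"))
    return sorted(findings)


sample = (
    "import hashlib\n"
    "digest = hashlib.md5(b'secret').hexdigest()\n"
    "value = eval('1 + 1')\n"
)
for line, message in find_insecure_calls(sample):
    print(f"line {line}: {message}")
```

A check like this only catches patterns it has been told about, which is one reason the skeptical default toward unverified contributions remains reasonable.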

Implications for the Future of AI in Software

This reality check forces us to adjust our timeline for "full automation" and rethink how we measure progress.

1. The Obsolescence of Isolated Benchmarks

The industry must urgently pivot toward holistic evaluation frameworks. Future benchmarks cannot simply test isolated function completion. They must incorporate:

- Integration Tests: Requiring modifications across multiple files within a complex, non-trivial project structure.

- Quality Metrics: Penalizing code that exceeds established thresholds for cyclomatic complexity or falls below targets for test coverage.

- Security Scanning Gates: Integrating static analysis tools (SAST) directly into the evaluation pipeline, failing the model if security warnings are introduced.

In short, the success metric must shift from "Can the AI write code?" to "Can the AI write code that contributes positively to an existing, evolving codebase?"
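One way to picture that shift is a verdict that requires more than passing tests. The sketch below is a hypothetical illustration, not how SWE-bench or METR score submissions: it approximates cyclomatic complexity by counting branch points with Python's `ast` module and combines that with a tests-passed flag and a count of security warnings. The `EvalResult` type, the `max_complexity` default of 10, and the counting rule are all assumptions.

```python
import ast
from dataclasses import dataclass

# Node types counted as branch points (a rough approximation of
# cyclomatic complexity, chosen for illustration).
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler,
                ast.BoolOp, ast.IfExp)


def approx_complexity(source: str) -> int:
    """Return 1 plus the number of branch points found in the source."""
    tree = ast.parse(source)
    return 1 + sum(isinstance(n, BRANCH_NODES) for n in ast.walk(tree))


@dataclass
class EvalResult:
    tests_passed: bool
    security_warnings: int
    complexity: int


def holistic_pass(result: EvalResult, max_complexity: int = 10) -> bool:
    """Accept only if tests pass AND the quality and security gates hold."""
    return (
        result.tests_passed
        and result.security_warnings == 0
        and result.complexity <= max_complexity
    )


patch = (
    "def clamp(x, lo, hi):\n"
    "    if x < lo:\n"
    "        return lo\n"
    "    if x > hi:\n"
    "        return hi\n"
    "    return x\n"
)
result = EvalResult(tests_passed=True, security_warnings=0,
                    complexity=approx_complexity(patch))
print(result.complexity, holistic_pass(result))
```

The design choice worth noting is the conjunction: a submission that passes every test still fails the verdict if it introduces a security warning or exceeds the complexity threshold.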

2. The Rise of the AI Orchestrator Role

If the AI writes the first draft (code that passes basic checks but is not yet merge-ready), the human developer evolves into a specialist in quality assurance, security vetting, and architectural alignment. This is the core of the human-in-the-loop strategy.

Developers will spend less time on boilerplate syntax and more time on high-level tasks: designing architecture, defining precise requirements (prompt engineering), and rigorously validating the AI's output against systemic constraints. The developer becomes less of a typist and more of an **AI Orchestrator**.

This change requires new skills. Developers must master the art of setting context, supplying few-shot examples within prompts, and chaining AI tools together effectively (e.g., "Write the function, then run the linter on it, then submit the clean output to me for review").
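The chaining described in that example can be sketched as a small pipeline. Everything here is hypothetical: `generate_draft` stands in for a call to a model (no real API is used), and `lint` is a toy check built on Python's `compile` built-in and a line-length rule rather than a real linter.

```python
from typing import Callable


def generate_draft(prompt: str) -> str:
    """Stand-in for a model call; returns a canned draft for illustration."""
    return "def add(a, b):\n    return a + b\n"


def lint(source: str, max_line_length: int = 79) -> list[str]:
    """Toy linter: report syntax errors and overly long lines."""
    problems = []
    try:
        # compile() parses the draft without executing it.
        compile(source, "<draft>", "exec")
    except SyntaxError as exc:
        problems.append(f"syntax error: {exc.msg}")
    for number, line in enumerate(source.splitlines(), start=1):
        if len(line) > max_line_length:
            problems.append(f"line {number} exceeds {max_line_length} chars")
    return problems


def orchestrate(prompt: str,
                generate: Callable[[str], str] = generate_draft) -> dict:
    """Write the function, lint it, then hand clean output to a human."""
    draft = generate(prompt)
    problems = lint(draft)
    status = "ready_for_review" if not problems else "needs_rework"
    return {"status": status, "draft": draft, "problems": problems}


outcome = orchestrate("Write a function that adds two numbers")
print(outcome["status"])
```

Passing the generator in as a parameter keeps the orchestration logic independent of any particular model or vendor, so the same chain can be exercised with a stub.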

3. Business Strategy: Trust and Cost Analysis

For CTOs and engineering leaders, this data mandates a conservative approach to immediate productivity gains. Implementing AI coding assistants without robust peer review and automated validation layers is not cost-saving; it is merely outsourcing technical debt creation.

The true business value of LLMs today lies not in eliminating developers, but in reducing the time spent on *uncreative, repetitive coding tasks*—the low-hanging fruit that passes basic SWE-bench tests. The high-value, complex integrations that require architectural foresight are still firmly in the human domain.

Actionable Insights for Developers and Organizations

How do we move from a 50% rejection rate to sustainable adoption? The answer lies in closing the gap between synthetic evaluation and production reality, primarily through rigorous process engineering.

For Engineering Teams: Elevate the Review Process

Action: Treat AI-generated code as if it came from an unknown, potentially junior contractor.

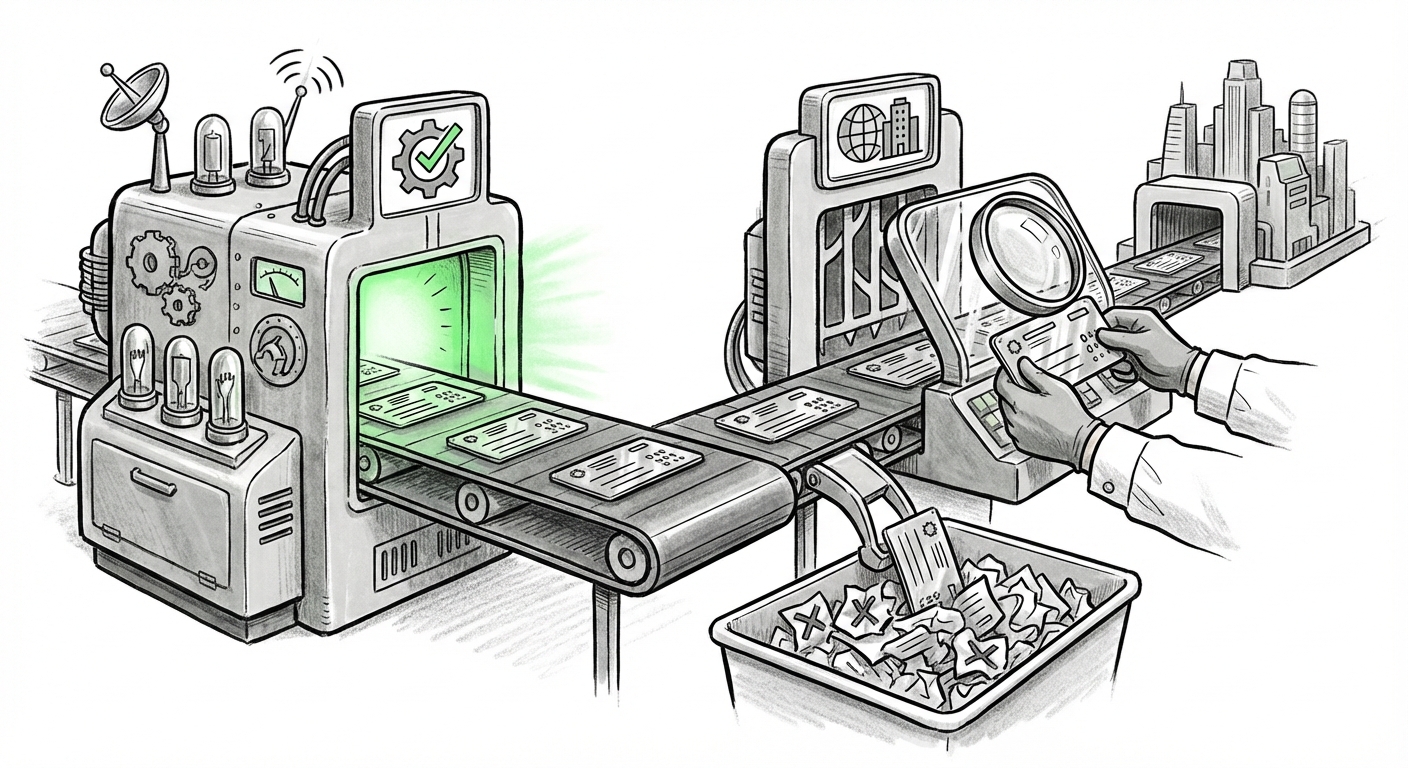

Implement mandatory, intensive code reviews for all AI-sourced contributions. These reviews must specifically check for architectural fit and security, not just functional correctness. Furthermore, place quality gates *before* the human review: if an AI suggestion fails the team’s standard static analysis or unit tests, the pipeline should reject it automatically, saving the reviewer's time.
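A pre-review gate of this kind could look roughly like the sketch below. The `Gate` type, the gate names, and the toy checks are assumptions for illustration; in practice each gate would wrap the team's actual test runner and static analysis tools.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Gate:
    name: str
    check: Callable[[str], bool]  # returns True when the patch passes


def run_gates(patch: str, gates: list[Gate]) -> tuple[bool, str]:
    """Run gates in order; reject at the first failure, before human review."""
    for gate in gates:
        if not gate.check(patch):
            return False, f"rejected by gate: {gate.name}"
    return True, "queued for human review"


def compiles(patch: str) -> bool:
    """Toy stand-in for the unit-test gate: the patch must at least parse."""
    try:
        compile(patch, "<patch>", "exec")
        return True
    except SyntaxError:
        return False


def no_eval(patch: str) -> bool:
    """Toy stand-in for a static analysis rule."""
    return "eval(" not in patch


gates = [Gate("syntax", compiles), Gate("static-analysis", no_eval)]
print(run_gates("total = sum([1, 2, 3])\n", gates))
print(run_gates("total = eval('1 + 2')\n", gates))
```

Ordering the gates from cheapest to most expensive means most unfit suggestions are turned away before they cost any reviewer attention.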

For AI Researchers and Tool Vendors: Rethink Evaluation

Action: Prioritize context over completion.

Vendors must move past benchmarks that test singular function writes. The next generation of evaluation must force models to manage state, adhere to complex dependency graphs, and satisfy security policies across a simulated, large-scale project environment. This requires building richer, more adversarial testing environments that mimic the inherent messiness of real-world repositories.

For Strategy Leaders: Invest in Verification Tooling

Action: Budget for automated verification layers.

The biggest ROI in the short term isn't faster generation; it's better verification. Invest in tools that can analyze LLM output for common failure modes (security, style drift, performance bottlenecks) automatically. This verification layer minimizes the burden on the human reviewer, allowing them to focus only on truly novel, complex design choices.
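Style drift is one of the easier failure modes to detect automatically. As a minimal sketch, assuming a Python codebase whose convention is snake_case function names, the check below lists generated functions that break it. The `style_drift` name and the regular expression are illustrative assumptions, not a complete style checker.

```python
import ast
import re

# Assumed team convention: snake_case, with optional leading underscores.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def style_drift(source: str) -> list[str]:
    """List function names that do not follow snake_case."""
    tree = ast.parse(source)
    return sorted(
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and not SNAKE_CASE.match(node.name)
    )


generated = (
    "def loadUser(user_id):\n"
    "    return user_id\n"
    "\n"
    "def save_user(user):\n"
    "    return user\n"
)
print(style_drift(generated))
```

Checks like this are cheap to run on every suggestion, leaving the reviewer to concentrate on design questions that no pattern match can settle.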

Conclusion: The Road to Trustworthy AI Code

The finding that half of "passing" AI code is rejected by developers is a healthy reality check. It demonstrates that current AI is excellent at drafting and prototyping, but inadequate for autonomous, integrated production deployment. The future of software engineering will not be code written *by* AI, but code written *with* AI, subject to rigorous human and automated verification.

The immediate implication is clear: Trust must be earned through measurable, holistic quality, not just benchmark scores. The developer who masters the art of verifying, refining, and orchestrating AI output will define the productivity curve for the next decade, ensuring that speed never comes at the catastrophic expense of stability and security.