The Code Review Gap: Why Half of AI's 'Perfect' Code Fails the Real-World Test

The narrative around Artificial Intelligence in software development has been overwhelmingly positive, promising a future where boilerplate disappears and productivity soars. Tools like GitHub Copilot and specialized code-generating LLMs are rapidly being integrated into development workflows. However, a recent study from METR delivered a necessary reality check: about half of the AI-written code solutions that pass the SWE-bench benchmark would be rejected by actual project maintainers.

As an AI technology analyst, I see this finding not as a sign of failure but as a crucial milestone in understanding the maturity curve of generative AI. It starkly illustrates the chasm between synthetic performance in a controlled testing environment and the messy, nuanced reality of shipping maintainable, production-ready code.

The Illusion of Benchmark Perfection

SWE-bench is designed to test an LLM's ability to fix real issues pulled from GitHub repositories. Passing this benchmark implies the AI successfully navigated problem descriptions, found the correct files, and produced a functional fix. For a long time, high scores on such tests were treated as proof that LLMs were nearly ready to become autonomous coders.

The METR study forces us to ask a deeper question: What does "passing" really mean?

For an automated system, "passing" means the code makes the requested change and satisfies the automated tests. For a human developer reviewing that code, "passing" depends on further questions:

- Does this fit our established coding style guide?

- Does this introduce hidden security vulnerabilities?

- Will this be easy for a new team member to understand six months from now? (Maintainability)

- Does it correctly handle subtle edge cases that weren't explicitly in the prompt?

The roughly 50% rejection rate strongly suggests that LLMs excel at the automated definition of passing (correct execution against the tests) while frequently falling short on the reviewer's questions above (style, security, maintainability, and unprompted edge cases). This discrepancy points to the limitations of current evaluation frameworks. We need benchmarks that measure production readiness, not just localized correctness, and that evaluate architectural integration and contextual awareness.
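
The two definitions of "passing" described above can be made concrete with a small sketch. Everything here is illustrative: the `Patch` record, its field names, and the `review_gap` helper are assumptions made for this example, not part of SWE-bench or of the study's methodology.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Patch:
    """Hypothetical record of one AI-generated fix and how it was judged."""
    tests_pass: bool              # what an automated benchmark checks
    follows_style: bool           # reviewer: matches the project's style guide
    no_new_vulnerabilities: bool  # reviewer: no security regressions
    maintainable: bool            # reviewer: readable six months from now
    handles_edge_cases: bool      # reviewer: covers cases beyond the prompt


def benchmark_pass(patch: Patch) -> bool:
    """Automated notion of passing: the tests are green."""
    return patch.tests_pass


def maintainer_accept(patch: Patch) -> bool:
    """Human notion of passing: green tests plus every review criterion."""
    return all([
        patch.tests_pass,
        patch.follows_style,
        patch.no_new_vulnerabilities,
        patch.maintainable,
        patch.handles_edge_cases,
    ])


def review_gap(patches: list[Patch]) -> float:
    """Share of benchmark-passing patches that a maintainer would still reject."""
    passing = [p for p in patches if benchmark_pass(p)]
    if not passing:
        return 0.0
    rejected = [p for p in passing if not maintainer_accept(p)]
    return len(rejected) / len(passing)
```

With one fully acceptable patch and one that passes its tests but breaks the style guide, `review_gap` returns 0.5, which is the shape of the gap the study reports. A real review is a judgment call rather than four booleans; the sketch only shows that the benchmark observes one field out of five.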

Corroboration 1: Questioning the Yardstick

The need to interrogate benchmark limitations, a recurring topic in AI research circles, is pressing. If the AI passes the test but the engineer rejects the solution, the test itself is insufficient. Researchers are increasingly calling for benchmarks that force LLMs to engage in multi-file reasoning, dependency management, and iterative debugging across an entire project structure, rather than fixing a single bug in isolation.

The Context Collapse: Why AI Code Fails the Review

Why would a technically correct snippet be rejected? The root cause often boils down to the LLM's limited "memory" or context window when dealing with large, established codebases. A human developer inherently understands the project's history, the purpose of obscure legacy functions, and the unwritten rules governing how components interact. LLMs, even with massive context windows, struggle to ingest and prioritize that institutional knowledge.

This leads directly to the issue of Maintainability and Architectural Dissonance.

Corroboration 2: The Struggle for Deeper Context

When an LLM generates code, it prioritizes the immediate task completion based on the provided input. If the solution requires subtly refactoring an established design pattern across three different modules to maintain consistency, the LLM is likely to generate a local fix that breaks the larger architectural contract. This synthesized code is "functionally correct" but "architecturally poisonous." Technical leads recognize this immediately because it creates future technical debt.
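
A small, invented example shows how a fix can be functionally correct and still break a project convention. Assume a hypothetical codebase in which every amount shown to a user must go through a shared `format_money` helper so that rounding rules live in one place. All function names below are made up for illustration.

```python
from __future__ import annotations

from decimal import Decimal, ROUND_HALF_UP


def format_money(amount: Decimal) -> str:
    """Hypothetical shared helper: the only place rounding rules are defined."""
    cents = amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return f"${cents}"


def invoice_total_local_fix(prices: list[Decimal]) -> str:
    """Local fix: satisfies the issue's test but re-implements formatting inline."""
    total = sum(prices, Decimal("0"))
    # round() on a Decimal follows the active decimal context, which rounds
    # half to even by default, so this silently disagrees with format_money.
    return "$" + str(round(total, 2))


def invoice_total_conforming(prices: list[Decimal]) -> str:
    """Same fix, routed through the shared helper."""
    return format_money(sum(prices, Decimal("0")))
```

For the inputs a typical unit test would use, such as `19.99` and `5.01`, both versions return `$25.00`. For a total of exactly `10.005`, the local fix returns `$10.00` while the conforming version returns `$10.01`. No test in the issue covers that case, so only a reviewer who knows the convention would catch it.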

For a non-technical audience, imagine hiring a temporary worker to fix a single leaking pipe in your house. The worker fixes the leak perfectly (passes the test). But they use the wrong kind of sealant, connect it to the wrong pressure valve, and leave the shut-off mechanism inaccessible behind a wall. The immediate problem is solved, but the long-term maintenance nightmare has just begun. This is the quality gap AI code currently faces.

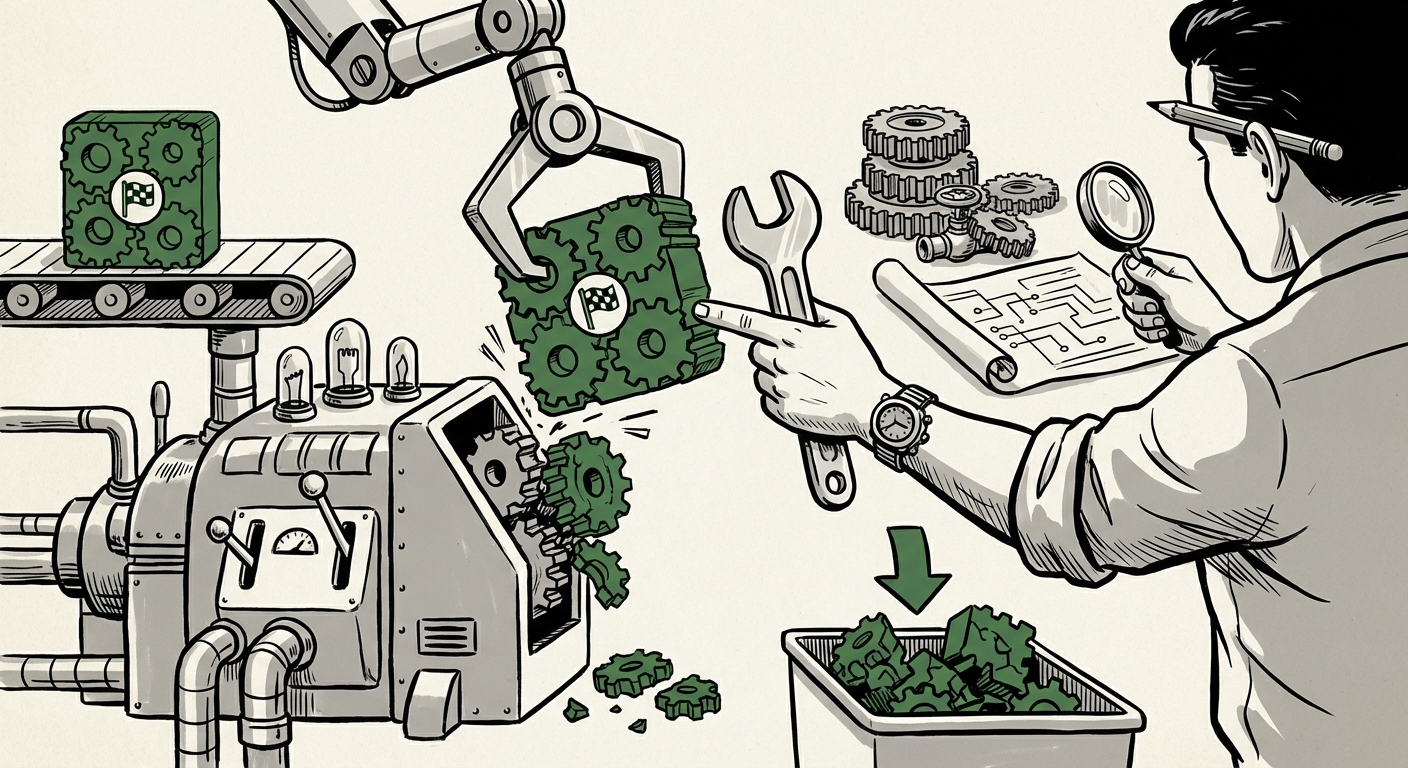

The Pivot: From Coder to Supervisor

If AI can produce a mergeable fix about half the time, but the other half still requires deep, contextual judgment, the role of the human programmer must evolve. The debate is shifting decisively away from AI replacement toward developer augmentation.

Corroboration 3: The Rise of the AI Supervisor

The developer is morphing into a highly skilled prompt engineer and editor—the AI Supervisor. Their value is no longer solely in typing syntax, but in directing, validating, and integrating the AI's output. The time saved writing boilerplate is now reinvested in rigorous code review, dependency checking, and strategic architectural planning.

This transformation has significant implications for tech hiring. Future developers must be masters of verification. They must know exactly what questions to ask the LLM, how to structure complex, multi-step prompts, and, critically, how to spot the subtle flaws that automated testing misses.

Industry Implementation and the Tooling Race

The market is not standing still. Businesses, acutely aware of the cost of technical debt, are not blindly integrating raw LLM output. Instead, we are seeing a focus on integrating AI tools within stricter guardrails.

Corroboration 4: Guardrails and Enterprise Adoption

Enterprise adoption of tools like GitHub Copilot is increasingly coupled with enhanced internal static analysis, security scanners (SAST/DAST), and mandatory peer reviews focused specifically on AI-generated blocks. Companies are looking for tooling that acts as an intelligent intermediary—a 'Junior LLM Reviewer'—to catch basic errors before the code ever reaches the senior engineer's queue. The development trajectory is clear: the market demands tools that address the known weaknesses of the underlying models.

This means the next generation of successful AI coding tools won't just be better at generating code; they will be better at self-correction and contextual alignment within an existing repository's structure.
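
As a rough illustration of the "intelligent intermediary" idea, the sketch below screens a Python snippet for a few patterns a reviewer would usually flag before it reaches a senior engineer. It uses only the standard-library `ast` module. The `prescreen` function and its three rules are assumptions chosen for this example; they stand in for the static analysis and security scanners mentioned above and are far narrower than any real tool.

```python
from __future__ import annotations

import ast


def prescreen(source: str) -> list[str]:
    """Return findings for a few patterns a human reviewer would likely flag."""
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [f"line {err.lineno}: does not parse ({err.msg})"]

    findings: list[tuple[int, str]] = []
    for node in ast.walk(tree):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare 'except:' hides errors"))
        elif (isinstance(node, ast.Call)
              and isinstance(node.func, ast.Name)
              and node.func.id in {"eval", "exec"}):
            findings.append((node.lineno, f"call to {node.func.id}()"))
        elif (isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
              and ast.get_docstring(node) is None):
            findings.append((node.lineno, f"function '{node.name}' has no docstring"))

    # ast.walk does not visit nodes in source order, so sort by line number.
    return [f"line {lineno}: {message}" for lineno, message in sorted(findings)]


if __name__ == "__main__":
    snippet = (
        "def load(expr):\n"
        "    try:\n"
        "        return eval(expr)\n"
        "    except:\n"
        "        return None\n"
    )
    for finding in prescreen(snippet):
        print(finding)
```

A gate like this catches only mechanical problems. The architectural and contextual issues discussed earlier still need a human reviewer or a tool with much deeper knowledge of the repository.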

Future Implications: What This Means for AI and Tech Strategy

The 50% rejection rate is a crucial stress test revealing the current limitations of AI in complex, human-centric tasks. Here is what this means for the future trajectory of AI technology:

1. Benchmarks Must Get Harder (and More Holistic)

The industry must move beyond isolated fixes. Future benchmarks will need to reward holistic solutions that demonstrate architectural understanding, dependency management across multiple files, and adherence to subtle, implicit project conventions. Success will be measured by "Time to Production Acceptance" rather than "Test Pass Rate."
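
To make the contrast between the two measures concrete, here is a minimal sketch that computes both from the same set of records. The `Submission` type and the metric definitions are assumptions for illustration; no existing benchmark is known to define "Time to Production Acceptance" this way.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Submission:
    """Hypothetical record for one AI-generated change."""
    tests_passed: bool
    opened_at: datetime
    merged_at: Optional[datetime]  # None if maintainers never accepted it


def pass_rate(subs: list[Submission]) -> float:
    """Fraction of submissions whose automated tests passed."""
    return sum(s.tests_passed for s in subs) / len(subs) if subs else 0.0


def acceptance_rate(subs: list[Submission]) -> float:
    """Fraction of submissions that maintainers actually merged."""
    return sum(s.merged_at is not None for s in subs) / len(subs) if subs else 0.0


def median_hours_to_acceptance(subs: list[Submission]) -> Optional[float]:
    """Median hours from opening to merge, over merged submissions only."""
    hours = [
        (s.merged_at - s.opened_at).total_seconds() / 3600
        for s in subs
        if s.merged_at is not None
    ]
    return median(hours) if hours else None
```

On a sample where three of four submissions pass their tests but only two are merged, `pass_rate` reports 0.75 while `acceptance_rate` reports 0.5. The time-based metric adds what neither ratio shows: how much review effort the merged changes needed.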

2. The LLM Context Window is the Next Frontier

If maintainability and integration are the failure points, then scaling the effective context window—or developing better methods for context retrieval (like advanced Retrieval-Augmented Generation, or RAG, applied to source code)—will be the primary area of competitive innovation. The LLM that can truly internalize the entire codebase’s history and structure will be the one that achieves true production readiness.
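
The retrieval idea can be sketched in a few lines: before generating a fix, rank the repository's files by relevance to the issue and pass only the top matches to the model. The version below uses plain token overlap as a stand-in for the embedding-based search a production RAG system would more likely use. The `tokenize` and `retrieve` functions and the sample file contents are hypothetical.

```python
from __future__ import annotations

import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased word tokens; snake_case and camelCase identifiers are split."""
    spaced = re.sub(r"([a-z])([A-Z])", r"\1 \2", text)
    words = re.findall(r"[A-Za-z]+", spaced)
    return Counter(w.lower() for w in words if len(w) > 2)


def retrieve(issue: str, files: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k file paths sharing the most tokens with the issue text."""
    query = tokenize(issue)
    scores = {}
    for path, body in files.items():
        doc = tokenize(path + " " + body)
        scores[path] = sum(min(count, doc[token]) for token, count in query.items())
    # Highest score first; ties are broken alphabetically for stable output.
    ranked = sorted(scores, key=lambda p: (-scores[p], p))
    return [p for p in ranked[:k] if scores[p] > 0]


if __name__ == "__main__":
    repo = {
        "billing/invoice.py": "def invoice_total(prices): return sum(prices)",
        "auth/session.py": "def refresh_session(token): ...",
        "billing/tax.py": "def apply_tax(total, rate): return total * rate",
    }
    print(retrieve("Invoice total is rounded incorrectly", repo, k=1))
```

Lexical overlap finds files that mention the same words as the issue. It does not find the unwritten conventions discussed earlier, which is why retrieval quality, and not only context size, is likely to matter.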

3. Productivity Gains are Real, But Nuanced

Businesses should not abandon AI coding tools. The tools are delivering substantial productivity gains by handling tedious, well-defined tasks. However, the hoped-for productivity multiplier (the "10x developer") is tempered by the human oversight the output requires. Once the necessary review and integration time is factored in, realistic gains are more likely in the 20-40% range.

For a business, this means budgeting for highly skilled, senior engineers to supervise the AI process, not expecting junior staff to simply inherit the output.
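
The reasoning behind a tempered multiplier can be shown with simple arithmetic: AI speeds up only the drafting portion of a task, and it adds review time. The sketch below is an Amdahl's-law-style calculation. The function name and all input values are illustrative assumptions, not measurements from the study or from any team.

```python
def net_speedup(baseline_hours: float, drafting_share: float,
                drafting_speedup: float, review_overhead_hours: float) -> float:
    """Overall speedup when only the drafting part of a task is accelerated.

    baseline_hours        -- time for the whole task without AI
    drafting_share        -- fraction of that time spent writing code (0 to 1)
    drafting_speedup      -- how much faster drafting becomes with AI
    review_overhead_hours -- extra time spent reviewing and integrating AI output
    """
    if not 0 <= drafting_share <= 1:
        raise ValueError("drafting_share must be between 0 and 1")
    if drafting_speedup <= 0:
        raise ValueError("drafting_speedup must be positive")
    drafting = baseline_hours * drafting_share
    everything_else = baseline_hours - drafting
    with_ai = drafting / drafting_speedup + everything_else + review_overhead_hours
    return baseline_hours / with_ai


if __name__ == "__main__":
    # Hypothetical inputs: a 10-hour task, half of it drafting, drafting made
    # 5x faster, and 1.5 hours of added review and integration work.
    print(round(net_speedup(10, 0.5, 5, 1.5), 2))
```

With these made-up inputs, a 5x gain in drafting becomes roughly a 1.33x gain for the task as a whole. Different inputs give different results, but the structure of the calculation shows why gains in code generation do not translate directly into delivery speed.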

4. The Rise of Specialized, Smaller Models

While frontier models handle general programming well, we may see a specialization trend. Smaller, fine-tuned models trained exclusively on a single company's internal codebase, style guides, and security policies could generate code with a far lower rejection rate within that specific corporate environment, solving the context problem locally.

Actionable Insights for Stakeholders

For those steering technology development and career paths, these insights translate into clear actions:

- For Software Engineering Managers: Treat AI-generated code as high-quality first drafts, not final submissions. Update your code review metrics to heavily penalize violations of architecture, style, and known security patterns, regardless of whether the code passes initial unit tests.

- For Individual Developers: Focus skill development on systems thinking, architectural design, and advanced prompt engineering. Mastering the art of *verification* and *integration* is more valuable than ever before. Your worth is in your judgment, not your keystrokes.

- For AI Tool Vendors: Shift R&D focus from raw code generation accuracy to contextual integration accuracy. Build tools that proactively analyze surrounding files and alert developers to potential architectural conflicts before they commit the code.

The era of AI-assisted coding is not stalled; it is entering a more mature, challenging phase. The initial honeymoon period, where high benchmark scores suggested autonomous capability, is over. We are now in the critical phase of integration, where the hard work of making AI-generated solutions genuinely reliable and maintainable falls to the human experts.

The fact that about half of the benchmark-passing code would be rejected is a strong signal that the human programmer remains indispensable: not as a typist, but as the ultimate custodian of software quality and systemic integrity.