The Reality Gap: Why AI Code That Passes Benchmarks Fails Human Developers

Artificial Intelligence, particularly Large Language Models (LLMs), is transforming software development at breakneck speed. Tools that suggest code completions and even draft entire functions are moving from novelty to necessity in many tech stacks. However, a recent finding from the research organization METR has put a sharp spotlight on how mature this technology really is: about half of the AI-generated solutions that pass a widely used benchmark's automated tests would not be merged by the project's actual maintainers.

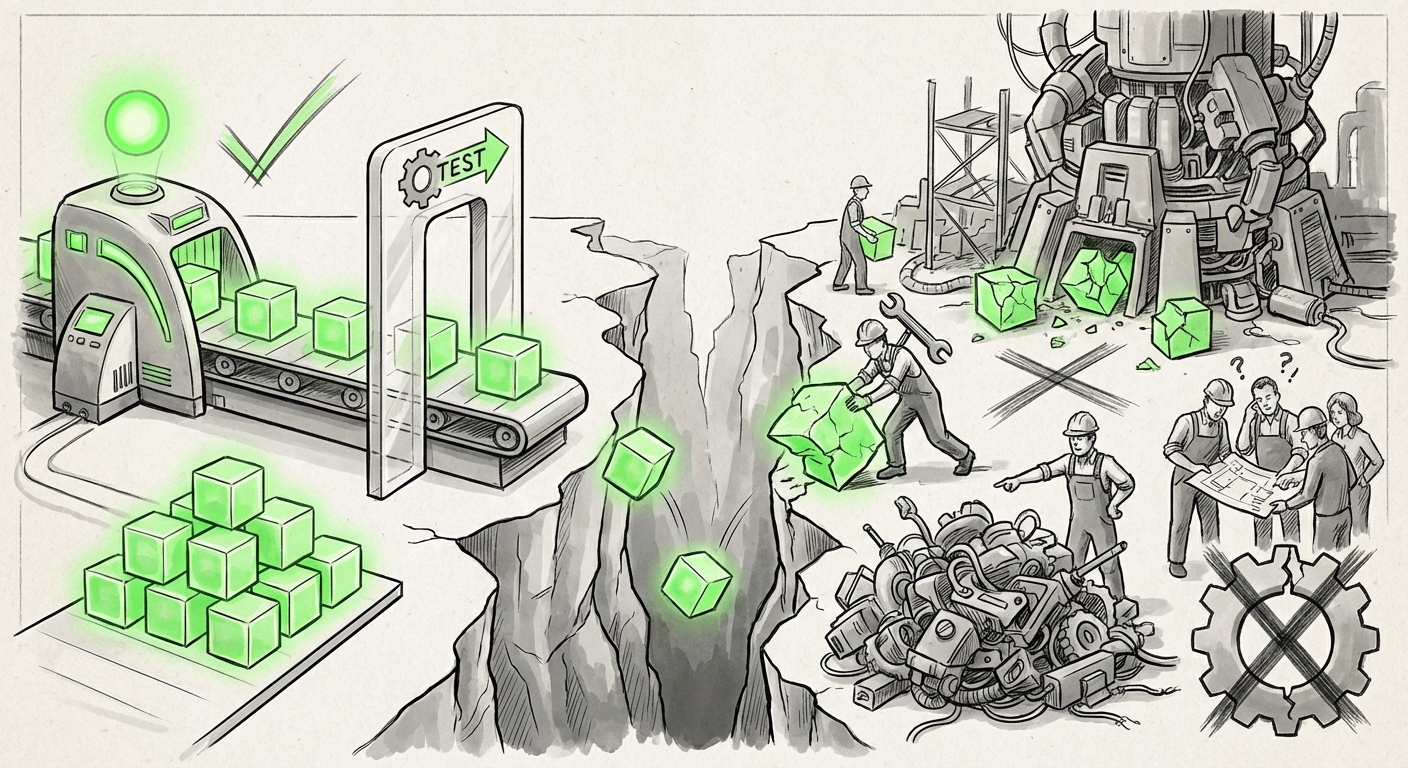

This disparity isn't just a minor hiccup; it represents a profound "reality gap." It is the difference between passing a test designed in a lab and surviving the harsh, messy environment of a real, large-scale software project. To understand what this means for the future of AI, we must look beyond the pass/fail scores and investigate the limitations of our testing methods, the subtleties of human rejection, and the necessary evolution of our quality assurance systems.

The Illusion of Perfection: Why Benchmarks Fall Short

The excitement surrounding LLMs is often measured by standardized tests. Benchmarks such as SWE-bench challenge models to solve real-world software engineering problems pulled from open-source repositories. A "pass" means the model's patch makes the task's designated tests succeed, which suggests the AI understood the instructions and produced functionally correct code.

However, this success can create an illusion of readiness. If a model's patch makes the target tests pass, the benchmark counts the task as solved, even though the fix has only been verified in isolation. But code is rarely isolated. The METR study, along with related research into benchmark limitations, indicates that these synthetic environments fail to capture the full scope of engineering reality.

Context is King: The Missing Variables in Automated Testing

When researchers examine benchmark design closely, they often conclude that evaluations must become more dynamic. Automated tests mostly check functional correctness ("Does this code do what the prompt asked?") but ignore the surrounding concerns that consume much of a developer's time. Critiques of benchmark limitations tend to focus on three of them:

- Architectural Fit: Does the AI code use the established patterns of the existing codebase? An engineer might reject a solution because it introduces a new, unapproved library or breaks a core design principle, even if the immediate task works.

- Long-Term Maintainability: A quick fix that is hard to read, poorly documented, or relies on implicit assumptions is a ticking time bomb. Benchmarks rarely penalize code that creates future technical debt.

- Security Context: While some benchmarks test for known vulnerabilities, they often lack the global context of an entire security posture. An AI might write safe code for one function, but overlook how that function interacts with authentication layers or sensitive data handling across the application.

This tells us that the next generation of evaluation tools must simulate the integration process, not just the component creation process.
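One way to make this gap measurable is to record two separate verdicts for every submission: whether the benchmark's tests pass, and whether a maintainer would accept the change. The sketch below is a minimal illustration under that assumption; the `Submission` record and the sample verdicts are hypothetical and made up (they are not METR's data or methodology). It only shows how a figure like "half of the passing solutions are rejected" is computed.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """One model-generated patch and the verdicts it received (hypothetical schema)."""
    task_id: str
    tests_pass: bool          # what an automated benchmark checks
    maintainer_accepts: bool  # what a human reviewer decides


def reality_gap(submissions: list[Submission]) -> float:
    """Fraction of test-passing submissions that a maintainer would still reject."""
    passing = [s for s in submissions if s.tests_pass]
    if not passing:
        return 0.0
    rejected = sum(1 for s in passing if not s.maintainer_accepts)
    return rejected / len(passing)


# Illustrative, made-up verdicts.
sample = [
    Submission("task-1", tests_pass=True, maintainer_accepts=True),
    Submission("task-2", tests_pass=True, maintainer_accepts=False),
    Submission("task-3", tests_pass=False, maintainer_accepts=False),
    Submission("task-4", tests_pass=True, maintainer_accepts=False),
    Submission("task-5", tests_pass=True, maintainer_accepts=True),
]
print(f"{reality_gap(sample):.0%}")  # 50%
```

Note that failing submissions (task-3) are excluded from the denominator: the gap describes only code that a benchmark would already count as a success.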

The Human Factor: Why Maintainers Hit Reject

The most telling data point is the human rejection rate. When an experienced developer reviews code, they are not merely debugging; they are a custodian of the project's long-term health. Their reasons for rejecting AI code go well beyond simple syntax errors, a pattern that developer sentiment surveys also reflect.

Developers often express distrust when:

- The Code is "Too Clever": AI can sometimes generate extremely dense, complex, or aggressively optimized code that may even run faster but is hard for the next human developer to debug quickly. Simplicity often trumps micro-optimization in maintenance-heavy environments.

- Style and Linter Violations: In professional settings, adherence to strict style guides is non-negotiable for code consistency. AI models, unless heavily fine-tuned on a specific team’s style rules, frequently generate code that clashes with established formatting, immediately flagging it for rejection or heavy modification.

- Implicit Assumptions: Real-world software lives in a world of implicit context—what the team already knows about the database schema, the caching layer, or external API limits. AI models, lacking that shared history, make assumptions that a human reviewer immediately recognizes as flawed or dangerous.
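To make the "too clever" complaint concrete, here is a contrived Python illustration (not taken from any study): two functions with identical behavior that return the `n` most frequent words in a string. The function names and the word-counting task are hypothetical. The first packs everything into one expression; the second spells out each step, which is usually what a reviewer prefers to maintain.

```python
from collections import Counter


# A dense one-liner: correct, but hard to review or debug at a glance.
def top_words_clever(text, n):
    return [w for w, _ in sorted(Counter(w.strip(".,!?").lower() for w in text.split()).items(), key=lambda kv: (-kv[1], kv[0]))[:n]]


# The same logic, spelled out so the next maintainer can follow it.
def top_words_readable(text: str, n: int) -> list[str]:
    """Return the n most frequent words, with ties broken alphabetically."""
    counts: Counter[str] = Counter()
    for raw_word in text.split():
        # Normalize: drop trailing punctuation and ignore case.
        word = raw_word.strip(".,!?").lower()
        counts[word] += 1
    # Sort by descending count, then alphabetically for a predictable order.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [word for word, _count in ranked[:n]]


text = "The cat and the hat. The end!"
print(top_words_readable(text, 2))  # ['the', 'and']
```

Both versions pass the same tests, which is exactly why a benchmark cannot tell them apart; only a human weighing future maintenance cost can.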

Essentially, the human developer acts as a contextual filter. They check not just "Does it work?" but "Is this the *right* way to build this within our specific, existing constraints?" This points to the current weakness of LLMs: exceptional pattern-matching ability divorced from deep, shared historical and architectural context.

The Future of AI in Software: From Author to Assistant

If roughly half of the code that passes automated tests would still be rejected, that fundamentally changes how we should view AI tools like GitHub Copilot or internal code generators. They are not yet autonomous programmers; they are high-speed **prototyping engines** or **expert first-draft writers**.

Shifting the Focus to AI-Assisted Verification

The most exciting implication of this quality gap is the evolution it forces in our tooling. If AI generates code that requires human refinement, AI can also be used to speed up that refinement. This is driving the trend toward AI-assisted code review and verification systems.

Instead of relying solely on human maintainers to spot the subtle errors (which exhausts them and slows down the process), we are seeing the rise of specialized AI agents trained explicitly to scrutinize the output of generative LLMs. These verification agents can be narrowly focused on security vulnerability checks, adherence to complex API usage documentation, or performance regression testing before the code ever reaches a human reviewer.

This creates a layered validation pipeline:

- LLM Generator: Creates the functional draft (high speed).

- Verification AI: Checks structure, security, and style consistency (medium speed).

- Human Maintainer: Validates architecture, domain logic, and long-term integration (high scrutiny).
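The three tiers can be sketched as a simple chain of gates. In this minimal Python sketch, the automated "verification" checks are deliberately naive string checks standing in for real linters or security scanners, and every name (`no_bare_except`, `no_eval`, `pipeline`) is hypothetical. The point is the ordering: cheap automated checks run first and bounce a draft back to the generator, so the human reviewer only sees drafts that have already cleared them.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Review:
    approved: bool
    notes: list[str] = field(default_factory=list)


# An automated check returns a list of problems; an empty list means it passed.
Check = Callable[[str], list[str]]


def no_bare_except(code: str) -> list[str]:
    # Hypothetical stand-in for a style/robustness linter.
    return ["bare 'except:' swallows all errors"] if "except:" in code else []


def no_eval(code: str) -> list[str]:
    # Hypothetical stand-in for a security scanner.
    return ["use of eval() on dynamic input"] if "eval(" in code else []


def verify(code: str, checks: list[Check]) -> Review:
    """Tier 2: run every automated check before any human sees the draft."""
    problems = [problem for check in checks for problem in check(code)]
    return Review(approved=not problems, notes=problems)


def pipeline(draft: str, checks: list[Check], human_review: Callable[[str], bool]) -> str:
    """Tier 1 output (the draft) -> tier 2 (automated checks) -> tier 3 (human)."""
    automated = verify(draft, checks)
    if not automated.approved:
        return "returned to generator: " + "; ".join(automated.notes)
    return "merged" if human_review(draft) else "rejected by maintainer"


checks = [no_bare_except, no_eval]
print(pipeline("try:\n    run()\nexcept:\n    pass\n", checks, lambda c: True))
# returned to generator: bare 'except:' swallows all errors
print(pipeline("total = sum(values)\n", checks, lambda c: True))  # merged
```

In practice `human_review` is not a function call but a pull-request review; modeling it as a callable just keeps the sketch runnable.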

This tiered approach acknowledges the current reality: AI boosts speed, but specialized verification and ultimate human stewardship ensure quality.

Business and Technical Implications: Navigating the Adoption Curve

For technology leaders and investors, the METR study provides essential data for managing expectations regarding the Return on Investment (ROI) of generative AI coding tools.

The Speed vs. Debt Trade-Off

Industry reports often highlight large productivity gains (the "speed") from using these tools. However, once we factor in the time highly paid senior developers spend cleaning up or rejecting poorly contextualized AI code, the net efficiency gain shrinks considerably. If a developer spends 30 minutes rewriting an AI suggestion that took 2 minutes to generate, those 32 minutes must be weighed against the time it would have taken to write the change by hand; when the manual estimate is similar or smaller, the ROI is negligible or even negative.
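The trade-off can be written as a small expected-value calculation. This Python sketch is a simplified model under stated assumptions, not a published formula: it assumes that a rejected draft is discarded and the change is then written by hand anyway, so the generation and review time is pure overhead in that case. All parameter values in the usage lines are hypothetical, apart from the 2-minute and 30-minute figures taken from the example above.

```python
def expected_minutes_saved(manual: float, generate: float, review: float,
                           rejection_rate: float) -> float:
    """Expected minutes saved per task by trying the AI path first.

    manual:         minutes to write the change by hand
    generate:       minutes to obtain the AI draft
    review:         minutes spent reviewing or rewriting the draft
    rejection_rate: fraction of drafts discarded (assumed to be rewritten by hand)
    """
    if not 0.0 <= rejection_rate <= 1.0:
        raise ValueError("rejection_rate must be between 0 and 1")
    ai_overhead = generate + review
    saved_if_accepted = manual - ai_overhead
    saved_if_rejected = -ai_overhead  # overhead spent, then the manual work still happens
    return (1 - rejection_rate) * saved_if_accepted + rejection_rate * saved_if_rejected


# 2 min to generate, 30 min to rewrite, versus a hypothetical 40 min by hand.
print(expected_minutes_saved(40, 2, 30, rejection_rate=0.0))  # 8.0
print(expected_minutes_saved(40, 2, 30, rejection_rate=0.5))  # -12.0
```

Under these assumptions, even a modest net gain on accepted drafts turns negative once half of the drafts are thrown away, which is why the rejection rate matters as much as generation speed.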

The implication is clear: unchecked adoption leads to accumulating technical debt. Companies that view these tools purely as a cost-cutting measure, replacing junior roles with unverified AI output, are likely building future crises in the form of brittle, unmaintainable systems.

For businesses integrating this technology, actionable insights must focus on supervision:

- Mandate AI Code Tagging: All code generated or heavily modified by an AI must be clearly tagged in the version control system. This allows tracking of rejection rates and targeted feedback loops to refine internal models.

- Senior Developers as AI Coaches: The role of senior engineers must evolve. They should spend less time writing boilerplate and more time defining architectural guardrails, training the models (via feedback), and performing the high-level contextual review that AI currently cannot.

- Invest in Internal Validation Loops: Relying solely on external benchmarks like SWE-bench is insufficient. Companies need to build internal benchmarks that mirror their proprietary codebase, architectural challenges, and specific security mandates.
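One lightweight way to implement the tagging recommendation is a Git commit trailer, a `Key: value` line in the last paragraph of a commit message (the same mechanism used by `Co-authored-by:`). The trailer name `AI-Assisted` is a hypothetical team convention, not a Git standard, and the parser below is a simplified sketch; real tooling would more likely rely on `git interpret-trailers --parse`. The sketch then compares rejection rates for tagged and untagged changes, which is the feedback loop described above.

```python
TRAILER_KEY = "AI-Assisted"  # hypothetical team convention, not a Git standard


def trailers(message: str) -> dict[str, str]:
    """Parse 'Key: value' lines from the final paragraph of a commit message.

    Simplified: a subject-only message has no trailers, and keys may not contain spaces.
    """
    paragraphs = message.strip().split("\n\n")
    if len(paragraphs) < 2:
        return {}
    result: dict[str, str] = {}
    for line in paragraphs[-1].splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        if sep and key and " " not in key:
            result[key] = value.strip()
    return result


def is_ai_tagged(message: str) -> bool:
    return trailers(message).get(TRAILER_KEY, "").lower() in {"yes", "true"}


def rejection_rates(changes: list[tuple[str, bool]]) -> dict[str, float]:
    """Rejection rate for AI-tagged vs. untagged changes; each item is (message, merged)."""
    groups: dict[str, list[bool]] = {"ai": [], "human": []}
    for message, merged in changes:
        groups["ai" if is_ai_tagged(message) else "human"].append(merged)
    return {name: (1 - sum(v) / len(v)) if v else 0.0 for name, v in groups.items()}


changes = [
    ("Add retry to fetch\n\nWraps the HTTP call.\n\nAI-Assisted: yes", True),
    ("Fix typo in README", True),
    ("Refactor cache layer\n\nAI-Assisted: true\nReviewed-by: Dana", False),
]
print(rejection_rates(changes))  # {'ai': 0.5, 'human': 0.0}
```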

Actionable Insights: Bridging the Reality Gap

The reality gap highlighted by the METR study is not a failure of the technology, but a necessary phase in its maturation. We have powerful code *generators*, but we are still developing reliable code *validators* that match human engineering standards.

For the Engineer: Embrace the Co-Pilot, But Keep the Chisel Handy

If you are a developer, view AI code as a highly competent, but sometimes socially awkward, intern. It brings energy and volume, but requires careful guidance and rigorous peer review. Your value shifts from being the primary typist to being the master editor and contextual architect.

For the Business Leader: Quality Over Quantity in Deployment

Do not measure AI success by lines of code generated; measure it by accepted, production-ready pull requests that do not introduce new, untrackable technical debt. Implementing robust internal testing pipelines that specifically challenge AI output against production realities is now an essential investment, not an optional overhead.

The future of software development is undeniably interwoven with AI. Yet the path forward is sophisticated augmentation, not replacement. We are learning that the most advanced AI in the world still needs the wisdom, context, and caution of an experienced human mind to produce code that is not just functional today, but sustainable tomorrow.