The Governance Era: Why Anthropic’s New Institute Signals a Fundamental Shift in AI Development

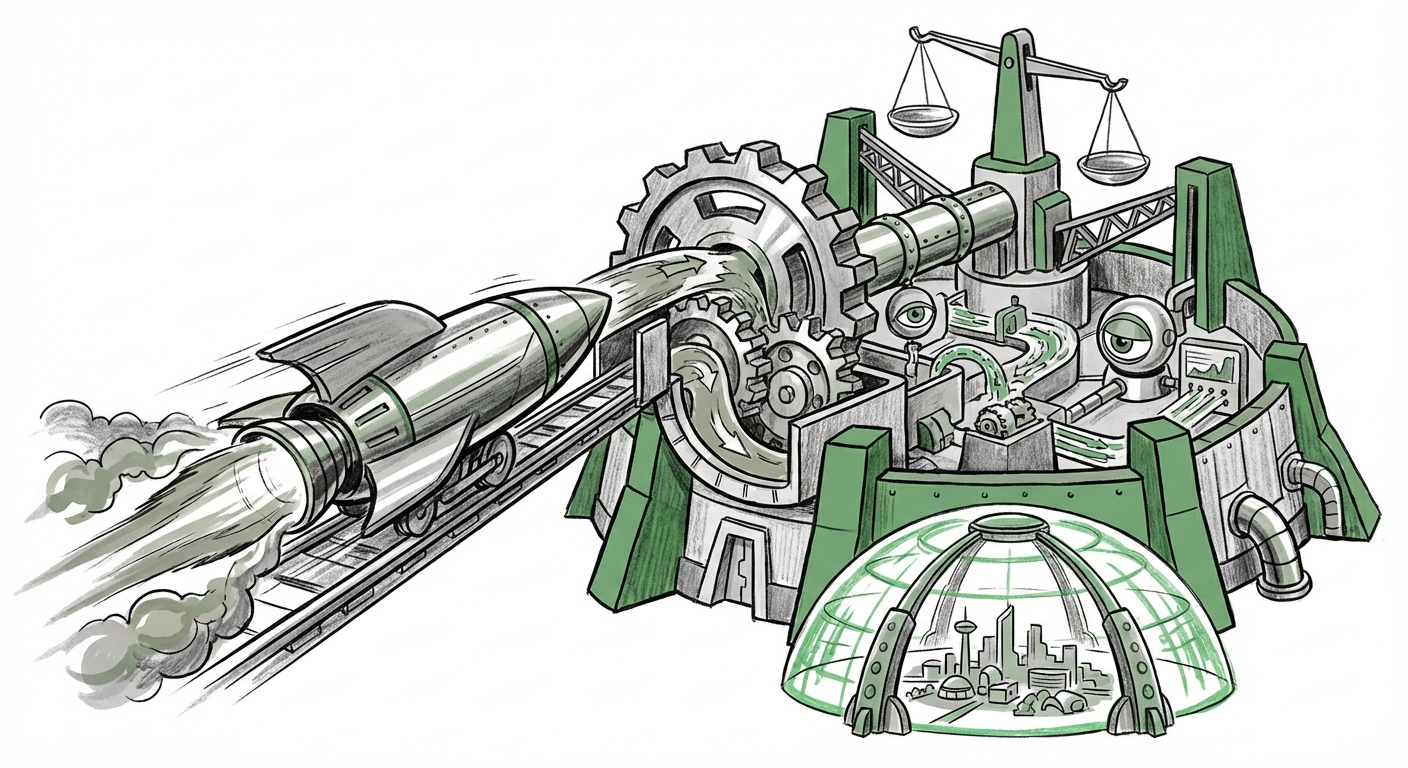

For years, the narrative driving the AI industry was one of relentless capability scaling. Faster compute, larger models, and seemingly limitless intelligence were the primary metrics of success. However, the landscape is undergoing a profound and necessary maturation. The recent launch of the **Anthropic Institute**, an internal think tank dedicated to studying the societal, economic, and security impacts of powerful AI, is not just a press release—it is a strategic inflection point.

This development signals that the leading builders of foundational models are shifting focus. They recognize that moving from developing world-changing technology to successfully *integrating* that technology into human civilization requires deep, specialized, and institutionalized research into governance. This is the dawn of the Governance Era.

The Maturity Curve: Moving Beyond Capability Scaling

To understand the gravity of Anthropic’s move, we must place it within the competitive context. Pioneers like OpenAI and DeepMind have long maintained safety and alignment teams. Anthropic’s decision to create a dedicated, high-profile *Institute* suggests that foundational safety research is graduating from a specialized department into a primary, public-facing pillar of corporate strategy.

When major players dedicate significant resources to studying the side effects of their own products, it indicates a consensus: the era where performance eclipsed all other concerns is ending. We are moving into a phase where trust and demonstrable responsibility will become key differentiators, much like data privacy became a necessary feature in the early days of consumer internet.

The goal here is twofold: first, to mitigate catastrophic risks inherent in super-powerful systems (like bias amplification or loss of human control), and second, to proactively shape the frameworks—both internal and external—that will govern their deployment. This shift is essential for long-term viability.

Corroborating the Trend: Institutionalizing Safety

The market demands proof of stewardship. As we look at the landscape, we see industry-wide acknowledgments of this necessity. Articles detailing the structure and collaboration between major labs on governance initiatives confirm this trend. If leading labs are increasingly working together, or at least publicly adopting similar structures for oversight, it suggests a shared understanding that the risks associated with frontier models transcend any single company’s walls.

This move helps analysts and investors assess the long-term risk profile of these companies. A firm that prioritizes deep, non-commercial research into potential harms is seen as more sustainable than one focused solely on quarterly capability gains.

The External Pressure: Regulation is No Longer Theoretical

Anthropic’s Institute is positioned perfectly to address one of the most powerful drivers of change in the AI ecosystem: **global regulation.** AI governance is moving rapidly from academic debate to hard law.

Consider the European Union's **AI Act** and the growing list of national executive orders worldwide. These legislative efforts place explicit responsibility on the developers of "high-risk" or "general-purpose" foundation models. They mandate everything from transparency documentation to rigorous pre-market testing and ongoing risk monitoring.

Proactive Compliance and Lobbying

For a company like Anthropic, operating an internal think tank dedicated to societal impact becomes a dual-purpose mechanism. Firstly, it is a **proactive compliance engine.** The Institute's research provides the necessary data, testing methodologies, and technical expertise required to satisfy future regulatory audits or implement safety guards mandated by law.

Secondly, it functions as an expert liaison in policy discussions. By presenting well-researched, data-backed findings on security and economics, developers can help steer regulation toward effective, technically feasible standards rather than reactive, overly restrictive blanket rules. In essence, the Institute allows Anthropic to speak the language of regulators with the authority of deep technical understanding.

For businesses looking to adopt AI, this external pressure simplifies decision-making slightly: *responsible AI compliance is becoming a prerequisite for market access.*

The Core Challenge: Dual-Use Capabilities and Security Audits

The core mandate of the Anthropic Institute revolves around "security." This is perhaps the most immediate and tangible concern for national security and cybersecurity stakeholders. Powerful generative models possess **dual-use capabilities**—they can create immense value (e.g., drug discovery) but also facilitate significant harm (e.g., creating novel bio-toxins or hyper-personalized phishing campaigns).

From Benchmarks to Real-World Red-Teaming

The transition in focus means moving beyond theoretical alignment debates to concrete security audits. We need to see detailed technical reports on what these institutes are finding when they stress-test models for misuse. Are they finding vulnerabilities in code generation that could lead to widespread, automated cyberattacks? Are they uncovering novel methods for synthetic identity creation that could destabilize political processes?

The industry is increasingly recognizing that public "trust scores" or basic safety filters are insufficient. Real-world adversarial testing, or *red-teaming*, by dedicated internal experts is essential. This research is invaluable for cybersecurity professionals globally, as it proactively identifies the attack vectors that malicious actors are likely to pursue tomorrow.

Actionable Insight for Security Teams: Pay close attention to research publications from these institutes detailing discovered model exploits. These are not academic curiosities; they are blueprints for the next generation of digital threats that your defenses must immediately start accounting for.

The Economic Calculation: Governance as a Competitive Asset

Creating and staffing an institute dedicated to deep research is not cheap. This brings us to the crucial economic dimension: **governance is now an expense that generates strategic value.**

In the past, investors rewarded speed. Now, they must reward sustainability. Companies that fail to manage externalities—be it bias crises, security breaches, or public backlash over perceived recklessness—will face severe financial penalties, whether through lost market share, regulatory fines, or talent attrition.

The Cost of Trust vs. The Cost of Failure

Articles analyzing the economics of AI deployment reveal a growing consensus: the cost of building robust, safe infrastructure might be high upfront, but the cost of failure in deployment is exponentially higher. An institute like Anthropic's serves as an insurance policy and a competitive differentiator. It helps attract top-tier talent who prioritize working on ethically sound projects, and it reassures large enterprise clients—who face their own liability issues—that they are partnering with a responsible vendor.

For businesses, this translates into due diligence requirements. When sourcing AI solutions, asking "What safety research infrastructure does your provider have?" will soon be as common as asking about uptime guarantees or data center locations.

Implications for Businesses: Navigating the Governance Landscape

The development of institutional safety bodies by AI leaders has clear, practical implications for every sector looking to embed AI into its operations:

- Mandate Internal Red Teams: Businesses cannot rely solely on vendor assurances. They must begin developing internal teams tasked with stress-testing deployed models against sector-specific misuse scenarios (e.g., financial fraud, supply chain disruption).

- Demand Transparency on Testing: When evaluating foundation models, procure clear documentation on the safety standards and testing procedures used by the provider. Look for evidence of research institutes driving their safety roadmap.

- Integrate Policy Expertise: As regulations evolve, AI adoption strategies must incorporate legal and public policy expertise alongside technical skill sets. Understanding impending EU or US guidelines now saves costly retrofitting later.

- Shift Talent Acquisition Focus: The demand for AI alignment researchers, ethics officers, and governance specialists will soar. Talent strategy must adapt to compete for individuals who excel at critical thinking and societal risk assessment, not just algorithmic optimization.

Actionable Insights: Preparing for the Responsible AI Future

The message from the AI development frontier is clear: raw power must be tempered by profound wisdom. The launch of the Anthropic Institute is a landmark event confirming that wisdom is now being systematically engineered.

For Decision Makers: Reframe your AI investment strategy. Shift the balance away from 100% capability focus towards a 70/30 split—70% capability, 30% integration and governance assurance. This is not a slowdown; it is a necessary re-calibration for long-term, high-impact deployment.

For Technologists: Embrace the interdisciplinary nature of this challenge. The most valuable engineers and researchers in the coming years will be those who can bridge the gap between complex code and complex human systems.

The race for smarter AI is now inextricably linked to the race for safer AI. Anthropic is institutionalizing this reality, ensuring that as models become more powerful, the guardrails surrounding them are equally robust.