The Governance Pivot: Why Anthropic’s New AI Institute Signals a New Era for Frontier Models

The race for Artificial General Intelligence (AGI) is often framed as a sprint toward greater capability. We obsess over parameter counts, speed, and the newest multimodal breakthroughs. However, a critical counter-narrative is emerging from the leading labs: the recognition that *power* must be matched by *prudence*. Anthropic’s recent decision to launch the "Anthropic Institute," an internal think tank focused explicitly on AI’s impact on society, the economy, and security, is far more than a public relations maneuver. It marks a vital structural shift in how the industry intends to manage its most powerful creations.

For analysts, investors, and policymakers, this move forces a re-evaluation. It suggests that the era of capability development operating in a vacuum is ending. As models grow more complex—moving from novel tools to potentially systemic actors—the associated risks demand dedicated, formalized, and highly visible internal structures. This institute is a formal declaration that governance is no longer a side project; it is core infrastructure.

From Capability Scaling to Systemic Risk Management

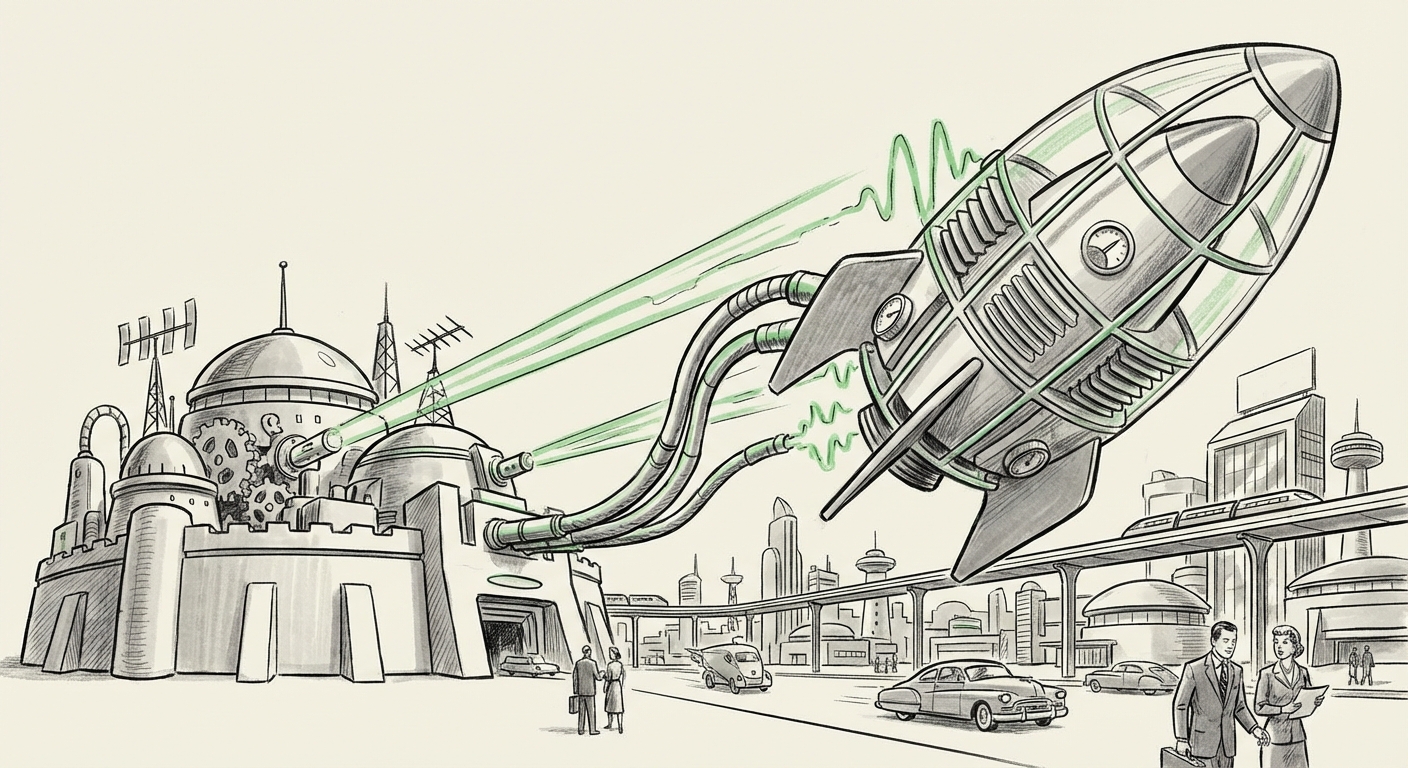

Anthropic, founded with an explicit safety focus and known for its Constitutional AI approach, has always positioned itself as the cautious counterpart to its competitors. The creation of the Anthropic Institute formalizes this commitment, moving the study of societal impact from abstract research papers into an institutionalized body. Think of it this way: building a world-class race car (the AI model) is one thing; building the dedicated safety and review board (the Institute) that certifies it for public road use is another entirely.

The Institute’s mandate—covering society, economy, and security—is deliberately broad. This acknowledges that the impact of frontier models is inherently multi-faceted. It’s not just about preventing catastrophic misuse (security); it’s about managing massive labor market shifts (economy) and understanding the subtle erosion of trust or information ecosystems (society).

This institutionalization brings immediate benefits: dedicated funding, clear reporting lines, and, crucially, an external-facing body to engage with regulators and the public. It provides a structure for channeling internal ethical debates into actionable research agendas, helping to mitigate the inherent tension between the commercial drive for deployment and the scientific imperative for safety testing.

Contextualizing the Move: The Competitive and Regulatory Landscape

To truly understand the gravity of the Anthropic Institute, we must contextualize this development against three major external pressures that are shaping the entire AI ecosystem.

1. The Competitive Check: Lessons from Rival Alignment Efforts

Anthropic is not working in isolation. The dynamics within its chief competitor, OpenAI, provide essential context. Following internal turbulence regarding the prioritization of safety versus rapid deployment, many observers are keen to see how stability is maintained in high-stakes AI labs. Establishing a formal institute—rather than relying on executive appointments or smaller, fluid teams—suggests Anthropic is deliberately creating a more durable structure than those sometimes perceived as vulnerable to internal corporate pressure.

If competitors struggle to maintain robust, independent safety divisions, Anthropic’s formalized institute could become a powerful benchmark. It signals to the market and regulators that safety study is not optional or easily sidelined when profitability demands speed. For industry professionals, this sets a new competitive standard: the safest model developer wins trust, and trust is the ultimate currency.

2. The Financial Reality: Safety as an Investment, Not Overhead

Is this a genuine priority shift or an expensive obligation? The answer depends on funding trends. If investment in specialized AI safety and alignment research is in fact increasing across the board in 2024, Anthropic’s move is validated as part of a maturing trend. If, conversely, the majority of VC and corporate funding remains heavily concentrated in capability scaling (e.g., acquiring more computing power), then the Institute might face resource constraints relative to the R&D departments building the models themselves.

For investors, the Institute represents a form of long-term risk mitigation. Uncontrolled, powerful AI poses an existential threat to the very market these companies aim to dominate. Therefore, formal safety research becomes a necessary insurance policy, making Anthropic potentially more attractive to cautious institutional capital.

3. The Legislative Hammer: Regulatory Compliance as a Driver

Perhaps the strongest external driver for internal governance structures is the incoming wave of global regulation. Legislation like the **EU AI Act** places stringent requirements on providers of general-purpose AI (GPAI) models deemed to pose systemic risk. These mandates demand model evaluation and risk assessment, transparency regarding training data, and clear identification and mitigation of systemic risks.

An internal think tank dedicated to societal impact study is well suited to generating the documentation and evidence these laws require. It transforms compliance from a reactive legal hurdle into a proactive research function. For any business relying on cutting-edge models, this means that the outputs of the Anthropic Institute (its risk taxonomies, impact studies, and safety protocols) could become *de facto* reference points for responsible AI deployment.

The Structural Debate: Independence vs. Integration

A key strategic question surrounds the structure itself. Should AI safety research reside in an independent non-profit entity, shielding it entirely from commercial pressures, or should it be integrated into the for-profit development company?

Anthropic has opted for integration via the Institute. This suggests a belief that safety research cannot be effectively divorced from the engineering teams building the systems. When safety researchers work side-by-side with engineers, alignment techniques can be woven directly into the training loop, rather than being bolted on later. However, this integration also raises the specter of prioritization conflicts. Will the Institute’s findings always be weighted equally against the pressure to ship the next revenue-generating product?

The value of the Institute hinges on its autonomy within the corporate structure. If it has the power to delay or veto a deployment based on its findings, it can act as a real check. If it merely advises, its conclusions risk being overridden whenever they conflict with a launch schedule.

Future Implications: What This Means for Business and Society

The rise of formalized internal safety institutes like Anthropic’s signals profound changes across several sectors:

For Businesses: The New Due Diligence

Businesses integrating or building upon frontier models must adapt their due diligence processes. Relying solely on vendor checklists will become insufficient. Future B2B contracts will increasingly demand evidence that vendors have robust, institutionalized safety review processes. Companies will look for transparency regarding the work coming out of these Institutes—specifically, how they handle issues like bias amplification, economic disruption modeling, and adversarial robustness.

Actionable Insight: Start asking vendors, "What governance framework supports your R&D?" If the answer is vague, it implies unknown systemic risk in the tools you are adopting.

For Policymakers: Institutionalizing Dialogue

Regulators now have a clear, designated counterpart within leading AI labs. Instead of fragmented communication, policymakers can engage with the Institute as the authoritative voice on internal risk assessment. This should streamline the process of establishing technical benchmarks required by emerging laws, especially concerning catastrophic risk scenarios.

However, policymakers must remain wary of "governance theater." The Institute’s published findings must be subject to external academic and third-party auditing to ensure they reflect true commitment, not just regulatory box-ticking.

For Society: Shifting the Narrative on Progress

The very existence of this Institute helps recalibrate the public conversation. It moves the discussion away from a binary choice between "unlimited innovation" and "total stagnation." Instead, it frames progress as a controlled, multi-variable optimization problem, where societal benefit is weighted alongside capability gains.

For the average citizen, this suggests a slow but necessary increase in AI reliability and predictability. If the Institute successfully models economic dislocation, it could provide the data needed for governments to proactively plan universal basic income trials or massive retraining initiatives—moving from reactive crisis management to proactive societal adaptation.

Conclusion: Governance as the New Frontier

Anthropic’s launch of its dedicated research institute is a powerful signal that the competitive frontier in AI development is shifting. The race is no longer just about who can build the smartest system fastest; it is about who can build the smartest, most trustworthy, and most governable system first.

This structural commitment to studying societal and security impacts, driven by both internal ethics and external regulatory pressure, means that safety research is transitioning from an academic niche to a necessary component of the AI engineering stack. The success, transparency, and influence of the Anthropic Institute will help set the standard for responsible frontier AI development for the rest of the decade. The focus is turning inward, preparing the foundations for a future where powerful AI must not only work brilliantly but must also work *safely for everyone*.