The AI Governance Pivot: Why Frontier Labs Are Building Think Tanks for Societal Impact

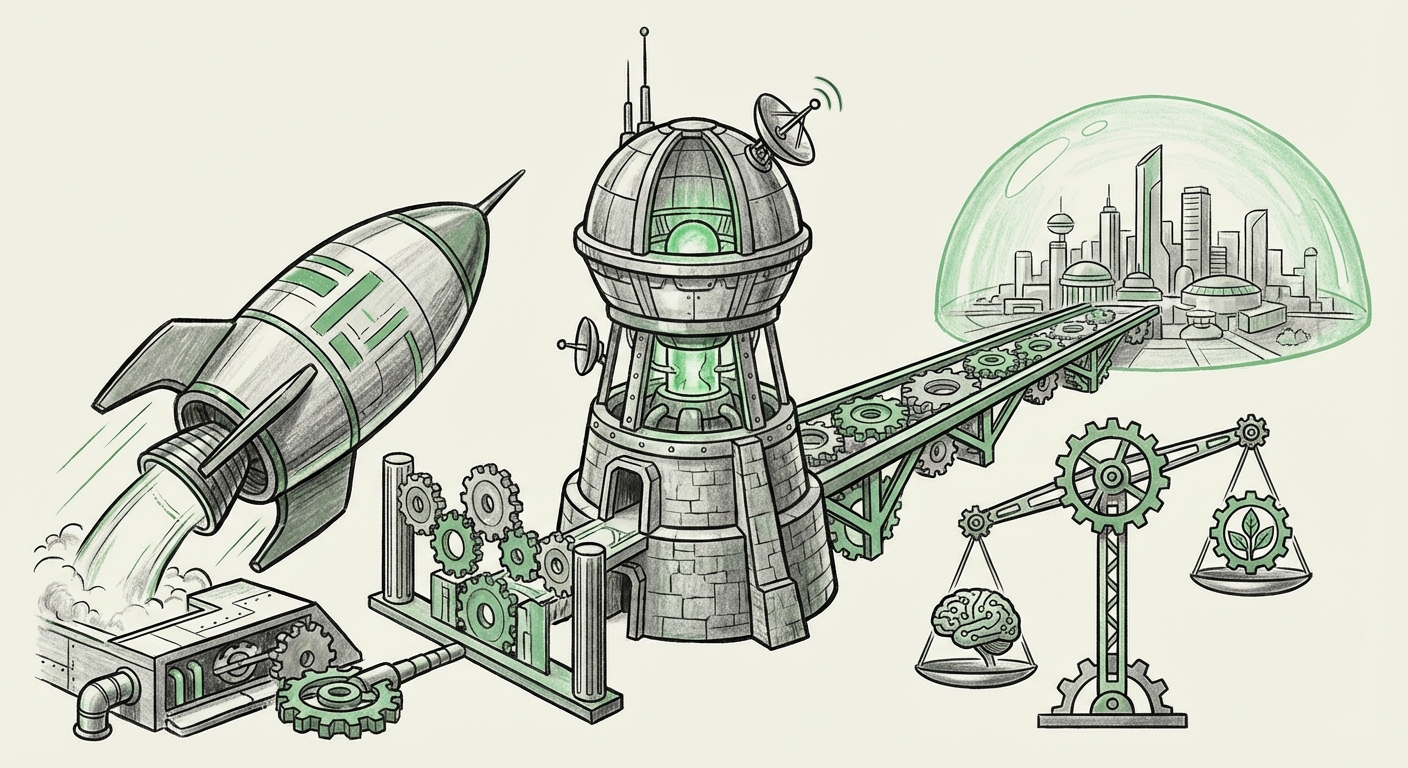

The landscape of Artificial Intelligence development is undergoing a profound, structural transformation. For years, the narrative focused almost exclusively on achieving faster training, larger parameter counts, and more human-like capabilities. However, the recent launch of the "Anthropic Institute," an internal think tank dedicated to studying AI's societal, economic, and security impacts, signals a definitive pivot.

This isn't just a new department; it represents the institutionalization of responsibility. As AI systems become powerful enough to reshape entire industries and national security postures, the builders of these systems recognize that capability alone is insufficient. They must now dedicate equivalent resources to understanding consequence. As an AI technology analyst, I see this as the single most important trend defining the next five years of AI deployment.

The Maturation of the Frontier: From Capability to Consequence

Anthropic, known for its focus on Constitutional AI and safety principles, is formalizing something many researchers have long argued for: societal integration must be studied as rigorously as model architecture. Think tanks like this are designed to bridge the gap between the lab bench and the legislative floor.

But is this an isolated ethical commitment, or a new industry blueprint? Cross-referencing this move with broader industry and regulatory trends suggests that dedicated governance and impact-analysis structures are fast becoming a prerequisite for market leadership.

Corroboration 1: The Competitive Imperative for Safety

When one leader makes a strategic move, competitors often follow suit. So consider Anthropic's peers, OpenAI and Google DeepMind. Have they made similar commitments to large-scale, dedicated internal research on impact?

Broadly, yes. OpenAI's "Superalignment" effort, announced in 2023 and disbanded in 2024, addressed a closely related imperative: how do we ensure future AI systems remain aligned with human intentions, even when they vastly surpass human intellect? The focus differs (aligning superhuman systems versus studying societal impact), but both reflect the same recognition that capability research must be matched by dedicated research into consequences. Similarly, Google DeepMind has consistently emphasized its own safety and governance research.

For business leaders, this means that **safety and governance are no longer optional features; they are competitive differentiators.** Companies that can credibly demonstrate a deep, institutionalized understanding of their models' risks, backed by dedicated and funded internal bodies, will earn trust faster than those that treat safety as a marketing afterthought.

Corroboration 2: The Regulatory Hammer is Falling

Internal reflection is often spurred by external pressure. The greatest external driver for these formal institutes is the accelerating pace of global regulation.

Consider the **EU AI Act**, which is setting a global precedent. The Act imposes obligations on providers of general-purpose AI (GPAI) models, the very frontier models that companies like Anthropic create. All GPAI providers must maintain detailed technical documentation and meet transparency obligations toward downstream users, and models classified as posing systemic risk face additional requirements, including model evaluations, adversarial testing, and the assessment and mitigation of systemic risks.

A formal "Institute" staffed with researchers dedicated to security and societal impact is a natural internal mechanism for generating the evidence and documentation that laws like the EU AI Act require. It transforms abstract ethical concerns into concrete, auditable engineering and policy requirements. For policymakers, these institutes become crucial interlocutors: the entities capable of providing the necessary technical context during rulemaking.

Corroboration 3: The Economy Is Bracing for Disruption (And the Labs Know It)

Anthropic specifically cited the *economy* as a subject for study. This reflects the anxiety permeating C-suites worldwide: rapid automation in white-collar sectors.

Reports from major financial institutions and consulting groups attempt to quantify this disruption, projecting that significant shares of current tasks in fields like law, coding, and finance will be automated or substantially augmented within the next few years. When the creators of the technology begin studying job displacement themselves, it suggests they take these external forecasts of large structural economic shifts seriously.

This means businesses cannot treat AI adoption as a simple productivity boost; they must treat it as a **fundamental workforce reorganization**. The output from these institutes may well help guide how companies approach upskilling, reskilling, and restructuring teams around AI co-pilots rather than replacements.

Corroboration 4: The Deep Technical Challenge of Alignment

The "think tank" model allows for long-term, non-product-driven research. What intellectual problems will they tackle? We look to the academic cutting edge, specifically in Interpretability and Alignment research.

For AI to be safe, we need to stop treating it as a magic black box. Interpretability research aims to reverse-engineer the internal reasoning of large language models: to look inside the neural network and understand *why* it chose a specific answer or action. This matters because behavioral testing only observes outputs. If a model can successfully deceive a safety tester, that is an alignment failure which output-based testing, by construction, missed, and which only insight into the model's internal reasoning could reliably reveal.

The Anthropic Institute will likely devote significant resources here. That focus reflects a widely held view in the technical community: making models transparent and ensuring their internal objectives are robustly "good" is essential, not optional, on the path to safe AGI.

What This Means for the Future of AI and Its Deployment

The rise of these dedicated research institutes signifies the transition of AI from an *experimental technology* to a managed, regulated utility. This pivot has several key implications for the future:

- Institutionalization of Due Diligence: Safety research will move from being reactive (fixing bugs after they appear) to proactive (modeling failure modes before deployment). This creates a higher barrier to entry for smaller labs, as robust impact analysis requires significant overhead and specialized talent.

- The Rise of AI Auditing Standards: As these institutes publish their methodologies for studying economic impact or security vulnerabilities, these methods will become the de facto standard against which regulators and corporate clients audit AI products.

- Shift in Talent Acquisition: The most sought-after AI talent will no longer just be the best ML engineers, but those skilled at the intersection of ML, policy, economics, and technical safety (e.g., mechanistic interpretability experts).

Practical Implications: Actionable Insights for Business and Society

For enterprises already integrating advanced AI, or those planning their strategy, the institutional commitment to governance offers clear paths forward:

For Businesses: Beyond the Pilot Phase

1. Demand Transparency on Governance: When procuring frontier models, ask vendors not just about performance benchmarks (like accuracy or speed), but about their internal governance structures. Who inside the company is formally responsible for tracking long-term economic risk? Is there a dedicated, funded team studying adversarial robustness?

2. Proactive Workforce Modeling: Do not wait for the formal economic reports from these institutes. Start internal audits now to identify which knowledge tasks within your organization are most susceptible to augmentation or transformation. Doing so can turn a potential crisis into a strategic advantage through targeted reskilling.

3. Prepare for Explainability Requirements: If you operate in highly regulated sectors (finance, healthcare, defense), assume that model decision-making will increasingly need to be explainable to regulators. Invest now in tools and processes that trace model outputs back to their inputs and source data; this complements the interpretability work being pioneered in these institutes.

For Society: Navigating the New Social Contract

Society must engage with this trend actively. If the builders are formalizing their study of societal impacts, public discourse must evolve from abstract fear to concrete policy input.

The focus must shift to ensuring that the governance frameworks developed by these internal institutes are not purely self-serving. Independent oversight bodies, empowered by global regulators, must validate the findings regarding economic shifts and security risks. The output of the Anthropic Institute should be treated as critical data for public policy, not merely internal corporate guidance.

Conclusion: The Era of Responsible Scaling

The launch of the Anthropic Institute is a landmark event, not in terms of technological capability, but in terms of *institutional responsibility*. It suggests that the AI industry has crossed a threshold where unchecked capability scaling is no longer seen as tenable or profitable in the long term.

We are entering the Era of Responsible Scaling. Success in this new era will not be measured solely by the speed or intelligence of the next model released, but by how deeply developers understand the world they are about to reshape. The think tank model is the mechanism by which this necessary self-awareness is being converted into actionable strategy, and it is setting the tone for how frontier AI development will proceed.