The Governance Turn: Why Anthropic's New Institute Signals AI's Maturation Beyond Raw Power

For years, the narrative around artificial intelligence development focused almost exclusively on scale. How fast can we train? How large can the model be? How much smarter can the next iteration become? That period of explosive growth, marked by ever-larger parameter counts, is now giving way to a necessary new phase: the Governance Turn.

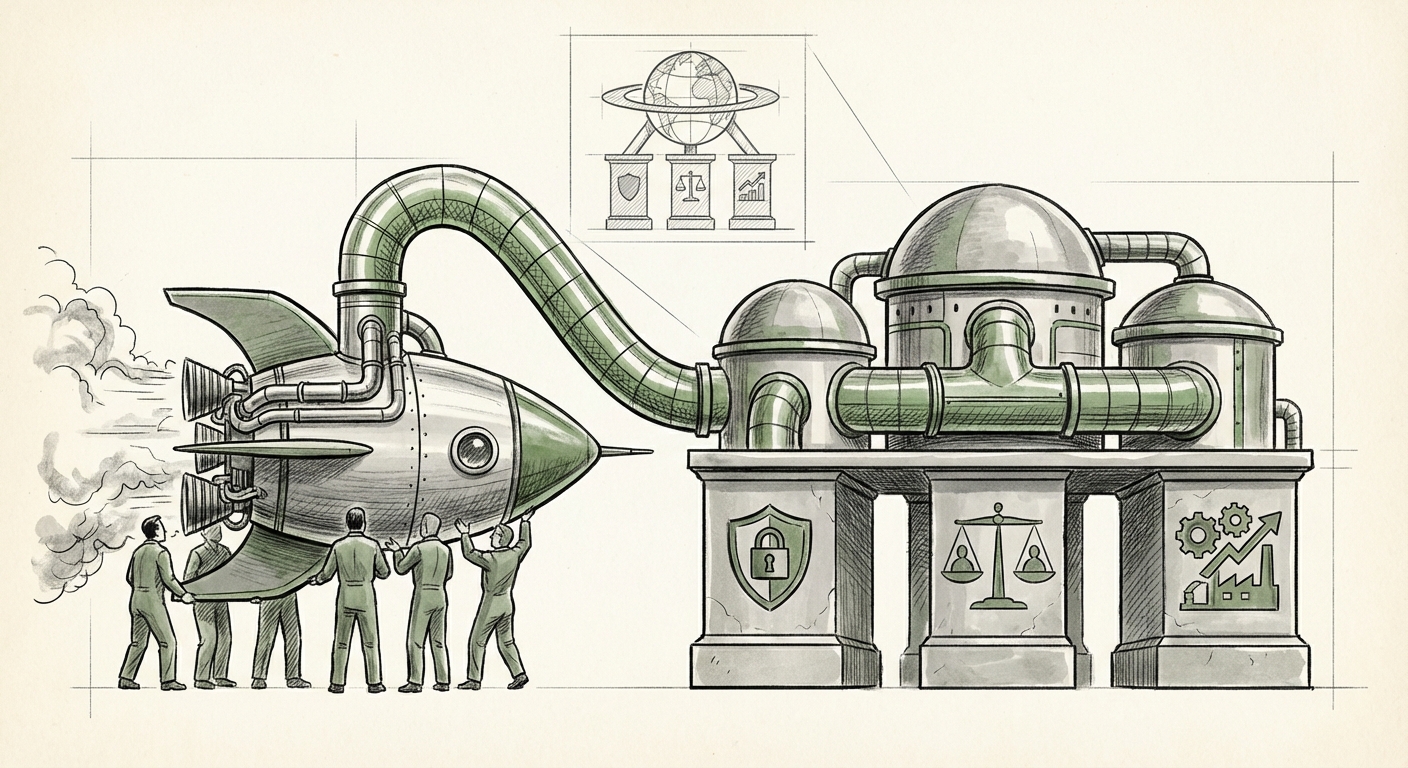

The recent launch of the "Anthropic Institute," a dedicated internal think tank tasked with studying the societal, economic, and security impacts of powerful AI, is not merely a PR move. It is a structural acknowledgement that the industry has reached an inflection point. Building more powerful AI is now inextricably linked to understanding—and managing—its consequences. This development forces us to re-evaluate where the true challenges in AI lie: less in the code, and more in the context.

The Shift: From Capability to Consequence

When Anthropic, a company founded around AI safety principles, formalizes an institute specifically for studying impact, it sends a clear signal to regulators, investors, and the public: we recognize that the risk is proportional to the reward.

Think of it like this: early automotive manufacturers focused largely on speed and horsepower. Once cars became ubiquitous, manufacturers and governments alike had to dedicate massive resources to traffic laws, road safety engineering, and insurance liability. AI is now leaving the racetrack and entering the public highway. The Anthropic Institute represents this necessary pivot toward traffic management.

Corroboration from the Ecosystem

This move by Anthropic is not occurring in a vacuum. Several industry and geopolitical trends corroborate the urgent need for such internal governance structures:

- Competitive Benchmarking: Rival labs, notably OpenAI, have set up highly visible alignment research efforts, such as the Superalignment team OpenAI announced in 2023 (and disbanded in 2024). Such efforts mirror Anthropic’s focus, suggesting that robust internal safety research is becoming an expected feature for any company handling "frontier AI." For analysts, comparing the technical focus of OpenAI’s alignment work with Anthropic’s broader institute (which includes economics) highlights differing, yet complementary, safety philosophies.

- The Regulatory Hammer: Governments worldwide are accelerating efforts to control high-risk AI. Measures like the EU AI Act impose stringent compliance requirements on developers of powerful models. For major AI labs, establishing an internal body focused on security and societal risk becomes an essential "internal compliance and foresight engine" to anticipate and meet looming legal standards. Understanding the impact of the EU AI Act on US tech companies is crucial context here.

- Economic Reality Checks: The grand promises of productivity gains must be weighed against potential disruptions. As external research, such as Brookings Institution studies on AI's productivity impact, begins to quantify labor market risks, AI developers must internalize these projections. The institute's focus on the economy suggests Anthropic is preparing for an era in which technological deployment requires economic mitigation plans, not just marketing projections.

The Three Pillars of the New AI Mandate

The Anthropic Institute is structured around three core areas: Society, Economy, and Security. These pillars define the new battleground for advanced AI development:

1. Security: Hardening the Frontier

In the early days, "security" meant protecting the model weights. Now, it means protecting the world from the model’s potential misuse. This involves research into advanced misuse scenarios—from autonomous cyber warfare to sophisticated disinformation campaigns. The goal is to build systems robust enough to refuse harmful instructions, even when those instructions are subtly framed.

This pillar feeds directly into industry collaboration initiatives, such as the Frontier Model Forum. When Anthropic tests novel failure modes internally, it is simultaneously preparing blueprints for the shared safety standards the entire industry must adopt to avoid catastrophic, uncoordinated risks.

2. Society: Navigating Cultural Shifts

This is the softest, yet perhaps most critical, area. How do models trained on vast datasets perpetuate or alleviate systemic bias? How do they influence democratic discourse? The societal pillar moves AI ethics from academic papers into tangible engineering requirements. For a technical audience, this means building robust interpretability tools; for a business audience, it means ensuring global market acceptance without cultural backlash.

3. Economy: Preparing for Disruption

Perhaps the most forward-thinking aspect of the institute is its explicit focus on the economy. This transcends simple productivity gains. It demands rigorous study into issues like:

- Concentration of AI capabilities and wealth.

- The speed of white-collar job displacement.

- The need for new educational pathways and social safety nets tailored for an AI-augmented workforce.

If AI firms acknowledge that their technology fundamentally reshapes global labor markets, they accept a moral, and perhaps eventually a legal, responsibility to contribute to the solutions.

What This Means for Future AI Development

The establishment of formalized internal research institutes changes the DNA of AI development firms. It transforms them from pure R&D shops into quasi-governmental bodies attempting to self-regulate unprecedented technology.

Actionable Insights for Businesses

For companies integrating AI, this trend offers clear direction:

- Demand Transparency in Risk Assessment: When sourcing models, look beyond benchmark scores (like MMLU or HumanEval). Demand evidence of governance testing. Ask vendors about their internal impact analysis processes—do they have an "Anthropic Institute" equivalent, even if it’s smaller?

- Invest in Governance Literacy: Your legal, HR, and strategy departments must gain fluency in AI governance concepts. The risk of legal challenge or regulatory fine stemming from misuse (security) or workforce restructuring (economy) is rising rapidly.

- Anticipate Regulatory Friction: Assume that regulation is coming, not in five years, but in two. Organizations that proactively build structures like the Anthropic Institute will find compliance easier and gain a competitive advantage in earning public trust.

The Future Trajectory: From Internal Labs to Public Policy

The ultimate goal of these internal institutes is likely twofold: first, to ensure the *safety of the technology itself* (making it robust against misuse), and second, to generate the *data and recommendations necessary to guide external public policy*. When a company like Anthropic can present policymakers with rigorously studied, data-backed proposals on, for example, licensing requirements for extremely powerful models, it moves from being a regulated entity to being a trusted technical advisor.

This shift is healthy, if somewhat belated. It moves the industry past the "move fast and break things" ethos and toward a more mature, stewardship-oriented approach. The race for intelligence is pausing briefly so that the architects can ask themselves not just, "Can we build it?" but more importantly, "How do we live with it?"

The creation of internal governance structures like the Anthropic Institute marks the end of the "Wild West" era of frontier AI. The age of rigorous, accountable development—where the study of consequences is as vital as the creation of capability—has officially begun.