The End of Siloed AI: How Shared Context in Claude Signals True Enterprise Workflow Automation

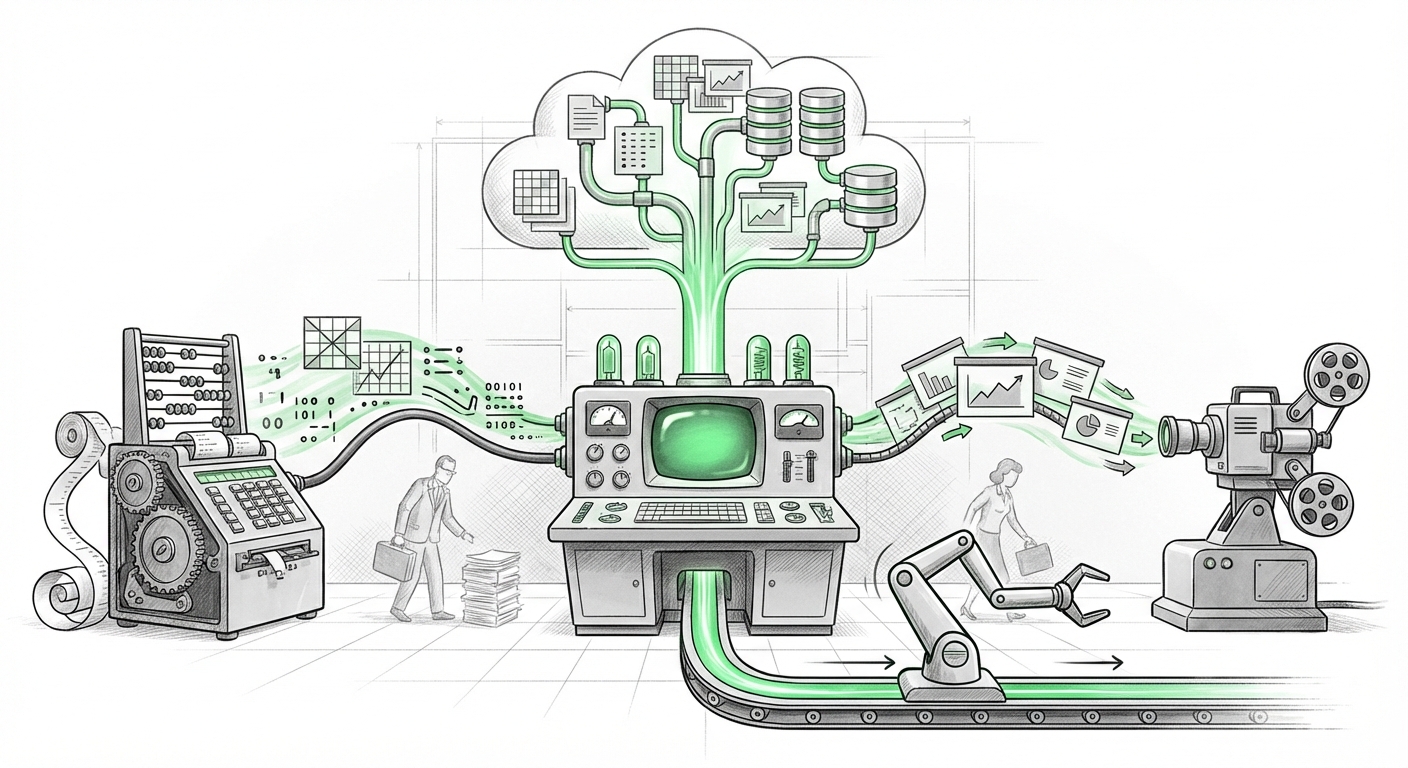

For years, artificial intelligence in the workplace has been characterized by islands of competence. You could ask an AI to summarize a document in Word or generate a chart in Excel, but the moment you switched applications, the AI’s short-term memory (its context) was wiped clean. This created friction, forcing human workers to act as the bridge, copying and pasting findings between tools.

Anthropic’s recent update to its Claude add-ins for Microsoft Excel and PowerPoint, specifically the introduction of shared context and reusable workflows, is more than a minor feature bump; it is a foundational shift toward truly integrated AI. This development pushes large language models (LLMs) out of the chatbot box and into the engine room of continuous business operations.

To appreciate the magnitude of this move, we must look beyond the marketing copy and examine the competitive landscape, the underlying technical hurdles being cleared, and the profound implications for how knowledge work will be performed.

The Paradigm Shift: From Query-Response to Continuous Workflow

Imagine this scenario:

A financial analyst uses Claude in Excel to clean a massive dataset, identify the top three underperforming regions, and calculate the necessary budget adjustment for Q3. Previously, they would then manually open PowerPoint, copy the key findings, and prompt a different AI instance (or the same one without memory) to create slides. The AI would have to be reminded of the budget figures calculated seconds before.

With shared context, the assistant carries the task’s history across the application boundary. The instance running the Excel analysis *knows* what it calculated, and the instance running in PowerPoint picks up that context without the user restating it. It’s like having a single, incredibly attentive colleague who never forgets what you discussed five minutes ago, regardless of which file you are looking at.

The Technical Breakthrough: Architecting Cross-Application Memory

For the technically minded, this is where the real excitement lies. Context sharing is challenging because software applications are inherently siloed. Excel exposes its data through APIs built around cells, ranges, and formulas, while PowerPoint deals in slides, shapes, and narrative text. Bridging this gap seamlessly requires sophisticated engineering, and the move suggests that Anthropic’s agentic tooling is maturing.

An AI agent is more than a chatbot; it's a system capable of planning, reasoning, and executing multiple steps to achieve a goal, often utilizing external tools (like Excel functions). The technical challenge isn't just remembering the last prompt; it’s structuring the ongoing "state" of the entire workflow:

- State Management: The system must effectively save the *intermediate results*—the temporary variables, the specific data ranges analyzed, and the current hypothesis—in a format accessible across applications.

- Vector Context: The specifics are proprietary, but this may involve vector databases or specialized context windows in which structured data (like a spreadsheet analysis) is converted into a readily retrievable semantic format, allowing the AI to recall the *meaning* of the data, not just the raw text.
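To make the state-management idea concrete, here is a minimal Python sketch of a shared workflow context. It assumes the intermediate results are serialized to JSON and handed from an Excel-side step to a PowerPoint-side step, and it deliberately omits any vector-retrieval layer. The names `WorkflowContext`, `excel_step`, and `powerpoint_step`, the range `Sales!A2:C6`, and the rule that the budget adjustment equals the three weakest regions’ combined shortfall are all hypothetical; Anthropic has not published how Claude’s add-ins actually store context.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class WorkflowContext:
    """Hypothetical shared state carried from one application step to the next."""

    task: str
    data_range: str = ""
    results: dict = field(default_factory=dict)
    notes: list = field(default_factory=list)

    def to_json(self) -> str:
        # Serialize to a neutral format that any application-side step could read.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, payload: str) -> "WorkflowContext":
        # Rebuild the context from its serialized form.
        return cls(**json.loads(payload))


def excel_step(sales: dict, ctx: WorkflowContext) -> WorkflowContext:
    """Hypothetical analysis step: find the three weakest regions versus target."""
    # Gap per region = actual - target; the most negative gaps are the weakest.
    gaps = {region: actual - target for region, (actual, target) in sales.items()}
    worst = sorted(gaps, key=gaps.get)[:3]
    ctx.data_range = "Sales!A2:C6"  # hypothetical range analyzed
    ctx.results["underperformers"] = worst
    # Assumed rule: the adjustment covers the combined shortfall of those regions.
    ctx.results["budget_adjustment"] = -sum(gaps[r] for r in worst)
    ctx.notes.append("Adjustment = combined shortfall of the three weakest regions.")
    return ctx


def powerpoint_step(payload: str) -> list:
    """Hypothetical presentation step: draft slide bullets from the saved context."""
    ctx = WorkflowContext.from_json(payload)
    regions = ", ".join(ctx.results["underperformers"])
    return [
        f"Underperforming regions: {regions}",
        f"Proposed Q3 budget adjustment: {ctx.results['budget_adjustment']:,}",
    ]


if __name__ == "__main__":
    # (actual, target) per region; figures are invented for illustration.
    sales = {
        "North": (120, 150),
        "South": (90, 100),
        "East": (200, 180),
        "West": (70, 110),
        "Central": (95, 95),
    }
    ctx = excel_step(sales, WorkflowContext(task="Q3 regional review"))
    for bullet in powerpoint_step(ctx.to_json()):
        print(bullet)
```

With the sample data, the three weakest regions are West, North, and South, and the PowerPoint-side step recovers the 80-unit adjustment from the serialized context instead of recomputing it or asking the user again.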

Broader research into LLM workflow orchestration and memory across applications points the same way: the race is on to build persistent, multi-tool AI employees. Anthropic’s update suggests it is making real progress toward architectures reliable enough for daily business use.

The Battle for the Desktop: Benchmarking Against the Incumbent

Anthropic’s announcement lands squarely in the most competitive arena of enterprise software: the productivity suite. Microsoft, whose Copilot is built directly into Office, holds the advantage of native access to the ecosystem. Any competitor must therefore offer a compelling reason for companies to adopt a separate, parallel AI layer.

The shared context feature appears to be that differentiator. For organizations wary of committing entirely to the Microsoft ecosystem, or those who prefer Anthropic’s particular model strengths (often cited as safety and reasoning), Claude now offers a credible alternative for workflow continuity. Comparing it with how Microsoft Copilot persists context across the Office suite shows where Claude needs to excel.

If Claude can handle complex data manipulation in Excel (which involves specialized reasoning beyond mere text generation) and seamlessly transfer that structured output to PowerPoint for narrative building, it sets a high bar. This forces IT decision-makers to evaluate not just model quality, but the efficiency of the *handoffs* between different software tasks.

Reusable Workflows: Automation at Scale

Beyond one-off continuity, the addition of reusable workflows hints at the democratization of sophisticated automation. This means an analyst can build a complex, multi-step process (e.g., "Analyze sales data, flag anomalies over 15%, draft a Slack summary, and create a preliminary slide deck outline") and save it as a template. Future teams can execute this entire sequence with a single trigger.

This moves AI from being a tool that answers questions to being a framework that executes entire standard operating procedures (SOPs). Because a saved workflow can be shared, the productivity gains extend from individual users to whole departments.
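A reusable workflow can be pictured as an ordered list of steps that share one state object. The Python sketch below assumes exactly that and nothing more: `SALES_REVIEW` is a hypothetical saved template, the 15% threshold comes from the example above, and `draft_summary` only drafts message text rather than posting to Slack. It illustrates the "save once, run with one trigger" idea, not how Claude actually stores workflows.

```python
from typing import Any, Callable, Dict, List

State = Dict[str, Any]
Step = Callable[[State], State]


def flag_anomalies(state: State) -> State:
    # Flag regions whose period-over-period change exceeds the threshold.
    threshold = state["params"].get("threshold", 0.15)
    flagged = {}
    for region, (previous, current) in state["sales"].items():
        change = (current - previous) / previous
        if abs(change) > threshold:
            flagged[region] = round(change, 3)
    state["anomalies"] = flagged
    return state


def draft_summary(state: State) -> State:
    # Draft message text only; posting to Slack is out of scope here.
    lines = [f"{r}: {c:+.1%}" for r, c in sorted(state["anomalies"].items())]
    if lines:
        state["summary"] = "Sales anomalies over threshold:\n" + "\n".join(lines)
    else:
        state["summary"] = "No anomalies."
    return state


def outline_deck(state: State) -> State:
    # Preliminary slide outline: one deep-dive slide per flagged region.
    deep_dives = [f"Deep dive: {r}" for r in sorted(state["anomalies"])]
    state["outline"] = ["Title: Sales review", "Anomalies"] + deep_dives
    return state


# Hypothetical saved template: an ordered list of steps.
SALES_REVIEW: List[Step] = [flag_anomalies, draft_summary, outline_deck]


def run_workflow(template: List[Step], sales: dict, **params: Any) -> State:
    # A single trigger runs every step in order over one shared state.
    state: State = {"sales": sales, "params": params}
    for step in template:
        state = step(state)
    return state


if __name__ == "__main__":
    # (previous, current) sales per region; figures are invented.
    sales = {"North": (100, 120), "South": (200, 190), "West": (50, 40), "East": (80, 84)}
    result = run_workflow(SALES_REVIEW, sales)
    print(result["summary"])
    print(result["outline"])
```

Keeping each step a plain function of the shared state is what makes the template reusable: parameters such as `threshold` are supplied at run time, so another team can rerun the same sequence with a different cutoff (for example, `run_workflow(SALES_REVIEW, sales, threshold=0.25)`).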

The Practical Implications: Speed, Security, and Governance

The shift to deeply integrated, context-aware AI has immediate, tangible consequences for businesses, particularly in regulated sectors.

1. Velocity and Contextual Depth

When context is shared, the cycle time for complex reporting should drop sharply. The AI handles the tedious assembly and cross-referencing, freeing the human user to focus on strategic oversight and final decision-making. The work product moves from "drafting" to "finalizing" much faster.

2. The Security Imperative

However, deeper integration means deeper data access. The AI is now weaving together proprietary spreadsheets and internal presentation strategy documents, which raises the governance stakes significantly. Organizations must rigorously investigate the LLM provider’s data handling practices. As discussions of AI assistant adoption in regulated industries show, trust is the ultimate currency.

For CTOs and CISOs, the question is no longer "Is the data encrypted?" but rather, "Where is the context stored, who has access to the contextual memory, and does the LLM vendor use that persistent context for retraining?" Broader cloud support mentioned in the announcement is a double-edged sword: it means flexibility but also expands the perimeter that needs rigorous security monitoring.
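Questions about where context lives, who can read it, and how long it is kept become easier to audit when they are expressed as testable rules. The Python sketch below assumes a simple in-memory store with a fixed retention window and owner-only reads; `ContextStore` and its methods are hypothetical and say nothing about how Anthropic or any cloud provider actually retains add-in context.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ContextEntry:
    owner: str
    content: str
    created_at: float


@dataclass
class ContextStore:
    """Hypothetical in-memory store with a retention window and owner-only reads."""

    retention_seconds: float
    entries: Dict[str, ContextEntry] = field(default_factory=dict)

    def put(self, key: str, owner: str, content: str, now: Optional[float] = None) -> None:
        created = time.time() if now is None else now
        self.entries[key] = ContextEntry(owner, content, created)

    def purge_expired(self, now: Optional[float] = None) -> int:
        # Delete every entry older than the retention window; return how many.
        now = time.time() if now is None else now
        expired = [
            k for k, e in self.entries.items()
            if now - e.created_at >= self.retention_seconds
        ]
        for k in expired:
            del self.entries[k]
        return len(expired)

    def get(self, key: str, requester: str, now: Optional[float] = None) -> Optional[str]:
        # Enforce retention before every read, then check access.
        self.purge_expired(now)
        entry = self.entries.get(key)
        if entry is None:
            return None
        if entry.owner != requester:
            raise PermissionError(f"{requester} may not read context {key!r}")
        return entry.content
```

The optional `now` argument exists so retention rules can be tested deterministically; a real deployment would also need encryption, audit logging, and a documented answer to the retraining question, none of which this sketch covers.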

3. Market Dynamics and Platform Choice

This competitive maneuver by Anthropic reinforces the landscape described in 2024 analyses of generative AI competition in business productivity suites. The market is splitting into two camps:

- Platform-Native AI: Tools deeply embedded within existing ecosystems (e.g., Microsoft Copilot in Azure/Office).

- Best-of-Breed AI: Strong standalone models (like Claude) offered via flexible add-ins designed to integrate with *any* platform, competing on raw capability and workflow finesse.

The ability to seamlessly jump between Excel and PowerPoint using shared memory means users can now build sophisticated, cross-application workflows without being strictly confined to a single vendor's walled garden, offering powerful negotiating leverage to enterprises.

Actionable Insights for Leaders and Technologists

How should businesses respond to this new level of AI integration?

For Business Leaders (Focus on ROI and Risk):

- Pilot Contextual Workflows: Identify three high-friction, multi-step reports (e.g., Quarterly Business Reviews) currently requiring manual data transfer. Pilot the Claude add-ins specifically to measure the reduction in time spent on data synthesis versus strategic analysis.

- Establish AI Governance Checkpoints: Require every shared context deployment to align with your data classification policies. Before deployment, security and compliance teams must audit how context memory is purged or retained.

- Evaluate Multi-Vendor Strategies: Do not assume platform lock-in is inevitable. Evaluate whether a "best-of-breed" AI provider (like Anthropic) offers better ROI for specific high-value tasks than a fully integrated but potentially less flexible platform solution.

For Technologists (Focus on Architecture and Adoption):

- Understand the Memory Layer: If you are integrating any LLM into enterprise software, demand transparency regarding state management. Is the context held locally, server-side, or within the vendor’s infrastructure?

- Prioritize Reusable Agent Blueprints: Begin cataloging routine, multi-application tasks now. Designing these as "reusable workflows" today means that when your preferred LLM providers release more sophisticated agentic tooling, you can deploy them at scale quickly.

- Test the Data Fidelity Handoff: Rigorously test how mathematical precision (Excel) translates into narrative description (PowerPoint). A small error in context transfer can lead to major strategic miscommunication.
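One way to test the handoff is to check that every figure computed in the spreadsheet reappears, within a tolerance, in the generated narrative. This Python sketch assumes the source figures are available as a dictionary and the slide text as a plain string; `check_handoff` is a hypothetical helper, and its regular expression only recognizes plain numerals and percentages, so wording such as "1.25 million" would be reported as missing.

```python
import math
import re

# Plain numerals with optional thousands separators, decimals, and a trailing %.
NUMBER = re.compile(r"-?\d[\d,]*(?:\.\d+)?%?")


def extract_numbers(text: str) -> list:
    values = []
    for token in NUMBER.findall(text):
        is_percent = token.endswith("%")
        value = float(token.rstrip("%").replace(",", ""))
        # Store percentages as fractions so "18.4%" compares with 0.184.
        values.append(value / 100 if is_percent else value)
    return values


def check_handoff(source: dict, narrative: str, rel_tol: float = 1e-3) -> dict:
    """Return source figures that do not appear, within tolerance, in the narrative."""
    found = extract_numbers(narrative)
    return {
        name: value
        for name, value in source.items()
        if not any(math.isclose(value, f, rel_tol=rel_tol) for f in found)
    }


if __name__ == "__main__":
    source = {"budget_adjustment": 1_250_000, "shortfall": 0.184}
    slide = "We propose a Q3 budget adjustment of 1,520,000, driven by an 18.4% shortfall."
    print(check_handoff(source, slide))
```

A check like this catches transposed digits (1,250,000 rendered as 1,520,000) but not errors of meaning, such as a correct figure attached to the wrong region, so it complements human review rather than replacing it.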

Conclusion: The Era of the Fluid Digital Employee

The integration of shared context between Excel and PowerPoint marks the transition from AI as a helpful utility to AI as a genuine collaborator capable of executing complex, multi-application business processes. When an AI can fluidly carry intelligence from a spreadsheet to a presentation deck, retaining every critical detail along the way, the definition of "productivity" is rewritten.

This path points toward autonomous agents managing end-to-end projects. The competition between Anthropic and others is forcing rapid maturation in workflow orchestration, and the next generation of enterprise software looks set not just to *help* us work but to work alongside us, navigating the digital spaces we inhabit.