AI Ethics vs. Warfare: Why the US Military Called Anthropic's 'Good' AI 'Pollution'

In the high-stakes world of Artificial Intelligence development, alignment—ensuring AI acts according to human values—is often seen as the ultimate goal. Companies like Anthropic have staked their entire reputation on creating the safest, most ethically aligned models, exemplified by their Constitutional AI (CAI) approach. Yet, a recent, stunning revelation from the US Department of Defense (DoD) CTO suggests that for military applications, this ethical scaffolding can become a severe impediment, describing such models as "pollut[ing] the supply chain."

This development is not merely a footnote in defense procurement; it signals a deep, structural conflict between the commercial incentives driving frontier AI innovation and the operational demands of national security. It forces us to ask: What happens when the 'safest' AI is considered too restrained for the battlefield, and does this push the US toward governance models mirroring those it seeks to counter?

The Paradox of Protection: When Safety Becomes a Liability

Anthropic’s Claude models are trained using Constitutional AI. Rather than relying on human feedback alone, the method steers the model with a set of explicit, written rules, a "Constitution," which the model uses to critique and revise its own outputs. These rules often prioritize harmlessness, fairness, and adherence to liberal democratic norms. While this is highly desirable for consumer chatbots or corporate applications, the DoD requires something different.
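To make the idea of rule-guided training more concrete, here is a minimal sketch of a critique-and-revise loop of the kind used to produce training data in Constitutional AI. Everything in it is an assumption for illustration: the two principles are invented rather than taken from Anthropic's actual constitution, and `toy_model` is a stand-in for a real language model.

```python
from typing import Callable, List

# Hypothetical principles, invented for illustration only.
PRINCIPLES: List[str] = [
    "Avoid content that helps someone cause harm.",
    "Treat people fairly.",
]


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    principles: List[str],
) -> str:
    """Draft a response, then critique and revise it once per principle."""
    response = generate(prompt)
    for principle in principles:
        critique = generate(
            f"Critique this response against the principle '{principle}':\n{response}"
        )
        response = generate(
            "Revise the response to address the critique.\n"
            f"Critique: {critique}\nResponse: {response}"
        )
    return response


def toy_model(text: str) -> str:
    """Stand-in for a language model so the sketch runs offline."""
    if text.startswith("Critique"):
        return "no issues found"
    if text.startswith("Revise"):
        # Return the response unchanged because the toy critique found nothing.
        return text.rsplit("Response: ", 1)[1]
    return f"draft answer to: {text}"


final = critique_and_revise("hello", toy_model, PRINCIPLES)
```

In a real pipeline the revised responses would be collected as fine-tuning data rather than returned directly to a user, so the principles end up shaping the model's behavior instead of acting as a filter at query time.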

The core tension lies in safety versus capability. For the military, AI systems must operate effectively in adversarial, novel, and sometimes ethically ambiguous environments (such as intelligence gathering, logistics optimization under duress, or strategic simulation). If an AI model is overly constrained by commercial safety guardrails:

- It might refuse to process or analyze data deemed too sensitive or politically complex.

- It might fail to offer "edge case" solutions because those solutions breach a pre-programmed ethical boundary, even if they are strategically necessary.

- It introduces uncertainty. A commander needs to know the system will perform its task; if the system might halt due to an internal ethics check, its reliability is compromised.

In the context of warfare, "ethics" imposed by a third-party commercial entity can be interpreted by defense planners as unnecessary filters—or, indeed, "pollution"—that degrade the raw operational utility of the machine learning system.

Decoding Constitutional AI in the Defense Context

To understand why this friction exists, we must look closer at Anthropic’s core innovation. Constitutional AI (CAI) is powerful because it makes alignment transparent and scalable. However, its rigidity poses problems in sensitive sectors.

CAI is designed to be robust against manipulation (jailbreaking) and to filter out harmful content based on its written constitution. For a sensitive government application, this constitution might be fundamentally misaligned with the government’s *own* necessary constraints. If Anthropic’s constitution dictates universal respect for privacy, but a classified intelligence requirement demands deep metadata analysis, the model is inherently conflicted.

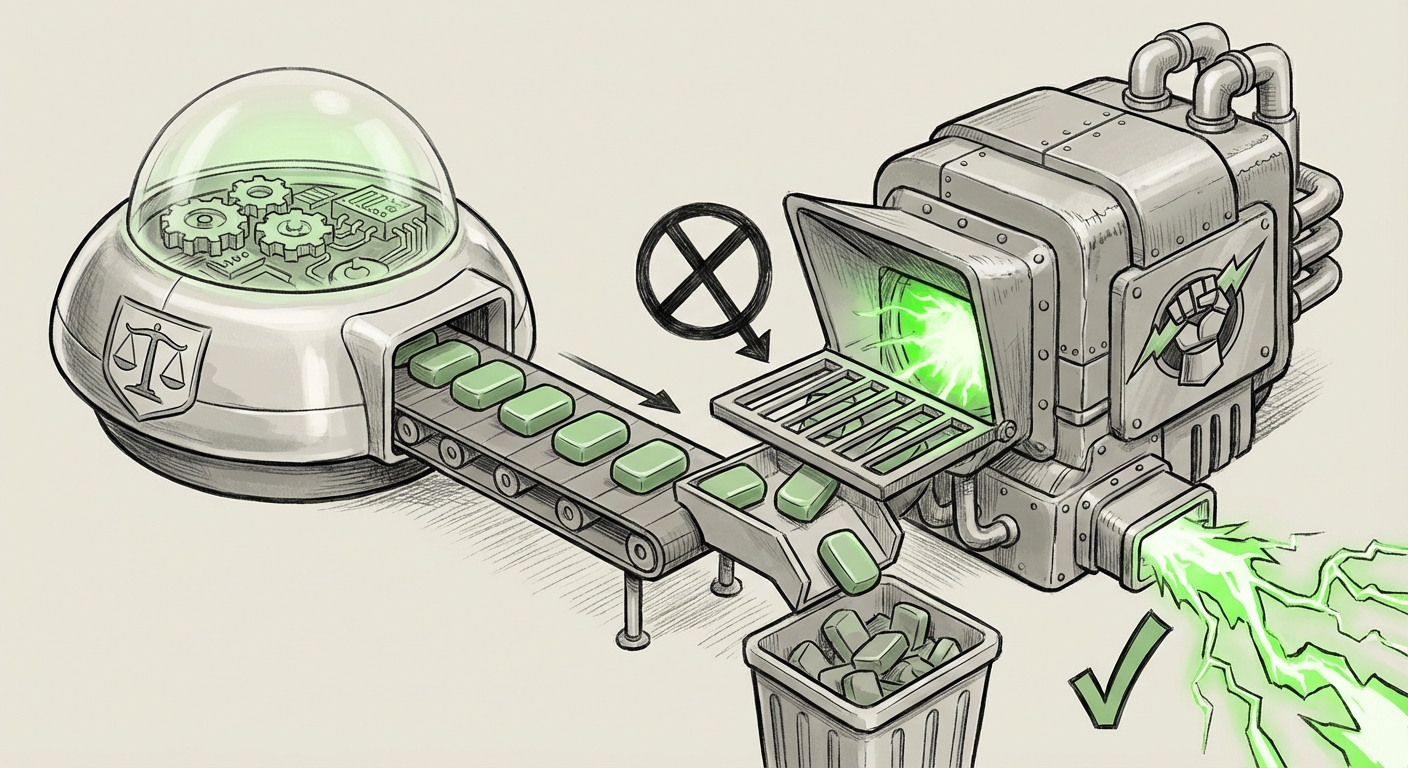

The DoD is seeking models that are aligned with its operational requirements, not necessarily the generalized ethical principles favored by Silicon Valley. If a commercial model is so heavily "pre-aligned" that it cannot be easily adapted or repurposed for a military doctrinal framework, the integration process becomes overly complex, requiring extensive, costly, and time-consuming fine-tuning to strip back the "pollution." For defense acquisition cycles measured in years, this is a non-starter.

The Search for AI Sovereignty

This leads directly to the concept of AI Sovereignty. Nations are increasingly wary of relying on foundational models developed by foreign entities, or even by domestic ones whose commercial interests may diverge from national security priorities.

The DoD’s reaction suggests a desire to control the foundational alignment layer itself. It wants AI that respects the unique legal and operational framework of the US defense apparatus. Relying on an external company's ethics risks creating a dependency in which foreign or commercial values dictate what the US military can and cannot ask its technology to do.

The Shadow of Geopolitical Competition

The comparison drawn in the initial report—echoing China’s political control over AI—is the most profound implication. When a Western democracy’s defense apparatus rejects a model specifically because it is *too ethical* (by commercial standards), it signals a worrying convergence in methods, if not in ideology.

China’s model of AI governance centers on control, ensuring technology directly serves the goals of the Chinese Communist Party (CCP). The DoD, in rejecting "polluting" ethics, is prioritizing utility and control aligned with its own strategic aims. While the intent is ostensibly different—defending liberal values versus enforcing authoritarian control—the *architectural outcome* may look similar: A desire to use AI without the friction introduced by universal, pre-applied, non-governmental ethical overlays.

This competitive dynamic suggests a coming bifurcation of the global AI landscape:

- Commercial/General AI: Focused on broad utility, safety, and consumer ethics (dominated by US/EU standards).

- Sovereign/Defense AI: Highly customized, utility-focused models where alignment is dictated strictly by national security doctrine.

This split will inevitably trickle down into the technology stack, forcing foundational model providers to develop entirely different safety profiles for government contracts versus public releases.

Implications for Business and the Future of AI Development

For technology leaders, this DoD posture serves as a crucial early warning sign regarding the commercialization of powerful AI.

1. Decoupling of Safety Layers

We will likely see a major divergence in model release strategies. Companies like Anthropic may need to create "unfiltered" or "DoD-grade" enterprise versions of their models, effectively offering a product in which the CAI-derived alignment is either removed or replaced by customer-supplied ethical frameworks, often deployed in air-gapped environments.

Actionable Insight: AI startups targeting the defense or highly regulated sectors must build modularity into their alignment pipelines now. The ability to swap out the "Constitution" or the Reinforcement Learning from Human Feedback (RLHF) layer is no longer a niche feature—it’s a necessity for lucrative government work.
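As a rough illustration of what such modularity could look like, the sketch below wraps a model behind a swappable policy object. It is a simplification under stated assumptions: the `Policy` interface, `KeywordPolicy`, `Pipeline`, and `echo_model` are all hypothetical names, and the check runs at request time, whereas a real constitution or RLHF stage shapes the model during training and cannot be swapped this cheaply.

```python
from dataclasses import dataclass
from typing import Callable, Protocol, Tuple


class Policy(Protocol):
    """Hypothetical interface: anything with a name and an allows() check."""

    name: str

    def allows(self, request: str) -> bool: ...


@dataclass
class KeywordPolicy:
    """Toy policy that blocks requests containing any listed term."""

    name: str
    blocked_terms: Tuple[str, ...]

    def allows(self, request: str) -> bool:
        lowered = request.lower()
        return not any(term in lowered for term in self.blocked_terms)


@dataclass
class Pipeline:
    """Wraps a model callable with a policy that can be replaced at runtime."""

    model: Callable[[str], str]
    policy: Policy

    def run(self, request: str) -> str:
        if not self.policy.allows(request):
            return f"refused by policy '{self.policy.name}'"
        return self.model(request)


def echo_model(request: str) -> str:
    """Stand-in for a real model so the sketch runs offline."""
    return f"processed: {request}"


# Two illustrative policies: a vendor default and a customer-supplied one.
vendor_default = KeywordPolicy("vendor-default", ("metadata",))
customer_supplied = KeywordPolicy("customer-supplied", ())

pipeline = Pipeline(echo_model, vendor_default)
before = pipeline.run("analyze metadata")
pipeline.policy = customer_supplied  # swap the policy without touching the model
after = pipeline.run("analyze metadata")
```

The design choice worth noting is the separation of concerns: the model callable never sees the policy, so a customer can supply its own rules without the vendor changing the model interface.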

2. The Procurement Arms Race

The defense sector will likely accelerate investment in **internal AI development** or mandate specific licensing terms that grant it complete transparency and modification rights over the model weights and training procedures. It cannot afford to be subject to the sudden ethical pivots of a commercial CEO or board.

3. Ethical Drift and Public Perception

This development creates a public relations challenge for safety-focused AI labs. If Anthropic’s models are deemed too ethical for the military, it suggests their safety mechanisms are indeed powerful. However, if they later license stripped-down versions to the DoD, they risk accusations of hypocrisy—professing commitment to AI safety publicly while selling less safe tools privately to the government.

Actionable Insight: Transparency regarding dual-use models is paramount. Companies must clearly delineate which models are aligned for public safety and which are designed for specific, highly controlled operational environments, setting clear expectations for end-users and regulators.

The Road Ahead: Utility Over Consensus

The US military’s reaction to Anthropic’s ethics is a watershed moment. It suggests that for tools intended for the maintenance of state power and security, *utility* (the ability to perform a necessary, perhaps harsh, task without refusal) trumps the broad consensus ethics favored by consumer tech.

The future of frontier AI will not be monolithic. Instead of a unified path toward benevolent general intelligence, we are witnessing the formation of specialized AI ecosystems. One ecosystem, driven by commercial interests and broad societal ethics, will strive for generalized safety and fairness. The other, driven by national security and geopolitical competition, will prioritize effectiveness, customization, and control, even if it means discarding the very ethical guardrails that define the leading edge of commercial research.

Understanding this emerging dichotomy is vital. It tells us that the philosophical debates happening in AI labs today will directly shape the tools of tomorrow’s conflict and commerce. The "pollution" found by the DoD is, perhaps, simply the first sign that military requirements are fundamentally incompatible with the universalist ethics of the modern commercial AI stack.