The LLM Operating System: Analyzing GPT-5.4 and the Future of AI Orchestration

The pace of innovation in Large Language Models (LLMs) has always been staggering, but recent reports suggesting that models like the theoretical GPT-5.4 are evolving to act as operating systems signal something far more profound than algorithmic refinement. This isn't just about generating better poetry or summarizing longer documents; it is a foundational shift in which AI moves from being a tool to being the orchestrator of complex digital workflows. As an AI analyst, I read this development as a sign that we are standing at the precipice of true, pervasive AI agency.

The Leap: From Predictor to Orchestrator

For years, the success of models like GPT-4 was measured by their proficiency in language tasks—translation, code generation, or conversational ability. They were incredibly smart parrots capable of impressive mimicry. The "LLM as an OS" concept, as highlighted in recent technical deep dives like *The Sequence AI of the Week #822*, completely reframes this capability.

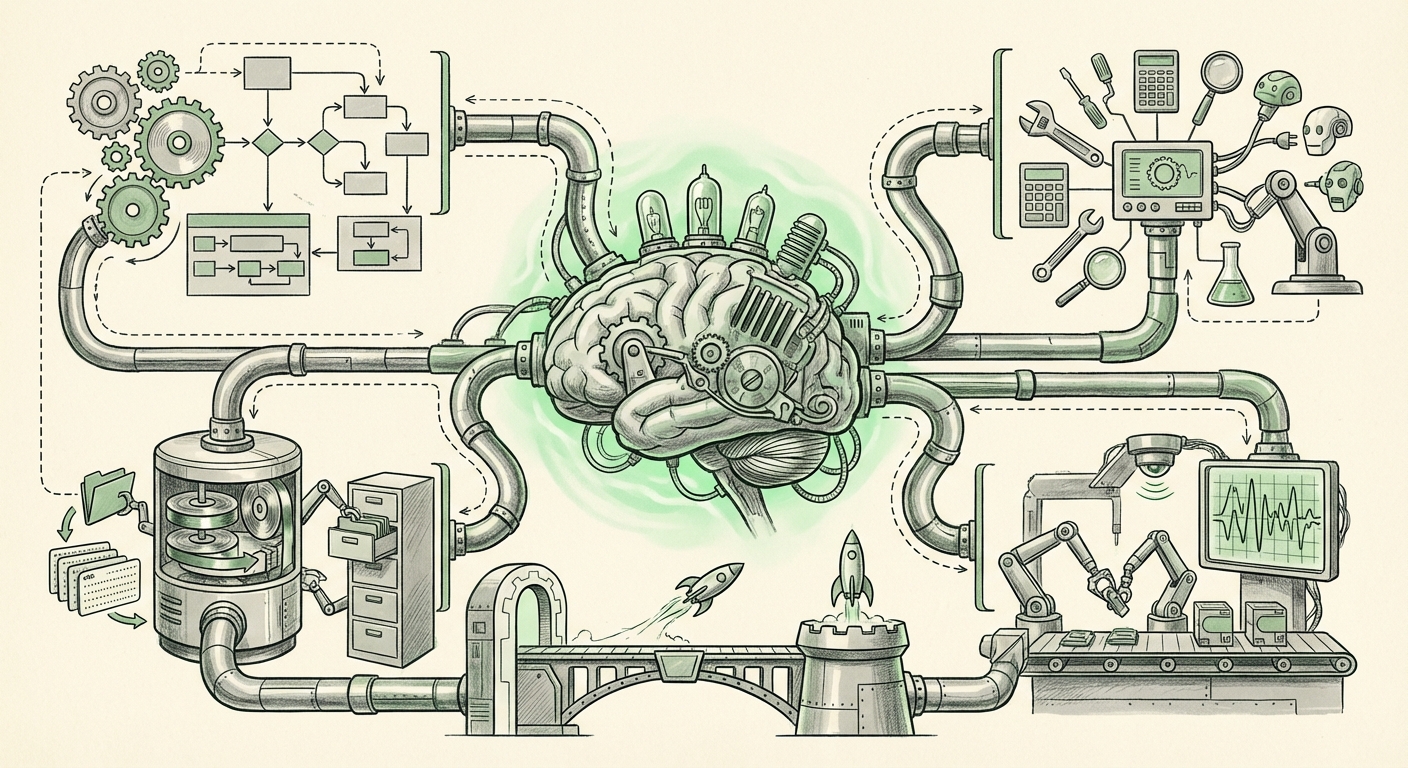

Think about the operating system on your computer: Windows, macOS, or Linux. It doesn't just write text; it manages memory, controls hardware access, schedules processes, and lets different applications talk to each other reliably. An LLM acting as an OS aims to play the same coordinating role for digital work:

- Planning & Decomposition: Breaking a large goal ("Book me a complex international trip within budget") into sequential, manageable sub-tasks.

- Tool Use & API Management: Knowing exactly which external tools (search engines, databases, booking APIs, code interpreters) to call, in what order, and how to interpret the results.

- State Management: Remembering where it is in a long process, correcting errors autonomously, and maintaining context across days or weeks of work.

This capability—orchestration—is the bridge between narrow AI assistance and broad, autonomous agency. It means the model is no longer just a content generator; it is becoming the **chief executive** of a digital workspace.
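
The three responsibilities listed above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular model works: the `plan` function is a hard-coded stand-in for the model's planner, and the tool names `search_flights` and `check_budget`, the route, and the budget limit are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str       # name of the tool to call
    argument: str   # input passed to that tool


@dataclass
class RunState:
    goal: str
    # State management: every (tool, result) pair is recorded as the run progresses.
    completed: list = field(default_factory=list)


# Tool use: hypothetical tools; real ones would wrap search, booking, or database APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_flights": lambda query: f"3 flights found for {query}",
    "check_budget": lambda amount: "within budget" if float(amount) <= 2000 else "over budget",
}


def plan(goal: str) -> list:
    """Planning: a stand-in for the model that returns a fixed decomposition."""
    return [Step("search_flights", "LIS to NRT"), Step("check_budget", "1850")]


def run(goal: str) -> RunState:
    state = RunState(goal)
    for step in plan(goal):
        result = TOOLS[step.tool](step.argument)
        state.completed.append((step.tool, result))
    return state


state = run("Book an international trip within budget")
for tool, result in state.completed:
    print(f"{tool}: {result}")
```

In a real system the planner would be a model call and the state would be persisted between sessions; the sketch only shows how the three roles fit together.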

Corroborating the Trend: Architecture and Agentic Workflows

This vision isn't appearing in a vacuum. The industry has been building the scaffolding for this OS-like behavior for some time. The very premise that LLMs can become operating systems is being validated by the rise of powerful agentic frameworks.

Searching for confirmation of this shift (for example, with the query `"LLM as an operating system" architecture trends`) points to the ongoing development of open-source and commercial agent platforms. Frameworks like LangChain or Microsoft’s AutoGen are essentially attempting to build the user interface and scheduling layer atop a powerful core model. These frameworks formalize the required components:

- The Brain (LLM): The reasoning core (GPT-5.4 equivalent).

- The Memory: Short-term context and long-term knowledge retrieval.

- The Tools: Defined interfaces (functions/APIs) the Brain can confidently use.

The implication, if the reports hold, is that OpenAI's internal progress on GPT-5.4 is converging with external community efforts. If the underlying model possesses superior internal reasoning and planning capabilities, it requires less external scaffolding to function reliably as an orchestrator. This convergence supports the roadmap: the next competitive battleground isn't just model size, but the **robustness of tool execution and planning logic** within the model itself.
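
To make the Brain / Memory / Tools split concrete, here is a small sketch of how the three components might be wired together. It does not reproduce the API of LangChain or AutoGen; the `Agent` and `Memory` classes and the `keyword_brain` function are hypothetical names, and the "brain" routes on a keyword rather than reasoning.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    short_term: list = field(default_factory=list)   # recent context
    long_term: dict = field(default_factory=dict)    # retrievable knowledge

    def remember(self, note: str) -> None:
        self.short_term.append(note)


@dataclass
class Agent:
    brain: Callable[[str, Memory], str]   # reasoning core: picks a tool name
    memory: Memory
    tools: dict                           # tool name -> zero-argument callable

    def handle(self, request: str) -> str:
        tool_name = self.brain(request, self.memory)
        result = self.tools[tool_name]()
        self.memory.remember(f"{request} -> {tool_name} -> {result}")
        return result


def keyword_brain(request: str, memory: Memory) -> str:
    """Stand-in for an LLM: routes on a keyword instead of reasoning."""
    return "clock" if "time" in request.lower() else "echo"


agent = Agent(
    brain=keyword_brain,
    memory=Memory(),
    tools={"clock": lambda: "12:00", "echo": lambda: "ok"},
)
answer = agent.handle("What time is it?")
print(answer)
```

The point of the separation is that a stronger brain can be swapped in without touching the memory or tool definitions.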

Market Disruption: Transforming the Software Development Lifecycle

If an LLM can function as an OS, the implications for software development and enterprise IT are transformative. We move from using AI to *write* code to using AI to *manage* the entire development and operational stack.

Searching on `impact of multimodal LLMs on software development lifecycle` shows how this centralization of control could affect business operations. Consider a modern business process:

- Currently, a request flows from a manager (human input) to a ticketing system (process layer), which triggers scripts (execution layer) managed by various microservices (application layer).

- With an LLM OS, the manager gives the goal directly to the AI. The AI autonomously decides which APIs need modification, generates the necessary test code, runs the deployment pipeline (like Jenkins or GitHub Actions), monitors the results, and reports back—all without direct human intervention in the middle steps.

This level of abstraction threatens to significantly compress the middle layers of traditional software stacks. Venture capitalists and analysts are already focused on this threat (as indicated by interest in reports like *Will AI Agents Replace the Traditional Software Stack?*). The value shifts from building specific, repetitive software components to ensuring the AI OS has secure, correct access to high-value enterprise data and critical systems.

The Challenge of Trust and Verification

The biggest hurdle in this OS transition is trust. When an LLM is merely generating text, a human usually reads the output before anything happens, so errors tend to be caught. When it is running code, accessing financial data, or deploying updates, errors can become catastrophic. This forces businesses to invest heavily in:

- Sandboxing: Ensuring the AI Agent OS operates in strictly controlled, reversible environments.

- Verification Layers: Building secondary, non-AI verification tools to double-check the Agent's execution plans before they hit production systems.
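
A verification layer of the kind described above can be entirely deterministic. The sketch below checks a proposed plan against an allowlist and a sandbox boundary before anything runs; the tool names, target environments, and the `PlannedAction` structure are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedAction:
    tool: str
    target: str        # environment the action would touch
    reversible: bool


ALLOWED_TOOLS = {"run_tests", "deploy"}      # hypothetical allowlist
SANDBOX_TARGETS = {"staging", "sandbox"}     # hypothetical sandbox boundary


def verify_plan(plan: list) -> list:
    """Return a list of violations; an empty list means the plan may proceed."""
    violations = []
    for index, action in enumerate(plan):
        if action.tool not in ALLOWED_TOOLS:
            violations.append(f"step {index}: tool '{action.tool}' is not allowlisted")
        if action.target not in SANDBOX_TARGETS:
            violations.append(f"step {index}: target '{action.target}' is outside the sandbox")
        if not action.reversible:
            violations.append(f"step {index}: action is not reversible")
    return violations


plan = [
    PlannedAction("run_tests", "staging", reversible=True),
    PlannedAction("drop_table", "production", reversible=False),
]
violations = verify_plan(plan)
for line in violations:
    print(line)
```

Because the checker contains no model calls, its behavior does not depend on the agent being right about its own plan.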

The Theoretical Leap: Emergent Capabilities and AGI Context

Why are these models suddenly capable of OS-like reasoning? This is where theoretical research becomes essential; a useful starting query is `emergent capabilities in large language models beyond text prediction`.

The ability to use external tools (to access a calculator, run code, or query a database) is fundamentally different from simply predicting the next token based on the training data. This external interaction unlocks true system-level reasoning. When a model can observe the *output* of an action, integrate that new data into its internal state, and then plan the next action based on that reality, it mimics the feedback loops essential to intelligence.

This mirrors the argument in research discussing "The Next Frontier: How Tool Use Unlocks System-Level Reasoning." The OS concept is the natural, highly practical culmination of this research path. It suggests that the architecture is mature enough to move past simple input/output towards persistent, goal-oriented computation.
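
The act, observe, integrate, and re-plan cycle described above can be shown with a toy loop. Everything here is illustrative: `square_tool` stands in for any external tool, and `choose_next` is a hand-written policy standing in for the model's planning step.

```python
from typing import Optional


def square_tool(n: int) -> int:
    """External tool: the policy cannot know the result until it calls this."""
    return n * n


def choose_next(observations: list, threshold: int) -> Optional[int]:
    """Stand-in policy: decide the next action from what has been observed so far."""
    if not observations:
        return 1
    last_input, last_output = observations[-1]
    if last_output > threshold:
        return None            # goal reached, stop acting
    return last_input * 2      # output too small, try a larger input


def run_loop(threshold: int, max_steps: int = 20) -> list:
    observations = []          # internal state built from tool outputs
    for _ in range(max_steps):
        action = choose_next(observations, threshold)
        if action is None:
            break
        observations.append((action, square_tool(action)))   # act, then observe
    return observations


trace = run_loop(threshold=500)
print(trace)
```

Each decision depends on the previous tool output rather than on anything known in advance, which is the property the paragraph above attributes to tool-using models.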

Practical Implications and Actionable Insights for Leaders

For both technical teams and business strategists, the emergence of the LLM OS requires immediate, forward-looking adjustments. The question is no longer *if* AI will manage workflows, but *when* and *how* we will control it.

For Technical Teams (Engineers & Researchers):

- Master Function Calling & Tool Security: Deepen expertise in designing secure, reliable APIs (functions) that the LLM OS can call. Treat every exposed tool endpoint as a potential security vector.

- Focus on State and Memory: Develop robust, vector-based memory architectures that allow the Agent OS to maintain context over extremely long sequences of execution, which is critical for complex project management.

- Embrace Agent Frameworks: Begin prototyping internal tasks using existing agent frameworks (like AutoGen) to stress-test the current limits of planning and error correction, preparing for the leap to more powerful proprietary models.
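
As a starting point for the memory recommendation above, the following sketch shows the core of a vector-based memory: store text alongside an embedding and recall the closest entries by cosine similarity. The three-dimensional embeddings are made up by hand for the example; a real system would obtain them from an embedding model and would typically use a dedicated vector store.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class VectorMemory:
    def __init__(self) -> None:
        self._items = []   # list of (embedding, text) pairs

    def add(self, embedding: list, text: str) -> None:
        self._items.append((embedding, text))

    def recall(self, query: list, k: int = 1) -> list:
        """Return the k stored texts most similar to the query embedding."""
        ranked = sorted(self._items, key=lambda item: cosine(item[0], query), reverse=True)
        return [text for _, text in ranked[:k]]


memory = VectorMemory()
memory.add([1.0, 0.0, 0.0], "Deployment to staging failed on step 4")
memory.add([0.0, 1.0, 0.0], "Budget approved for Q3")
recalled = memory.recall([0.9, 0.1, 0.0])
print(recalled)
```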

For Business Leaders (CTOs & Strategists):

- Audit Workflow Interdependencies: Identify mission-critical, multi-step workflows that are currently brittle or manual. These are the first candidates for LLM OS takeover, offering the highest potential ROI in efficiency gains.

- Re-evaluate Vendor Lock-in Risk: If a single model becomes the OS for your entire operation, the dependency on that vendor (OpenAI, Google, etc.) becomes absolute. Develop a multi-model strategy for critical functions now.

- Invest in AI Governance Early: Since the LLM OS will be executing actions autonomously, governance is paramount. Define clear boundaries, failure protocols, and mandatory human oversight checkpoints for any process touching finance, security, or customer data.
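
The mandatory human oversight checkpoint from the governance item above can be expressed as a simple gate in front of execution. This is a sketch under stated assumptions: the domain labels, the `review` and `execute` functions, and the approval flag are hypothetical, and a production system would record who approved what and when.

```python
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"


# Hypothetical policy covering the sensitive areas named above.
SENSITIVE_DOMAINS = {"finance", "security", "customer_data"}


def review(action: str, domains: set) -> Decision:
    """Route any action touching a sensitive domain to a human checkpoint."""
    if domains & SENSITIVE_DOMAINS:
        return Decision.NEEDS_HUMAN
    return Decision.AUTO_APPROVE


def execute(action: str, domains: set, human_approved: bool = False) -> str:
    decision = review(action, domains)
    if decision is Decision.NEEDS_HUMAN and not human_approved:
        return f"BLOCKED: '{action}' awaits human sign-off"
    return f"EXECUTED: '{action}'"


blocked = execute("issue refund", {"finance"})
allowed = execute("rebuild docs site", {"documentation"})
approved = execute("issue refund", {"finance"}, human_approved=True)
print(blocked, allowed, approved, sep="\n")
```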

Conclusion: The Dawn of the Computational Layer

The evolution of LLMs into OS-like entities is perhaps the most significant technological signal of the current AI cycle. It confirms that foundation models are not merely sophisticated search engines; they are becoming the central computational layer upon which future digital business will run. This shift validates the focus on agentic architecture and pushes the boundaries of what we consider "emergent intelligence." While the technical feasibility is gaining ground, the critical next phase will be proving reliability and ensuring robust governance. The future isn't just about faster processing; it's about smarter direction.