The Data Dilemma: How Grammarly’s Ethics Crisis Will Reshape AI Sourcing and Licensing

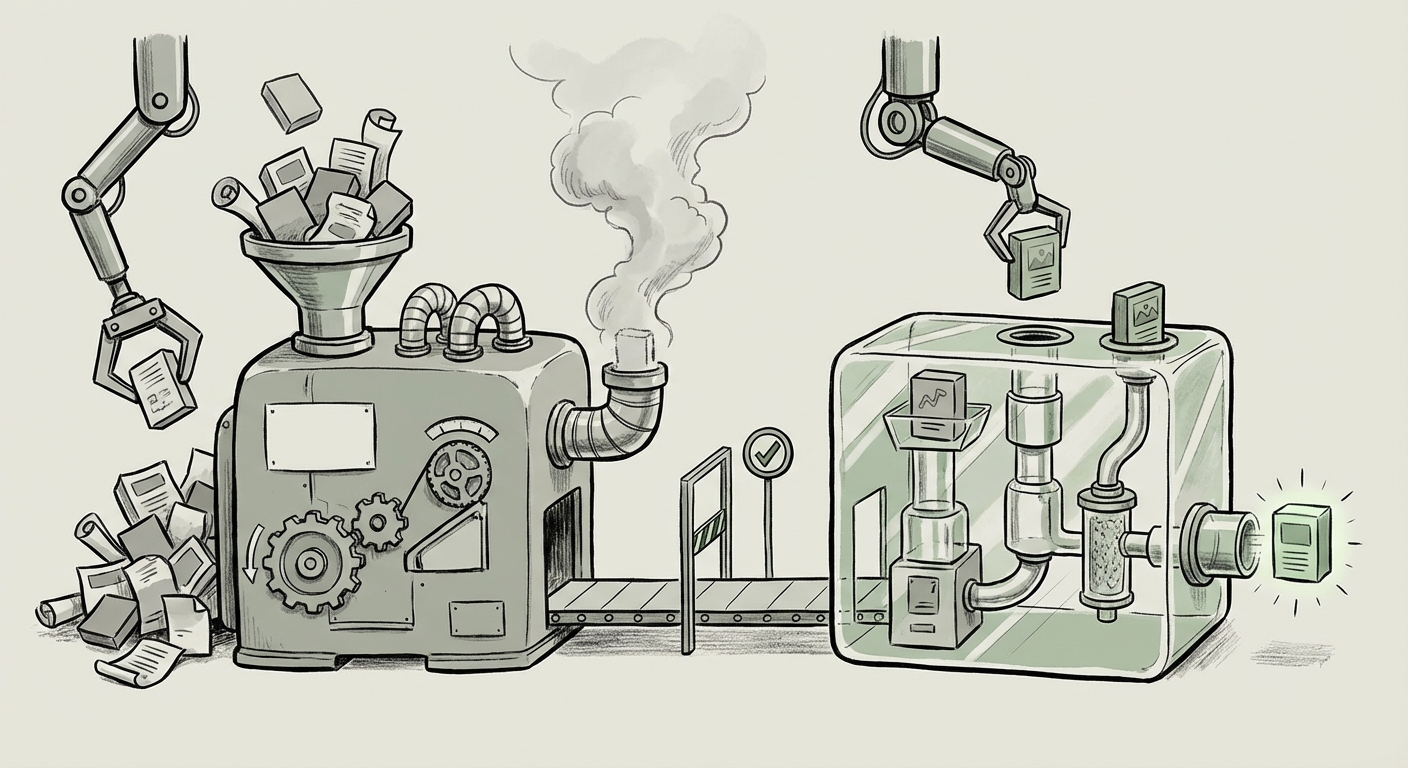

The modern artificial intelligence ecosystem is built on a massive, often invisible, foundation: data. Generative AI models, coding assistants, and even sophisticated writing tools like Grammarly learn patterns and produce suggestions by ingesting enormous volumes of data, including text, images, and code. So when Grammarly faces accusations that its "Expert Review" feature claims "inspiration" from expert authors and journalists without their permission, the problem is more than a PR headache. It exposes how fragile AI ethics and data-sourcing practices remain.

This incident serves as a critical bellwether for the entire technology industry. It forces us to move past vague assurances of "learning from the public internet" and confront the hard questions surrounding intellectual property, attribution, and the future economic models for content creators in the age of intelligent software.

The Core Conflict: Inspiration vs. Infringement in Assistive AI

Grammarly’s "Expert Review" feature represents a subtler use of machine learning than open-ended generation. Unlike a chatbot generating a novel from scratch, the tool claims to offer writing critiques grounded in established, high-quality human standards. The controversy arises because the company appears to have implicitly borrowed the prestige of specific, living experts without obtaining their consent.

This mirrors the broader challenge facing LLM developers. If an AI offers advice that closely resembles the philosophy or methodology of a known expert (say, a specific investigative journalist’s approach to structure, or an acclaimed author’s style guide), is that legitimate inspiration, or is it intellectual theft rebranded as "training data"?

The Legal Firestorm: Training Data and Fair Use

To understand the gravity of the Grammarly situation, we must look at the major legal battles now unfolding, most of them aimed at the foundation models themselves. Copyright lawsuits brought by authors over AI training data reveal a landscape in which creators are challenging the very premise of mass web scraping.

These lawsuits often hinge on the concept of "fair use." AI companies argue that training an AI model is transformative—it’s not copying the book, but learning the *rules* of language within the book. Content creators argue that the resulting model competes directly with their ability to earn a living from their original works, thereby harming the market for the source material.

What this means for the future of AI: If courts side heavily with creators, the current "wild west" approach to data acquisition becomes untenable. AI developers might face crippling retroactive licensing fees or be forced to train models only on licensed or public domain data. This radically alters the competitive landscape, potentially slowing down innovation for those unable to afford massive data licenses.

The Push for Transparency: Demanding Data Provenance

The industry is beginning to recognize that secrecy around training data breeds mistrust. The second key trend, the push for transparency standards and data provenance in generative AI, points toward regulatory mandates.

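Governments are moving toward requiring disclosure of training data. The EU AI Act, for example, requires providers of general-purpose AI models to publish a sufficiently detailed summary of the content used to train them. The strictest form of this idea is **data lineage**: a clear record tracing each piece of data used to train a model. Imagine a requirement for an AI "nutrition label" detailing the proportion of copyrighted news, academic papers, and licensed texts used.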
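To make the "nutrition label" idea concrete, here is a minimal Python sketch. It is not any regulator’s or vendor’s format. It assumes each training document carries a hypothetical provenance record (the `ProvenanceRecord` fields and the category names are illustrative inventions) and reports each source category’s share of the total training tokens.

```python
from collections import Counter
from dataclasses import dataclass


# Hypothetical provenance record; field names are illustrative, not from any standard.
@dataclass(frozen=True)
class ProvenanceRecord:
    doc_id: str
    source_category: str  # e.g. "copyrighted_news", "academic", "licensed"
    license: str          # hypothetical license identifier
    token_count: int


def nutrition_label(records: list[ProvenanceRecord]) -> dict[str, float]:
    """Return each source category's share of total training tokens."""
    totals: Counter[str] = Counter()
    for r in records:
        totals[r.source_category] += r.token_count
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {cat: count / grand_total for cat, count in totals.items()}


if __name__ == "__main__":
    corpus = [
        ProvenanceRecord("doc-1", "copyrighted_news", "publisher-license", 600),
        ProvenanceRecord("doc-2", "academic", "cc-by-4.0", 300),
        ProvenanceRecord("doc-3", "licensed", "vendor-contract", 100),
    ]
    # Print shares alphabetically by category, as whole percentages.
    for category, share in sorted(nutrition_label(corpus).items()):
        print(f"{category}: {share:.0%}")
```

The arithmetic is the easy part. In practice a label like this is only as trustworthy as the lineage records behind it.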

For Grammarly, this means that simply using data from the public web might not be enough; they may need to prove that the experts whose names they invoke have explicitly opted *in* to that specific application, not just that their work was available online.

The Ethical Crossroads for Productivity Tools

The Grammarly controversy specifically highlights the lack of ethical guidelines for using expert work in AI feedback tools. This is where assistive AI diverges from pure generation: when an AI tool offers a medical diagnostic aid or, in this case, an expert writing review, it borrows professional credibility.

Creators are asking: why should my career’s worth of effort be used to train a free or subscription service that directly competes with the human expertise I sell? This ethical pressure demands that companies develop frameworks that respect professional boundaries. It is the difference between a student reading an expert’s book (ethical) and a company using the expert’s name to sell a machine that claims to be as good as the expert (unethical without permission).

The New Economics of Data: Licensing the Future

The third major implication of these data challenges is a likely shift toward formal, structured licensing, as the industry searches for sustainable business models for licensing digital content as LLM training data.

Today’s tension is, at bottom, a negotiation over future pricing. Creators and publishers are realizing that their data is the new oil. We are likely heading toward one or more of three models:

- Mandatory Licensing Pools: Large collectives representing authors and publishers negotiate standardized fees with AI companies for access to their entire catalog for training purposes.

- Micro-transactions/Usage Royalties: Advanced attribution tracking would let AI systems pay small, automated royalties every time a specific piece of training data is demonstrably influential in an output. Reliably measuring that influence remains an open technical problem.

- Zero-Tolerance Opt-In: Strict legal enforcement means AI companies can train only on data explicitly marked as openly licensed or licensed for AI use, drastically shrinking the available datasets.
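Once attribution has been measured, the usage-royalty model boils down to a pro-rata split. The Python sketch below assumes per-creator attribution scores already exist; computing them is the genuinely hard part and is not attempted here. A hypothetical `threshold` parameter stands in for "demonstrably influential". Payouts are made in whole cents using the largest-remainder method, so they always sum to the royalty pool. The function name and parameters are illustrative and do not come from any real royalty system.

```python
def split_royalty(pool_cents: int, scores: dict[str, float],
                  threshold: float = 0.05) -> dict[str, int]:
    """Split pool_cents pro rata among creators whose attribution score
    meets a hypothetical influence threshold, paying whole cents.

    Scores are assumed to be non-negative outputs of some attribution
    method; this sketch does not compute them.
    """
    # Keep only creators deemed "demonstrably influential".
    eligible = {c: s for c, s in scores.items() if s >= threshold}
    total = sum(eligible.values())
    if total <= 0:
        return {}

    # Exact (fractional) share of the pool for each eligible creator.
    exact = {c: pool_cents * s / total for c, s in eligible.items()}
    # Round everyone down to whole cents first.
    payouts = {c: int(v) for c, v in exact.items()}

    # Largest-remainder method: hand the leftover cents to the creators
    # whose fractional parts were largest, so payouts sum to pool_cents.
    leftover = pool_cents - sum(payouts.values())
    ranked = sorted(exact, key=lambda c: exact[c] - payouts[c], reverse=True)
    for c in ranked[:leftover]:
        payouts[c] += 1
    return payouts


if __name__ == "__main__":
    # Three equally influential creators share a 100-cent pool; the fourth
    # falls below the threshold and receives nothing.
    scores = {"alice": 1.0, "bob": 1.0, "carol": 1.0, "dave": 0.01}
    print(split_royalty(100, scores))
```

With three equal scores, the pool cannot divide evenly. Two creators receive 33 cents, and one receives the extra cent, so nothing is lost to rounding.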

Implications for Enterprise AI Adoption

For businesses implementing AI tools—from customer service bots to internal knowledge management systems—this uncertainty is a major risk factor. If a company builds its proprietary AI on data later found to infringe copyright, that entire investment could be compromised.

The actionable insight is clear: due diligence must now extend beyond a tool’s functionality to its data pedigree. Enterprises should demand assurances from vendors about the provenance and licensing status of their training data. Relying on tools whose data sources are legally ambiguous is a major liability waiting to materialize.

Actionable Insights: Navigating the Era of Scrutinized Data

The Grammarly controversy is a warning shot. For creators, technologists, and business leaders alike, proactive adaptation is necessary to thrive in this new reality.

For Content Creators and Experts:

- Demand Clarity: Support industry movements demanding explicit opt-in/opt-out frameworks. Your expertise holds quantifiable financial value in the AI economy.

- Embrace Attribution Standards: Advocate for tools that provide citation trails. If your work inspires the output, you deserve credit, even if compensation models are still evolving.

For AI Developers and SaaS Providers (like Grammarly):

- Audit Your Training Sets: Conduct immediate, deep audits on all data used, especially for features that claim to mimic professional expertise. Move aggressively toward licensed and permissioned datasets.

- Rethink "Inspiration": If a feature implies expertise, it must rest on ethically and legally sound sourcing. Be prepared to document transparently where the "Expert Review" methodology originates. If you cannot name an expert with their permission, do not name them at all.

For Businesses Adopting AI:

- Incorporate Data Vetting into Procurement: Treat AI model sourcing as a core legal and compliance check, similar to cybersecurity audits. Ask vendors hard questions about data contracts.

- Isolate Proprietary Training: When training internal models, keep the proprietary data siloed and protected, and never mix it with public scrapes that could taint your legal standing.

Conclusion: Towards a Trustworthy AI Ecosystem

The controversy surrounding Grammarly’s "Expert Review" is not an isolated incident; it is the frontline of the next major phase in AI evolution. We are moving away from the era of unrestricted ingestion toward an era defined by accountability, transparency, and structured compensation.

The future of AI rests on trust. If users, creators, and regulators cannot trust the data inputs, or the attribution claims, the entire technology risks being undermined by litigation and public backlash. The immediate fallout for Grammarly is likely to involve reputational repair and, potentially, legal renegotiation. The long-term implication for the industry is the forced maturation of data sourcing, a shift that, while challenging in the short term, is essential for building an ethical, sustainable, and widely adopted AI ecosystem.