The Expertise Extraction Crisis: How Grammarly's AI Controversy Redefines Data Rights and Generative Trust

In the fast-moving world of Artificial Intelligence, technological capability often races far ahead of ethical and legal frameworks. This dynamic has created fertile ground for conflict, recently illustrated most clearly by the accusations leveled against Grammarly regarding its "Expert Review" AI feature.

The core allegation is simple, yet profound: Grammarly allegedly drew inspiration from respected journalists and authors, or outright used their names, to lend credibility to its AI suggestions without obtaining their permission or offering compensation. For the AI industry, this is not just a public relations misstep; it is a flashing red light indicating a fundamental breakdown in the relationship between AI developers and the human expertise that powers their models.

The Unseen Cost of Training Data: Beyond Copyright Lines

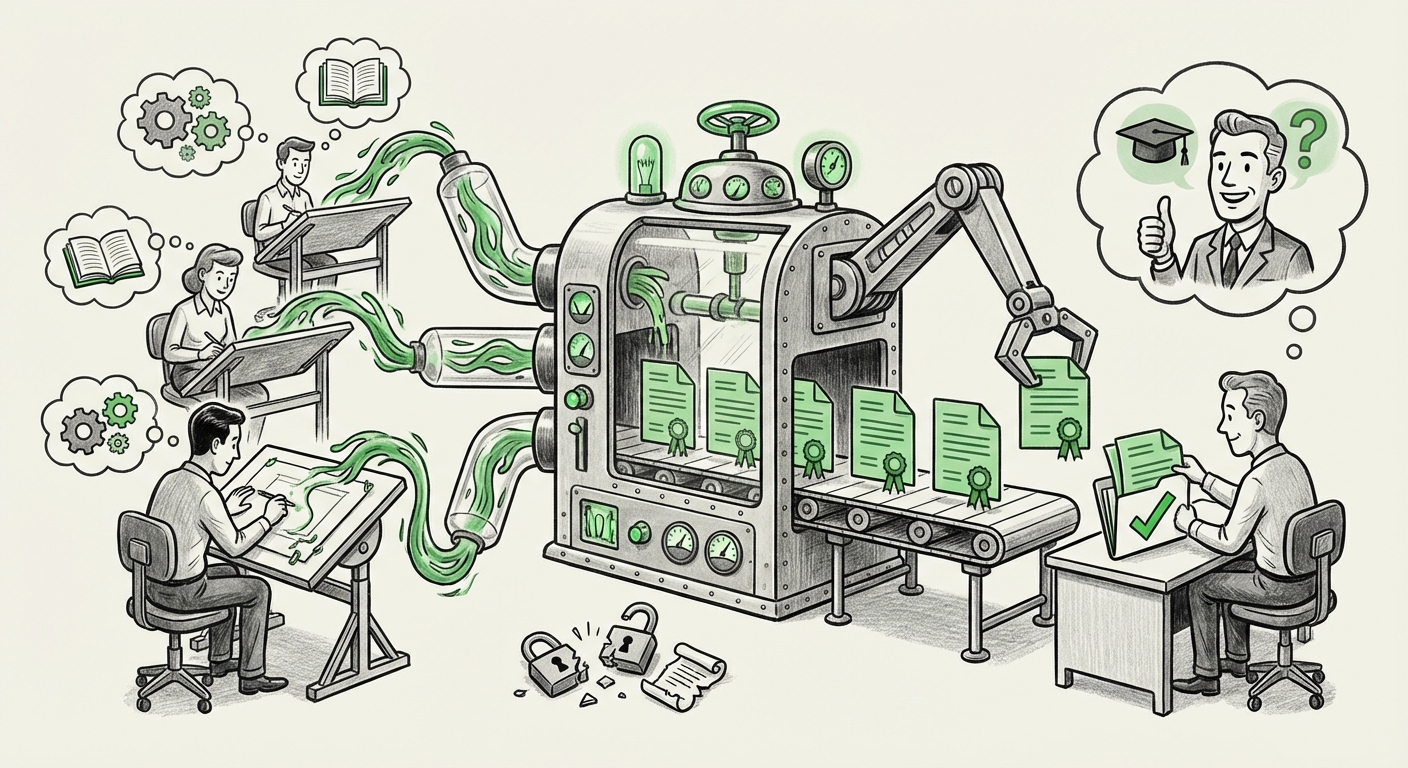

Large Language Models (LLMs), the engines behind tools like Grammarly’s advanced features, do not learn in a vacuum. They are trained on vast quantities of text, much of it scraped from the public internet. This process is highly effective for teaching grammar, style, and tone, but it inherently blurs the lines between public information and proprietary intellectual property. Grammarly’s move appears to cross a critical ethical threshold: moving from learning *style* from data to claiming *authority* from specific, named individuals.

This situation highlights a deep tension that we, as AI analysts, must focus on: The commercialization of borrowed expertise.

If an AI feature claims to offer "expert advice" based on mimicking the style of five specific Pulitzer Prize-winning authors, those authors have effectively become unpaid, non-consenting validators for a commercial product. This moves the debate past traditional copyright infringement (copying the work itself) and into the realm of personality rights and intellectual endorsements.

The Systemic Problem: Scraping and the Lack of Consent

The Grammarly incident is not an anomaly; it is a symptom of a wider industry practice. Our analysis of related trends shows that many major AI platforms operate on the assumption that publicly accessible data is fair game for training. This has spurred significant backlash and growing scrutiny of the data-scraping and opt-out policies of major generative AI platforms.

The reality facing content creators is the "Silent Squeeze." Their life’s work, posted online, is used to build multi-billion dollar models, and they often have no meaningful recourse. As articles detailing creator pushback suggest, many writers and publishers are now demanding that platforms implement clear, accessible, and *enforceable* opt-out mechanisms for training data.

For businesses relying on AI tools, this ambiguity is a liability. If the foundation of the tool is legally contentious, that risk rolls downhill to the enterprise user.

The Legal Battlefield: Following the Precedent of Major Lawsuits

To understand the potential future implications for Grammarly and its peers, we must look to the highest-profile legal battles currently underway. The progress of the New York Times lawsuit against OpenAI reveals the central legal argument that will shape the next decade of AI development.

The NYT complaint alleges that OpenAI and Microsoft infringed its copyrights by training models on its articles without permission, and that those models can generate text substantially similar to the copyrighted source material. While Grammarly’s "Expert Review" may not copy entire articles, a parallel question remains: Is using the *essence* of recognized expertise, even without direct copying, a violation of implied terms of use?

The legal concept of "Fair Use" is being stretched thin. Traditionally, fair use allows limited use for commentary, criticism, or scholarship. It is highly debatable whether using the names of experts to promote a commercial editing tool qualifies for this protection, particularly since fair use is a defense to copyright claims rather than to claims over the commercial use of a person's name. If courts rule restrictively against the AI developers in these major cases, the profitability and methodology of tools like Grammarly’s feature will need complete overhauls, requiring licensing deals or entirely new, internally sourced training sets.

Actionable Insight for Legal and IP Teams:

Businesses must audit their AI usage. If your internal tools or vendor solutions leverage third-party models, you need clarity on:

- The geographic region where the training data was sourced.

- The specific consent mechanisms (if any) provided to data contributors.

- Indemnification clauses from vendors covering IP infringement related to model outputs.
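
One way to keep such an audit consistent across vendors is to record the three answers in a small structure and flag whatever is still missing. The sketch below is only an illustration: the `VendorAudit` schema, its field names, and the vendor name are hypothetical, not part of any standard or of any vendor's documentation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VendorAudit:
    """Answers to the three audit questions for one AI vendor (hypothetical schema)."""
    vendor: str
    # Regions where the training data was sourced; None if the vendor has not said.
    data_source_regions: Optional[List[str]] = None
    # Consent mechanism offered to data contributors, e.g. "licensed" or "opt-out".
    consent_mechanism: Optional[str] = None
    # Whether the contract indemnifies you against IP claims on model outputs.
    ip_indemnification: Optional[bool] = None


def open_questions(audit: VendorAudit) -> List[str]:
    """Return the audit items that are unanswered or unsatisfactory."""
    gaps = []
    if not audit.data_source_regions:
        gaps.append("training data regions unknown")
    if not audit.consent_mechanism:
        gaps.append("no documented consent mechanism")
    if audit.ip_indemnification is not True:
        gaps.append("no IP indemnification for model outputs")
    return gaps


# A vendor that has only disclosed where its data came from.
audit = VendorAudit(vendor="ExampleVendor", data_source_regions=["EU", "US"])
print(open_questions(audit))
```

An empty list from `open_questions` means all three items have a documented answer; it says nothing about whether those answers are legally sufficient, which remains a question for counsel.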

The Peril of Simulated Authority: Liability and Consumer Trust

Perhaps the most immediate concern for users is the introduction of features that mimic human authority. The development trend is clear: AI is moving beyond simple auto-complete to specialized, advisory roles. This raises the question of what happens when AI provides professional guidance, and who answers for the liability and accuracy of AI "expert review" features.

When Grammarly suggests a writing change based on the "expertise" of an author who never agreed to endorse the suggestion, two things happen:

- Trust Inflation: Users place undue faith in the advice because it appears sanctioned by a credible source, even if it is just an algorithmic shadow.

- Liability Diffusion: If the AI advice is poor, misleading, or introduces bias, who is responsible? Is it Grammarly for misattributing expertise, or the original expert whose work was used as training data?

For the general consumer, this is easily explained: Imagine asking a doctor for advice, and they say, "Well, Dr. Smith would suggest this," even though Dr. Smith is alive, well, and has never spoken to you or the doctor offering the advice. It feels manipulative.

This focus on simulated authority will lead to a consumer reckoning. Trust is the currency of the digital economy. If consumers feel that AI tools are cheapening or misappropriating genuine human skill, adoption rates for those specific features will plummet, regardless of the underlying technology’s brilliance.

The Future Trajectory: From Scraping to Sourcing

What does this mean for the *future* of AI tools? The trend observed in the Grammarly case forces a necessary pivot away from uncontrolled mass scraping toward controlled, compensated data sourcing.

1. The Rise of Licensed Datasets

We will see a significant increase in companies explicitly licensing high-quality, ethically sourced data for training. This might involve direct partnerships with publishing houses, universities, and professional guilds. While this makes training models more expensive, it builds a legally defensible and ethically robust foundation.

This signals a shift for AI developers: Quality over sheer quantity. A smaller, fully licensed dataset, where every author and expert has consented, will become more valuable than a massive, legally dubious scrape of the entire web.

2. Mandatory Provenance and Watermarking

Regulators globally, pushed by incidents like this, will likely mandate data provenance tracking. Future LLMs may need to provide an auditable trail showing where specific stylistic influences or knowledge bases were derived. Furthermore, outputs might require clear digital watermarking indicating that the content was AI-generated or AI-assisted, addressing the shift from citation to simulation head-on.
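
To make the idea of an auditable trail concrete, here is a minimal sketch of a provenance record for a single training document. It assumes a hypothetical schema (`ProvenanceRecord` and its fields are illustrative, as are the example URL and author) and covers only source-side provenance: a content hash tied to authorship, license, and consent. It does not implement output watermarking, which is a separate technique.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    """Provenance for one training document (hypothetical schema)."""
    source_url: str
    author: str
    license: str
    consent: bool
    sha256: str  # hash of the exact text that entered the training set


def make_record(text: str, source_url: str, author: str,
                license: str, consent: bool) -> ProvenanceRecord:
    """Hash the document text and bind it to its source metadata."""
    digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
    return ProvenanceRecord(source_url, author, license, consent, digest)


def verify(record: ProvenanceRecord, text: str) -> bool:
    """Check that a text is byte-for-byte the one the record describes."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest() == record.sha256


record = make_record(
    "An essay on style.",
    source_url="https://example.com/essay",  # placeholder URL
    author="A. Writer",                      # placeholder author
    license="licensed",
    consent=True,
)
print(json.dumps(asdict(record), indent=2))
```

A log of such records lets an auditor confirm which documents were used and under what terms. Tracing a specific stylistic influence in a model's output back to a document is a much harder problem that this sketch does not attempt.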

3. The Democratization of Opt-Out

If the industry does not build robust opt-out systems through self-regulation (as seen in discussions around "Major Tech Players Draft New Guidelines"), governments will impose them. We can expect standardized protocols, possibly managed through decentralized registries, where creators can permanently flag their work as ineligible for commercial model training without requiring complex legal action.
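
The closest widely deployed mechanism today is `robots.txt`, where a site can disallow specific crawlers by user-agent token. The sketch below shows how a well-behaved collector might check that signal before fetching a page, using Python's standard `urllib.robotparser`. The `may_collect` helper, the sample rules, and the URLs are illustrative assumptions; `GPTBot` is used as an example of a crawler token that sites can block. Note that `robots.txt` is advisory: it depends on the crawler choosing to honor it, which is exactly the enforceability gap discussed above.

```python
from urllib.robotparser import RobotFileParser

# Sample rules a publisher might serve: block one AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def may_collect(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit this agent to fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in-memory rules, no network access
    return parser.can_fetch(user_agent, url)


page = "https://example.com/essays/1"  # placeholder URL
print(may_collect(ROBOTS_TXT, "GPTBot", page))         # the blocked AI crawler
print(may_collect(ROBOTS_TXT, "SomeSearchBot", page))  # any other crawler
```

A registry-based opt-out of the kind anticipated here would differ mainly in scope: the flag would attach to the work or the creator rather than to a single website's crawl rules.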

Practical Implications for Businesses and Creators

The Grammarly controversy is a wake-up call for two distinct groups:

For Content Creators and Experts:

Actionable Insight: You must actively manage your digital footprint. Assume that anything you publish online *can* be used for training unless explicitly restricted. Start documenting when and where your work is published. Be prepared to join collective action efforts, as individual lawsuits against tech giants are often impractical.

For Enterprise Technology Leaders:

Actionable Insight: Re-evaluate your reliance on "black box" models whose data foundations are opaque. When procuring AI solutions, prioritize vendors who offer transparency regarding their training methodologies. If an AI tool claims to offer specialized insights, demand to know the source of that specialization: was it based on consensual training, or on scraped data?

The future of business AI adoption hinges on demonstrable trustworthiness. If a tool is built on shaky ethical ground, the resulting outputs carry an inherent risk of being unusable in regulated or high-stakes environments.

Conclusion: Trust Must Be Earned, Not Extracted

The incident involving Grammarly’s "Expert Review" feature pulls back the curtain on the often-unexamined assumptions underpinning the generative AI boom. The ability to ingest and mimic human output has been mistaken for the right to commercialize human identity and expertise without permission.

As AI technology matures, its success will depend less on computational power and more on social license. If developers continue to treat human creativity as free fuel for their engines, they risk alienating the very creators whose work makes the AI valuable in the first place. The path forward demands a new social contract for data, one founded on consent, compensation, and accountability. Only then can AI transition from a disruptive force challenging IP to a truly trustworthy partner augmenting human potential.