The Attribution Crisis: Why Grammarly's Expert Review Signals a Tectonic Shift in AI Ethics and Data Rights

The recent report detailing Grammarly’s alleged use of established authors’ and journalists’ names without consent for its "Expert Review" feature is more than just a minor PR stumble; it is a flashing warning light for the entire generative AI ecosystem. This incident acts as a powerful microcosm, forcing us to confront the growing chasm between rapid technological capability and established ethical and legal norms.

As AI writing tools become deeply embedded in professional workflows, the quality of their advice depends heavily on the data they consumed during training. When a major platform appears to borrow the *reputation* of recognized experts to validate its algorithms, the implications for intellectual property, user trust, and the future compensation of human creators are serious. To understand where AI writing tools are heading, we must analyze this development through three critical lenses: the battle over training data rights, the shifting competitive landscape, and the evolution of consent and transparency.

The Data Dilemma: Navigating Copyright, Fair Use, and Reputational Rights

At the heart of the Grammarly issue lies the fundamental question plaguing all large language models (LLMs): How much of the internet can an AI company ingest, analyze, and then repackage for profit without permission?

The Legal Tightrope of Training Data

For years, AI developers have largely relied on the "fair use" defense, arguing that feeding billions of web pages, books, and articles into a model to create derivative knowledge is transformative and thus permissible. However, this defense is under intense judicial scrutiny. Lawsuits filed by major publishers and creator guilds challenge this premise, arguing that the models' output competes directly with the original creators whose work fueled the training set.

In the context of Grammarly’s feature, the alleged violation shifts from the training data itself to the *output presentation*. It is one thing to train an LLM on Shakespeare; it is another to create a tool that claims its suggestions are sourced from the "expertise" of Stephen King or Joan Didion without their blessing. This touches upon **reputational rights**—the intangible value an expert accrues over a career.

For the average user and creator, the implication is that their life’s work, if published online, can be mined, distilled, and then used to lend unearned authority to a commercial product. This erodes the perceived value of authorship.

The Ethics of Borrowed Authority

Moving beyond strict law, the ethical landscape demands clarity on the difference between "inspiration" and unauthorized endorsement. If an AI tool summarizes the documented techniques of a renowned writing coach and then brands those summaries as "Expert Review," it is essentially selling the *brand* of that coach. This kind of misappropriation is harder to prosecute than direct copying but perhaps more damaging to a creator's livelihood.

What this means for the future: We are rapidly moving toward a legal framework where model developers will need to prove a cleaner chain of custody for their training data. Expect increased pressure for **licensed data sets** and potential mandatory royalty structures for creators whose work significantly contributes to commercial AI models.

Industry Reaction and the Race for Trustworthy AI

The marketplace does not tolerate ethical ambiguity for long. The Grammarly controversy creates an immediate competitive opening for rivals who can demonstrably prove their ethical sourcing.

The Transparency Dividend

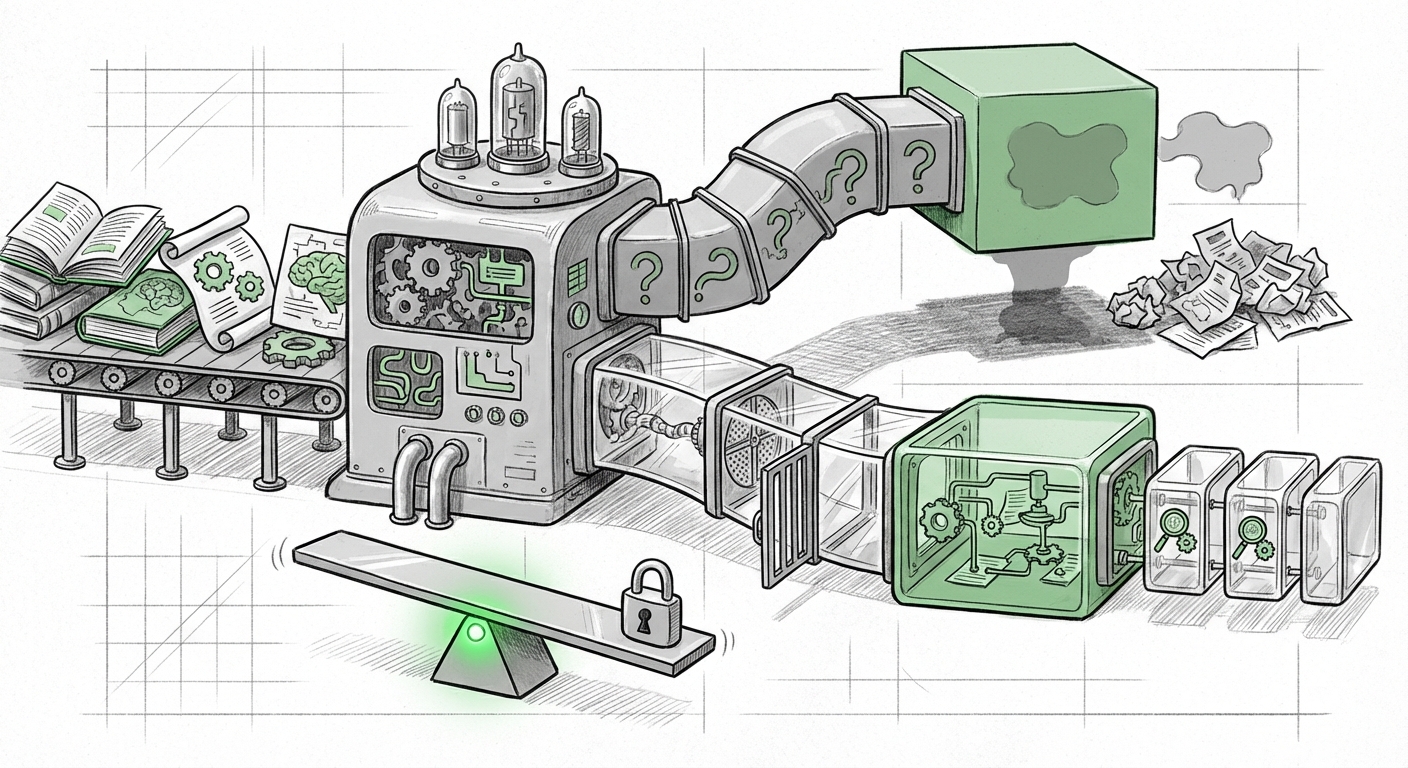

When one major player appears to operate in the gray zone of attribution, competitors immediately pivot their marketing to emphasize trust and transparency. We are seeing a bifurcation in the AI writing tool market:

- The Scrapers: Tools that prioritize massive scale and speed, often accepting higher legal/reputational risk regarding data provenance.

- The Curators: Tools that emphasize clean, licensed, or self-generated data sets, offering buyers certainty about the origin of the advice.

For AI product managers, this signals a critical pivot. Features that add "cool factor" (like naming famous experts) will be outweighed by features that add "trust factor." Users, especially in regulated industries like finance, law, or journalism, will increasingly demand proof that the AI advice they rely on is not based on legally questionable intellectual shortcuts.

Competitor Differentiation

If Grammarly’s core value proposition was previously "better grammar," it may now be tested on "ethics-compliant grammar." Competitors will likely release features that explicitly cite the source of their generalized knowledge, even if that means citing only the corpus itself (e.g., "Derived from a corpus of peer-reviewed academic papers"). This focus on source transparency will become a key differentiator in the saturated AI writing space.

The Evolution of Consent: From Opt-Out to Opt-In

The most enduring implication of these controversies is the necessary overhaul of how AI platforms manage user consent regarding data ingestion.

The Failure of Passive Consent

The current default mode, where anything posted publicly is assumed fair game unless explicitly opted out, is proving unsustainable when high-value professional reputation is on the line. The burden is shifting from creators to the companies that ingest their work. Experts do not want to constantly police the internet to ensure their work isn't being used to train their competition.

The future standard must lean toward proactive consent. We are seeing emerging solutions designed to address this:

- Data Labeling & Metadata: Implementing standardized metadata tags that clearly mark content as "Do Not Train" or "Licensed for Commercial LLM Training Only."

- Platform-Level Agreements: Major content platforms (like Reddit or certain news aggregators) are beginning to negotiate explicit licensing deals with AI firms, effectively putting a price on data use and replacing implicit scraping with explicit contracts, even if individual contributors are not always asked directly.

- Granular Control: AI tool providers may soon need to offer granular control panels allowing users to determine which specific types of data (e.g., blog posts vs. internal documents) can be used for model refinement.
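
As a rough illustration of how the tagging and granular-control ideas above might combine, here is a minimal Python sketch of an opt-in filter. Everything in it is an assumption made for illustration: the tag values, the `Document` fields, and the `may_train_on` helper are hypothetical and do not correspond to any existing metadata standard or vendor API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tag values; no real metadata standard is implied.
DO_NOT_TRAIN = "do-not-train"
LICENSED = "licensed-commercial-training"


@dataclass
class Document:
    url: str
    content_type: str                    # e.g. "blog_post" or "internal_document"
    training_tag: Optional[str] = None   # None means the creator set no tag


def may_train_on(doc: Document, allowed_types: set[str]) -> bool:
    """Opt-in rule: only explicitly licensed content of an allowed type passes."""
    if doc.training_tag != LICENSED:
        # Untagged and "do-not-train" content are both excluded by default.
        return False
    return doc.content_type in allowed_types


docs = [
    Document("https://example.com/a", "blog_post", LICENSED),
    Document("https://example.com/b", "blog_post", DO_NOT_TRAIN),
    Document("https://example.com/c", "internal_document", LICENSED),
    Document("https://example.com/d", "blog_post"),  # no tag at all
]

# The user has allowed only blog posts to be used for model refinement.
eligible = [d.url for d in docs if may_train_on(d, {"blog_post"})]
print(eligible)
```

The design choice worth noticing is the default: content with no tag is treated the same as content marked "do not train," which is what distinguishes an opt-in regime from an opt-out one.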

For enterprise technology leaders, this means that adopting new AI services without a clear contractual clause regarding data provenance and indemnity against copyright claims is now a significant business risk. You must know what your tools are learning from, and what they are allowed to teach.

Practical Implications and Actionable Insights

The Grammarly incident is a necessary, if uncomfortable, catalyst forcing the entire industry to mature rapidly. Here is what businesses and creators must do to navigate this new reality:

For AI Developers and Product Teams:

- Audit Your Provenance: Immediately review the sources used to train any feature that claims to draw upon "expert knowledge." If the expertise is tied to living, identifiable individuals, ensure explicit licensing or consent is obtained.

- Prioritize Explainability over Bluster: Stop using vague terms like "inspired by." If a feature relies on external knowledge, build in attribution mechanisms, even if it means showing less data. Trust outweighs flash.

- Implement Clear Opt-Out/Opt-In: If you use user-generated content to fine-tune models, make the consent mechanism explicit, easy to find, and legally sound.
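
The provenance audit in the first item above could start as something as simple as the following Python sketch. It assumes a team keeps a record, per feature, of which sources it claims to draw on and whether consent is on file; the `ExpertSource` and `Feature` types, the `missing_consent` helper, and the placeholder names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ExpertSource:
    name: str
    identifiable_person: bool   # tied to a living, identifiable individual
    consent_on_file: bool       # explicit license or consent has been recorded


@dataclass
class Feature:
    name: str
    sources: list[ExpertSource] = field(default_factory=list)


def missing_consent(feature: Feature) -> list[str]:
    """Return the identifiable experts a feature relies on without recorded consent."""
    return [
        s.name
        for s in feature.sources
        if s.identifiable_person and not s.consent_on_file
    ]


# Placeholder data; the names are deliberately generic.
feature = Feature(
    "expert_review_demo",
    [
        ExpertSource("Expert A", identifiable_person=True, consent_on_file=True),
        ExpertSource("Expert B", identifiable_person=True, consent_on_file=False),
        ExpertSource("General style corpus", identifiable_person=False, consent_on_file=False),
    ],
)

flagged = missing_consent(feature)
print(flagged)
```

A real audit would also need to verify that the consent records themselves are valid and current; this sketch only shows the shape of the check.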

For Content Creators and Businesses:

- Review Terms of Service (ToS): Scrutinize the ToS of every AI tool you integrate. Pay close attention to clauses regarding model refinement and data ownership. If the terms do not explicitly limit how your content may be used, assume you risk losing control over your intellectual property.

- Demand Transparency: When evaluating new AI writing software, make data sourcing and attribution policies a mandatory checkpoint alongside feature sets. This is now a key component of supply chain risk management.

- Defend Your Brand Equity: If you are an expert, be vigilant. If you see your reputation leveraged to sell a feature you did not approve, act swiftly. The precedent set by public pushback today will shape the laws of tomorrow.

Conclusion: The Age of Accountable Intelligence

The era of "move fast and break things" is colliding head-on with the established rights of human creativity and intellectual property. The Grammarly controversy is a sharp reminder that AI models are not abstract mathematical entities; they are complex constructs built from the aggregated, hard-won knowledge of millions of people. Commercializing features that rely on borrowed authority without attribution is not just poor practice; it is an unsustainable business strategy in an increasingly litigious and ethically aware digital landscape.

The future of successful AI writing tools will not belong to those that ingest the most data indiscriminately, but to those that can master the art of **accountable intelligence**: transparent sourcing, explicit consent, and genuine respect for the human experts who made the technology possible in the first place. This shift from unchecked scraping to deliberate, ethical curation will define the next wave of innovation, separating the fleeting novelties from the enduring, trustworthy platforms.