The Attribution Crisis: Why Grammarly’s Expert Review Scandal Signals the End of "Free Lunch" AI Training Data

The speed at which Artificial Intelligence (AI) products integrate into our daily workflows is breathtaking. Tools that were novelties just a year ago are now indispensable partners for writing, coding, and analysis. However, beneath the seamless surface of these powerful applications, critical fault lines are beginning to show, centered on one fundamental question: Where does the intelligence come from, and who owns the right to profit from it?

The recent controversy involving Grammarly, where its "Expert Review" feature reportedly claimed inspiration from, or implicitly cited, prominent authors and journalists who never consented to participate, is far more than a simple customer service issue. It is a stark illustration of the ethical and legal time bomb embedded within the current wave of generative AI development. From an AI technology analyst's perspective, this incident is a crucial inflection point, signaling the necessary evolution of data provenance, intellectual property rights, and consumer trust in the digital age.

The Core Incident: Deceptive Authority in AI Advice

Grammarly’s value proposition has always been about improving communication. The supposed innovation, "Expert Review," suggested that the AI could tailor advice based on the recognized styles or wisdom of leading figures in specific fields. The issue, as reported, is that the source material or the right to use those experts' names for this feature was never secured. In essence, the tool leveraged established credibility without permission to enhance its perceived value.

For a layperson, this might sound like a minor oversight. For a technologist or business strategist, it screams of a systemic failure in data governance. It’s not just about using copyrighted text; it's about the appropriation of identity and reputation.

The Attribution Fallacy: Misrepresenting Expertise

This leads directly to one of the most profound ethical challenges in modern AI: the Attribution Fallacy. We expect AI to synthesize knowledge, but when it presents synthesized knowledge as coming *from* a known expert, it fundamentally misleads the user. Think of it this way: if a student turns in an essay and claims that a famous professor wrote the concluding paragraph, that is false attribution, borrowing the professor's authority without consent. When an AI tool does this implicitly via its feature labeling, it damages the creator's brand and deceives the consumer.

This is precisely why bias and attribution in AI content generation are a critical area of focus. The industry must develop ironclad standards to distinguish between generalized learned patterns (which developers defend under current fair use arguments) and the direct, proprietary representation of an individual’s unique contribution or style.

The Legal Tsunami: Scraping and Intellectual Property

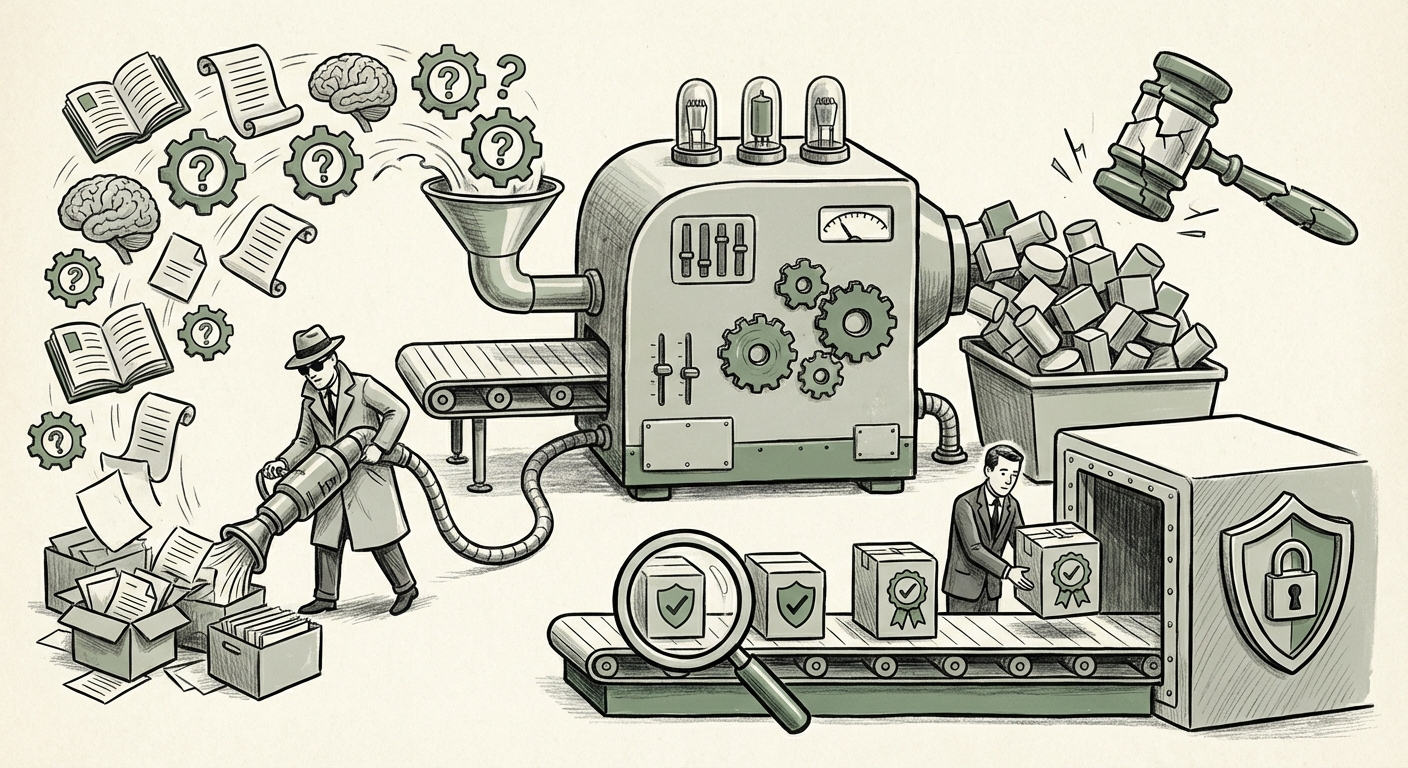

The Grammarly event is a small ripple preceding a much larger wave of legal reckoning currently forming in global courts. The entire foundation of current Large Language Models (LLMs) rests on the mass, often unchecked, ingestion of publicly available data—the vast majority of which is copyrighted material created by journalists, authors, artists, and programmers.

The immediate parallel is the growing number of high-profile lawsuits against major generative AI developers. These cases center on the scraping of training data for generative AI.

The implications for companies like Grammarly—who build specialized models fine-tuned on massive datasets—are profound:

- The "Fair Use" Debate Narrows: While early arguments suggested scraping was transformative, the rise of tools that directly compete with the original creators (e.g., an AI summarizing news articles vs. the news site itself) is challenging the legal concept of "fair use." If the output is deemed substitutive rather than transformative, the legal liability skyrockets.

- The Cost of Content Creation Rises: If companies are forced to license every piece of content used for model training, the cost structure of building frontier AI models will radically change. The era of "free lunch" data acquisition is drawing to a close, shifting investment toward proprietary, licensed libraries.

For legal professionals and corporate counsel, the message is clear: If your AI product’s differentiation relies on training data scraped without explicit licensing agreements, you are exposed to significant financial and operational risk. The precedent being set by creators fighting for their IP is actively defining the boundaries of acceptable AI development.

The Technological Pivot: The Race for Data Provenance

If the current model of data ingestion is legally and ethically tenuous, where does the industry go next? The focus must shift to model fine-tuning practices built on data provenance. Provenance means knowing the complete history of a piece of data: where it came from, who touched it, and under what license it was used.
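As a rough illustration of that definition, a provenance record can be modeled as a small data structure holding the source, the license, and an ordered custody trail. This is a minimal sketch, not any vendor's actual schema: the names `ProvenanceRecord`, `CustodyEvent`, and their fields are hypothetical, chosen only to mirror the three questions above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class CustodyEvent:
    """One step in the data's history (hypothetical structure)."""
    actor: str      # who touched the data
    action: str     # what they did with it
    timestamp: str  # ISO 8601 string, kept as text for simplicity


@dataclass
class ProvenanceRecord:
    """Answers: where did it come from, who touched it, under what license."""
    item_id: str
    source_url: str
    license_id: str
    custody: List[CustodyEvent] = field(default_factory=list)

    def record(self, actor: str, action: str, timestamp: str) -> None:
        # Append-only: history is added to, never rewritten.
        self.custody.append(CustodyEvent(actor, action, timestamp))

    def handlers(self) -> List[str]:
        # Distinct actors in the order they first touched the data.
        seen: List[str] = []
        for event in self.custody:
            if event.actor not in seen:
                seen.append(event.actor)
        return seen


# Illustrative usage with made-up values.
rec = ProvenanceRecord("doc-1", "https://example.com/article", "CC-BY-4.0")
rec.record("crawler", "collected", "2024-01-01T00:00:00Z")
rec.record("cleaning-job", "deduplicated", "2024-01-02T00:00:00Z")
print(rec.handlers())
```

Keeping the custody trail append-only is a deliberate choice in this sketch: an audit is only as useful as the history is hard to rewrite.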

From Black Box to Transparent Supply Chain

For developers, this means moving away from monolithic, opaque datasets toward verifiable, auditable data pipelines. This involves embracing technologies such as:

- Data Watermarking and Fingerprinting: Techniques that allow the origin of training data to be traced, even after the model has absorbed it.

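- Synthetic Data Generation: Creating high-quality, statistically accurate data that has no direct link to existing copyrighted material, which can reduce IP exposure, although synthetic data produced by a model that was itself trained on copyrighted material may inherit some of that risk.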

- Licensing Marketplaces: The development of robust, standardized exchanges where content creators can safely license their data for AI consumption, complete with usage rights and remuneration models.
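The simplest form of the fingerprinting idea in the list above can be sketched with a content hash. This is only an assumption-laden illustration: it covers exact-match lookup at ingestion time (after light normalization), not tracing data once a model has absorbed it, which remains a much harder problem. The `FingerprintRegistry` class and the origin labels are hypothetical.

```python
import hashlib
import re
from typing import Dict, Optional


def fingerprint(text: str) -> str:
    """SHA-256 of the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class FingerprintRegistry:
    """Maps content fingerprints to a declared origin (hypothetical helper)."""

    def __init__(self) -> None:
        self._origins: Dict[str, str] = {}

    def register(self, text: str, origin: str) -> str:
        fp = fingerprint(text)
        # The first registrant keeps the claim; later duplicates do not overwrite it.
        self._origins.setdefault(fp, origin)
        return fp

    def lookup(self, text: str) -> Optional[str]:
        # Returns the registered origin, or None if the text is unknown.
        return self._origins.get(fingerprint(text))


# Illustrative usage with made-up content.
registry = FingerprintRegistry()
registry.register("An  original   essay.", "author:example-writer")
print(registry.lookup("an original essay."))
print(registry.lookup("Something else entirely."))
```

A cryptographic hash only matches text that is identical after normalization; detecting paraphrased or partially copied passages would need fuzzy techniques that this sketch does not attempt.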

This technological pivot is not just about compliance; it’s about future performance. Models trained on highly curated, ethically sourced, and diverse datasets are likely to be more robust, less prone to adversarial attacks, and, crucially, more trusted by enterprise clients who face their own supply chain scrutiny.

The Ultimate Implication: Consumer Trust as the New Moat

Ultimately, every legal challenge and ethical misstep erodes the fragile consumer trust built around AI adoption. AI writing assistants are a case in point.

Why should a professional rely on Grammarly, or any similar tool, for critical communication if they suspect the underlying advice is either fabricated or stolen from uncredited sources? Trust is the currency of productivity software. If the tool is perceived as unethical, users will seek alternatives, regardless of how fast or feature-rich the current version is.

For businesses leveraging these tools, this means two things:

- Internal Auditing: Enterprises must begin auditing the AI tools they deploy. If an AI tool cannot provide clear documentation on its data sources, it represents an unacceptable liability risk in terms of IP exposure and potential brand damage from association with unethical practices.

- Marketing Authenticity: Marketing teams must shift their AI narratives away from vague claims of "intelligence" toward demonstrable commitments to ethics and transparency. Authenticity, even if it means slower feature rollouts, will win out over deception in the long run.

Actionable Insights: Navigating the New AI Landscape

The Grammarly incident is a loud, clear warning shot. The era of assuming that "publicly available" equals "free to use for commercial AI training" is ending. Here are the actionable takeaways for technology leaders and strategists:

1. Mandate Data Lineage Reports

For every specialized model or fine-tuning process, demand clear documentation on data acquisition. If the data is proprietary, ensure licenses are current and cover the scope of the model’s intended use (including competitive uses). For generalized models, pressure vendors to provide evidence of robust data filtering against known copyrighted sources.
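A lineage report of this kind can be checked mechanically. The sketch below assumes a hypothetical report format in which each dataset entry lists a license identifier, the uses that license permits, and an optional expiry date; the `LineageEntry` type, the `audit` function, and the use labels are illustrative, not an established standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class LineageEntry:
    """One dataset line in a lineage report (hypothetical format)."""
    dataset: str
    license_id: Optional[str]        # None means no license on record
    permitted_uses: FrozenSet[str]   # e.g. {"research", "commercial-training"}
    expires: Optional[date] = None   # None means no expiry


def audit(entries: List[LineageEntry], intended_use: str, today: date) -> List[str]:
    """Return one finding per entry that fails a check; empty list means clean."""
    findings: List[str] = []
    for e in entries:
        if e.license_id is None:
            findings.append(f"{e.dataset}: no license on record")
        elif e.expires is not None and e.expires < today:
            findings.append(f"{e.dataset}: license expired on {e.expires.isoformat()}")
        elif intended_use not in e.permitted_uses:
            findings.append(f"{e.dataset}: license does not cover '{intended_use}'")
    return findings


# Illustrative usage with made-up entries.
report = [
    LineageEntry("licensed-news", "vendor-agreement-1",
                 frozenset({"commercial-training"}), date(2030, 1, 1)),
    LineageEntry("scraped-forum", None, frozenset()),
    LineageEntry("academic-corpus", "research-only", frozenset({"research"})),
]
for finding in audit(report, "commercial-training", date(2025, 1, 1)):
    print(finding)
```

The check for scope mirrors the point above: a current license is not enough if it does not cover the model's intended use.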

2. Prepare for Liability Shifting

Expect regulators globally, following the example of the EU AI Act, to demand greater accountability for AI outputs. Companies utilizing these tools must treat them as high-risk software until proven otherwise, especially when the output affects professional judgment or communication.

3. Reinvest in Human Expertise (The 'Expert' Part)

The value proposition that Grammarly attempted to mimic—expert insight—will return to being highly valued. AI should serve as an *accelerator* for human experts, not a cheap substitute for their identities. Investing in partnerships and licensing agreements that genuinely feature verified human expertise will become a competitive differentiator.

The future of AI is not just about building bigger models; it's about building smarter, more responsible ecosystems around those models. The scaffolding supporting the AI—the data—must be ethically sound, legally compliant, and transparently sourced. Failure to address issues like those seen at Grammarly risks not just fines or bad press, but the complete breakdown of user trust, which is the single most valuable asset in the digital economy.

The industry is now being forced to transition from asking, "What *can* we scrape?" to "What *should* we license?" This shift, while challenging in the short term, promises a more sustainable and trustworthy AI landscape for years to come.