The AI Operating System Revolution: How LLMs Are Gaining Core Control

The recent buzz surrounding advanced models, rumored to be precursors to something like "GPT-5.4," points toward a major shift in artificial intelligence. We are moving rapidly past the era of the reactive chatbot. The next frontier isn't just generating better text; it's about models that can *govern*. The idea now circulating, that these new systems are starting to act like true Operating Systems (OS), is not hyperbole. It is the natural, engineered evolution of AI from a tool to a foundational digital environment.

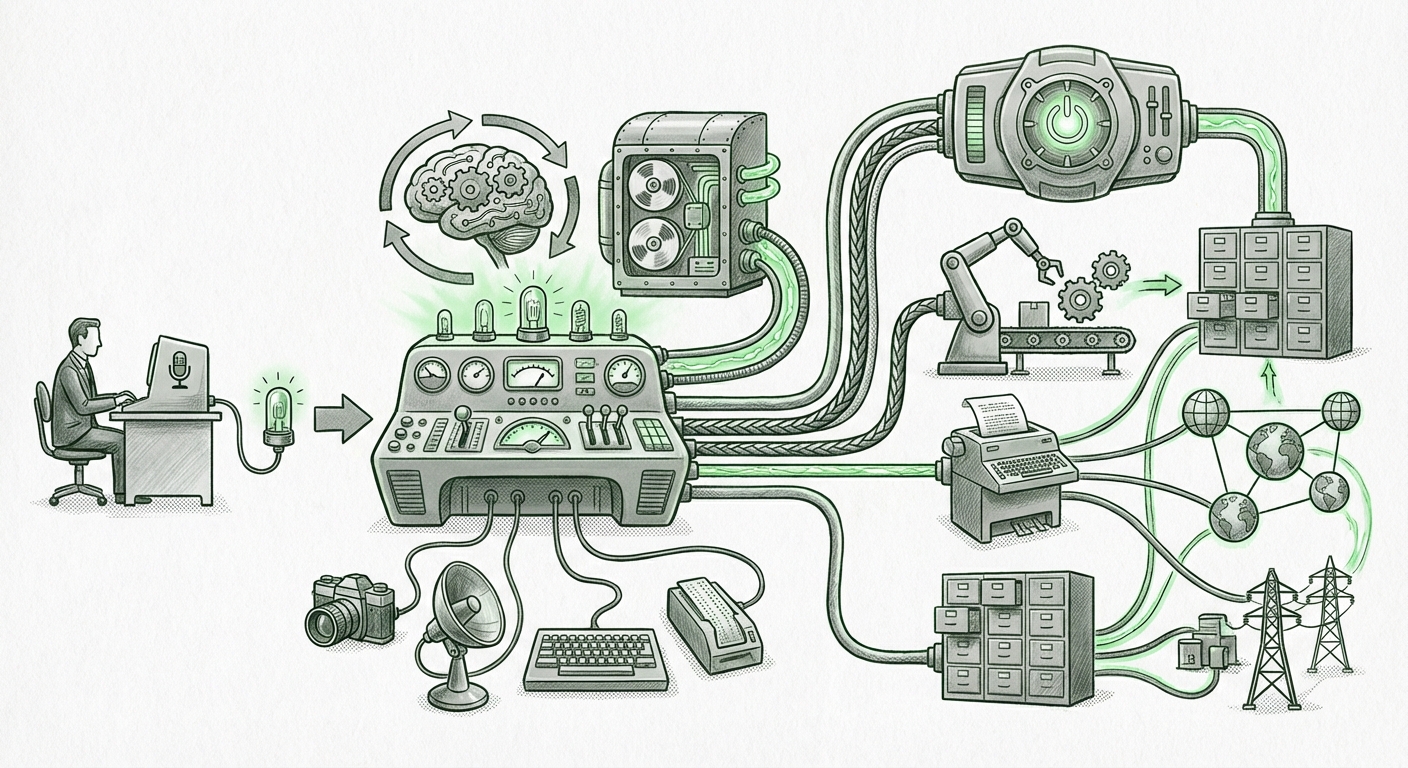

To grasp this, imagine your current computer OS (Windows, macOS, Linux). It handles inputs, manages memory, runs programs simultaneously, and enforces rules. A traditional LLM just answers questions. An AI Operating System (AI-OS), however, implies that the LLM kernel manages complex, multi-step tasks autonomously, coordinating tools, memory, and long-term goals. This transformation requires three critical architectural breakthroughs.

Phase One: Moving Beyond Reactive Answers to Deliberative Action

The initial capabilities of models like GPT-3 were impressive demonstrations of pattern recognition—what cognitive science calls "System 1" thinking: fast, intuitive, and automatic. To manage the complexity of an operating system, AI needs "System 2" capabilities: slow, conscious, and logical planning.

This shift is central to the "AI-OS" narrative. An OS must stop to think about resource allocation, scheduling, and error correction. Researchers are actively developing frameworks to force this deliberation. With **"Plan-and-Execute Architectures,"** developers build scaffolding around the core LLM. This scaffolding forces the model to pause, generate a sequence of steps, execute the first step, evaluate the result, and then replan if necessary.
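The plan, execute, evaluate, replan loop described above can be sketched in a few lines. This is a minimal illustration rather than any particular framework's API: `plan_and_execute`, `toy_planner`, and `make_flaky_executor` are hypothetical names, and the planner and executor are plain functions standing in for an LLM call and a tool call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StepResult:
    step: str
    ok: bool
    output: str


# Assumed signatures: a planner maps (goal, history) to the remaining steps,
# and an executor runs one step and reports whether it succeeded.
Planner = Callable[[str, list], list]
Executor = Callable[[str], StepResult]


def plan_and_execute(goal: str, planner: Planner, executor: Executor,
                     max_replans: int = 3) -> list:
    """Execute a plan one step at a time, replanning after each failed step."""
    history = []
    plan = list(planner(goal, history))
    replans = 0
    while plan:
        result = executor(plan.pop(0))
        history.append(result)
        if result.ok:
            continue
        if replans >= max_replans:
            raise RuntimeError(f"giving up on {result.step!r} after {replans} replans")
        replans += 1
        # Evaluate what happened so far and build a fresh plan from it.
        plan = list(planner(goal, history))
    return history


def toy_planner(goal, history):
    """Stand-in for an LLM planner: return every step not yet completed."""
    steps = ["collect metrics", "draft summary", "schedule meeting"]
    done = {r.step for r in history if r.ok}
    return [s for s in steps if s not in done]


def make_flaky_executor(fail_once_on):
    """Stand-in for tool calls: the named step fails on its first attempt."""
    already_failed = set()

    def executor(step):
        if step == fail_once_on and step not in already_failed:
            already_failed.add(step)
            return StepResult(step, False, "tool timeout")
        return StepResult(step, True, f"done: {step}")

    return executor


history = plan_and_execute("launch the Q3 marketing review process",
                           toy_planner, make_flaky_executor("draft summary"))
for r in history:
    print(r.step, "ok" if r.ok else "FAILED", "-", r.output)
```

In this toy run the "draft summary" step fails once, the planner is asked again with the failure in its history, and the step is retried before the plan continues. A real system would replace both stand-ins with model and tool calls, and would likely replan on more than outright failure.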

For the business user, this means AI moves from being a helpful assistant to a proactive project manager. Instead of asking the AI to "draft an email," you will ask it to "launch the Q3 marketing review process." The AI-OS will then determine which tools (email client, database, calendar scheduler) to access, in what order, and how to handle inevitable failures.

The Infrastructure Challenge: Memory and Persistence

A key limitation of historical LLMs is their short-term memory: the context window. If an OS only remembered the last sentence typed, it would be useless. An AI-OS needs reliable, persistent memory to maintain state across days, weeks, or even years of work.

This necessitates profound infrastructure changes that mirror how traditional computing manages data persistence. We are witnessing a race to build superior long-term memory layers.

RAG Evolves Beyond Simple Retrieval

The current standard, Retrieval-Augmented Generation (RAG), allows models to pull external data. However, for an AI-OS, RAG must become more sophisticated. It needs to handle not just document retrieval, but the retrieval and management of *internal state*—what the AI has learned about a specific ongoing project, user preference, or environmental constraint.

For developers, this means that the "model" itself might become less important than the surrounding memory management system it relies upon. The quality of the AI-OS will be defined by how intelligently it recalls and updates its understanding of its environment.
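To make "retrieval and management of internal state" concrete, here is a deliberately small sketch of a persistent memory layer. Everything in it is an assumption for illustration: the `StateMemory` class, the JSON file layout, and the word-overlap ranking, which stands in for the embedding-based retrieval a production system would more likely use.

```python
import json
import tempfile
from pathlib import Path


class StateMemory:
    """Tiny persistent store for agent state, grouped by scope (e.g. a project)."""

    def __init__(self, path):
        self.path = Path(path)
        # Reload earlier state if the file exists, so memory survives restarts.
        self.records = json.loads(self.path.read_text()) if self.path.exists() else {}

    def remember(self, scope, key, value):
        """Store or overwrite one fact, then persist the whole store to disk."""
        self.records.setdefault(scope, {})[key] = value
        self.path.write_text(json.dumps(self.records, indent=2))

    def recall(self, scope, query):
        """Return (key, value) pairs in `scope`, ranked by word overlap with the query."""
        words = set(query.lower().split())
        scored = []
        for key, value in self.records.get(scope, {}).items():
            overlap = len(words & set(f"{key} {value}".lower().split()))
            if overlap:
                scored.append((overlap, key, value))
        scored.sort(key=lambda item: -item[0])
        return [(key, value) for _, key, value in scored]


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.json"
    mem = StateMemory(path)
    mem.remember("q3-review", "report format", "user prefers slides over documents")
    mem.remember("q3-review", "deadline", "review meeting must happen before October")
    # Updating a fact overwrites the stale version instead of accumulating both.
    mem.remember("q3-review", "deadline", "review meeting moved to mid October")

    # A fresh instance simulates a later session reading the same state.
    reloaded = StateMemory(path)
    hits = reloaded.recall("q3-review", "when is the review meeting deadline")
    print(hits)
```

The point of the sketch is the division of labor: the model never appears in it. Writing, overwriting, and ranking state all happen in the surrounding layer, which is where the paragraph above suggests much of the engineering effort will go.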

The Unified Interface: Multimodality as Control Surface

Operating systems are defined by their ability to manage diverse streams of information simultaneously—the graphical user interface (GUI), the command line interface (CLI), network packets, and peripherals. For an LLM to become an OS, it must master multiple modes of interaction.

This is where the rapid acceleration in Multimodal AI becomes crucial. A system that can only read text is restricted to command-line-like interactions. A system that can process video feeds, interpret auditory commands, read live code execution environments, and analyze complex diagrams effectively gains a graphical, sensory interface to the world—much like a human sitting at a desktop.

When AI can interpret the world visually and auditorily, it gains the ability to interact with *legacy software*—the graphical applications that don't have clean APIs—by simply interacting with them as a human would. This capability transforms the model from a semantic processor into a universal digital controller.

The Implications: What Does an AI-OS Mean for Business and Society?

If these trends converge into a stable AI-OS layer, the implications are vast, fundamentally reshaping productivity, security, and the nature of work itself.

For Business: Hyper-Automation and System Orchestration

The most immediate impact will be on enterprise workflows. Businesses will move beyond task automation to system orchestration. Instead of using dozens of specialized SaaS tools glued together by brittle integrations, the AI-OS becomes the central fabric.

- Self-Correcting Processes: If a manufacturing pipeline generates a faulty batch (detected via multimodal quality control), the AI-OS immediately flags the supplier, pauses future orders, generates a remediation plan, and alerts the human manager, all without explicit instruction beyond the initial goal.

- Zero-Shot Integration: Because the AI-OS can interpret visual UIs and command lines, it could learn to use existing enterprise software with little or no custom integration work, bypassing the often years-long process of building native API integrations.

For Technical Audiences: The Rise of Software 2.0

The move toward these agents can be read as a realization of "Software 2.0," the term Andrej Karpathy coined in 2017 for software that is written not in explicit code but in the weights of neural networks trained on large datasets. The AI-OS is the execution environment for this new software paradigm.

Developers will spend less time writing boilerplate logic and more time defining constraints, providing robust memory access, and verifying the high-level intent of the AI-OS. Debugging shifts from fixing syntax errors to debugging reasoning chains.

Societal Risks: Control and Explainability

With greater control comes greater risk. If an AI acts as an OS, it holds the keys to critical infrastructure, personal data, and operational continuity.

The challenge is that System 2 reasoning, while powerful, is often opaque. If the AI-OS makes a critical error in resource allocation, explaining *why* it chose a particular path—especially when that path involved complex internal memory retrievals and multi-step planning—becomes a formidable security and governance hurdle. We must demand better observability tools for the reasoning layer itself, not just the output layer.

Actionable Insights: Preparing for the OS Shift

For organizations looking to capitalize on this transition and mitigate associated risks, preparation must begin now:

- Audit for Agent-Readiness: Identify core business processes that are currently chained together by human intervention and manual tool-switching. These "gaps" are the first candidates for AI-OS takeover.

- Invest in Data Grounding (Not Just Data Volume): Focus on structuring internal data (policies, databases, user histories) into high-quality, retrievable vectors. The AI-OS is only as good as the persistent context it is given.

- Develop Oversight Toolkits: Before deploying advanced agents, create monitoring dashboards specifically designed to track planning steps, tool invocation success rates, and long-term memory drift. Treat the AI-OS like you would a mission-critical server farm.
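As a starting point for the oversight toolkit in the last item, the sketch below records planning steps and tool invocations and computes per-tool success rates. The `AgentTrace` class, its method names, and the 0.8 alert threshold are all hypothetical, and the sketch does not attempt to measure memory drift, which would need a definition specific to the deployment.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AgentTrace:
    """In-memory log of an agent's plans and tool calls."""
    plans: list = field(default_factory=list)
    calls: dict = field(default_factory=lambda: defaultdict(list))

    def record_plan(self, steps):
        # Keep a copy of every plan so replans can be compared later.
        self.plans.append(list(steps))

    def record_tool_call(self, tool, ok):
        self.calls[tool].append(bool(ok))

    def success_rates(self):
        """Fraction of successful calls per tool."""
        return {tool: sum(results) / len(results)
                for tool, results in self.calls.items()}

    def flagged_tools(self, threshold=0.8):
        """Tools whose success rate falls below the (assumed) alert threshold."""
        return sorted(tool for tool, rate in self.success_rates().items()
                      if rate < threshold)


trace = AgentTrace()
trace.record_plan(["collect metrics", "draft summary", "schedule meeting"])
trace.record_tool_call("email", True)
trace.record_tool_call("email", True)
trace.record_tool_call("calendar", False)
trace.record_tool_call("calendar", True)

print(trace.success_rates())
print(trace.flagged_tools())
```

A dashboard would read from a structure like this rather than from the agent's final output, which is what makes the planning and tool layers inspectable in their own right.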

The leap from the chat interface to the operating system interface represents the most significant technological inflection point since the advent of the public cloud. It is the moment AI stops being something we use *on* our computers and starts becoming the software layer that *runs* them.