The AI Operating System: How LLMs Are Becoming the New Command Center of Computing

The evolution of Artificial Intelligence is often measured in numbers: speed, benchmark accuracy, and parameter count. But sometimes, a development signals a fundamental shift in *how* we interact with technology. Recent discussions surrounding models like the hypothetical GPT-5.4 suggest we are crossing that threshold: Large Language Models (LLMs) are moving beyond being smart chatbots to becoming the very infrastructure upon which digital tasks are managed, in effect the new Operating System (OS).

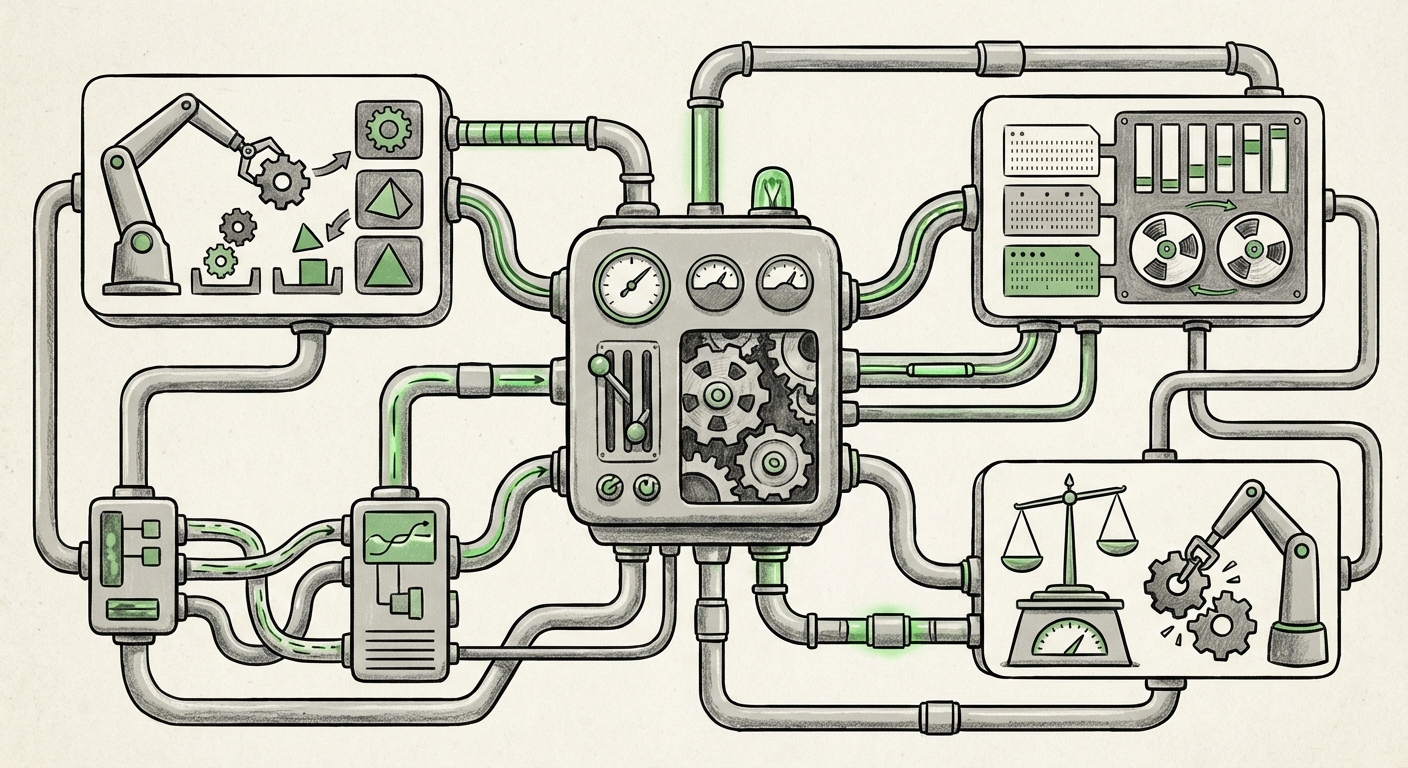

If a traditional computer OS (like Windows or Linux) manages hardware resources, memory, and processes, an AI OS manages digital resources, information flow, and cognitive agents. This is not just about answering questions; it’s about delegation, execution, and persistent state management. To understand the magnitude of this change, we must contextualize this shift through the lens of current research, competitive positioning, and the future of user interaction.

The Core Concept: From Generator to Governor

For years, LLMs have excelled at generating text, summarizing documents, and writing code snippets. They were powerful, yet transactional: you give a prompt, you get a result. The "AI OS" paradigm flips this by granting the model persistent access to tools, memory banks, and a mechanism to orchestrate other AI modules (agents).

Think about what an OS does best: it handles complexity so the user doesn't have to. If you want to analyze a year's worth of sales data, book a complex international trip involving multiple stops and visa checks, or manage a development pipeline, a traditional computer requires opening dozens of specific applications. An AI OS promises to handle this entire sequence through a single, declarative instruction:

“Plan and execute the Q3 international expansion strategy, ensuring all legal documents are drafted, and book the executive travel itinerary based on budget constraints.”

This requires sophisticated **process management**: the model must decide which sub-agent handles legal drafting, which handles booking APIs, and how to manage errors if one step fails. This kind of resource allocation and sequencing is what the kernel, and in particular its scheduler, does in a traditional OS.
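To make the sequencing idea concrete, here is a minimal sketch of how an orchestrator might order sub-tasks and hand them to sub-agents. The task names, agent names, and the `schedule` helper are all hypothetical, and the dependency graph is written by hand, whereas a real system would have the model produce it.

```python
from graphlib import TopologicalSorter

# Hypothetical sub-tasks for the expansion instruction. Each task maps to
# the set of tasks that must finish before it can start.
dependencies = {
    "draft_legal_documents": {"plan_strategy"},
    "set_travel_budget": {"plan_strategy"},
    "book_executive_travel": {"set_travel_budget"},
}

# Hypothetical assignment of tasks to specialized sub-agents.
agent_for_task = {
    "plan_strategy": "planner_agent",
    "draft_legal_documents": "legal_agent",
    "set_travel_budget": "finance_agent",
    "book_executive_travel": "booking_agent",
}


def schedule(deps, assignment):
    """Return (task, agent) pairs in an order that respects dependencies."""
    order = TopologicalSorter(deps).static_order()
    return [(task, assignment[task]) for task in order]


plan = schedule(dependencies, agent_for_task)
for task, agent in plan:
    print(f"{agent} -> {task}")
```

The topological sort only guarantees that prerequisites run first; tasks with no dependency between them may appear in either order.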

Corroborating the Trend: The Engineering Underpinnings

The idea of the LLM as an OS is not floating in a vacuum. Advances in agentic AI confirm that the industry is actively building the necessary scaffolding for this control plane.

1. Building the Control Plane: Agent Orchestration Frameworks

The first piece of supporting evidence comes from the burgeoning field of multi-agent systems. Technical work on using an LLM as a control plane, and on agent orchestration frameworks more broadly, shows the engineering needed to make this vision functional. Frameworks like Microsoft’s AutoGen are pioneering methods where multiple specialized AI agents (e.g., a coder agent, a tester agent, a planner agent) can communicate, critique each other, and resolve conflicts under the direction of a primary orchestrator LLM.

This orchestration layer *is* the nascent operating system. It manages:

- Task Distribution: Deciding which specialized tool or sub-agent gets the next job.

- Inter-Process Communication: Ensuring data flows correctly between steps.

- Error Handling and Recovery: If a tool fails, the OS model must loop back and retry or pivot to a new strategy.
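The error handling and recovery item in the list above can be sketched as a retry loop with a fallback. This is an illustrative pattern only, not AutoGen's API: `run_with_recovery`, `flaky_booking_api`, and `manual_booking_queue` are hypothetical names, and the tools are stubs.

```python
from typing import Callable

Handler = Callable[[str], str]


def run_with_recovery(task: str, primary: Handler, fallback: Handler,
                      max_retries: int = 2) -> tuple[str, list[str]]:
    """Try the primary handler up to max_retries times, then pivot.

    Returns the result together with a log of what happened.
    """
    log: list[str] = []
    for attempt in range(1, max_retries + 1):
        try:
            result = primary(task)
            log.append(f"primary succeeded on attempt {attempt}")
            return result, log
        except RuntimeError as exc:
            log.append(f"primary failed on attempt {attempt}: {exc}")
    log.append("pivoting to fallback")
    return fallback(task), log


# Hypothetical tools: a booking API stub that always times out, and a
# slower fallback path that hands the task to a human.
def flaky_booking_api(task: str) -> str:
    raise RuntimeError("timeout")


def manual_booking_queue(task: str) -> str:
    return f"queued for human review: {task}"


result, log = run_with_recovery("book flight", flaky_booking_api,
                                manual_booking_queue)
print(result)
for line in log:
    print(" ", line)
```

Returning the log alongside the result keeps the recovery path inspectable, which matters once the caller is another agent and not a person watching a terminal.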

For AI Engineers and System Architects, studying these frameworks reveals that the architectural design for an AI OS is already underway, moving from theoretical concept to deployable code.

2. The Competitive Pressure: System-Level Capabilities in Flagship Models

A trend only matters if competitors are chasing it too. To validate the "OS" trajectory, we must look at major rivals. The system-level capabilities of Google’s Gemini and the system-instruction and context handling of Anthropic’s Claude 3 suggest that performance leaps are often tied to enhanced system control, not just bigger knowledge bases.

For instance, the massive context windows demonstrated by models like Gemini 1.5 Pro are crucial. An OS needs a large working memory to track running processes, configuration files, and user preferences. A 1-million-token context window functions as a highly effective, albeit temporary, system memory. It is closer to RAM than to disk: it lasts only for the session, so persistence across sessions still requires external storage. Within a session, it allows the model to hold the state of dozens of ongoing tasks simultaneously without forgetting the initial goals, a requirement for any functional OS.
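As a rough illustration of treating the context window as working memory, the sketch below keeps the initial goal pinned and evicts the oldest task notes once a token budget is exceeded. The `WorkingMemory` class, the word-count token estimate, and the eviction policy are assumptions for illustration, not how any particular model manages its context.

```python
from collections import deque


def rough_token_count(text: str) -> int:
    # Assumption: whitespace-separated words stand in for real tokens.
    return len(text.split())


class WorkingMemory:
    """Fixed-budget buffer: the goal is pinned, the oldest notes are evicted."""

    def __init__(self, budget: int, goal: str):
        self.budget = budget
        self.goal = goal          # never evicted
        self.notes = deque()      # oldest note sits on the left

    def used(self) -> int:
        return rough_token_count(self.goal) + sum(
            rough_token_count(note) for note in self.notes
        )

    def add(self, note: str) -> None:
        self.notes.append(note)
        # Drop the oldest notes until the buffer fits the budget again.
        while self.used() > self.budget and self.notes:
            self.notes.popleft()

    def render(self) -> str:
        return "\n".join([self.goal, *self.notes])


memory = WorkingMemory(budget=12, goal="Goal: plan Q3 expansion")
memory.add("task A legal drafting started")
memory.add("task B travel booking started")
print(memory.render())
```

With a budget this small, the second note pushes out the first while the goal survives. A larger window postpones that trade-off but does not remove it.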

Technology analysts tracking these releases note that the race isn't just about writing better text; it's about building more robust, state-aware, and tool-integrated systems. This suggests that system-level integration is becoming a baseline expectation for the next generation of foundation models.

The End of the Interface: HCI Implications

Perhaps the most disruptive aspect of the LLM as an OS is its effect on Human-Computer Interaction (HCI).

Traditional computing has been defined by the Graphical User Interface (GUI): the desktop, the icons, the folders. If an LLM acts as the OS, the user rarely needs to navigate those structures. The primary interface shifts to natural language commands, much like the command-line interface (CLI) of old, but more forgiving because the system interprets intent instead of requiring exact syntax.

Research on computing interfaces beyond the GUI points to a growing view that the GUI is reaching its complexity ceiling for modern demands. People often find it easier to describe intent than to click through multi-layered menus.

For the average user (and even for many technical users), interacting via declarative language—"Set up an alert pipeline for high-risk financial trades and summarize market sentiment every hour"—is significantly faster and more intuitive than manually configuring cloud functions, setting up notification triggers, and building dashboards.

This promises an era of Ambient Computing, where the technology fades into the background and is managed entirely by linguistic intent. That in turn requires the underlying "OS" (the LLM) to be deeply capable of managing the environment.

Implications for the Future of AI and Technology

The rise of the AI Operating System has massive repercussions across the technology stack.

For Software Development: The Death of the Silo

If an LLM controls the environment, the traditional application silo begins to break down. Developers will no longer just write code for an application; they will write plugins, tools, and APIs that the LLM OS can call upon.

The focus shifts from building monolithic applications to creating highly reliable, discrete functions that plug into the universal cognitive layer. This could dramatically lower the barrier to entry for creating complex software solutions, as the LLM handles the integration, error checking, and user-facing shell.
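A minimal sketch of what "discrete functions that plug into the cognitive layer" could look like in practice: a registry that records each function with a description the model can read, plus a single entry point for calling tools by name. The `tool` decorator, `TOOLS` registry, `invoke` helper, and `convert_currency` example are hypothetical and not taken from any specific framework.

```python
from typing import Any, Callable

# Registry mapping tool names to a description and the callable itself.
TOOLS: dict[str, dict[str, Any]] = {}


def tool(description: str) -> Callable:
    """Decorator that registers a function as a callable tool."""
    def register(func: Callable) -> Callable:
        TOOLS[func.__name__] = {"description": description, "call": func}
        return func
    return register


@tool("Convert an amount using a fixed exchange rate.")
def convert_currency(amount: float, rate: float) -> float:
    return round(amount * rate, 2)


def invoke(name: str, **kwargs: Any) -> Any:
    """Single entry point an orchestrator would use to call a tool by name."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name]["call"](**kwargs)


# The catalog is what would be shown to the model so it can pick a tool.
catalog = {name: entry["description"] for name, entry in TOOLS.items()}
result = invoke("convert_currency", amount=100.0, rate=0.5)
print(catalog)
print(result)
```

The developer's job in this picture is the small, well-described function. Choosing when to call it is left to the orchestrating model.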

For Business: Hyper-Automation and Delegation

Businesses will move beyond simple Robotic Process Automation (RPA) to true Cognitive Process Automation (CPA). Instead of scripting repetitive tasks, managers can delegate entire operational workflows to the AI OS. This means:

- Instantaneous Onboarding: New employees can delegate complex setup tasks via natural language instead of spending weeks learning proprietary software stacks.

- Dynamic Resource Allocation: The OS can automatically spin up more powerful agent clusters for critical projects and scale them down during quiet periods, optimizing cloud spending without human intervention.

- Reduced Cognitive Load: Middle management spends less time coordinating handoffs between departments and more time on high-level strategy, as the AI manages the coordination layer.

The competitive advantage will shift to those who can define their business processes in ways that are most effectively interpreted and executed by the AI OS.

For Society: Governance and Security Challenges

With great control comes great responsibility, and significant risk. If an LLM controls the entire digital environment, security breaches become catastrophic. A compromised AI OS could lead to unchecked agent activity across critical infrastructure, financial systems, or personal data stores.

This forces immediate research into "AI sandboxing" and "governance layers." We need new security protocols built not just around preventing unauthorized access, but around auditing the *intent* and *execution path* of the system controller itself. These governance mechanisms must be robust, transparent, and auditable—perhaps even running on a separate, simpler, immutable 'hardware' layer that the main LLM cannot override.
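One way to picture such a governance layer is a small gate that sits between agents and tools, checks each requested action against a read-only policy, and records the stated intent before anything runs. This is a sketch under assumptions: the `POLICY` table, the `Gate` class, and the agent and action names are hypothetical, and a production system would need far stronger isolation than an in-process check.

```python
from dataclasses import dataclass, field
from types import MappingProxyType

# Read-only policy: which actions each agent may perform.
# MappingProxyType and frozenset prevent in-place modification.
POLICY = MappingProxyType({
    "booking_agent": frozenset({"search_flights", "book_flight"}),
    "legal_agent": frozenset({"draft_document"}),
})


@dataclass
class Gate:
    """Authorizes actions and keeps an append-only record of every request."""
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: str, action: str, stated_intent: str) -> bool:
        allowed = action in POLICY.get(agent, frozenset())
        # Log denied requests too, so the execution path can be audited.
        self.audit_log.append({
            "agent": agent,
            "action": action,
            "intent": stated_intent,
            "allowed": allowed,
        })
        return allowed


gate = Gate()
ok = gate.authorize("booking_agent", "book_flight", "executive travel for Q3")
denied = gate.authorize("legal_agent", "book_flight", "unclear")
print(ok, denied)
for entry in gate.audit_log:
    print(entry)
```

Keeping the gate simpler than the model it supervises is the point: a short allowlist check is much easier to audit than the orchestrator's reasoning.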

Actionable Insights: Preparing for the Shift

For leaders, developers, and consumers, recognizing the AI OS trend demands proactive steps:

- Embrace Agentic Tooling: Don't just test new models for chat quality. Test their ability to reliably chain calls to external tools (APIs, databases). Reliable tool chaining, not conversational polish, is the real test of a model acting as an operating system kernel.

- Deconstruct Workflows into Atomic Functions: Businesses should begin mapping their complex processes into modular, API-accessible functions. The cleaner the modularity, the easier it will be for the future AI OS to manage them effectively.

- Prioritize Explainability in LLM Orchestration: Demand transparency tools. If the AI OS delegates a task, you must be able to query *why* it chose that agent, *how* it allocated resources, and *what* its error recovery plan was.
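To see why the first item above stresses reliable chaining, consider a back-of-the-envelope calculation. If each tool call succeeds with some probability, and failures are assumed to be independent (a simplifying assumption), then the chance that a whole chain succeeds shrinks geometrically with its length. The function name and the 95% figure below are illustrative, not measured values.

```python
def chain_success_rate(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


for steps in (1, 5, 10, 20):
    rate = chain_success_rate(0.95, steps)
    print(f"{steps:>2} steps at 95% each: {rate:.1%}")
```

At 95% per step, a ten-step chain completes only about 60% of the time without recovery logic, which is why per-call accuracy alone says little about workflow-level reliability.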

The movement toward an LLM acting as an operating system signals the end of the application-centric computing era and the beginning of the intent-centric era. We are trading rigid graphical interfaces for fluid, intelligent delegation. Because the technology is so powerful, understanding the architectural shifts behind it, from multi-agent frameworks to competitor capabilities, is essential for anyone aiming to lead in the next decade of technological advancement.