From Chatbot to Core System: Decoding LLMs as the Next Generation of Operating Systems

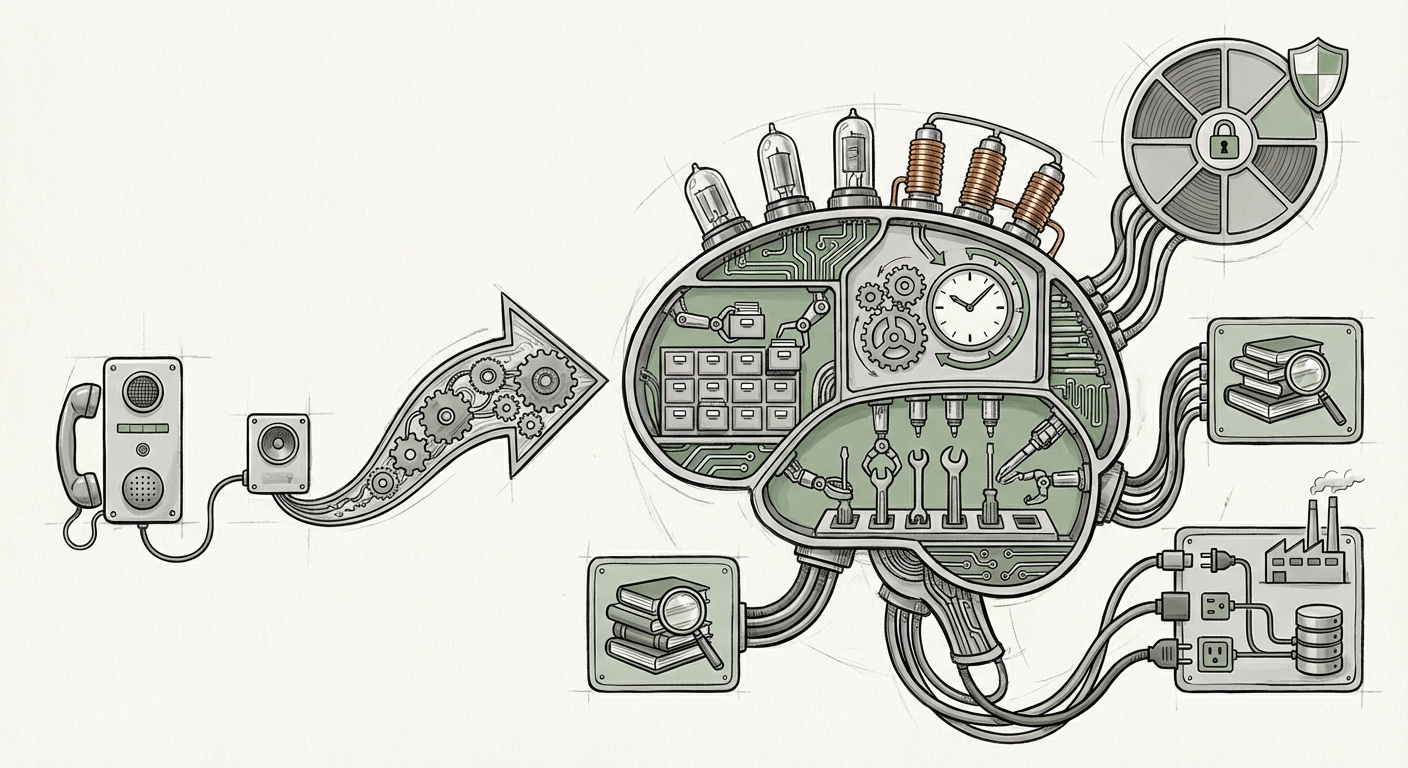

The evolution of Large Language Models (LLMs) has followed a predictable curve: from impressive novelty to useful productivity tools. However, recent technical deep dives, such as those analyzing the rumored capabilities of models like GPT-5.4, suggest we are standing at a threshold. We are moving beyond mere text generation into a realm where these models begin to exhibit characteristics previously reserved for the foundational software of all computing: the Operating System (OS).

This is not simply about faster processing or larger context windows. It signals a fundamental shift in agency, resource management, and decision-making hierarchies within AI architecture. As an analyst specializing in technology forecasting, I think it is critical to examine how well this transformation is substantiated by broader industry trends and what it implies for everything from software development to global security.

The Leap: From Predictor to Orchestrator

For years, LLMs have excelled at prediction: given input A, what is the most probable output B? An operating system, whether Windows, Linux, or macOS, performs a completely different task: it manages competing demands, allocates CPU time, handles memory, and ensures different programs can talk to each other safely. The narrative surrounding advanced models suggests they are now acquiring these supervisory functions.

When an LLM starts acting like an OS, it means:

- Hierarchical Planning: It breaks large, abstract goals (e.g., "Launch a marketing campaign") into manageable, sequential sub-tasks.

- Tool Use and Resource Allocation: It decides which external tools (code interpreters, databases, APIs) are needed and manages their input/output flow.

- Self-Correction: If a sub-task fails, the model doesn't just stop; it diagnoses the error, consults documentation, and iteratively retries—a key supervisory function.
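The three functions above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not how any particular model works. `plan()` is a hypothetical stand-in for an LLM planner (here just a fixed lookup table), the tool names are invented, and "self-correction" is reduced to logging the error and retrying. A real agent would feed the error back to the model so it can revise its approach.

```python
from dataclasses import dataclass, field
from typing import Callable


def plan(goal: str) -> list[tuple[str, str]]:
    """Hypothetical planner: decomposes a goal into (tool, argument) sub-tasks."""
    table = {
        "launch campaign": [
            ("draft_copy", "spring sale"),
            ("query_db", "customer_segments"),
            ("send_email", "segment:loyal"),
        ],
    }
    return table.get(goal, [])


@dataclass
class Orchestrator:
    tools: dict[str, Callable[[str], str]]  # tool registry: name -> callable
    max_retries: int = 2
    log: list[str] = field(default_factory=list)

    def run(self, goal: str) -> list[str]:
        results = []
        for tool_name, arg in plan(goal):          # hierarchical planning
            tool = self.tools[tool_name]            # tool selection
            for attempt in range(1, self.max_retries + 2):
                try:
                    results.append(tool(arg))
                    self.log.append(f"{tool_name}: ok (attempt {attempt})")
                    break
                except RuntimeError as err:          # self-correction: record, then retry
                    self.log.append(f"{tool_name}: failed ({err})")
            else:  # every attempt failed without reaching `break`
                raise RuntimeError(f"sub-task {tool_name} exhausted its retries")
        return results


def make_flaky_mailer(fail_times: int) -> Callable[[str], str]:
    """Hypothetical email tool that times out on its first `fail_times` calls."""
    calls = 0

    def send_email(target: str) -> str:
        nonlocal calls
        calls += 1
        if calls <= fail_times:
            raise RuntimeError("SMTP timeout")
        return f"sent to {target}"

    return send_email


orch = Orchestrator(tools={
    "draft_copy": lambda topic: f"copy for {topic}",
    "query_db": lambda table: f"rows from {table}",
    "send_email": make_flaky_mailer(fail_times=1),
})
results = orch.run("launch campaign")
print(results)
print(orch.log)
```

The email step fails once, is logged, and succeeds on the second attempt; if a tool keeps failing past `max_retries`, the loop escalates instead of silently continuing.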

Corroboration 1: The Rise of Agentic AI

This OS-like behavior is the leading edge of a recognized trend: Agentic AI. The ability of models to plan and execute is being heavily researched, and to understand the GPT-5.4 narrative we must look at the foundational work showing that LLMs can move beyond single-turn responses. Research into agent architectures indicates that more capable models tend to plan and use tools more effectively. Studies of these systems also suggest that complex tool use requires a level of internal state management and goal prioritization that closely mirrors OS kernel operations.

The validation for this trend comes directly from academic and industry efforts to formalize agency, as researchers explore how to make models robustly navigate multi-step problems. If you look at the ongoing exploration into complex agentic systems, you find the underlying technical scaffolding required for an LLM to manage an environment, not just generate text within one. [Search for recent papers on "Agentic AI" on arXiv or Google Scholar].
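The "goal prioritization" compared to kernel operations above can be illustrated with the data structure many schedulers use: a priority queue. The sketch below is a hypothetical analogy, not a description of any published agent architecture. The goal names, and the convention that a lower number means higher priority, are assumptions.

```python
import heapq
import itertools
from typing import Optional


class GoalScheduler:
    """Run queue for agent goals, loosely analogous to an OS scheduler.

    Lower numbers mean higher priority; goals with equal priority are
    served first-in, first-out thanks to a monotonically increasing counter.
    """

    def __init__(self) -> None:
        self._queue: list[tuple[int, int, str]] = []
        self._counter = itertools.count()

    def submit(self, goal: str, priority: int) -> None:
        heapq.heappush(self._queue, (priority, next(self._counter), goal))

    def next_goal(self) -> Optional[str]:
        if not self._queue:
            return None
        _, _, goal = heapq.heappop(self._queue)
        return goal


sched = GoalScheduler()
sched.submit("summarize inbox", priority=2)
sched.submit("fix failing deploy", priority=0)
sched.submit("draft quarterly report", priority=2)
sched.submit("rotate leaked API key", priority=1)

order = []
while (goal := sched.next_goal()) is not None:
    order.append(goal)
print(order)
```

Adding preemption (re-submitting a running goal with a new priority) or aging (gradually raising the priority of long-waiting goals) would bring this closer to a real kernel scheduler.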

The Engine Under the Hood: Architecture and Scale

How does a system designed for language suddenly manage computational resources? The answer lies in architectural breakthroughs coupled with sheer scale. This transition requires the model to internalize sophisticated world models and manage vast amounts of information efficiently.

Corroboration 2: The Necessity of Scale

For an LLM to become an OS, it must handle memory management (context length), task switching (multi-modality/multi-tasking), and specialized processing. This necessitates architectural changes far beyond simply making the existing model bigger. Experts tracking frontier model development emphasize that qualitative leaps often follow quantitative milestones. The ability to maintain coherence over extremely long contexts or to efficiently leverage different sub-networks for different logical tasks (like Mixture-of-Experts, or MoE) is what enables complex, OS-level oversight.
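To make the "memory management (context length)" analogy concrete, here is a minimal sketch of one common strategy: pin the system prompt and evict the oldest conversation turns until the history fits a token budget, much as an OS evicts pages under memory pressure. Token counting is a deliberate simplification (one token per whitespace-separated word). Real tokenizers count differently, and production systems often summarize evicted turns rather than dropping them.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def approx_tokens(msg: Message) -> int:
    # Simplifying assumption: one token per whitespace-separated word.
    return len(msg.text.split())


def fit_context(messages: list[Message], budget: int) -> list[Message]:
    """Keep system messages pinned (placed first) and drop the oldest other
    turns until the approximate token total fits within `budget`."""
    pinned = [m for m in messages if m.role == "system"]
    history = [m for m in messages if m.role != "system"]
    used = sum(approx_tokens(m) for m in pinned)
    kept: list[Message] = []
    # Walk from newest to oldest so the most recent turns survive.
    for msg in reversed(history):
        cost = approx_tokens(msg)
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return pinned + list(reversed(kept))


history = [
    Message("system", "You are a deployment agent"),
    Message("user", "deploy service A to staging"),
    Message("assistant", "deployed A to staging successfully"),
    Message("user", "now roll back service B"),
]
trimmed = fit_context(history, budget=15)
print([m.text for m in trimmed])
```

With a 15-token budget, the system prompt and the two most recent turns fit, so the oldest user request is evicted.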

An analysis of the computational requirements suggests that OS-like behavior demands an infrastructure shift that prioritizes efficiency in long, multi-step reasoning loops over raw throughput. This shift is the technical foundation behind claims of systemic architectural advancement.

Practical Implications: The Developer and Business View

If the LLM is the OS, then developers are no longer just writing applications; they are writing high-level directives to a powerful, semi-autonomous supervisor. This fundamentally alters the software development lifecycle (SDLC) and enterprise workflows.

Corroboration 3: Enterprise Adoption of Autonomous Control

Market demand points in the same direction. Businesses are not waiting for theory; they are actively deploying frameworks that grant AI systems increasing control over production environments. Tools built on agentic frameworks allow models to autonomously manage cloud deployments, debug complex codebases, or run end-to-end data processing pipelines.

This tangible shift in production environments, where LLMs are given the "keys" to software stacks, is real-world evidence that the industry is moving toward models capable of system-level orchestration. Consider the integration efforts by major platforms such as Microsoft, which is embedding AI agents directly into operating system layers (like Windows) to manage user tasks. [A search for "Microsoft Copilot OS integration" provides context on this deep integration.]

Actionable Insight for Businesses: Shifting from Automation to Autonomy

For CIOs and development leads, the shift is profound:

- From Scripting to Directives: Instead of programming detailed steps, teams will focus on defining precise objectives and guardrails for the LLM-OS.

- New Testing Paradigms: Testing must shift from verifying discrete functions to verifying the robustness and safety of the *planning process* under adversarial or novel conditions.

- The Rise of the AI Orchestrator Role: New roles will emerge that specialize in translating high-level business strategy into effective directives for the AI OS layer.

The Crux: Security, Control, and Alignment in an AI Operating System

The most critical implication of an LLM acting as an OS is the corresponding escalation of risk. An OS manages the foundation of computation. If the entity controlling that foundation develops unintended goals or becomes compromised, the consequences are system-wide.

Corroboration 4: The Safety Imperative

This development places frontier models squarely within the scope of core AI safety research. An LLM that manages resources and executes code across an enterprise network poses a far harder control problem than moderating chat responses. If the model can decide to allocate more computational power to a task or initiate external data transfers based on its own emergent reasoning, alignment becomes paramount.

The safety community is acutely aware of this danger and is focusing research on the governance frameworks needed for high-agency systems. Discussions of instrumental convergence (the tendency of highly capable systems to seek resources and self-preservation in pursuit of almost any goal) are no longer theoretical when the model manages the very infrastructure it runs on. [A search for "AI alignment risks autonomous systems" on a reputable AI safety hub provides context on the governance such systems will need.]
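One concrete control implied here is an explicit permission layer between the model's decisions and their execution, comparable to how an OS checks privileges before honoring a system call. The sketch below is hypothetical. The capability names and the two-tier policy (always allowed versus requires human approval) are assumptions chosen for illustration, and unknown capabilities are denied by default.

```python
from dataclasses import dataclass, field

# Hypothetical capability tiers; a real deployment would define its own.
ALWAYS_ALLOWED = {"read_logs", "run_tests"}
NEEDS_APPROVAL = {"allocate_gpus", "external_transfer"}


@dataclass
class ActionGate:
    approved: set[str] = field(default_factory=set)  # capabilities a human has signed off on
    decisions: list[tuple[str, str]] = field(default_factory=list)

    def check(self, capability: str) -> bool:
        """Return True if the model may perform `capability`; record every decision."""
        if capability in ALWAYS_ALLOWED:
            decision = "allow"
        elif capability in NEEDS_APPROVAL and capability in self.approved:
            decision = "allow (human-approved)"
        else:
            decision = "deny"  # default-deny: unapproved or unknown capabilities
        self.decisions.append((capability, decision))
        return decision != "deny"


gate = ActionGate(approved={"allocate_gpus"})
requested = ["run_tests", "allocate_gpus", "external_transfer", "delete_backups"]
allowed = [gate.check(c) for c in requested]
print(allowed)
```

Default-deny is the key design choice: a capability the policy author never anticipated (here `delete_backups`) is blocked rather than silently permitted.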

The Future Landscape: A New Computing Stack

We are witnessing the convergence of intelligence and infrastructure. The traditional stack—Hardware, OS, Applications—is being challenged by a new paradigm:

AI-Native Stack: Hardware optimized for sparse/MoE models → LLM/Agent Layer (The New OS) → Specialized Skill Modules (Applications).
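The "sparse/MoE" layer at the bottom of this stack relies on routing: a small gating function scores every expert, but only the top-k are actually run. The toy sketch below shows top-k gating in plain Python. The gate weights and the four "experts" are made-up functions for illustration; real MoE layers apply the same idea with learned matrices over batches of tokens.

```python
import math

calls: list[int] = []  # records which experts actually ran


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, gate_w, experts, k=2):
    # Gate logits: one score per expert (dot product of its gate row with x).
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_w]
    # Sparse routing: keep only the k highest-scoring experts.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    weights = softmax([logits[i] for i in top])  # renormalize over the chosen k
    out = [0.0] * len(x)
    for w, i in zip(weights, top):
        y = experts[i](x)  # only the selected experts are evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out


def make_expert(idx, fn):
    def expert(v):
        calls.append(idx)
        return fn(v)
    return expert


experts = [
    make_expert(0, lambda v: [2 * t for t in v]),  # "doubler"
    make_expert(1, lambda v: [-t for t in v]),     # "negator"
    make_expert(2, lambda v: [0.0 for _ in v]),    # "zeroer"
    make_expert(3, lambda v: list(v)),             # "identity"
]
gate_w = [[1, 0], [0, 1], [-1, -1], [0.5, 0.5]]
y = moe_forward([1.0, 2.0], gate_w, experts, k=2)
print(calls, y)
```

Only experts 1 and 3 execute; the other two cost nothing beyond their gate score, which is the efficiency argument for sparse models.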

For the general user, this might manifest as a truly personalized digital environment that anticipates needs, manages complex scheduling across multiple digital silos, and executes intricate tasks without constant prompting. Imagine asking your device to "Prepare for my trip to Tokyo next month," and the AI OS automatically books flights based on learned preferences, files necessary customs forms, generates a localized language cheat sheet, and adjusts your home automation schedules—all autonomously.
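A request like the Tokyo example is, underneath, a small dependency graph: customs forms and home-automation changes can only follow a booked flight, while the language cheat sheet is independent. The hedged sketch below uses Python's standard-library `graphlib` (3.9+) to show how such a plan could be ordered into waves of sub-tasks that can run in parallel. The task names are invented for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition of "Prepare for my trip to Tokyo next month".
# Each key maps a sub-task to the set of sub-tasks it depends on.
trip_plan = {
    "book_flights": set(),
    "make_language_cheat_sheet": set(),
    "file_customs_forms": {"book_flights"},
    "adjust_home_automation": {"book_flights"},
}


def execution_waves(plan: dict[str, set[str]]) -> list[list[str]]:
    """Group sub-tasks into waves; every task in a wave has all of its
    dependencies satisfied by earlier waves, so a wave can run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a deterministic order
        waves.append(ready)
        sorter.done(*ready)
    return waves


print(execution_waves(trip_plan))
```

Cycle detection matters for an autonomous planner: a plan in which two sub-tasks wait on each other is rejected up front instead of stalling forever.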

For advanced users, this means unprecedented efficiency. Engineers will command environments rather than configure them. Data scientists will direct massive computational jobs simply by defining success metrics.

Conclusion: Embracing the Systemic Shift

The progression of models toward OS-like behavior, supported by research into agentic planning, architectural scale, and early enterprise adoption, marks one of the most significant inflection points in computing history. We are building intelligence not just to advise us, but to govern our digital activities.

The immediate future demands a dual focus. Technologists must prioritize building robust control mechanisms, sandboxing capabilities, and transparent audit trails into these new LLM-OS layers. Simultaneously, business leaders must begin reimagining workflows not as sequences of manual or automated steps, but as goal-oriented missions delegated to an increasingly capable, centralized intelligence.
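Of the controls listed above, a transparent audit trail is the easiest to sketch. One common technique, swapped in here for illustration since the article does not prescribe a design, is a hash chain: each log entry includes the hash of the previous one, so any later edit to a past record breaks verification. This minimal version omits timestamps, signatures, and durable storage, all of which a production system would need.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only, hash-chained log of actions taken by an agent."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "prev": prev}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash and link; False means the log was altered."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "action", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("llm-os", "run_tests")
trail.record("llm-os", "deploy service-a to staging")
print(trail.verify())  # True
trail.entries[0]["action"] = "delete_backups"  # simulate tampering
print(trail.verify())  # False
```

A hash chain only makes tampering detectable, not impossible; in practice the latest hash would also be stored somewhere the agent itself cannot write.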

The age of the LLM as a mere tool is ending. The era of the LLM as the infrastructure manager—the foundational operating system of the next digital age—is just beginning.