The Silicon Uprising: Why Meta's Custom Inference Chips Are Reshaping the Economics of AI

The artificial intelligence landscape is currently defined by two primary challenges: building models so large that training them requires supercomputing power, and then running those models (inference) cheaply enough to serve billions of daily requests. While training captures headlines with its staggering hardware demands, it is inference that dictates long-term profitability. This is why Meta’s recent announcement of four generations of custom AI chips, designed specifically to cut inference costs, is not just an incremental hardware update; it is a declaration of strategic independence and a fundamental challenge to the current AI compute oligarchy.

For years, the entire industry has been tethered to one key supplier for high-performance AI processing: Nvidia. Meta is now signaling that scaling AI to every user on Facebook, Instagram, and WhatsApp requires an economic blueprint that external vendors cannot provide. This shift toward **vertical integration**, building your own specialized tools in-house, is reshaping the architecture of the digital world.

The Crux of the Matter: Training vs. Inference Costs

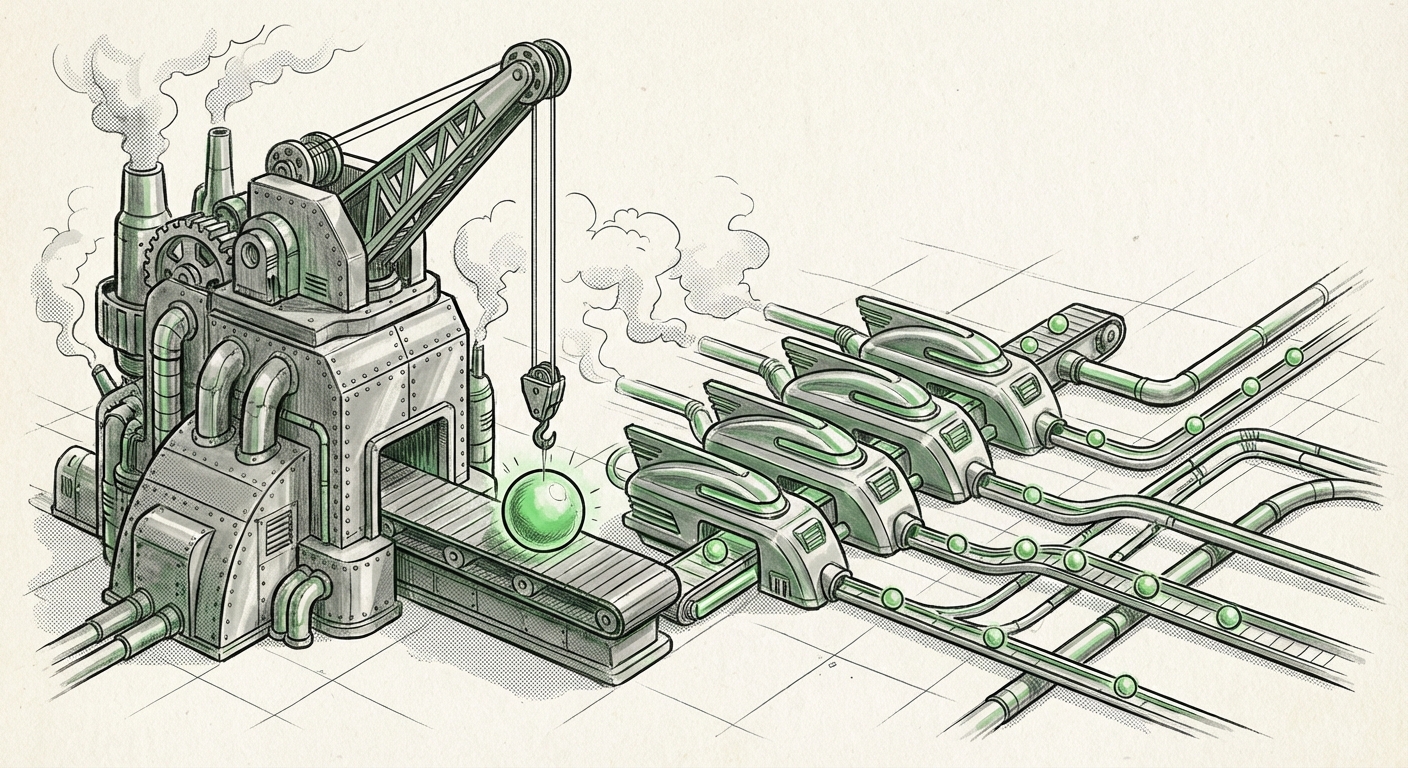

To appreciate the significance of Meta’s move, we must understand the difference between AI training and inference. Imagine building a complex skyscraper (training). This requires massive, general-purpose cranes and specialized heavy machinery working for months. Once the building is finished, maintaining it and moving people in and out (inference) happens far more often, but each individual operation requires far fewer resources.

Nvidia GPUs, like the H100, are magnificent generalists: super-cranes perfectly suited to the heavy lifting of training large language models (LLMs). They offer flexibility, but at a premium. Inference, in contrast, means serving millions, perhaps billions, of small, repetitive requests (like generating a caption, summarizing a post, or answering a simple query).

This is why inference is the next frontier for AI hardware efficiency: its cost grows in direct proportion to usage. The cost of running an AI chatbot for one user is negligible; the cost of running it for a billion users can break the bank. Meta’s custom chips are Application-Specific Integrated Circuits (ASICs), ruthlessly optimized for this one task, prioritizing energy efficiency and throughput over the general flexibility of a GPU.

The Billion-User Math

Meta serves billions of people across its apps, so every millisecond of computation adds up. If a custom chip could perform an inference task using half the energy, at a quarter of the upfront cost of a general-purpose GPU, the savings multiplied across billions of daily interactions would be monumental. This is the strategic motivation: hyperscalers building their own silicon for LLMs isn’t about ego; it’s about survival in the hyper-competitive market of free-to-use, massive-scale consumer applications.
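
To make that arithmetic concrete, here is a minimal Python sketch comparing the capital-plus-energy cost of serving a fixed daily load on two hypothetical accelerators. Every number in it (device prices, energy per request, throughput, a $0.10/kWh electricity rate, ten billion requests per day, a four-year service life) is an illustrative assumption, not a Meta or Nvidia figure; the "ASIC" simply mirrors the "half the energy, a quarter of the cost" scenario above.

```python
import math
from dataclasses import dataclass


@dataclass
class Accelerator:
    """A hypothetical inference device; all figures are illustrative assumptions."""
    name: str
    unit_cost_usd: float        # up-front purchase price per device
    joules_per_request: float   # energy per request (device watts / requests_per_sec)
    requests_per_sec: float     # sustained throughput per device


def fleet_cost(acc: Accelerator, daily_requests: float, years: float, usd_per_kwh: float) -> float:
    """Capital plus energy cost of serving a steady daily load for `years` years."""
    avg_requests_per_sec = daily_requests / 86_400
    # Devices needed for the *average* load; real fleets also provision for peaks and failures.
    devices = math.ceil(avg_requests_per_sec / acc.requests_per_sec)
    capex = devices * acc.unit_cost_usd
    # Total energy over the period, converted from joules to kWh (1 kWh = 3.6e6 J).
    total_kwh = acc.joules_per_request * daily_requests * 365 * years / 3.6e6
    return capex + total_kwh * usd_per_kwh


# Hypothetical devices: the ASIC uses half the energy per request at a quarter of the price.
gpu = Accelerator("general-purpose GPU", unit_cost_usd=30_000, joules_per_request=70.0, requests_per_sec=10)
asic = Accelerator("inference ASIC", unit_cost_usd=7_500, joules_per_request=35.0, requests_per_sec=10)

if __name__ == "__main__":
    DAILY_REQUESTS = 10e9  # assumed: ten billion requests per day
    for acc in (gpu, asic):
        total = fleet_cost(acc, DAILY_REQUESTS, years=4, usd_per_kwh=0.10)
        print(f"{acc.name}: ${total:,.0f} over 4 years")
```

Because both hypothetical devices have the same throughput, the fleets are the same size and the ASIC's advantage comes entirely from cheaper hardware and halved energy. Under these particular assumptions the hardware price is the larger term; higher electricity prices, longer service lives, or more energy per request shift the balance toward energy.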

The Great Decoupling: Challenging GPU Dominance

Meta’s announcement is the latest, and perhaps most aggressive, salvo in the **"AI Chip Wars"** being waged by Big Tech. Google has its Tensor Processing Units (TPUs), Amazon has Inferentia and Trainium, and Microsoft is heavily investing in its Maia chips. All these efforts share a common goal: reducing dependence on external suppliers, particularly Nvidia.

Nvidia’s dominance of the high-end GPU market gives it enormous pricing power, creating an extreme cost structure for its customers. This dependency leaves hyperscalers vulnerable to supply chain bottlenecks and price hikes. When Meta designs its own silicon, it gains control over performance tuning, roadmaps, and, critically, pricing.

The analyst debate over whether in-house silicon will erode Nvidia’s data center dominance highlights this tension. While Nvidia’s high-end chips remain essential for training the *next* breakthrough model, they are overkill for the daily grind of inference. By fielding four generations of custom chips, Meta is signaling that it intends to shift a vast portion of its existing compute workload, the constant running of already-trained models, onto its own efficient, proprietary hardware.

Implications for the Semiconductor Ecosystem

This move forces a restructuring of the entire semiconductor market. For chip designers and analysts, the key question becomes how custom AI chips will affect GPU demand forecasts. If the largest purchasers of AI hardware (Meta, Google, Amazon) dedicate significant capital to in-house silicon, the exponential growth curve for external GPU sales might flatten in specific high-volume segments, particularly inference clusters. That pressure requires Nvidia to focus even harder on staying several generations ahead in the resource-intensive training space.

The Architectural Advantage: Precision for Purpose

The technical details behind these custom ASICs are where the real performance gains are found. An ASIC is like a custom-built calculator designed only to do one type of math very fast; a GPU is a powerful Swiss Army knife capable of handling many types of tasks.

Researchers studying the challenges of AI inference chip design find that the crucial elements for serving LLMs are high memory bandwidth, efficient matrix multiplication units (the core arithmetic of neural network layers), and optimized power delivery. Meta’s chip development targets these precise bottlenecks. Meta can tailor the memory architecture to the layer structure of its Llama models, which can yield far greater performance per watt than a general-purpose chip that must accommodate every potential workload.
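
Memory bandwidth matters so much because of how LLMs generate text: each new token requires reading the model's weights from memory, so at small batch sizes the chip often waits on memory rather than on arithmetic. A rough Python sketch of that ceiling, assuming a dense 8-billion-parameter model and a hypothetical accelerator with 3,000 GB/s of memory bandwidth (neither figure describes Meta's hardware):

```python
def max_decode_tokens_per_sec(num_params: float, bytes_per_param: float,
                              mem_bandwidth_gb_per_s: float) -> float:
    """Memory-bandwidth ceiling on single-stream (batch size 1) decode speed.

    Each generated token requires streaming every weight from memory once, so
    tokens/sec cannot exceed bandwidth / weight bytes. This ignores KV-cache
    traffic, compute limits, and on-chip caching, so real speeds are lower.
    """
    weight_bytes = num_params * bytes_per_param
    return mem_bandwidth_gb_per_s * 1e9 / weight_bytes


if __name__ == "__main__":
    params = 8e9        # assumed: an 8B-parameter dense model
    bandwidth = 3_000   # hypothetical accelerator with 3,000 GB/s of memory bandwidth
    for label, nbytes in (("16-bit weights", 2), ("8-bit weights", 1)):
        print(label, max_decode_tokens_per_sec(params, nbytes, bandwidth))
```

This prints a ceiling of 187.5 tokens per second for 16-bit weights and 375.0 for 8-bit weights: under this approximation, more bandwidth or fewer bytes per weight raises the ceiling proportionally, which is why inference chip designers fixate on the memory system.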

This precision leads to immediate benefits:

- Lower Latency: Faster response times for users interacting with AI features in real-time.

- Reduced Power Consumption: Lower operating costs and a smaller carbon footprint for Meta’s enormous data centers.

- Feature Velocity: Meta can iterate faster on its AI features because its hardware roadmap is closely aligned with its software needs.

Future Implications: Democratization vs. Centralization

Meta’s commitment to custom silicon has profound implications that span both the philosophical debates around AI openness and the practical realities of enterprise deployment.

Fueling the Open Source Engine

Crucially, Meta often pairs its hardware ambitions with its open-source model strategy (Llama), and the two reinforce each other. Custom inference chips let Meta run its powerful Llama models exceptionally cheaply across its global infrastructure. This cost advantage strengthens its position as the primary advocate for open-source LLMs. If Meta can deploy Llama 3 for a fraction of what competitors pay to run closed models, it gives developers a tangible economic incentive to adopt the open ecosystem.

The New Cloud Model

For businesses relying on AI services, this vertical integration trend signals a bifurcation in the market:

- The Hyperscaler Advantage: Tech giants like Meta will use their custom silicon to offer differentiated, potentially lower-cost, or higher-performance AI services to their end users. Lower operating costs become a competitive moat.

- The Enterprise Dilemma: Companies not large enough to design their own silicon will remain reliant on general-purpose hardware (like Nvidia-powered cloud offerings). They must invest heavily in optimizing their models to fit the constraints of that hardware, making efficiency a key focus for consulting work.

The industry is moving toward infrastructure specialization. We are seeing the rise of "AI-native data centers" built not just around powerful processors, but around processors custom-fit for specific AI workloads.

Actionable Insights for Leaders

What should technology leaders, investors, and engineers take away from Meta’s strategic pivot?

For Business Leaders and Investors:

1. Analyze the Total Cost of Ownership (TCO): Do not look only at the purchase price of hardware. For heavy-use generative AI applications, the ongoing cost of inference, driven by energy and operations, can quickly dwarf the initial capital expenditure. Prioritize hardware partnerships that offer demonstrable inference efficiency (for example, TOPS per watt).

2. Watch the Inference Roadmap: The competitive edge is shifting from who has the biggest training cluster to who can serve results most cheaply. Track which vendors release dedicated inference accelerators, as these will define market pricing for serving AI APIs.

3. Embrace Model Pruning and Quantization: Because custom chips reward highly optimized models, businesses should invest in techniques such as quantization and pruning that make deployed models smaller and faster without significant accuracy loss. This preparation makes your models portable to next-generation custom silicon when it becomes available outside the hyperscalers.
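
A minimal pure-Python sketch of the two techniques named in item 3, applied to a toy list of made-up weights: symmetric per-tensor int8 quantization (each weight stored as an 8-bit integer plus one shared scale factor) and magnitude pruning (zeroing the smallest weights). Production toolchains typically add per-channel scales, calibration data, and fine-tuning to recover accuracy; none of that is shown here.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: each w is approximated by scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step; clamping guards against float round-off.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map int8 codes back to approximate float weights."""
    return [scale * v for v in q]


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitudes."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    w = [0.5, -1.27, 0.02, 0.9, -0.3, 0.01]  # toy weights (hypothetical)
    q, scale = quantize_int8(w)
    w_hat = dequantize(q, scale)
    print("int8 codes:", q)
    print("max abs error:", max(abs(a - b) for a, b in zip(w, w_hat)))
    print("50% pruned:", magnitude_prune(w, 0.5))
```

Rounding keeps each reconstructed weight within half a quantization step of the original. Storing 8-bit codes instead of 16-bit floats halves the bytes per weight, which matters most for memory-bound inference; pruned zeros only save compute or memory if the target hardware or runtime actually exploits sparsity.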

For AI Engineers and Architects:

1. Master Inference Deployment Frameworks: Familiarity with frameworks that maximize utilization on specialized hardware (like ONNX Runtime, TensorRT, or platform-specific compilers) becomes vital. Engineers must become experts in pushing models to the metal, regardless of whether that metal is made by Nvidia, Google, or Meta.

2. Design for Efficiency from Day One: When training new models, incorporate inference-efficiency constraints early in the architecture design. A model that performs excellently on a high-end GPU during training but runs inefficiently in deployment will become prohibitively expensive to operate at scale.
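
One way to apply this before training begins is to estimate serving compute from the architecture itself. A widely used rule of thumb says a dense transformer's forward pass costs about 2 FLOPs per parameter per generated token. The Python sketch below applies it to compare two model sizes; the daily token volume, accelerator peak throughput, and utilization are hypothetical placeholders, and the rule ignores attention cost, batching effects, and memory limits.

```python
SECONDS_PER_DAY = 86_400


def daily_inference_flops(num_params: float, tokens_per_day: float) -> float:
    """Rule of thumb for a dense transformer: ~2 FLOPs per parameter per token
    (one multiply and one add per weight), ignoring attention overhead."""
    return 2 * num_params * tokens_per_day


def devices_needed(flops_per_day: float, peak_flops_per_sec: float, utilization: float) -> float:
    """Accelerators that must run around the clock to supply `flops_per_day`."""
    return flops_per_day / (peak_flops_per_sec * utilization * SECONDS_PER_DAY)


if __name__ == "__main__":
    TOKENS_PER_DAY = 1e12   # hypothetical serving volume
    PEAK = 1e15             # hypothetical accelerator: 1 PFLOP/s peak
    UTIL = 0.4              # hypothetical sustained utilization
    for name, params in (("70B model", 70e9), ("8B model", 8e9)):
        n = devices_needed(daily_inference_flops(params, TOKENS_PER_DAY), PEAK, UTIL)
        print(f"{name}: ~{n:,.0f} accelerators")
```

Under this approximation serving cost scales linearly with parameter count, so choosing the 8B architecture over the 70B one shrinks the estimated fleet by a factor of 8.75, a decision made at design time rather than at deployment.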

Conclusion: The Inevitable March of Specialization

Meta’s unveiling of four generations of custom inference silicon is a clear signal that the age of relying solely on generalized, off-the-shelf GPUs for all AI workloads is drawing to a close. The economics of scaling AI to billions of users demand specialization. This strategic vertical integration is about capturing control, cutting costs, and accelerating deployment velocity.

While Nvidia retains a powerful advantage in the frontier training space, the immense and consistent cost burden of inference is too large for hyperscalers to ignore. We are witnessing the inevitable march toward hardware specialization, where the chip that trains the brain is different from the chip that enables the brain to converse efficiently with the world. The winners in the next phase of AI adoption will be those who master this specialized hardware efficiency, making AI pervasive, profitable, and perpetually available for everyone.