Nvidia's $26 Billion Bet: Why Powering Open Source AI Secures the Hardware Future

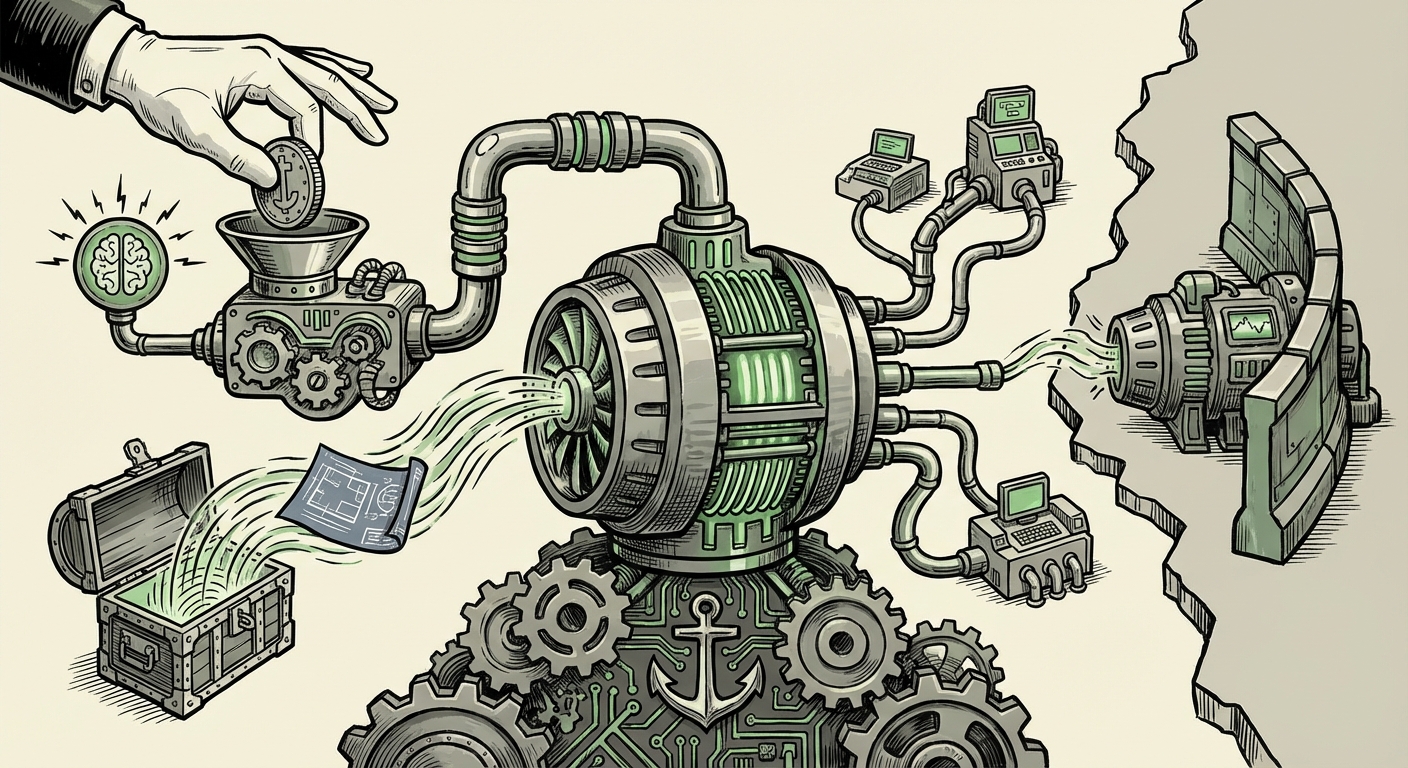

The artificial intelligence landscape is often portrayed as a stark battle between titans: closed, secretive labs like OpenAI and Anthropic versus an open-source community driven by transparency and shared contribution. Nvidia’s reported strategic roadmap complicates that picture. An SEC filing indicating a **$26 billion investment** in open-weight AI models over the next five years suggests that Nvidia is not just observing the open-source trend. It is actively funding it, with a very specific, hardware-centric goal in mind.

This move is less about altruism and more about market control. To understand the profound implications for technology trends, we must analyze this investment through three critical lenses: the momentum of the open-source movement, the geopolitical necessity of countering foreign dominance, and the unbreakable grip of the CUDA software ecosystem.

The Open-Source Inflection Point: Filling the Vacuum Left by Giants

For a long time, the most powerful AI models—the ones setting the performance benchmarks—were kept behind strict APIs. This created a frustrating "AI gap" for developers who needed full access to tweak, optimize, and build specialized applications without relying solely on the mercy of a few mega-corporations. Meta, with its commitment to releasing models like Llama, fundamentally shifted this dynamic.

The release of high-quality open-weight models like Llama was followed by rapid adoption within the developer community. This democratization fueled innovation at the edges, producing highly specific, efficient models that could run on less powerful hardware or serve niche enterprise needs. That community vigor suggests that demand for open-weight models is not a fringe interest but a likely engine of broad AI adoption.

Why Nvidia Cares About "Open" Models

Nvidia’s $26 billion commitment acknowledges this "Llama effect." If companies like OpenAI lock down their models, they implicitly limit the sheer *volume* of specialized training and inference work performed worldwide. By funding and supporting the open ecosystem, Nvidia helps ensure that the next billion AI workloads, including the thousands of startups fine-tuning models for specific tasks, run on its GPUs. It’s a strategy of enabling the competition to flourish, provided they run on your platform.

This approach aligns with ongoing discussions about the future of AI development. While proprietary models might hold the absolute frontier of capability, the real commercial value often lies in the deployment, adaptation, and optimization of strong, accessible models. Nvidia is investing in the *tools* and the *foundational models* that will define that deployment landscape.

The Geopolitical Imperative: Countering the Eastern Advance

A significant, often understated driver behind this strategic pivot is the rising competitive pressure from Chinese technology giants. As geopolitical tensions rise, control over foundational technology becomes a matter of national security and economic dominance. Reports tracking the progress of Chinese LLMs indicate that while the US still holds a lead in cutting-edge model size, the open-source sector in China is rapidly maturing, often benefiting from massive state support.

If the global standards for open-source AI development—the preferred frameworks, the optimized kernels, and the foundational weights—begin to coalesce around platforms favored by Beijing, the Western technological ecosystem faces fragmentation and strategic disadvantage. Nvidia, as the undisputed leader in the hardware underpinning global AI research, cannot afford to have its dominance challenged by hardware built around alternative ecosystems (like those favoring domestic Chinese chips).

Nvidia’s open-source funding acts as a preemptive measure. By injecting significant capital and engineering resources into globally accessible open-weight model development, the company helps anchor the primary development pipeline to Nvidia-centric tools and hardware. This ensures that the next generation of global AI innovation is built on the **CUDA stack**, rather than being forced onto alternative architectures that might become dominant in closed-off geopolitical spheres. It’s a defense of market share disguised as community support.

The Unbreakable Hook: CUDA and the Logic of Lock-In

The most critical aspect of Nvidia's strategy is not the open-weight models themselves, but how these models will be developed and optimized. This brings us directly to the enduring power of the **CUDA ecosystem**.

For years, Nvidia has cultivated CUDA, a parallel computing platform and programming model (exposed mainly through C/C++ language extensions and libraries) that lets developers efficiently use the parallel processing power of its Graphics Processing Units (GPUs). For AI researchers, CUDA is less a choice and more a necessity. It is the standard, optimized, and best-documented path for training and running large neural networks.
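To make the programming model concrete, here is a minimal sketch that emulates CUDA's indexing scheme in plain Python. It is an illustration only: no GPU or CUDA toolkit is involved, and the helper names (`vector_add_kernel`, `launch`, `vector_add`) are hypothetical. The idea it shows is the standard one: work is split into blocks of threads, and each thread derives a global index from its block and thread coordinates and handles one element.

```python
# Pure-Python emulation of CUDA-style indexing; no GPU or CUDA toolkit is used.
# All function names here are hypothetical and chosen for illustration.


def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body run by one emulated 'thread': it handles a single element."""
    # Global index, mirroring blockIdx.x * blockDim.x + threadIdx.x in CUDA.
    i = block_idx * block_dim + thread_idx
    # Bounds guard: the last block may contain more threads than elements left.
    if i < len(out):
        out[i] = a[i] + b[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Invoke the kernel once per (block, thread) pair.

    A real GPU runs these invocations in parallel; this loop runs them serially.
    """
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)


def vector_add(a, b, block_dim=4):
    """Add two equal-length sequences element by element."""
    n = len(a)
    out = [0.0] * n
    # Ceiling division: enough blocks to cover all n elements.
    grid_dim = (n + block_dim - 1) // block_dim
    launch(vector_add_kernel, grid_dim, block_dim, a, b, out)
    return out


if __name__ == "__main__":
    print(vector_add([1.0, 2.0, 3.0, 4.0, 5.0], [10.0, 20.0, 30.0, 40.0, 50.0]))
```

In real CUDA code the per-thread body is compiled for the GPU and the two loops are replaced by a hardware launch, which is where the tuning effort, and therefore the lock-in, accumulates.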

The Trap of Optimization

When Nvidia invests in open models, it is not just funding model weights; it is funding the entire supporting infrastructure: the libraries, the optimized kernels, and the reference implementations required to make those models run fast. Migrating complex AI workloads off CUDA is an expensive, time-consuming undertaking even for large companies, often requiring months of specialized engineering effort.

Therefore, the $26 billion serves a dual function:

- Seed the Ecosystem: Provide attractive, high-quality base models for free use.

- Enforce Dependency: Ensure that the optimizations necessary to make those models competitive are deeply integrated into CUDA, Triton Inference Server, and other Nvidia-maintained software tools.

For a small startup, fine-tuning an open model on an Nvidia GPU running CUDA is the easiest, fastest route to deployment. For a massive enterprise, even if they possess alternative hardware options, the friction cost of rewriting their entire optimization stack to avoid Nvidia becomes astronomical. Nvidia is effectively spending billions to deepen the moat around its trillion-dollar hardware business.
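The friction argument can be framed as a simple break-even calculation. The sketch below is a rough model, not a figure from the filing: the function name `breakeven_months` and every input are hypothetical placeholders. It assumes a one-off migration cost measured in engineer-months and a constant monthly saving from cheaper alternative hardware.

```python
def breakeven_months(migration_engineer_months, cost_per_engineer_month,
                     monthly_compute_savings):
    """Months of operation needed before compute savings repay a one-off migration.

    All inputs are hypothetical placeholders; substitute your own estimates.
    Returns float('inf') when there are no savings to offset the migration.
    """
    if monthly_compute_savings <= 0:
        return float("inf")
    migration_cost = migration_engineer_months * cost_per_engineer_month
    return migration_cost / monthly_compute_savings


if __name__ == "__main__":
    # Illustrative inputs only: 12 engineer-months of porting work at
    # 20,000 per engineer-month, against 2,000 per month in compute savings.
    print(breakeven_months(12, 20_000, 2_000))
```

Under this framing, the deeper a model's optimizations are tied to CUDA-specific kernels, the larger the migration term grows, while the savings term stays fixed. That is the moat expressed as arithmetic.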

Implications for the Future: What This Means for You

This strategic maneuver by Nvidia has concrete ramifications across the technology stack, affecting everyone from the academic researcher to the CFO.

For AI Developers and Researchers

Actionable Insight: Embrace the open models, but learn the Nvidia stack deeply. The availability of well-funded, high-quality open models means you can rapidly prototype without API dependency. However, to achieve the best performance—the speed and cost efficiency that keeps your project viable—you will almost certainly need to master tools like **CUDA, cuDNN, and TensorRT**. The future developer community will be fluent in open models spoken through Nvidia’s proprietary dialect.

For Business Leaders and Investors

Actionable Insight: Recognize that the *model* layer might become commoditized, but the compute layer remains strategically vital. Investments in AI infrastructure must account for the unavoidable cost of high-performance GPUs. While abstract debates rage about "open vs. closed" models, the reality on the ground is that access to cutting-edge *speed* still means access to Nvidia. Any diversification strategy must confront the non-trivial cost of decoupling from CUDA.

For Policy Makers

Actionable Insight: The investment frames a critical policy discussion. While competition is healthy, extreme concentration of compute infrastructure control poses systemic risk. If one company controls the necessary tooling (software) for the dominant hardware (GPUs), antitrust scrutiny may need to broaden beyond simple hardware sales to encompass the software ecosystem that creates the lock-in.

The Open Weight vs. Closed Source Debate Continues

This Nvidia move adds a complex layer to the ongoing philosophical debate. Closed-source advocates argue that massive foundational models require proprietary control for safety and responsible deployment. Open-source proponents argue for transparency to catch biases and foster broader innovation.

Nvidia’s strategy is a pragmatic middle ground: it supports open weights (the actual model files), which satisfies the need for transparency and community fine-tuning, while ensuring that the *execution environment* (the hardware and core libraries) remains centralized under its control. As the ecosystem expands, Nvidia’s revenue grows in proportion.

The future of AI deployment will likely feature a vibrant, heavily subsidized open-source layer built atop a highly efficient, proprietary hardware execution layer. Nvidia is essentially paying to ensure that its GPUs become the default processor of the AI age, not just for running the biggest proprietary models but for running everything else, too.

This $26 billion is not merely an R&D budget; it is a five-year strategic fortification of the most dominant choke point in modern computing. By championing the community’s favorite models, Nvidia guarantees that the community will continue buying its indispensable hardware.