The Synthetic Battlefield: How AI Propaganda Breaches Media Trust and Redefines Information Warfare

The news that *Der Spiegel*, one of Germany's major news outlets, had to retract several images from its Iran coverage because they were likely generated or altered by artificial intelligence is more than an embarrassing editorial slip-up. It is a stark, high-profile signal of a critical technological inflection point, and it forces us to confront the reality that generative AI has moved from a fascinating creative tool to a potent weapon in geopolitical information warfare.

From the perspective of an AI technology analyst, the implications are vast. We are watching synthetic media reach "escape velocity": the point where it is easy to create, often indistinguishable from reality to the naked eye, and integrated into state-sponsored influence operations. The question is no longer *if* AI will be used to deceive, but *how* deeply this deception is already embedded in our information ecosystem.

The Iranian Playbook: State Actors Embrace Hyper-Realistic Deception

The initial report centered on Iranian propaganda images finding their way into major European media. Visual manipulation by state actors is not new; what is radically new is the toolkit.

Before large language models (LLMs) for text and diffusion models such as Midjourney or Stable Diffusion for images, creating convincing fake imagery required significant time, specialized graphics skills, and often expert fabrication labs. Today, a government entity or even a highly motivated non-state actor can generate dozens of hyper-realistic, contextually persuasive images in minutes, simply by typing a descriptive text prompt.

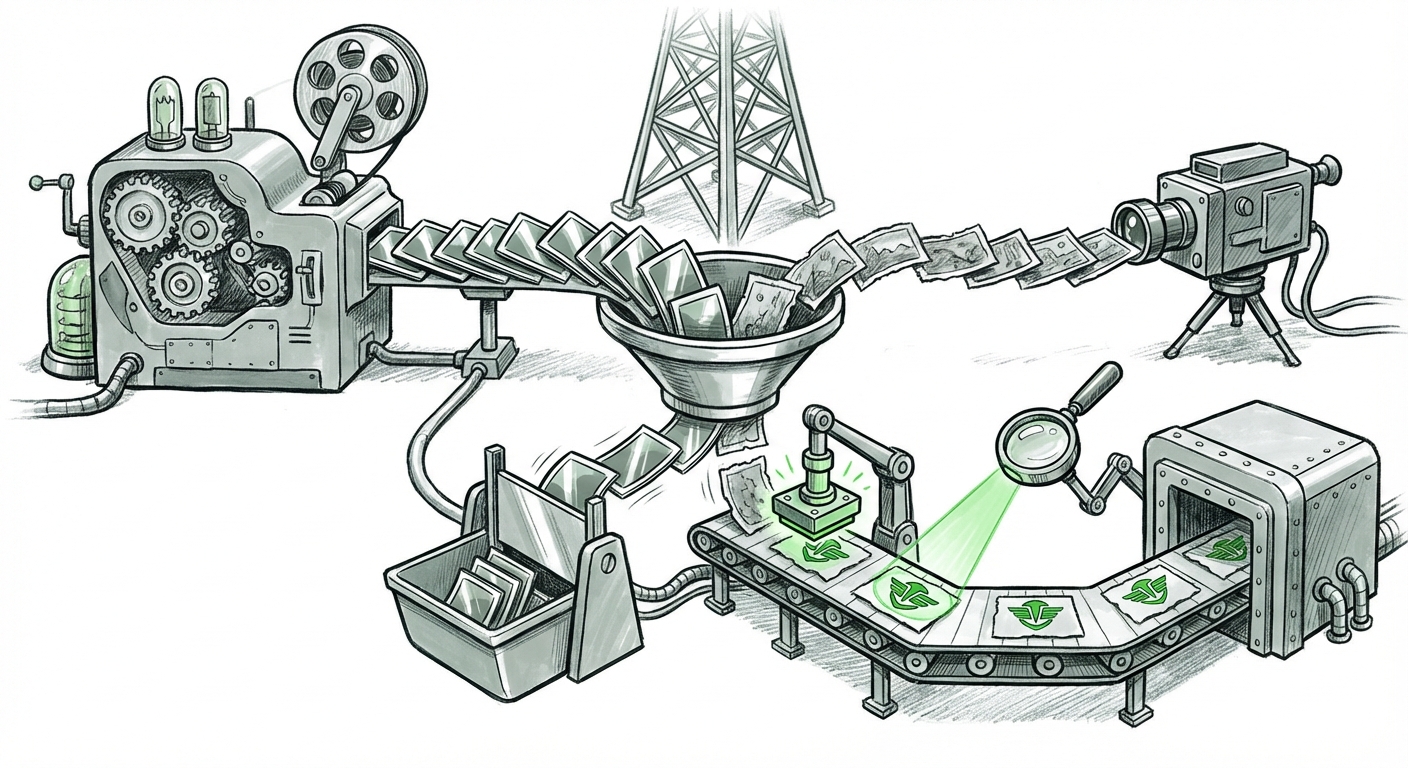

This speed and scale fundamentally change the calculus of information warfare. We must move beyond viewing this as isolated hacking and analyze it through the lens of **industrial-scale disinformation**. Intelligence assessments indicate that adversaries are actively integrating these tools. Research into the **proliferation of AI disinformation in geopolitical conflict**, for example, points to a recognized trend: actors are using synthetic media to amplify domestic narratives or sow discord in adversary nations.

For organizations tracking global security, this confirms that the primary threat isn't just textual misinformation; it’s the creation of a fabricated visual reality that undermines the credibility of legitimate photojournalism. If a viewer can no longer trust an image of an event, the foundational contract between media and the public breaks down.

What This Means for the Future of AI

This trend demands AI systems with stronger safeguards against generating deceptive content on sensitive topics, or systems whose output is inherently traceable. At the same time, advances in multimodal AI (the ability of one system to generate and analyze text, images, and video seamlessly) are making the creation of complex, fabricated narratives frighteningly efficient.

The Detection Arms Race: A War of Algorithms

When the tool for creation becomes universally accessible, the focus must immediately shift to the tool for defense. The *Der Spiegel* incident exposes a critical vulnerability in existing editorial workflows: **detection failure.**

Investigating **AI image detection accuracy and media authentication standards** reveals a technological arms race. Generative models are trained to create images that fool human eyes; detection models are trained to spot the subtle digital artifacts, noise patterns, or inconsistent lighting that reveal an image’s synthetic origin. However, every time a new detection method is published, adversaries adapt their prompts, models, and post-processing to evade it, a classic adversarial cycle.
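To make "subtle digital artifacts" concrete, here is a minimal sketch of one kind of forensic feature: how much of an image's energy sits at high spatial frequencies, computed with NumPy's FFT. It is a toy illustration, not a working detector. The function name and the `cutoff` value are hypothetical choices, and real detectors learn their decision rules from many such signals measured on known-real and known-synthetic images.

```python
import numpy as np


def high_frequency_energy_ratio(image: np.ndarray, cutoff: float = 0.125) -> float:
    """Return the share of spectral energy above `cutoff` (cycles per pixel).

    `image` is a 2-D grayscale array. This is a toy forensic feature, not a
    detector: real systems learn decision rules from many such signals.
    """
    img = np.asarray(image, dtype=np.float64)
    img = img - img.mean()  # drop the DC term so overall brightness is ignored
    power = np.abs(np.fft.fft2(img)) ** 2
    # Frequency of each FFT bin along each axis, in cycles per pixel.
    fy = np.fft.fftfreq(img.shape[0])
    fx = np.fft.fftfreq(img.shape[1])
    radius = np.hypot(fy[:, None], fx[None, :])
    total = power.sum()
    if total == 0.0:
        return 0.0  # a flat image carries no spectral information
    return float(power[radius > cutoff].sum() / total)


# Usage: a smooth blob versus pixel-level noise.
y, x = np.mgrid[0:64, 0:64]
blob = np.exp(-((x - 32) ** 2 + (y - 32) ** 2) / (2 * 8.0**2))
noise = np.random.default_rng(0).standard_normal((64, 64))
print(high_frequency_energy_ratio(blob))   # small: energy sits at low frequencies
print(high_frequency_energy_ratio(noise))  # large: energy spread across the spectrum
```

Blurring, resizing, or recompressing an image shifts statistics like this one, which is exactly why hand-picked forensic features make easy targets in the adversarial cycle described above.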

This constant cat-and-mouse game has serious implications for technological investment. Relying solely on post-hoc forensic detection is a losing strategy in the long run, especially as generative models become more powerful.

Future Implications: From Forensics to Provenance

This vulnerability is rapidly accelerating the industry’s pivot toward **content provenance**. Instead of asking, "Is this fake?" the industry must shift to answering, "Where did this originate, and has it been altered?"

This is where standards such as the one published by the **Coalition for Content Provenance and Authenticity (C2PA)** become vital. Imagine every digital camera, smartphone, and professional software package attaching a cryptographically signed record (C2PA calls it a manifest) to an image the moment it is captured. The record details the capture device, the time, and any subsequent edits, and the signature makes later tampering with the image or its history detectable. If an image lacks this verifiable chain of custody, it should be treated with suspicion, even if it looks real.
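The chain-of-custody idea can be sketched in a few lines. This is a simplified illustration under loud assumptions, not the C2PA format or any C2PA library: real manifests use certificate-based (public-key) signatures and a standardized container, whereas here HMAC with shared keys from Python's standard library stands in for signing. The functions `capture`, `edit`, and `verify`, the record fields, and the example keys are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical, simplified chain-of-custody records. Real C2PA manifests use
# certificate-based (public-key) signatures; HMAC with shared keys stands in
# here so the sketch runs on the standard library alone.


def _digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _canonical(obj: dict) -> bytes:
    return json.dumps(obj, sort_keys=True).encode()


def _sign(key: bytes, record: dict) -> str:
    return hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()


def _append(chain: list, key: bytes, action: str, actor: str,
            time: str, pixels: bytes) -> list:
    prev = _digest(_canonical(chain[-1])) if chain else None  # link to previous entry
    record = {"action": action, "actor": actor, "time": time,
              "content_hash": _digest(pixels), "prev": prev}
    return chain + [{"record": record, "sig": _sign(key, record)}]


def capture(key: bytes, actor: str, time: str, pixels: bytes) -> list:
    return _append([], key, "captured", actor, time, pixels)


def edit(chain: list, key: bytes, actor: str, time: str, new_pixels: bytes) -> list:
    return _append(chain, key, "edited", actor, time, new_pixels)


def verify(chain: list, pixels: bytes, trusted_keys: dict) -> bool:
    if not chain:
        return False  # no provenance at all: treat with suspicion
    prev = None
    for entry in chain:
        record = entry["record"]
        key = trusted_keys.get(record["actor"])
        if key is None or not hmac.compare_digest(entry["sig"], _sign(key, record)):
            return False  # unknown signer or altered record
        if record["prev"] != prev:
            return False  # link broken: an entry was reordered, inserted, or removed
        prev = _digest(_canonical(entry))
    # The newest record must describe exactly the bytes we were handed.
    return chain[-1]["record"]["content_hash"] == _digest(pixels)


keys = {"camera-A": b"camera-secret", "editor-B": b"editor-secret"}
chain = capture(keys["camera-A"], "camera-A", "2024-05-01T10:00:00Z", b"raw sensor bytes")
chain = edit(chain, keys["editor-B"], "editor-B", "2024-05-01T11:30:00Z", b"cropped bytes")
print(verify(chain, b"cropped bytes", keys))     # True
print(verify(chain, b"swapped-in bytes", keys))  # False
```

Note that `verify` returns `False` for an image with no records at all. Provenance data can simply be stripped, so under this design a missing chain is a reason for suspicion rather than proof of fabrication.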

For developers and hardware manufacturers, the future involves embedding this security at the silicon level, making content verification a default feature rather than an optional add-on.

The Institutional Response: Rebuilding Trust Through Policy

The third critical area illuminated by this event is the failure of institutional governance. *Der Spiegel* was forced to clean up its content after the fact, which highlights the urgency of establishing clear, pre-emptive guidelines.

By examining **major news outlet policies regarding AI-generated imagery**, we see a fracturing landscape of journalistic ethics. Some organizations, particularly wire services that prioritize raw, verifiable visual data, have enacted near-total bans on synthetic images for news reporting. Others adopt a tiered approach, allowing AI for illustrative graphics (like concept art or visualizations) but strictly segregating them from factual photojournalism, requiring conspicuous labeling.

This divergence in policy confuses the public and creates openings for bad actors to exploit the gaps between outlets' standards. If one major outlet permits AI-generated illustrations in sensitive political contexts, it lowers the bar for every other organization.

Practical Implications for Business and Society

For businesses that rely on brand trust—which is nearly every business today—the implications are twofold:

- Internal Verification Mandates: Marketing departments, internal communications teams, and corporate social media managers must immediately establish internal policies for AI-generated visual assets. Using an unchecked AI image in a corporate annual report or an emergency press release could lead to catastrophic reputational damage mirroring the *Der Spiegel* scenario.

- Supplier Vetting: Any third-party vendor or stock media service used must guarantee adherence to provenance standards. "Verified authentic" must become a procurement requirement, not a marketing bonus.

For society, the implication is a permanent elevation of digital literacy requirements. We must teach children and train adults to think critically about *all* media, understanding that "seeing is no longer believing." The baseline for skepticism must be permanently raised.

Actionable Insights for Navigating the Synthetic Future

To address the threats exposed by incidents like the one at *Der Spiegel*, stakeholders must adopt a proactive, three-pillar defense strategy:

1. Embrace Provenance Over Detection

Businesses and media houses must champion and integrate verifiable content standards (like C2PA). Focus development resources on tools that *verify* legitimate content pathways rather than purely on detecting deceptive content, an approach that is always one step behind.

2. Develop Robust, Multi-Tiered Editorial Guidelines

News organizations must stop debating *if* they should use AI and start defining *where* and *how*. Clear distinctions must be drawn between AI used for illustration (which must be labeled) and AI used for documentary evidence (which must remain banned until absolute cryptographic security is achieved).
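A tiered guideline like this can be encoded as an automated pre-publication check. The sketch below is hypothetical: the `Asset` fields, the two `Use` categories, and the messages are illustrative names, not any newsroom's actual system. It also assumes, borrowing from the provenance discussion earlier, that documentary images without verified provenance are held for manual checks; that rule is an added assumption, not part of the guideline as stated.

```python
from dataclasses import dataclass
from enum import Enum


class Use(Enum):
    ILLUSTRATION = "illustration"  # concept art, explanatory graphics
    DOCUMENTARY = "documentary"    # photos presented as evidence of events


@dataclass
class Asset:
    asset_id: str
    use: Use
    ai_generated: bool
    labeled_as_ai: bool
    provenance_verified: bool


def review(asset: Asset) -> list[str]:
    """Return the policy problems for an asset; an empty list means it may run."""
    problems = []
    if asset.use is Use.DOCUMENTARY:
        if asset.ai_generated:
            problems.append("AI imagery is banned as documentary evidence")
        if not asset.provenance_verified:
            # Assumption: unverified documentary images go to a human, not to print.
            problems.append("documentary image lacks verified provenance; hold for manual checks")
    elif asset.ai_generated and not asset.labeled_as_ai:
        problems.append("AI illustration must carry a conspicuous label")
    return problems


print(review(Asset("img-001", Use.ILLUSTRATION, True, False, False)))
# ['AI illustration must carry a conspicuous label']
```

Returning a list of problems rather than a single yes/no keeps the check explainable: an editor sees every rule an asset breaks, not just the first.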

3. Invest in AI Literacy Infrastructure

This is a societal defense. Governments, educational institutions, and tech companies need to collaborate on widespread public education that explains generative AI capabilities, how deepfakes are made, and how to look for verification badges. This builds communal resilience against targeted propaganda campaigns.

Conclusion: The Imperative of Authenticity

The infiltration of Iranian propaganda images into a premier German publication is a textbook example of how quickly sophisticated generative AI tools are being weaponized against established trust structures. It confirms that we are no longer in the early adoption phase of synthetic media; we are deep into the phase of strategic application by geopolitical competitors.

The future of AI will not just be about creating smarter tools; it will be about creating trusted tools. The technology giants that win the next decade will be those that not only build the most capable models but also provide the most secure, traceable frameworks to ensure that reality remains distinguishable from highly polished fabrication. For journalists, policymakers, and consumers alike, the mandate is clear: demand transparency, champion authenticity, and prepare for a visual world where everything is suspect until proven otherwise.