The Three-Command Future: How Simplified MLOps is Redefining AI Deployment Speed and Access

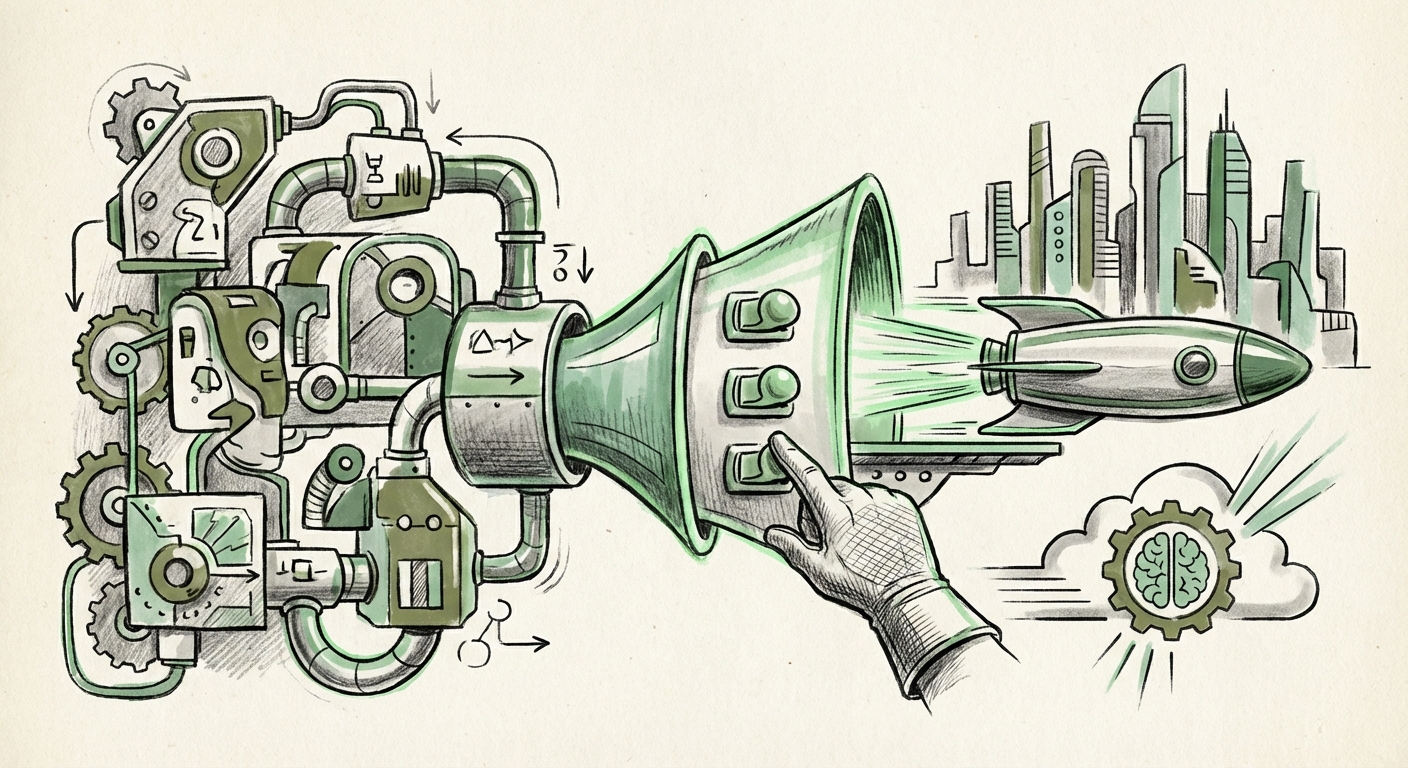

The journey from a promising machine learning model in a researcher's notebook to a robust, scalable application serving millions of users has historically been the AI industry’s biggest bottleneck. This process, known as MLOps, has been notoriously complex, requiring deep expertise in infrastructure, networking, and containerization.

However, recent developments suggest a major shift is underway. The announcement of **Clarifai 12.2**, introducing a seemingly simple "three-command CLI workflow" for deployment—involving initialization, local testing, and production deployment with automated GPU provisioning—is more than just a product update. It is a loud declaration that the era of abstracting away infrastructure complexity in AI is here. This move forces us to re-evaluate the future of AI production, developer accessibility, and enterprise velocity.

1. The Great Abstraction: Industrializing MLOps

For years, MLOps has been visualized as a maturity curve. Early stages involved siloed teams and manual scripts. Progress required adopting complex tools for tracking experiments, managing feature stores, and deploying models via container orchestration systems like Kubernetes.

What Clarifai and similar platforms are aiming for is a shortcut through the painful middle stages. This simplification aligns with conversations surrounding the **"MLOps Maturity Model and Abstraction Layers."** If we consider a four-level maturity scale, where Level 1 is manual and Level 4 is fully automated governance, this move attempts to push users directly to the cusp of Level 3, where pipelines are automated and the underlying infrastructure is managed for them.

For the CTO and AI Architect, this simplification means a drastic reduction in operational overhead. When deployment moves from a multi-day, multi-team effort involving provisioning VMs or tuning Kubernetes manifests to three simple commands, the focus shifts from infrastructure to throughput. The core question is no longer only whether the model is 98% or 99% accurate; it is whether the business can deploy 100 models this quarter instead of 10.

The Infrastructure Leap: Automatic GPU Selection

Perhaps the most telling feature is the automatic GPU selection and infrastructure provisioning. GPUs are the lifeblood of modern deep learning, but they are also expensive and finite resources. Managing this allocation manually is a prime source of friction and cost overruns.

This abstraction pushes the deployment experience closer to the **"Serverless AI Deployment"** model. In traditional serverless compute (like AWS Lambda), you focus on the code; the provider handles the hardware scaling. Here, the platform manages the *hardware specialization* (the GPU type needed for optimal inference) based on the model artifact provided. This can reduce waste from over-provisioned hardware, and it lets even smaller teams harness cutting-edge GPUs without dedicated cloud infrastructure engineers.
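To make the idea concrete, here is a minimal sketch of how automatic GPU selection could work in principle. It is not Clarifai's actual logic, which the announcement does not describe. The catalog, the `select_gpu` and `estimate_memory_gb` names, the 20% overhead factor, and the hourly costs are all assumptions made for illustration; only the memory sizes reflect public GPU specifications.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class GpuOption:
    name: str
    memory_gb: int
    hourly_cost: float  # placeholder figure, not a real price quote


# Illustrative catalog: memory sizes match public specs, costs are invented.
CATALOG = [
    GpuOption("T4", 16, 0.50),
    GpuOption("A10G", 24, 1.00),
    GpuOption("A100-40GB", 40, 3.00),
    GpuOption("A100-80GB", 80, 4.00),
]


def estimate_memory_gb(num_params: int, bytes_per_param: int = 2,
                       overhead: float = 1.2) -> float:
    """Rough inference footprint: weights (fp16 by default) plus assumed overhead."""
    return num_params * bytes_per_param * overhead / 1e9


def select_gpu(num_params: int,
               catalog: List[GpuOption] = CATALOG) -> Optional[GpuOption]:
    """Return the cheapest GPU whose memory fits the estimate, or None."""
    needed = estimate_memory_gb(num_params)
    candidates = [gpu for gpu in catalog if gpu.memory_gb >= needed]
    if not candidates:
        return None  # model would need sharding across several devices
    return min(candidates, key=lambda gpu: gpu.hourly_cost)
```

Under these assumptions, a 7-billion-parameter model needs roughly 16.8 GB, so `select_gpu(7_000_000_000)` skips the 16 GB card and returns the 24 GB option. A real platform would also weigh latency targets, batch size, and regional availability.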

2. The Command Line as the New AI Frontier: Elevating Developer Experience (DX)

For developers, the Command Line Interface (CLI) remains the most direct and powerful way to interact with complex systems. The push to refine the AI CLI workflow highlights a key realization: AI adoption is closely tied to Developer Experience (DX).

A strong DX means reducing cognitive load. If a developer needs to memorize 30 flags, understand Docker volumes, and configure networking rules just to deploy a vision model, adoption stalls. If they can use `init`, `test`, and `deploy`, the friction evaporates. This aligns with industry observations emphasizing simplicity over feature proliferation. Tools that prioritize intuitive SDKs and clean CLI interfaces are winning the productivity battle, as noted in discussions around the **"Impact of Developer Experience (DX) on AI Adoption."**
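As an illustration of how small such a command surface can be, the sketch below defines a three-subcommand CLI with Python's standard `argparse` module. The tool name `mlctl`, the `--gpu` option, and the returned messages are hypothetical; this is not the Clarifai CLI, only a picture of what "three verbs and sensible defaults" looks like in code.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a hypothetical three-verb deployment CLI."""
    parser = argparse.ArgumentParser(prog="mlctl")
    sub = parser.add_subparsers(dest="command", required=True)

    init = sub.add_parser("init", help="scaffold a model project")
    init.add_argument("name", help="project directory name")

    sub.add_parser("test", help="run the model locally before shipping")

    deploy = sub.add_parser("deploy", help="deploy to production")
    # Defaulting to "auto" is the point: the user never has to pick hardware.
    deploy.add_argument("--gpu", default="auto", help="GPU type, or 'auto'")
    return parser


def main(argv=None) -> str:
    """Parse arguments and return a message describing the chosen action."""
    args = build_parser().parse_args(argv)
    if args.command == "init":
        return f"created project {args.name}"
    if args.command == "test":
        return "running local test"
    return f"deploying with gpu={args.gpu}"
```

Calling `main(["deploy"])` takes the default and reports `gpu=auto`, while `main(["deploy", "--gpu", "T4"])` overrides it. The design choice worth noting is that every flag is optional, so the common path needs no configuration at all.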

Implications for the Developer Persona

This trend directly impacts who builds and deploys AI:

- Democratization: Data Scientists, who are often fluent in Python/R but less so in Kubernetes/Terraform, can now own the end-to-end deployment pipeline without constant reliance on DevOps.

- Speed and Iteration: Rapid iteration becomes feasible. A developer can push a new model version, test it locally (a critical safety net), and deploy it for A/B testing within hours, not weeks.

- The Rise of the Full-Stack AI Engineer: The skills gap narrows. The most valuable engineers will be those who can seamlessly combine model development knowledge with rapid deployment proficiency provided by these streamlined tools.

3. The Competitive Landscape: Consolidation vs. Customization

Clarifai’s move occurs within a highly competitive arena dominated by hyperscalers (AWS SageMaker, GCP Vertex AI, Azure ML) and specialized vertical platforms. When a platform aggressively simplifies deployment, it inherently raises questions about the trade-off between convenience and control—the classic **"AI Platform Consolidation and Vendor Lock-in Debate."**

The Trade-Off: Ease vs. Portability

Simplified, specialized CLI workflows are highly efficient *within* that ecosystem. However, relying on automatic GPU selection tied to a specific vendor’s management layer might make migrating that deployment pipeline to a different cloud or an on-premise environment significantly harder down the line.

For enterprise IT strategists, this demands careful consideration. If the business priority is time-to-market (e.g., a startup that needs to ship features quickly), the lock-in risk may be acceptable. If the priority is regulatory compliance that demands multi-cloud redundancy, or independence from a single provider's pricing structure, they may prefer slightly more verbose but more portable deployment methods (like Helm charts for Kubernetes).

The future will likely involve a bifurcation:

- The Acceleration Track: Businesses prioritizing speed will embrace these highly abstracted, three-command platforms, accepting the specialization benefits.

- The Portability Track: Large, risk-averse enterprises will still rely on abstraction layers built atop open standards like ONNX and container technologies, ensuring hardware and cloud independence, even if deployment takes five commands instead of three.

Practical Implications: What Businesses Must Do Now

The shift toward automated, simplified MLOps carries immediate, actionable implications for organizations leveraging AI:

Actionable Insight 1: Audit Your Deployment Lag

Measure the time it takes—from model approval to production service endpoint—within your current MLOps stack. If that time is measured in weeks, investigate platforms offering high levels of abstraction. The cost of delayed deployment (lost revenue, missed market opportunities) often outweighs the cost of adopting a managed platform.
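One way to run this audit is sketched below, assuming you can export the approval and go-live timestamps for each model as ISO 8601 strings. The `deployment_lag_days` helper and the sample records are invented for illustration; substitute data from your own release tracker.

```python
from datetime import datetime
from statistics import median


def deployment_lag_days(records):
    """Return the lag in days between approval and go-live for each record."""
    lags = []
    for approved, live in records:
        delta = datetime.fromisoformat(live) - datetime.fromisoformat(approved)
        lags.append(delta.total_seconds() / 86400)  # 86400 seconds per day
    return lags


# Made-up sample: (model approved, endpoint live)
records = [
    ("2024-01-02T09:00:00", "2024-01-16T09:00:00"),
    ("2024-02-01T09:00:00", "2024-02-22T09:00:00"),
    ("2024-03-05T09:00:00", "2024-03-08T09:00:00"),
]

lags = deployment_lag_days(records)
print(f"median lag: {median(lags):.1f} days, worst: {max(lags):.1f} days")
```

The median is used rather than the mean so that one unusually slow release does not dominate the picture; tracking the worst case alongside it shows how bad the tail is.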

Actionable Insight 2: Reallocate Infrastructure Talent

If your senior DevOps or Cloud Engineers are spending significant time debugging GPU drivers or optimizing cluster autoscaling for ML workloads, their skills are being underutilized. Empower them to focus on core business infrastructure or security, while offloading routine model provisioning to specialized AI deployment tools. This is the practical trade-off at the heart of comparisons between **Serverless AI Deployment vs. Managed Infrastructure** offerings.

Actionable Insight 3: Standardize Artifacts, Decouple Deployment

To mitigate vendor lock-in risk, ensure that the model artifacts themselves (the saved weights and the training metadata) are stored in a standardized, portable format such as ONNX. This way, if you choose a platform like Clarifai today for speed, you retain the *option* to lift and shift the core intelligence later if business conditions change.
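A lightweight way to keep artifacts decoupled from any one platform is to store a small, vendor-neutral manifest next to each exported model file. The sketch below uses only the Python standard library; the `write_manifest` helper and the manifest fields are assumptions for illustration, not a published standard, and the export to ONNX itself would be done separately with your training framework's own tooling.

```python
import hashlib
import json
from pathlib import Path


def write_manifest(artifact_path, model_format: str, metadata: dict) -> Path:
    """Write a JSON manifest describing a model artifact; return its path."""
    artifact = Path(artifact_path)
    # The checksum lets any future platform verify the file was not altered.
    digest = hashlib.sha256(artifact.read_bytes()).hexdigest()
    manifest = {
        "artifact": artifact.name,
        "format": model_format,  # e.g. "onnx"
        "sha256": digest,
        "size_bytes": artifact.stat().st_size,
        "metadata": metadata,  # training info you want to carry along
    }
    manifest_path = artifact.parent / (artifact.name + ".manifest.json")
    manifest_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest_path
```

For example, `write_manifest("model.onnx", "onnx", {"version": "1"})` would produce `model.onnx.manifest.json` in the same directory. Because the manifest is plain JSON, it can travel with the weights to any cloud or on-premise registry.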

The Future Outlook: AI Becomes Plumbing

When a technology becomes ubiquitous, it stops being headline news and starts becoming invisible plumbing. Electricity, networking, and cloud storage are now foundational utilities—we focus on what we *build* with them, not how the electrons move.

The trend exemplified by Clarifai 12.2 suggests that model serving is rapidly joining this category. In the near future, asking an engineer, "How did you deploy that model?" might yield a dull answer, akin to asking, "How did you set up your HTTPS certificate?" The magic will no longer be in the deployment command itself, but in the *intelligence* the model delivers to the end-user application.

The acceleration of MLOps abstraction means that the competitive edge in AI will shift decisively upstream (better data, better model architecture) and downstream (better integration into user workflows). The middle ground—the messy operational gap—is rapidly being paved over by powerful, simple tools. For businesses, this means the barrier to entry for real-world AI application development has never been lower, demanding immediate strategic action to capitalize on deployment velocity.