The Three-Command Revolution: How MLOps Abstraction is Accelerating AI Production

The world of Artificial Intelligence is evolving at a breakneck pace. While breakthroughs in large language models and generative AI capture the headlines, the true engine driving enterprise adoption lies in a less glamorous but arguably more important field: MLOps (Machine Learning Operations). The recent Clarifai 12.2 announcement, which introduces a streamlined three-command CLI workflow for model deployment, is more than a feature update; it signals the next phase in the AI lifecycle, in which MLOps is democratized and operationalized.

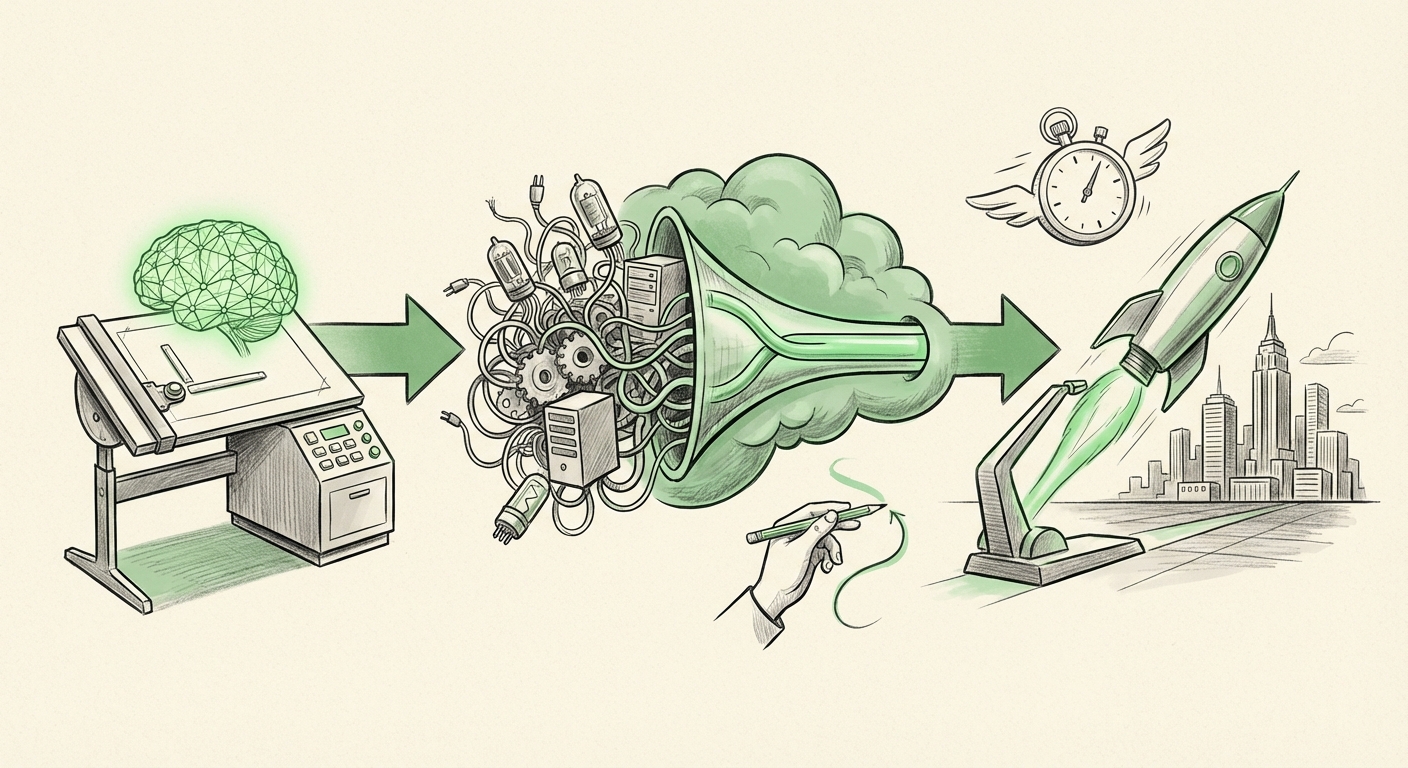

For years, moving a successfully trained AI model from a data scientist’s laptop into a real-world application has been a painful, multi-stage process requiring specialized infrastructure knowledge. Clarifai’s new approach targets this bottleneck head-on, hiding complexity behind simple commands: Initialize, Test Locally, and Deploy. This emphasis on **abstraction**—making GPU management and infrastructure provisioning invisible—is fundamentally changing who can deploy AI and how quickly they can do it.

The Bottleneck: Why Deployment Slows AI Down

Imagine you’ve built the perfect cake recipe (your model). You know exactly what ingredients (data) and oven settings (training parameters) are required. But now you need to bake a million cakes in a massive, industrial kitchen that has dozens of different ovens, some using gas, some electric, some needing special cooling units (GPUs/hardware). In the traditional MLOps landscape, the chef (Data Scientist) must also become a mechanic and an electrician, telling the kitchen exactly which oven to use, how to plug it in, and how to make sure it has enough power.

This analogy highlights the primary roadblock to scaling AI: the **infrastructure gap**. Development environments are optimized for experimentation, but production environments demand reliability, scalability, and efficiency.

MLOps maturity models commonly place the hardest transition between model experimentation and reliable production serving (labeled here as the move from Stage 2 to Stage 3; exact numbering varies by model). This is where infrastructure abstraction matters most. When an ML engineer has to manually configure Kubernetes clusters, select specific NVIDIA drivers, or negotiate cloud resource quotas just to deploy a model, development velocity grinds to a halt. Clarifai’s solution targets this bottleneck by automating the infrastructure plumbing.

The Infrastructure Tax: A Barrier to Entry

For a beginner, or even an experienced developer new to AI infrastructure, terms like "automatic GPU selection" and "infrastructure provisioning" sound complex. In simple terms, they mean the tool works out which available hardware best fits the model, whether it sits in a private data center or runs in the public cloud, and sets it up correctly without the developer needing to learn complex command-line arguments for every server type. This reduces the "infrastructure tax" paid in time, cost, and specialized hiring.
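To make "automatic GPU selection" concrete, here is a minimal Python sketch of one possible selection rule: pick the cheapest option whose memory covers the model's requirement plus some headroom. This is an illustration only, not how Clarifai's CLI works internally. The `GpuOption` type, the `CATALOG` entries, their prices, and the `headroom` factor are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuOption:
    name: str           # hypothetical label, not a real hardware SKU
    memory_gb: int
    hourly_cost: float  # placeholder price, for illustration only


# Hypothetical catalog; a real platform would discover this from its own inventory.
CATALOG = [
    GpuOption("small-gpu", 16, 0.50),
    GpuOption("medium-gpu", 24, 1.00),
    GpuOption("large-gpu", 80, 4.00),
]


def select_gpu(required_memory_gb, catalog=CATALOG, headroom=1.2):
    """Return the cheapest option whose memory covers the requirement plus headroom."""
    needed = required_memory_gb * headroom
    candidates = [gpu for gpu in catalog if gpu.memory_gb >= needed]
    if not candidates:
        raise ValueError(f"no GPU in the catalog offers {needed:.1f} GB")
    return min(candidates, key=lambda gpu: gpu.hourly_cost)


if __name__ == "__main__":
    # A model needing 14 GB (16.8 GB with headroom) does not fit the 16 GB option.
    print(select_gpu(14).name)
```

The developer states one number (the memory the model needs) and the rule handles the rest; a production system would also weigh availability, throughput, and location.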

The Rise of Developer Experience (DX) in AI

The focus on a simplified CLI workflow is a direct acknowledgment that the future of AI deployment belongs to developers. It moves attention from *infrastructure engineering* to *model engineering*, dramatically improving the **Developer Experience (DX)**.

Modern software development thrives on tools that are intuitive, predictable, and integrated (think Git, Docker, or modern CI/CD pipelines). AI development has lagged because deployment required escaping this familiar workflow and diving deep into bespoke cloud consoles or complex configuration files. When deployment feels manual, developers avoid deploying frequently.

Simplifying workflows through a CLI directly boosts productivity. If a developer can deploy a new version of a model with three standard commands, much as they already deploy traditional software, they are far more likely to iterate faster and catch performance regressions sooner. This is crucial because, unlike traditional code, AI models can degrade silently over time due to data drift, a change in the statistical distribution of production inputs relative to the training data; rapid deployment and testing cycles are among the best defenses.
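As a rough illustration of how silent degradation can be surfaced, the sketch below computes a Population Stability Index (PSI) between a reference sample and a live sample of one numeric feature. It is a minimal standard-library version under stated assumptions: equal-width bins taken from the reference range, a small floor to avoid taking the log of zero, and a threshold of 0.2, which is a commonly quoted rule of thumb rather than a universal constant. The function names are illustrative.

```python
import math


def population_stability_index(reference, live, bins=10):
    """PSI between two samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p = proportions(reference)
    live_p = proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))


def drift_detected(reference, live, threshold=0.2):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(reference, live) > threshold


if __name__ == "__main__":
    training = list(range(100))
    shifted = [v + 50 for v in training]
    print(drift_detected(training, training), drift_detected(training, shifted))
```

A check like this only pays off when a flagged model can be retrained and redeployed quickly, which is where a short deployment path matters.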

This mirrors trends seen in infrastructure management tools, where companies like HashiCorp have won market share by abstracting away the complexity of cloud providers into unified, developer-friendly interfaces. AI platforms must follow suit to become indispensable.

Future Implication 1: Hybrid Cloud and Edge AI Thrive

One of the most significant implications of platform tools emphasizing local CLI deployment with automated provisioning is their benefit to hybrid and on-premise AI strategies.

While the major public clouds (AWS, Azure, GCP) offer powerful, proprietary MLOps suites (like SageMaker or Vertex AI), these tend to tie users to that provider’s ecosystem. For many regulated industries (finance, healthcare) or organizations with massive existing data center investments, true cloud-agnostic deployment is a requirement, not a feature. They need the ability to develop locally and deploy seamlessly, whether the target infrastructure is their own server room or a specific, certified cloud region.

When a platform like Clarifai emphasizes the CLI's ability to handle **automatic GPU selection and provisioning**, it strongly suggests portability. The platform is acting as a translator: taking the developer’s simple instruction and translating it into the specific hardware calls needed for diverse environments. This flexibility ensures that companies are not constrained by vendor lock-in and can meet stringent data residency or security mandates.
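The "translator" idea can be sketched in a few lines of Python: a single target-agnostic specification is rendered into a different configuration for each environment. Everything here is hypothetical, including the `DeploymentSpec` fields, the `on_prem` and `cloud` target names, and the shape of the rendered dictionaries; it shows the pattern, not any platform's actual format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentSpec:
    """What the developer states once, independent of where the model runs."""
    model_name: str
    min_gpu_memory_gb: int
    replicas: int = 1


def render_on_prem(spec):
    # Hypothetical on-premise format: a named service with a fixed instance count.
    return {
        "target": "on_prem",
        "service": spec.model_name,
        "gpu_memory_gb": spec.min_gpu_memory_gb,
        "instances": spec.replicas,
    }


def render_cloud(spec, region="unspecified"):
    # Hypothetical cloud format: the same intent, plus a region for data residency.
    return {
        "target": "cloud",
        "region": region,
        "workload": spec.model_name,
        "accelerator": {"min_memory_gb": spec.min_gpu_memory_gb},
        "scale": {"min": spec.replicas},
    }


RENDERERS = {"on_prem": render_on_prem, "cloud": render_cloud}


def translate(spec, target, **options):
    """Turn one spec into the configuration a given target expects."""
    if target not in RENDERERS:
        raise ValueError(f"unknown target: {target!r}")
    return RENDERERS[target](spec, **options)


if __name__ == "__main__":
    spec = DeploymentSpec("fraud-detector", min_gpu_memory_gb=16, replicas=2)
    print(translate(spec, "on_prem"))
    print(translate(spec, "cloud", region="eu-example-1"))
```

Because the spec never mentions a provider, supporting a new environment means adding a renderer rather than changing what developers write.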

This capability is vital for the growth of **Edge AI**—deploying models on devices outside the central data center (e.g., factory floors, retail stores). Edge devices often have highly constrained or heterogeneous hardware. Abstracting this complexity via a simple deployment command ensures that models can be efficiently pushed to remote locations without requiring specialized on-site engineers for every update.

Future Implication 2: The Velocity Metric Defines Success

In the executive suite, the success of AI investments is increasingly tied to speed to value. The key metric is shifting from "Can we build an accurate model?" to "How fast can we get that accurate model into production and start seeing ROI?"

Deployment speed is becoming the defining benchmark for MLOps tooling. If traditional deployment took days or even weeks of coordination between data science, IT operations, and security teams, and a three-command CLI reduces that to minutes, the business impact is profound. This speed allows businesses to react far more quickly to market changes, regulatory shifts, or new data patterns.

This acceleration is not just about saving time; it’s about enabling entirely new business models that require real-time iteration. Rapid deployment means rapid feedback, which leads to safer, more robust, and more profitable AI systems.

Practical Implications for Business and Society

What does this trend toward deployment abstraction mean for organizations and the broader technological landscape?

For Businesses: Shifting IT Focus

IT departments and infrastructure teams can shift their focus away from the repetitive, manual task of configuring individual GPU instances. Instead, they can focus on creating secure, standardized "deployment blueprints" that the CLI can invoke. This moves IT from being a manual gatekeeper to being an enabler of rapid development, fostering better collaboration with data science teams.

For Developers: Broadening the Talent Pool

When deployment becomes easier, the pool of people capable of putting models into production widens significantly. Junior data scientists or analysts who are excellent at modeling but lack deep DevOps knowledge can now contribute directly to deployment. This lowers the hiring barrier for AI implementation and accelerates internal capability building.

For Society: Faster, Safer AI Rollout

While speed can sometimes imply risk, in this context, standardized, repeatable deployment commands actually enhance safety. When deployment is automated and versioned through a CLI, auditing, rollbacks, and testing become simple, codified actions. This methodical approach, applied at high speed, means that potentially harmful or biased models are caught and fixed faster, leading to a more stable integration of AI into critical services.
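The claim that versioned deployments make audits and rollbacks "simple, codified actions" can be illustrated with a small Python sketch. The `DeploymentHistory` class below is hypothetical and keeps everything in memory; a real platform would persist this state and attach identities and timestamps to each entry.

```python
from dataclasses import dataclass, field


@dataclass
class DeploymentHistory:
    """In-memory record of deployed versions plus an append-only audit log."""
    versions: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    @property
    def active(self):
        """The version currently serving, or None if nothing is deployed."""
        return self.versions[-1] if self.versions else None

    def deploy(self, version):
        self.versions.append(version)
        self.audit_log.append(("deploy", version))

    def rollback(self):
        """Retire the active version and return the one that becomes active."""
        if len(self.versions) < 2:
            raise RuntimeError("no earlier version to roll back to")
        retired = self.versions.pop()
        self.audit_log.append(("rollback", retired))
        return self.versions[-1]


if __name__ == "__main__":
    history = DeploymentHistory()
    history.deploy("v1")
    history.deploy("v2")
    history.rollback()
    print(history.active, history.audit_log)
```

Every state change goes through one of two methods and leaves a log entry, so answering "what was serving, and when did it change?" becomes a lookup rather than an investigation.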

Actionable Insights for Adoption

For organizations looking to capitalize on this MLOps evolution, the path forward requires a strategic focus on tooling that prioritizes deployment simplicity:

- Audit Your Deployment Friction: Identify exactly where your models stall between training and serving. If manual resource allocation or environment configuration is the culprit, abstraction tools are your next priority.

- Prioritize DX in Tool Selection: When evaluating new MLOps platforms, judge them not just on their training features, but on the simplicity of their deployment commands. If the deployment workflow involves more than five distinct, manual steps outside a single script/CLI run, it's likely too complex for modern velocity targets.

- Embrace Hybrid Readiness: Assume that not all models will live in one place. Select platforms that explicitly support cloud-agnostic deployment via local CLI tools, ensuring future flexibility regardless of regulatory changes or shifting cloud spend strategies.

Conclusion: The Era of Effortless Production AI

The three-command deployment workflow introduced in Clarifai 12.2 is more than a convenience; it represents a maturity milestone for the AI industry. We are witnessing a major step toward abstracting away the tedious infrastructure management that has long plagued production ML.

If building an accurate model is only 10% of the job and deploying it reliably is the remaining 90%, then collapsing complex provisioning into simple, developer-centric commands makes that 90% achievable with unprecedented speed. The future of AI adoption hinges not on bigger models, but on smarter, faster, and more accessible operational pipelines.