The Interactive AI Revolution: Why In-Chat Data Visualization Is the Next Frontier for LLMs

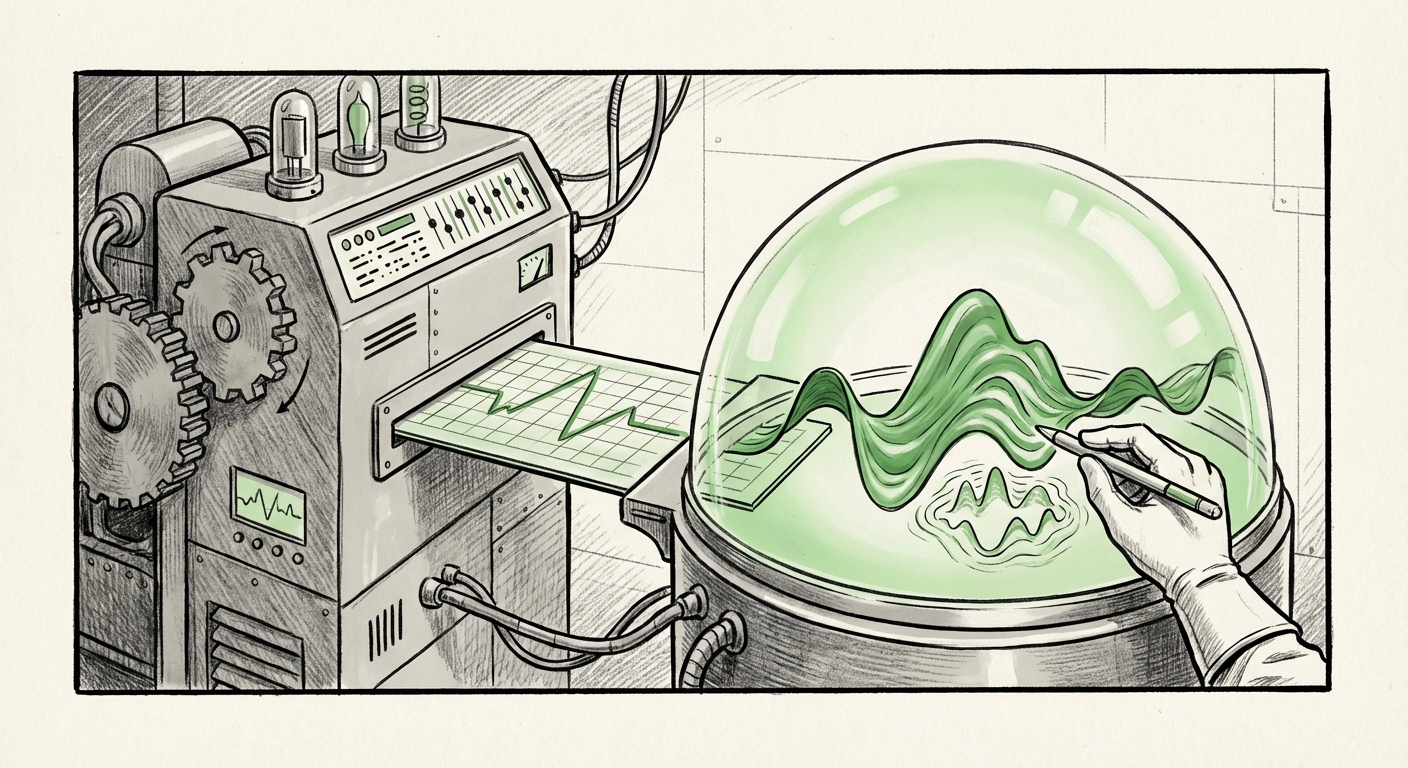

The world of Large Language Models (LLMs) is undergoing a quiet but profound transformation. For years, these powerful tools—like the early versions of ChatGPT or Claude—were essentially digital savants trapped in a text box. They could write poetry, summarize documents, and draft code, but when it came to data, they were merely skilled essayists describing what a chart *should* look like.

That paradigm is cracking. The recent beta launch by Anthropic allowing Claude to generate interactive charts and visualizations directly within the chat interface marks a decisive moment. This isn't just another feature; it signifies the LLM’s evolution from a static summarizer to an active, functional partner in data analysis and exploration.

From Textual Description to Functional Interaction: A Multimodal Shift

The core value proposition of modern LLMs has always been accessibility. They lower the barrier to entry for complex tasks. However, data analysis remained a high-friction area. A user might ask, "Show me the Q3 sales trends versus Q2," and the LLM would respond with perfectly structured text explaining the hypothetical upward slope.

Now, the response is different. The LLM doesn't just *describe* the trend; it renders it. And critically, it’s interactive. This means users can hover over data points, zoom into timelines, or filter segments—all without leaving the conversation flow.

Corroborating the Trend: The Multimodal Arms Race

This move by Anthropic suggests that the industry has recognized the next major battleground: multimodal functionality tied to execution capabilities. This is no longer about image generation; it’s about utility.

Competitors like OpenAI (with ChatGPT) have been developing their data analysis tools along a parallel path. A "Code Interpreter" or "Advanced Data Analysis" feature, in which the AI runs Python code in a sandbox environment to process uploaded data and output results, is the same kind of technological groundwork that a feature like Claude’s depends on. A search such as `"ChatGPT" "data visualization" direct chat` points to the same escalating competition. The goal is clear: the first AI platform to seamlessly embed real-time computation and visual feedback into the dialogue stands to capture a large share of analytical workloads.

This feature parity push means that future LLM evaluations will hinge less on creative writing ability and more on reliable, safe, and interactive functional output.

The Technical Backbone: Code Execution as the Engine of Insight

How does an LLM, which fundamentally processes tokens, suddenly draw a graph? This is where the sophistication of modern LLM architectures, specifically tool-use and agentic frameworks, comes into play. Creating these interactive visualizations requires the model, together with the platform around it, to:

- Understand the user's intent from natural language.

- Translate that intent into structured data processing requests.

- Generate the necessary code (often Python using libraries like Matplotlib or Plotly, or a declarative chart specification such as Vega-Lite) to create the visualization.

- Execute that code safely within a secure sandbox environment.

- Render the resulting dynamic graphic back into the chat interface for interaction.
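The first three steps and the hand-off to the renderer can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how Claude or ChatGPT actually implement the feature: `ChartRequest`, `build_spec`, and the sample sales figures are hypothetical, the model's interpretation of the question is represented by a hand-written request object, and the sandboxed execution step is left out. The output is a Vega-Lite style JSON specification that a chat front end could draw.

```python
import json
from dataclasses import dataclass

# Hypothetical structured request that a model might emit after parsing
# "Show me the Q3 sales trends versus Q2" (steps 1 and 2 of the list).
@dataclass
class ChartRequest:
    metric: str
    group_by: str
    periods: tuple

# Made-up sample rows standing in for the user's uploaded data.
ROWS = [
    {"quarter": "Q2", "month": "Apr", "sales": 120},
    {"quarter": "Q2", "month": "May", "sales": 135},
    {"quarter": "Q2", "month": "Jun", "sales": 150},
    {"quarter": "Q3", "month": "Jul", "sales": 160},
    {"quarter": "Q3", "month": "Aug", "sales": 172},
    {"quarter": "Q3", "month": "Sep", "sales": 181},
]

def build_spec(request, rows):
    """Turn a structured request into a Vega-Lite style chart spec (step 3)."""
    selected = [r for r in rows if r[request.group_by] in request.periods]
    return {
        "$schema": "https://vega.github.io/schema/vega-lite/v5.json",
        "data": {"values": selected},
        # tooltip=True asks the renderer to show values on hover.
        "mark": {"type": "bar", "tooltip": True},
        "encoding": {
            # sort=None (JSON null) keeps the months in data order.
            "x": {"field": "month", "type": "ordinal", "sort": None},
            "y": {"field": request.metric, "type": "quantitative"},
            "color": {"field": request.group_by, "type": "nominal"},
        },
    }

request = ChartRequest(metric="sales", group_by="quarter", periods=("Q2", "Q3"))
spec = build_spec(request, ROWS)
# The front end (step 5) would receive this JSON and draw the chart.
spec_json = json.dumps(spec, indent=2)
```

Emitting a declarative spec rather than drawing pixels is one plausible design choice: the interactivity (hover, zoom, filter) then lives in the renderer, and the model only has to produce valid JSON.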

A query like `LLM "code interpreter" "interactive dashboard" future` suggests that researchers increasingly see this capability as essential. The "Code Interpreter" is no longer just a debugging aid; it is the foundational tool that allows LLMs to interact with the real world of data. Interactive charts are merely the most visible, user-facing application of this underlying code execution engine.

For a user asking an LLM a question, this means the system is effectively running micro-programs on their behalf. This demands robust error handling and model training focused not just on language fluency, but on logical consistency and mathematical precision. The stakes for hallucination rise when the AI is not just writing text, but drawing conclusions based on executable logic.
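One simple guard against a chart that looks plausible but is numerically wrong is to recompute an aggregate from the source rows and compare it with what the generated code plotted. The sketch below assumes the chart data is available as a list of points; `check_chart_totals` and the sample figures are hypothetical and only illustrate the idea.

```python
import math

def check_chart_totals(source_rows, chart_points, key, value, tolerance=1e-9):
    """Compare per-category totals in the chart against the source data.

    Returns a list of human-readable problems; an empty list means the
    chart's numbers agree with an independent recomputation.
    """
    expected = {}
    for row in source_rows:
        expected[row[key]] = expected.get(row[key], 0.0) + row[value]

    problems = []
    plotted = {point[key]: point[value] for point in chart_points}
    for category, total in expected.items():
        if category not in plotted:
            problems.append(f"missing category: {category}")
        elif not math.isclose(plotted[category], total, abs_tol=tolerance):
            problems.append(
                f"{category}: chart shows {plotted[category]}, data sums to {total}"
            )
    for category in plotted:
        if category not in expected:
            problems.append(f"unexpected category: {category}")
    return problems

# Made-up example: the generated chart dropped one row for "West".
source = [
    {"region": "East", "spend": 40.0},
    {"region": "East", "spend": 60.0},
    {"region": "West", "spend": 30.0},
    {"region": "West", "spend": 20.0},
]
chart = [{"region": "East", "spend": 100.0}, {"region": "West", "spend": 30.0}]
problems = check_chart_totals(source, chart, key="region", value="spend")
```

A non-empty `problems` list could be fed back to the model as an error message so it can regenerate the chart instead of showing the user a wrong one.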

Practical Implications: The Democratization of Business Intelligence

Perhaps the most significant long-term implication of in-chat interactive visualization lies in the Business Intelligence (BI) sector. For decades, turning raw data into actionable insights required specialized tools and skilled analysts proficient in platforms like Tableau, Power BI, or Looker.

If a marketing manager can now simply ask Claude, "Compare the regional spending variance for the last two quarters and show me where the anomalies are," and immediately receive a drillable scatter plot, the traditional BI workflow is fundamentally altered.

Our reading of the market, informed by searches like `"AI chatbot" replacing "Tableau" "data exploration"`, suggests that conversational BI is emerging. This doesn't mean BI platforms will vanish, but their role will shift.

Shifting Roles in the Data Pipeline:

- Initial Exploration: The chatbot could handle roughly 80% of preliminary exploration: spotting trends, checking data integrity, and creating first-draft dashboards. This is far faster than the traditional workflow and more accessible to non-technical staff (democratization).

- Governed Reporting: Traditional BI tools will remain essential for highly regulated, structured, large-scale reporting, security governance, and complex data modeling that requires permanent infrastructure.

- The Analyst as Prompt Engineer: Data analysts will spend less time dragging and dropping charts and more time crafting highly precise, complex natural language prompts to extract nuanced insights that simpler questions miss.

For business leaders, this means time to insight drops dramatically. Decisions can be informed by data within minutes of the data becoming available, rather than after hours or days of waiting for analyst reports.

Actionable Insights for Businesses and Developers

For companies looking to leverage this trend, a proactive strategy is necessary:

1. Embrace the Sandbox Mentality (For Developers & IT)

Ensure that any integration with LLMs that involves code execution is done within strictly governed, isolated environments. The security perimeter around the LLM's ability to "run" code is paramount. If your LLM can draw a chart, it means it can execute logic—a capability that must be monitored aggressively.
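As a rough illustration of the isolation principle, the sketch below runs generated Python in a separate process with a time limit. The helper `run_untrusted` is hypothetical, and a child process alone is not a security boundary: a real deployment would add OS-level isolation (containers or similar), a scrubbed environment, a restricted file system, and no network access.

```python
import subprocess
import sys
import tempfile

def run_untrusted(code, timeout_seconds=5):
    """Run model-generated Python in a separate process with a time limit.

    This only limits runtime and isolates interpreter state. It is NOT a
    full sandbox; treat it as the innermost layer of several.
    """
    with tempfile.TemporaryDirectory() as workdir:
        try:
            result = subprocess.run(
                # -I runs Python in isolated mode (ignores the user site
                # directory and PYTHON* environment variables).
                [sys.executable, "-I", "-c", code],
                cwd=workdir,          # scratch directory, deleted afterwards
                capture_output=True,
                text=True,
                timeout=timeout_seconds,
            )
        except subprocess.TimeoutExpired:
            # subprocess.run kills the child before raising this.
            return {"ok": False, "stdout": "", "error": "timed out"}
    return {
        "ok": result.returncode == 0,
        "stdout": result.stdout,
        "error": result.stderr,
    }

good = run_untrusted("print(sum([120, 135, 150]))")
bad = run_untrusted("while True: pass", timeout_seconds=1)
```

Returning a structured result instead of raising makes it easy to log every execution, which supports the monitoring that this kind of capability calls for.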

2. Retrain for Conversational Data Mastery (For Business Users)

Do not assume users will instantly know how to query the AI effectively. Invest in training focused on how to ask better data questions. Teaching users to think sequentially—"First, show me the total," then, "Now, segment that total by region," and finally, "Compare the highest segment against the lowest using a bar chart"—unlocks the model's full potential.
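Each of those three questions corresponds to one small computation, which is why asking them in order works well. The sketch below mirrors the sequence on made-up sales records; the data and the `text_bar` helper are illustrative only, and a text bar stands in for the chart a model would render.

```python
from collections import defaultdict

# Made-up sales records used only for illustration.
SALES = [
    {"region": "North", "amount": 200},
    {"region": "South", "amount": 150},
    {"region": "North", "amount": 100},
    {"region": "West", "amount": 80},
    {"region": "South", "amount": 70},
]

# "First, show me the total."
total = sum(row["amount"] for row in SALES)

# "Now, segment that total by region."
by_region = defaultdict(int)
for row in SALES:
    by_region[row["region"]] += row["amount"]

# "Compare the highest segment against the lowest using a bar chart."
highest = max(by_region, key=by_region.get)
lowest = min(by_region, key=by_region.get)

def text_bar(label, value, scale=10):
    """Render one bar as text, one '#' per `scale` units."""
    return f"{label:<6}{'#' * (value // scale)} {value}"

bars = [text_bar(highest, by_region[highest]), text_bar(lowest, by_region[lowest])]
print("\n".join(bars))
```

Each step reuses the result of the previous one, so a user who checks the total first has a reference point for judging whether the later segments add up.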

3. Evaluate Toolchain Integration (For Product Managers)

Consider how soon your existing proprietary data sources can be fed securely into these conversational interfaces. The true power comes when the AI isn't just visualizing sample data, but your live internal databases. This requires secure API access to those sources and a controlled way to inject the retrieved data into the model's context.
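Feeding internal data into a conversation can be sketched as "query first, then place the result in the prompt". The example below is a minimal illustration, assuming a SQL source: the `spend` table, its columns, and `build_grounded_prompt` are hypothetical, and an in-memory SQLite database with made-up rows stands in for a live system.

```python
import sqlite3

def build_grounded_prompt(conn, question, quarter):
    """Fetch a small aggregate from the database and embed it in a prompt.

    Only the aggregated rows are passed to the model, not the raw table,
    and the query uses a bound parameter rather than string formatting.
    """
    rows = conn.execute(
        "SELECT region, SUM(amount) FROM spend WHERE quarter = ? "
        "GROUP BY region ORDER BY region",
        (quarter,),
    ).fetchall()
    context = "\n".join(f"{region}: {total}" for region, total in rows)
    return (
        f"Data for {quarter} (region: total spend):\n{context}\n\n"
        f"Question: {question}"
    )

# An in-memory database with made-up rows stands in for a live source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE spend (region TEXT, quarter TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO spend VALUES (?, ?, ?)",
    [("East", "Q3", 40), ("East", "Q3", 60), ("West", "Q3", 50), ("West", "Q2", 10)],
)
prompt = build_grounded_prompt(conn, "Which region spent more?", "Q3")
```

Keeping the query in application code, rather than letting the model write arbitrary SQL against production, is one conservative way to decide exactly which data leaves the database.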

The Future is Visually Active

The introduction of interactive visualizations in the chat thread is more than a novelty; it is a necessary evolution. LLMs must move from being repositories of knowledge to being engines of *actionable discovery*. When users can manipulate data visuals simply by typing follow-up questions, the "black box" of data analysis begins to open up, inviting participation from everyone in the organization.

This continuous loop—ask, visualize, interact, refine the ask—creates an analytical fluency previously unavailable. We are witnessing the foundational layer of the next generation of analytical software being built directly into the conversational interface, paving the way for true, ubiquitous AI assistance.