The Era of Interactive Intelligence: Why Claude's Chat Visualizations Signal the Next AI Leap

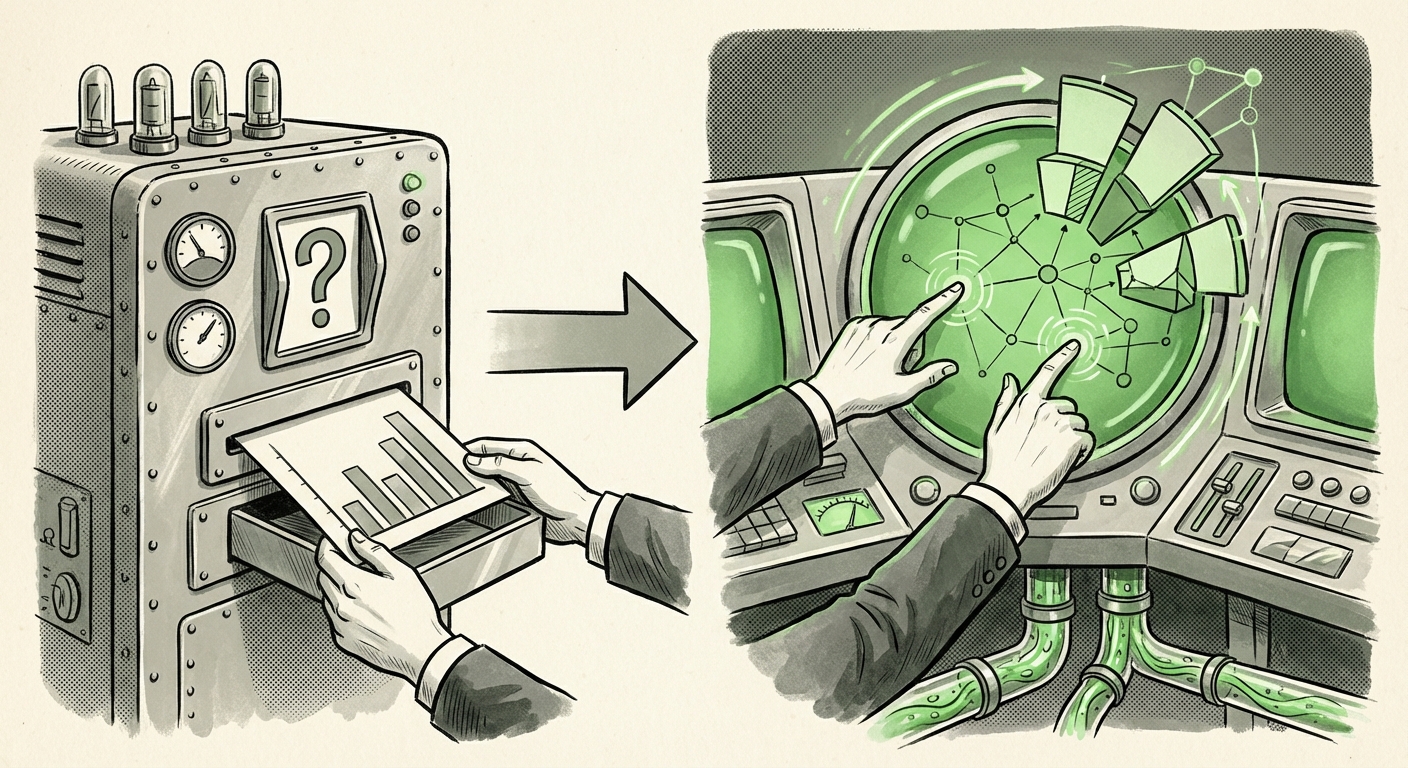

The evolution of Large Language Models (LLMs) has followed a predictable, yet exhilarating, trajectory: from simple text prediction to complex reasoning, and now, toward embedded, functional utility. The recent announcement that Anthropic’s Claude is rolling out beta features allowing users to generate interactive charts and visualizations directly within the chat interface is not merely an aesthetic upgrade; it is a critical inflection point signaling the transition from conversational AI to operational AI.

For analysts, developers, and business leaders alike, this signals that the days of requesting a chart, receiving a static image, and then asking the AI to modify the code *outside* the main conversation are rapidly fading. We are entering the age of integrated, dynamic intelligence where the LLM acts as an analyst, coder, and presenter all in one seamless session.

The Shift from Text Output to Functional Output

For years, the power of LLMs was constrained by their output format. You could ask an AI to analyze sales figures, and it would generate perfectly written paragraphs explaining the trends. If you needed to see those trends visually, you were tethered to a separate process: either downloading data to a spreadsheet or waiting for the AI to render a static image (a PNG or JPEG). This created friction—a speed bump in the data exploration workflow.

Claude’s new capability removes this barrier. An interactive chart implies more than image generation: the model is most likely emitting executable code or a declarative chart specification (for example, JavaScript components, a Vega-Lite JSON spec, or a Plotly figure) that runs within a secure, sandboxed environment in the chat window. This allows users to:

- Drill Down: Click on a segment of a bar chart to see underlying details.

- Filter Data: Instantly narrow the view by date range or category without re-prompting the AI.

- Test Hypotheses: Ask follow-up questions immediately based on the visual evidence presented, shortening the "think-and-verify" cycle from minutes to seconds.
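To make the idea concrete, here is a minimal sketch of what an interactive chart specification could look like. It assumes the chart is expressed as a Vega-Lite v5 spec assembled in Python; Anthropic has not published which library Claude uses, and the function name `build_interactive_bar_spec`, the field names, and the sample rows are all hypothetical.

```python
import json


def build_interactive_bar_spec(rows, categories):
    """Return a Vega-Lite v5 spec (as a dict) for a filterable bar chart.

    `rows` is a list of {"region", "category", "sales"} records and
    `categories` lists the values offered in the dropdown filter.
    All field names here are illustrative assumptions.
    """
    return {
        "$schema": "https://vega.github.io/schema/vega-lite/v5.json",
        "data": {"values": rows},
        # A parameter bound to a dropdown: changing it re-filters the chart
        # in the browser, with no new prompt to the model.
        "params": [
            {
                "name": "selected_category",
                "value": categories[0],
                "bind": {"input": "select", "options": categories},
            }
        ],
        "transform": [{"filter": "datum.category == selected_category"}],
        "mark": "bar",
        "encoding": {
            "x": {"field": "region", "type": "nominal"},
            "y": {"field": "sales", "type": "quantitative", "aggregate": "sum"},
            # Tooltips give a lightweight form of drill-down on hover.
            "tooltip": [
                {"field": "region", "type": "nominal"},
                {"field": "sales", "type": "quantitative", "aggregate": "sum"},
            ],
        },
    }


# Hypothetical sample data, for illustration only.
sample_rows = [
    {"region": "North", "category": "Hardware", "sales": 120},
    {"region": "South", "category": "Hardware", "sales": 95},
    {"region": "North", "category": "Software", "sales": 210},
]
spec = build_interactive_bar_spec(sample_rows, ["Hardware", "Software"])
spec_json = json.dumps(spec, indent=2)  # JSON a Vega-Lite renderer could consume
```

The point of the declarative form is that filtering happens client-side: the model produces the spec once, and the dropdown re-evaluates the `filter` transform without another round trip.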

This rapid feedback loop is precisely what defines powerful data analysis. Embedding visualization in the conversation changes the data analysis workflow most for UX designers and business analysts, who move from being data *requestors* to true data *explorers* within the conversational space.

The Competitive Arena: Corroborating the Trend

Anthropic does not innovate in a vacuum. A feature as significant as integrated interactivity confirms that the broader industry is converging on this capability. If one major player moves toward functional output, others are invariably accelerating their own timelines.

The Race for Advanced Data Handling

The introduction of interactive charting by Claude directly challenges capabilities that competitors have already demonstrated. OpenAI has long offered Advanced Data Analysis (formerly Code Interpreter), which lets GPT models execute Python code to manipulate data, often producing visualizations along the way. Claude’s move appears to turn this from a code-execution tool into a native chat feature, potentially requiring less explicit instruction from the user.

Similarly, Google’s investment in Gemini shows a clear path toward deep data integration with platforms like Google Sheets and Drive. The goal for all major LLMs is to become the primary interface for all digital work, and you cannot manage enterprise data effectively without robust, dynamic visualization tools baked in. This competitive pressure makes it likely that **LLM multi-modal data visualization beta features** will become standard across the board very quickly.

The Technological Underpinnings: Beyond Static Images

What does this mean for the engineers building these systems? It signifies a maturation beyond simply generating pixel data.

Generating a static chart is relatively straightforward: the model outputs descriptive text, and a separate component renders that description into an image file. Generating an *interactive* chart is entirely different. It demands:

- Accurate Data Structuring: The model must interpret the raw data correctly and translate it into a structured format recognizable by visualization libraries (like JSON for D3.js, Vega-Lite, or Plotly).

- Secure Sandboxing: The generated code must be executed in a secure environment (a sandbox) so that any script runs without compromising the host system or the user’s private environment.

- Seamless Integration: The resulting interactive component must render instantly and reliably within the chat window, retaining the conversational context.
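The first two requirements above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of Claude's actual architecture: it assumes raw data arrives as CSV text and that the host page isolates generated markup in an HTML iframe using the standard `sandbox` attribute. The helper names `csv_to_records` and `wrap_in_sandbox` are hypothetical.

```python
import csv
import html
import io


def csv_to_records(csv_text, numeric_fields):
    """Parse CSV text into a list of dicts, converting the named fields to float."""
    records = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        record = dict(row)
        for field in numeric_fields:
            record[field] = float(record[field])
        records.append(record)
    return records


def wrap_in_sandbox(chart_html):
    """Embed generated markup in an iframe that may run scripts but little else.

    `sandbox="allow-scripts"` without `allow-same-origin` gives the frame an
    opaque origin, so its scripts cannot read the host page's cookies or DOM.
    """
    # Escape the markup so it is safe to place inside the srcdoc attribute.
    escaped = html.escape(chart_html, quote=True)
    return f'<iframe sandbox="allow-scripts" srcdoc="{escaped}"></iframe>'


# Hypothetical input, for illustration only.
records = csv_to_records("region,sales\nNorth,120\nSouth,95\n", ["sales"])
frame = wrap_in_sandbox(
    "<div id='chart'></div><script>/* generated chart code */</script>"
)
```

A production system would need more than this (content security policies, resource limits, and validation of the generated code itself), but the sketch shows why structured records and an isolated rendering surface are separate concerns.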

This move validates the architectural shift occurring across the AI industry toward dynamic output generation. If an AI can safely and reliably build a functional interface element on the fly, it proves its capability to act as a complex agent, not just a sophisticated text predictor. This technology is the bedrock for future AI agents that manage complex tasks beyond simple Q&A.

Future Implications: From Dashboard Viewer to Data Collaborator

The trajectory set by Claude’s interactive charts points toward a future where the AI interface is indistinguishable from the operational tool itself. This has profound implications for how we work, learn, and strategize.

For Business Analysts and Strategists

Imagine a Chief Financial Officer (CFO) asking Claude, "Show me Q3 revenue variance by region, and let me filter out the top two outliers." In the past, this required the CFO to export data, open a BI tool, build the chart, and then perhaps summarize the findings back into the chat. Now, the entire process can happen in one place, which accelerates strategic decision-making by removing the analytical bottleneck. For **Venture Capitalists and Long-Term Strategists**, this suggests that the true ROI of LLMs lies in reducing the time between insight generation and tactical action.
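The calculation behind the CFO's request is simple enough to sketch. The example below assumes "variance" means actual revenue minus budgeted revenue and that "outliers" are the regions with the largest absolute variance; both readings are assumptions, as are the function names and every figure shown.

```python
def variance_by_region(actual, budget):
    """Return {region: actual - budget} for regions present in both inputs."""
    return {
        region: actual[region] - budget[region]
        for region in actual
        if region in budget
    }


def drop_top_outliers(variances, count=2):
    """Remove the `count` regions with the largest absolute variance."""
    ranked = sorted(variances, key=lambda region: abs(variances[region]), reverse=True)
    excluded = set(ranked[:count])
    return {
        region: value
        for region, value in variances.items()
        if region not in excluded
    }


# Hypothetical Q3 figures in thousands of dollars, for illustration only.
actual = {"NA": 520, "EMEA": 410, "APAC": 300, "LATAM": 150}
budget = {"NA": 500, "EMEA": 480, "APAC": 290, "LATAM": 155}

variances = variance_by_region(actual, budget)
filtered = drop_top_outliers(variances)
```

With these sample numbers, EMEA and NA carry the largest absolute variances, so the filtered view keeps only APAC and LATAM. In an interactive chart, the same exclusion would be a toggle rather than a new request.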

The Democratization of Data Literacy

Perhaps the most significant societal implication is the democratization of data literacy. Many people are intimidated by complex BI software or statistical packages. Conversational interfaces lower this barrier to entry dramatically. If you can ask questions in plain English—"Why did marketing spend decrease last month?"—and receive an immediate, interactive chart that lets you explore the answer visually, data becomes accessible to everyone, not just specialized analysts.

The Rise of Operational AI Agents

This capability is a necessary component for true AI agents. An effective agent must be able to perceive an outcome (via data), reason about it, and present the findings in a consumable format that allows the user to issue the next command. Interactive visualization is the “seeing” part of this loop. As these models become better at connecting their outputs to external systems (e.g., updating a database or initiating an email), these visualization tools will evolve into **real-time operational dashboards** managed entirely through conversation.

Practical Insights: What Organizations Must Do Now

For organizations looking to capitalize on this leap in LLM functionality, attention must pivot from simple adoption to integration strategy.

- Audit Data Security and Sandboxing: If your AI model is executing code to generate interactive elements, you must thoroughly vet its security architecture. Ensure that any environment generating live code is rigorously sandboxed to prevent data exfiltration or security vulnerabilities.

- Prioritize Data Preparation: The quality of the visualization is entirely dependent on the quality of the input data. Invest now in cleaning, structuring, and cataloging internal data sources so they are easily accessible and understandable by the LLM, whether through direct uploads or API connections.

- Redefine Workflow Training: Stop training employees on how to *use* a dashboard tool. Start training them on how to *prompt* an analytical partner. The skill shifts from tool manipulation to precise linguistic instruction and hypothesis formulation.

- Demand Interactivity: When evaluating LLM providers moving forward, treat the ability to render dynamic, interactive outputs—not just static images—as a non-negotiable feature for any role involving data analysis or reporting.

Conclusion: The Interface Becomes the Insight

Anthropic's commitment to interactive visualization within Claude underscores a universal truth in technology: utility follows integration. When complex functions become seamlessly embedded into the most intuitive interface—the chat window—they cease to be specialized tools and become universal capabilities. We are witnessing the transition of LLMs from information retrieval systems to genuine partners in data exploration and analysis.

The future of AI productivity will be defined by how quickly we can move from asking, "What happened?" to instantly exploring, "What if?" The next generation of AI interfaces won't just answer questions; they will invite collaboration through dynamic, self-generated visual interfaces, fundamentally changing what it means to analyze data in the digital age.