The Great AI Divide: Why Grok's Reliability is Challenging the Reign of Frontier LLMs

The recent performance report comparing xAI's Grok 4.20 against industry titans like Google's Gemini and OpenAI's presumed GPT-5.4 has sent a clear signal across the technology sector: raw capability is no longer the only metric that matters. While Grok 4.20 reportedly trails significantly in traditional, generalized benchmarks, it simultaneously set a new industry low for hallucination rates. This development isn't just interesting; it signifies a fundamental bifurcation in the direction of Large Language Model (LLM) development and deployment.

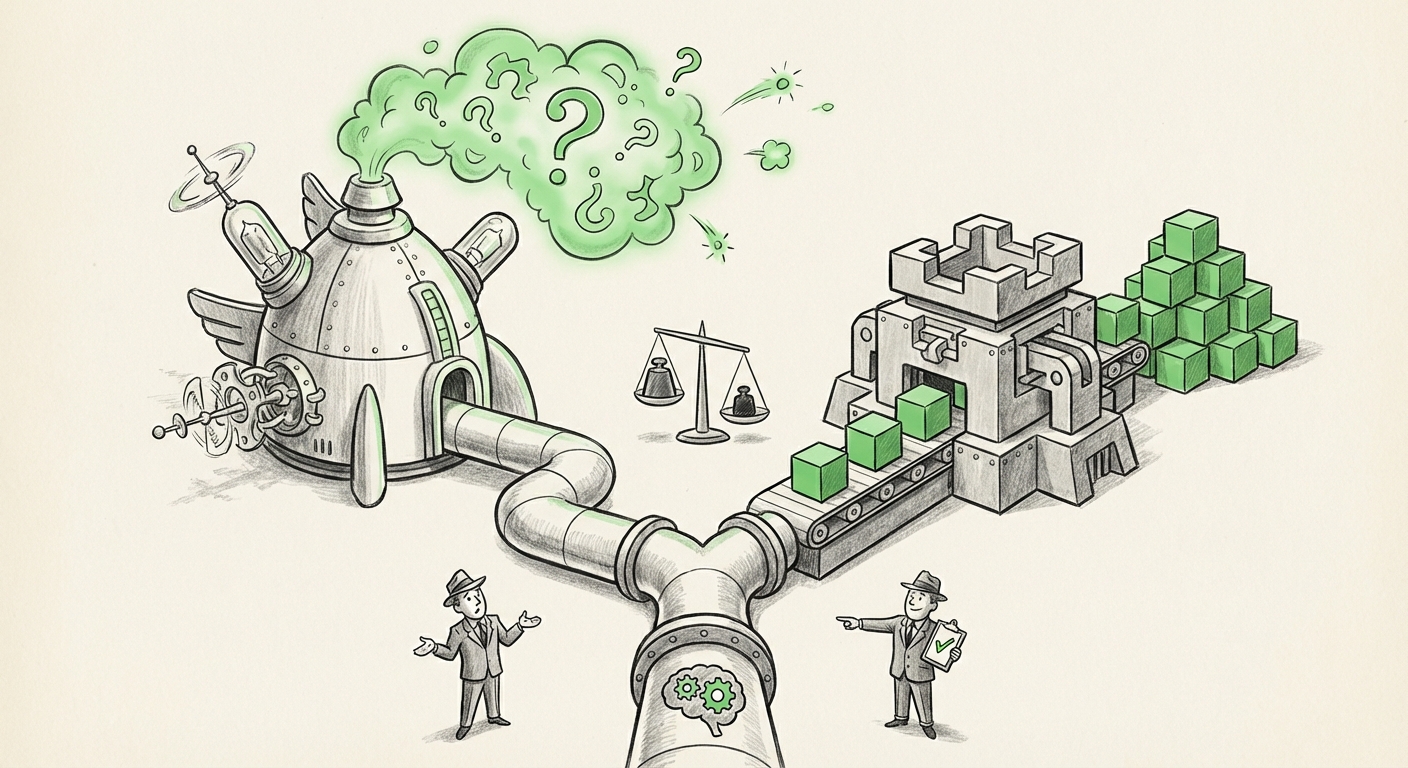

For years, the narrative has been a horsepower race—who can build the biggest brain with the most complex reasoning skills? Now, the focus is shifting. We are entering the era of AI specialization, where developers and businesses must choose between peak intellectual performance and absolute, reliable accuracy, often dictated by the cost of operation.

The Benchmark Battle: Capability vs. Truthfulness

When we talk about LLM benchmarks (like MMLU or general reasoning tests), we are measuring complexity, creativity, and the breadth of knowledge an AI can handle. Models like GPT-5.4 and Gemini compete fiercely here, pushing the boundaries of what artificial intelligence can deduce and generate. These frontier models are the research marvels, capable of tackling novel scientific problems or complex strategic planning.

However, the moment an AI moves from a research sandbox into a critical business pipeline—such as medical transcription, legal contract drafting, or financial reporting—its tendency to "hallucinate" (generate confident, yet entirely false, information) becomes a massive liability. This is where Grok 4.20 is making its calculated strike.

The fact that Grok reportedly achieved a record-low hallucination rate, even while lagging on standardized tests, forces us to ask: what are we actually benchmarking for? A model that produces brilliant, creative answers on the rare queries that call for them, but fabricates confident falsehoods on routine factual ones, loses most of its value in high-stakes environments. Conversely, an AI that is 99% accurate but somewhat weaker at creative writing is vastly more valuable for tasks requiring factual integrity.
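One way to make this comparison concrete is to price in the cost of errors. The sketch below is illustrative only: the function name, the per-query prices, the error rates, and the cost of a single bad answer are all hypothetical numbers, not measurements of any real model.

```python
def expected_cost_per_query(price: float, error_rate: float, error_cost: float) -> float:
    """API price plus the average cost of handling a fabricated answer."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return price + error_rate * error_cost


# Hypothetical inputs: a fabricated answer costs $50 to catch and correct.
ERROR_COST = 50.0

# Assumed "capable but less reliable" model: pricier, 5% fabricated answers.
capable = expected_cost_per_query(price=0.010, error_rate=0.05, error_cost=ERROR_COST)

# Assumed "reliable" model: cheaper, 1% fabricated answers.
reliable = expected_cost_per_query(price=0.005, error_rate=0.01, error_cost=ERROR_COST)

print(f"capable:  ${capable:.3f} per query")
print(f"reliable: ${reliable:.3f} per query")
```

Under these assumed numbers the error term dominates the API price, which is why the hallucination rate, rather than the benchmark score, drives the outcome in high-stakes pipelines.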

The Growing Demand for Trustworthy AI

The industry is recognizing that reliability is a distinct engineering discipline. We are moving past the "black box" phase where we simply hoped the large models were truthful. The demand for auditable, fact-grounded systems is leading to intense research into controlling generative outputs. We can see this trend reflected in the push for better testing frameworks, including dedicated **LLM hallucination-rate benchmarks**. These new standards prioritize factual recall and citation verification over mere fluency.

For developers, this means that simply applying the latest flagship model via API might be riskier and more expensive than deploying a carefully constrained, fact-focused model. The need for **trustworthy AI** is creating a market segment ready for tools that prioritize verifiable accuracy over speculative genius.

The Economics of Inference: Cost vs. Capacity

The second critical pillar supporting Grok’s strategy is efficiency—being "cheap and fast." Running the largest frontier models is incredibly resource-intensive, requiring vast amounts of specialized hardware (GPUs) for every single query (inference). This translates directly into high API costs for users.

As businesses look to integrate AI across thousands of daily customer interactions or millions of internal reports, the cost curve of utilizing the most advanced models becomes prohibitive. This economic reality drives the second major trend we are seeing:

- The Frontier Model Tier: Used for R&D, novel problem-solving, and high-level strategy where the cost is justified by the uniqueness of the output (e.g., discovering a new drug compound).

- The Efficiency Model Tier: Used for high-volume, repetitive, yet critical tasks like data extraction, routine customer support, or initial document review, where cost-per-query is the dominant factor.

The **trade-off between LLM performance and inference cost** is not a niche concern; it is a central business challenge. If Grok 4.20 can handle 80% of a company’s workload (the high-volume, fact-checking tasks) at half the cost and with fewer errors than the frontier models, the business case for adopting the slower, pricier giants shrinks dramatically for those specific use cases.
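The arithmetic behind that scenario can be sketched in a few lines. Only the 80% share and the "half the cost" ratio come from the scenario above; the monthly volume and the per-query price are placeholder values chosen for illustration, not published pricing.

```python
def blended_cost(queries: int, frontier_price: float,
                 efficiency_price: float, efficiency_share: float) -> float:
    """Total spend when `efficiency_share` of queries go to the cheaper model."""
    if not 0.0 <= efficiency_share <= 1.0:
        raise ValueError("efficiency_share must be between 0 and 1")
    efficient_queries = queries * efficiency_share
    frontier_queries = queries - efficient_queries
    return efficient_queries * efficiency_price + frontier_queries * frontier_price


# Hypothetical inputs: 1,000,000 queries per month at $0.010 per frontier query.
QUERIES = 1_000_000
FRONTIER_PRICE = 0.010
EFFICIENCY_PRICE = FRONTIER_PRICE / 2  # "half the cost" from the scenario

all_frontier = blended_cost(QUERIES, FRONTIER_PRICE, EFFICIENCY_PRICE, 0.0)
mixed = blended_cost(QUERIES, FRONTIER_PRICE, EFFICIENCY_PRICE, 0.8)
savings = 1 - mixed / all_frontier

print(f"all frontier: ${all_frontier:,.2f}")
print(f"80/20 split:  ${mixed:,.2f}")
print(f"savings:      {savings:.0%}")
```

With these inputs, moving 80% of traffic to a model at half the price cuts total spend by 40%, regardless of the absolute price chosen.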

xAI’s Strategic Positioning: The Truth-Seeker Niche

To fully understand Grok’s development philosophy, we must look at the developer ecosystem and mission behind it. xAI, under Elon Musk, has consistently framed its purpose around achieving Artificial General Intelligence (AGI) through transparency and, crucially, a pursuit of truth unburdened by perceived institutional biases.

This focus aligns perfectly with a development strategy that champions reduced hallucination. While OpenAI and Google are trying to maintain broad appeal and navigate complex ethical guidelines that sometimes favor cautious (and occasionally evasive) responses, xAI appears to be optimizing for unvarnished factual output. Seen through the lens of **xAI's alignment with Elon Musk's vision for AI**, Grok appears to be engineered as a specialized tool for those who value verifiable data delivery above all else.

This differentiation strategy is sound in a maturing market. Rather than fighting head-on against incumbents with near-limitless compute budgets on generalized benchmarks, xAI targets the 'trust gap.' The company is betting that a significant portion of the professional world needs an AI it can fundamentally trust to state facts correctly, even if that AI cannot write a sonnet as well as its peers.

Implications for the Future of AI Deployment

This bifurcation—Capability vs. Reliability—will profoundly shape how organizations adopt and integrate AI:

1. Enterprise Architecture Will Diversify

Future enterprise AI stacks will rarely consist of a single model. We are moving toward a "Model Mesh" architecture. A large corporation might use GPT-5.4 for brainstorming new marketing campaigns, use Gemini for advanced code generation in R&D, and deploy Grok 4.20 (or similar hyper-reliable models) for all compliance documentation and customer service escalations. This means IT leaders must become adept at model routing and governance, directing queries to the right AI for the right job.
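A minimal sketch of such a router is shown below. The task categories, the `Query` type, and the `route` function are hypothetical names introduced for illustration, and the model labels are plain strings taken from the example above, not real API model identifiers.

```python
from dataclasses import dataclass
from enum import Enum


class TaskKind(Enum):
    BRAINSTORMING = "brainstorming"
    CODE_GENERATION = "code_generation"
    COMPLIANCE = "compliance"
    SUPPORT_ESCALATION = "support_escalation"
    OTHER = "other"


@dataclass(frozen=True)
class Query:
    kind: TaskKind
    text: str


# Routing table mirroring the example in the text (labels only).
ROUTES = {
    TaskKind.BRAINSTORMING: "GPT-5.4",
    TaskKind.CODE_GENERATION: "Gemini",
    TaskKind.COMPLIANCE: "Grok 4.20",
    TaskKind.SUPPORT_ESCALATION: "Grok 4.20",
}

# Assumption: unclassified work falls back to the reliability-focused model.
DEFAULT_MODEL = "Grok 4.20"


def route(query: Query) -> str:
    """Return the label of the model that should handle this query."""
    return ROUTES.get(query.kind, DEFAULT_MODEL)


chosen = route(Query(TaskKind.COMPLIANCE, "Summarize the new retention policy."))
print(chosen)
```

Keeping the routing table as data rather than scattered conditionals makes it easier to review and audit, which is the governance side of the same problem.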

2. The Rise of Specialized Evaluation Metrics

The AI industry will increasingly rely on domain-specific benchmarks. A medical AI firm will care more about a "Clinical Accuracy Score" than an MMLU score. A financial compliance officer will prioritize "Citation Verification Rate" above all else. General benchmarks will become less relevant for purchasing decisions, replaced by specialized "Trust Scores."
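As an illustration of what such a metric might look like, the sketch below computes a simple "Citation Verification Rate". The definition used here (the share of cited source IDs found in a trusted set) is an assumption made for this example, not an established industry standard, and the document IDs are invented.

```python
from __future__ import annotations


def citation_verification_rate(cited: list[str], trusted: set[str]) -> float:
    """Fraction of cited source IDs that appear in the trusted set."""
    if not cited:
        # Assumption: an answer with no citations scores 0 rather than 1.
        return 0.0
    verified = sum(1 for source_id in cited if source_id in trusted)
    return verified / len(cited)


# Hypothetical data: IDs of documents the organization has vetted.
trusted_sources = {"doc-1", "doc-2", "doc-3"}

# Hypothetical model output: three real citations and one fabricated one.
model_citations = ["doc-1", "doc-2", "doc-9", "doc-3"]

rate = citation_verification_rate(model_citations, trusted_sources)
print(f"Citation Verification Rate: {rate:.2f}")
```

A real domain benchmark would also need to check that each cited source actually supports the claim attached to it, which this sketch does not attempt.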

3. The Economic Advantage of Precision

For startups and SMEs, the efficiency tier lowers the barrier to entry. If a company can deploy a powerful, cheap, and highly reliable model for core operations, it can compete with larger firms that are locked into the high operational expenditure of frontier APIs. Efficiency democratizes access to high-quality automation.

Actionable Insights for Businesses Today

How should decision-makers respond to this evolving landscape where specialized AI tools are gaining ground?

- Audit Your Use Cases: Categorize all planned AI deployments into two buckets: Innovation/Exploration (where creativity matters most) and Operational/Critical (where accuracy and cost matter most).

- Prioritize Fine-Tuning on Factuality: If you are building or fine-tuning models for factual tasks, dedicate significant resources to grounding and verifiable data sets. Treat hallucination mitigation as a primary feature, not a secondary bug.

- Demand Transparency in Benchmarks: When evaluating vendors, look beyond the headline benchmark scores. Ask vendors specifically about their hallucination reduction techniques, inference costs, and how they measure factual grounding in your specific domain.

- Prepare for Model Orchestration: Begin planning the infrastructure needed to manage multiple LLMs simultaneously. The ability to intelligently switch between a generalist model and a specialist, reliable model is the next crucial skill in AI governance.

Conclusion: The Future is Layered, Not Singular

The success of Grok 4.20 in staking a claim on reliability highlights a crucial evolution in the generative AI market. It confirms that the race to AGI—the ultimate generalist—is running parallel to a pragmatic, urgent race for reliable, cost-effective specialty AI. The future of AI deployment will not be defined by a single monolithic "best" model, but by a sophisticated, layered ecosystem where models are chosen based on the specific axis of competition they are designed to win: whether it's raw, bleeding-edge intelligence or unwavering, economical truth.