Beyond Pixels: Why Meta's JEPA Victory in Medical Imaging Heralds the Era of Predictive AI

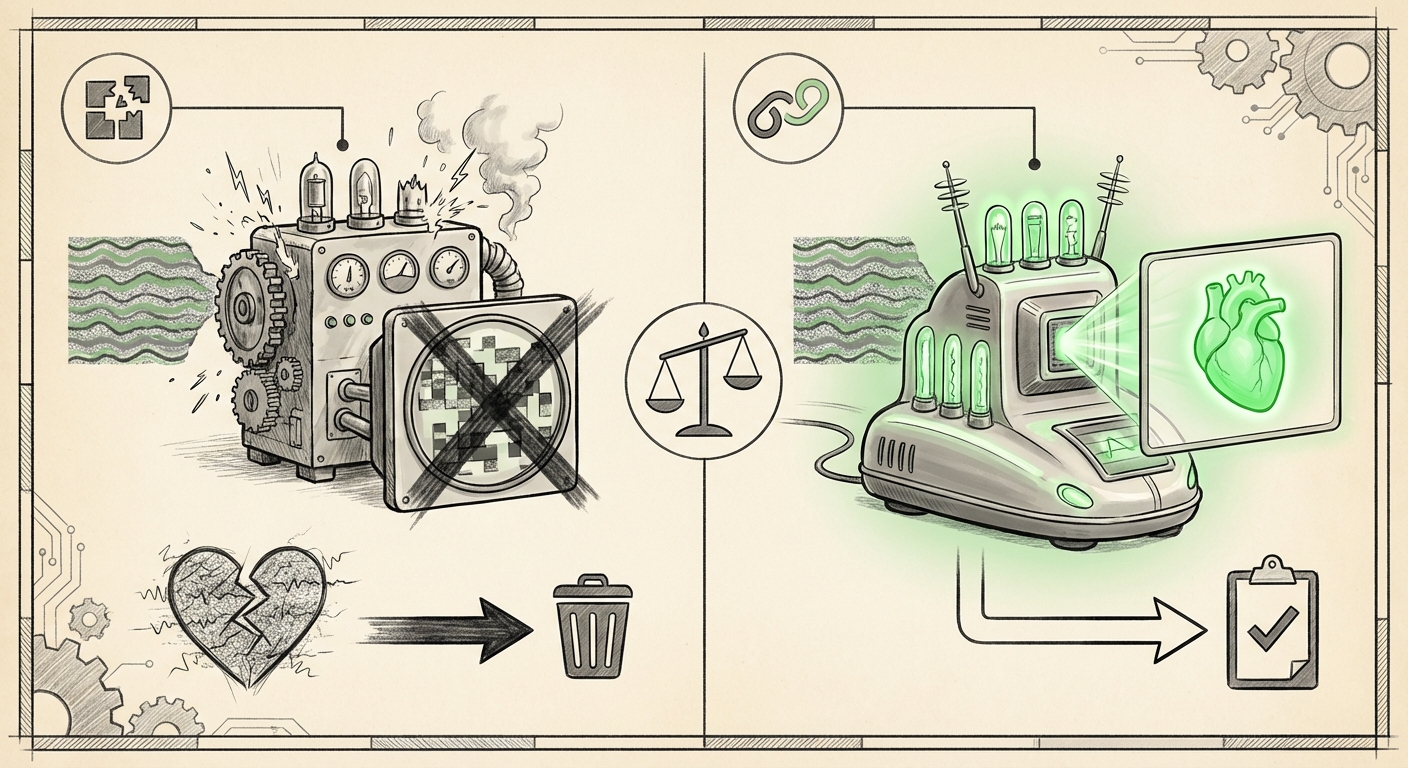

In the relentless pursuit of smarter, more capable Artificial Intelligence, the method we use to teach machines matters as much as the data we feed them. For years, Self-Supervised Learning (SSL), which trains AI without human labels by forcing it to learn the structure inherent in the data, has relied heavily on two main strategies: reconstruction (making a model rebuild a corrupted image) and contrast (making sure similar things look alike and different things look distinct). But a recent result involving Meta’s Joint Embedding Predictive Architecture (JEPA) in the challenging field of cardiac ultrasound suggests we are on the cusp of a major architectural shift.

When researchers applied JEPA to analyze noisy medical scans, it significantly outperformed established methods like Masked Autoencoders (MAE) and contrastive learning techniques. This isn't just a small technical win; it’s a powerful validation of a new way of thinking about representation learning. This article delves into why JEPA succeeded where others faltered, what this means for the future of robust AI, and the practical implications for industries reliant on complex, imperfect data.

The Achilles' Heel of Current AI: Dealing with Real-World Noise

To appreciate JEPA’s victory, we must first understand the battlefield: noisy medical imaging, specifically cardiac ultrasound. Unlike pristine photographs, ultrasound data is inherently messy. Images suffer from:

- Acoustic Artifacts: Sound waves reflecting off tissues create shadows, smears, or blurry patches.

- Patient Variability: Breathing, heart movement, and the angle of the probe constantly change the image structure.

- Machine Differences: Different ultrasound machines produce subtly different image qualities.

Traditional SSL methods struggle here. Masked Autoencoders (MAE) work by hiding parts of an image (masking) and forcing the AI to reconstruct the missing pixels. In a noisy region, the pixel target itself contains the noise, so a model pushed to match exact pixel values can spend its capacity modeling speckle rather than the underlying anatomy. Contrastive Learning, meanwhile, forces the AI to give two slightly different views of the same heart (e.g., two frames from a video) very similar internal codes. This can be too rigid, because it penalizes the model whenever noise makes two views of the same heart legitimately differ.
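To make the two objectives concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the setup used in the ultrasound study: the function names, the toy "scanline" values, and the choice of InfoNCE as the contrastive loss are all assumptions made for this example.

```python
import math

def masked_pixel_mse(predicted, target, mask):
    """MAE-style loss: mean squared error over the masked pixels only."""
    errors = [(p - t) ** 2 for p, t, m in zip(predicted, target, mask) if m]
    return sum(errors) / len(errors)

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm

def info_nce(anchor, positive, negatives, temperature=0.1):
    """Contrastive loss: pull the positive view close, push negatives away."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum - logits[0]

# Toy scanline (illustrative values): clean anatomy vs. the noisy recording.
clean = [0.2, 0.8, 0.8, 0.2]
noisy = [0.25, 0.70, 0.95, 0.10]
mask = [False, True, True, False]

# A prediction that recovers the clean anatomy is still penalized,
# because the pixel target it is scored against contains the noise.
loss_vs_noisy_target = masked_pixel_mse(clean, noisy, mask)
loss_vs_clean_target = masked_pixel_mse(clean, clean, mask)

# Two embeddings of the same heart; the second view drifted due to noise/motion.
frame_a = [1.0, 0.0]
frame_b_similar = [0.9, 0.1]
frame_b_drifted = [0.5, 0.5]
other_patient = [0.0, 1.0]
loss_similar = info_nce(frame_a, frame_b_similar, [other_patient])
loss_drifted = info_nce(frame_a, frame_b_drifted, [other_patient])
```

In this toy setting the reconstruction loss is nonzero even for an anatomically perfect prediction, and the contrastive loss grows as the second view drifts, which is the rigidity described above.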

The core technical challenge in medical AI is achieving robustness to noise without sacrificing diagnostic accuracy. We need models that understand the "idea" of a ventricle wall, regardless of whether that wall is slightly obscured by an acoustic shadow.

Enter JEPA: Learning by Predicting Latent Concepts

JEPA fundamentally changes the objective. Instead of predicting pixels or forcing feature similarity, JEPA operates entirely in the latent space—the compressed, conceptual understanding the AI develops internally.

Imagine teaching a child to recognize a cat. An MAE approach would be like showing them a picture with half the whiskers scratched out and demanding they draw the exact missing whiskers. A contrastive approach would be showing them two slightly fuzzy cat photos and demanding their internal feeling about both be identical. JEPA, however, works like this:

- The model looks at one part of the image (the context).

- It compresses this context into a high-level "thought" or latent representation (a vector).

- It then predicts what the latent representation of a *missing* part of the image should be, based only on the first part's representation.

Crucially, JEPA is never scored on the actual pixels in the missing area; it is scored on the abstract, conceptual representation of that area. Because noise is largely random and cannot be predicted from the surrounding context, it contributes little to this objective, so the learned representations tend to filter it out.
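The three steps above can be sketched in a few lines of plain Python. This is a forward-pass illustration only, under several assumptions that do not come from this article: linear maps stand in for the neural encoders, the loss is a simple squared error, and the target encoder is kept as a slowly updated (exponential moving average) copy of the context encoder, as described in the published I-JEPA design. There is no gradient step, and all names and values are hypothetical.

```python
import random

def linear(weights, x):
    """Apply a weight matrix (list of rows) to a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def latent_prediction_loss(context_enc, target_enc, predictor, context, target):
    """JEPA-style objective: compare predicted and actual target latents."""
    context_latent = linear(context_enc, context)         # encode the visible context
    predicted_latent = linear(predictor, context_latent)  # predict the hidden part's latent
    target_latent = linear(target_enc, target)            # treated as a constant target
    diffs = [(p - t) ** 2 for p, t in zip(predicted_latent, target_latent)]
    return sum(diffs) / len(diffs)

def ema_update(target_enc, context_enc, momentum=0.99):
    """Move the target encoder slowly toward the context encoder."""
    return [[momentum * t + (1.0 - momentum) * c for t, c in zip(t_row, c_row)]
            for t_row, c_row in zip(target_enc, context_enc)]

rng = random.Random(0)
PATCH_DIM, LATENT_DIM = 4, 3
context_encoder = random_matrix(LATENT_DIM, PATCH_DIM, rng)
target_encoder = [row[:] for row in context_encoder]  # starts as a copy
predictor = random_matrix(LATENT_DIM, LATENT_DIM, rng)

context_patch = [0.2, 0.8, 0.8, 0.2]  # visible region
target_patch = [0.3, 0.7, 0.9, 0.1]   # hidden region; its pixels are never reconstructed

loss = latent_prediction_loss(context_encoder, target_encoder, predictor,
                              context_patch, target_patch)
target_encoder = ema_update(target_encoder, context_encoder)
```

Note that the loss never touches `target_patch` directly: the hidden region enters only through its latent vector, which is the key difference from the pixel-reconstruction objective.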

This approach has led to superior performance in ultrasound benchmarks (as detailed in the source article from The Decoder) because the model learns the essential structure of the heart motion and shape, ignoring the static or random electronic interference on the screen.

Corroboration from the Field: Validating the Shift

The emergence of JEPA is not isolated. Analysis across related fields strongly supports this predictive trend:

- Direct Architectural Superiority: Research comparing JEPA directly against MAE in vision tasks often finds that JEPA representations generalize better when deployed downstream. In domain-specific tasks like medical classification, where the data distribution shifts slightly from training to testing, the abstract knowledge JEPA builds appears to transfer better than the pixel-level patterns learned by reconstruction methods.

- The Theoretical Mandate: Yann LeCun, a primary architect of JEPA, frames this as a necessary step toward autonomous, human-level machine intelligence. His vision is that true intelligence requires building "world models" through prediction. The success in medical imaging suggests that this theoretical framework is already yielding practical, state-of-the-art results in complex perception tasks, lending support to the shift away from pixel-level fidelity.

- Cross-Domain Translation: If JEPA were merely an image-specific trick, its impact would be limited. However, the same principle has been applied beyond still images, for example to video, where V-JEPA predicts the representations of masked video regions rather than the pixels themselves, and it is being explored for robotics and language. This suggests that the *principle* of latent predictive learning is broadly useful for creating robust representations.

Future Implications: The Robust AI Mandate

The JEPA success in noisy environments has profound implications that extend far beyond cardiology.

1. The End of Data Curation as the Primary Bottleneck

For years, the biggest bottleneck in medical AI deployment was the sheer difficulty and cost of obtaining perfectly labeled, high-quality datasets. If JEPA-style models can extract meaningful features from massive quantities of unlabeled, noisy, real-world clinical data, the dependency on scarce expert annotators lessens significantly. This democratizes AI development.

2. Enabling Mission-Critical AI

When AI is used for life-or-death decisions (like autonomous vehicles or surgical robotics), failure due to unexpected environmental noise is catastrophic. JEPA's built-in robustness to irrelevant data makes it a promising candidate for these mission-critical systems. The goal is AI whose decisions rest on stable, high-level representations rather than on peripheral visual noise.

3. Generative Models Go Conceptual

Current generative models can struggle to maintain long-term coherence, in part because they are typically trained on low-level targets: pixel reconstruction in vision, or token prediction in language. Predictive latent models like JEPA could unlock a next generation of generative AI capable of creating complex, logically consistent long-form content, whether that is a full-length, medically accurate simulation or a coherent novel.

Actionable Insights for Business and Research

This architectural evolution demands a pivot in strategy for technology leaders and researchers:

For AI Researchers and Engineers:

Actionable Insight: Start migrating core SSL pipelines from standard MAE or contrastive frameworks to latent predictive models. Focus benchmarking not just on accuracy on clean test sets, but specifically on performance degradation when realistic, domain-specific noise is injected.
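A minimal sketch of such a noise-degradation benchmark follows. Everything here is an assumption made for illustration: the multiplicative noise is a common simplified model of ultrasound speckle, `toy_classifier` is a hypothetical stand-in for a real trained model, and the sample data is synthetic.

```python
import random

def add_speckle(pixels, strength, rng):
    """Multiplicative noise, a simplified model of ultrasound speckle."""
    return [p * (1.0 + strength * rng.gauss(0.0, 1.0)) for p in pixels]

def accuracy(classify, samples):
    """Fraction of (pixels, label) pairs the classifier gets right."""
    correct = sum(1 for pixels, label in samples if classify(pixels) == label)
    return correct / len(samples)

def degradation_curve(classify, samples, strengths, seed=0):
    """Accuracy at each noise strength; strength 0.0 is the clean baseline."""
    rng = random.Random(seed)
    curve = {}
    for strength in strengths:
        noisy = [(add_speckle(pixels, strength, rng), label)
                 for pixels, label in samples]
        curve[strength] = accuracy(classify, noisy)
    return curve

# Hypothetical stand-in for a trained model: thresholds the mean brightness.
def toy_classifier(pixels):
    return int(sum(pixels) / len(pixels) > 0.5)

# Synthetic data: bright patches are class 1, dark patches are class 0.
samples = [([0.9, 0.8, 0.7, 0.9], 1), ([0.1, 0.2, 0.3, 0.1], 0)] * 50

curve = degradation_curve(toy_classifier, samples, strengths=[0.0, 0.5, 2.0])
drop = curve[0.0] - curve[2.0]  # accuracy lost between clean and heavy noise
```

Reporting the whole curve, rather than a single clean-set number, makes it possible to compare how quickly different pretraining methods degrade as noise increases.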

For Healthcare IT and Bio-Engineers:

Actionable Insight: Prioritize the acquisition and storage of massive, raw, unlabeled clinical data streams (like continuous ECG feeds or raw ultrasound video archives). This raw data, previously considered too messy for high-quality training, may now be one of the most valuable assets for training JEPA-style foundation models specific to your institution.

For Technology Strategists and C-Suite:

Actionable Insight: View JEPA and its successors not as a minor model upgrade but as a foundational technology layer. Investment in platforms capable of training these large, predictive representations will yield models that are inherently more trustworthy and adaptable to real-world deployment conditions, leading to faster ROI in high-stakes applications.

The success of Meta's JEPA in the notoriously difficult environment of cardiac ultrasound is a clear signal: the future of powerful, general-purpose AI is moving beyond trying to perfectly recreate reality, focusing instead on the robust, abstract concepts that *govern* reality. By learning what is missing in the conceptual space, these new architectures are showing us exactly how to build AI that truly understands the world, noise and all.