The AI Arms Race Accelerates: Why Meta’s 'Avocado' Delay Signals a New Era of Model Supremacy

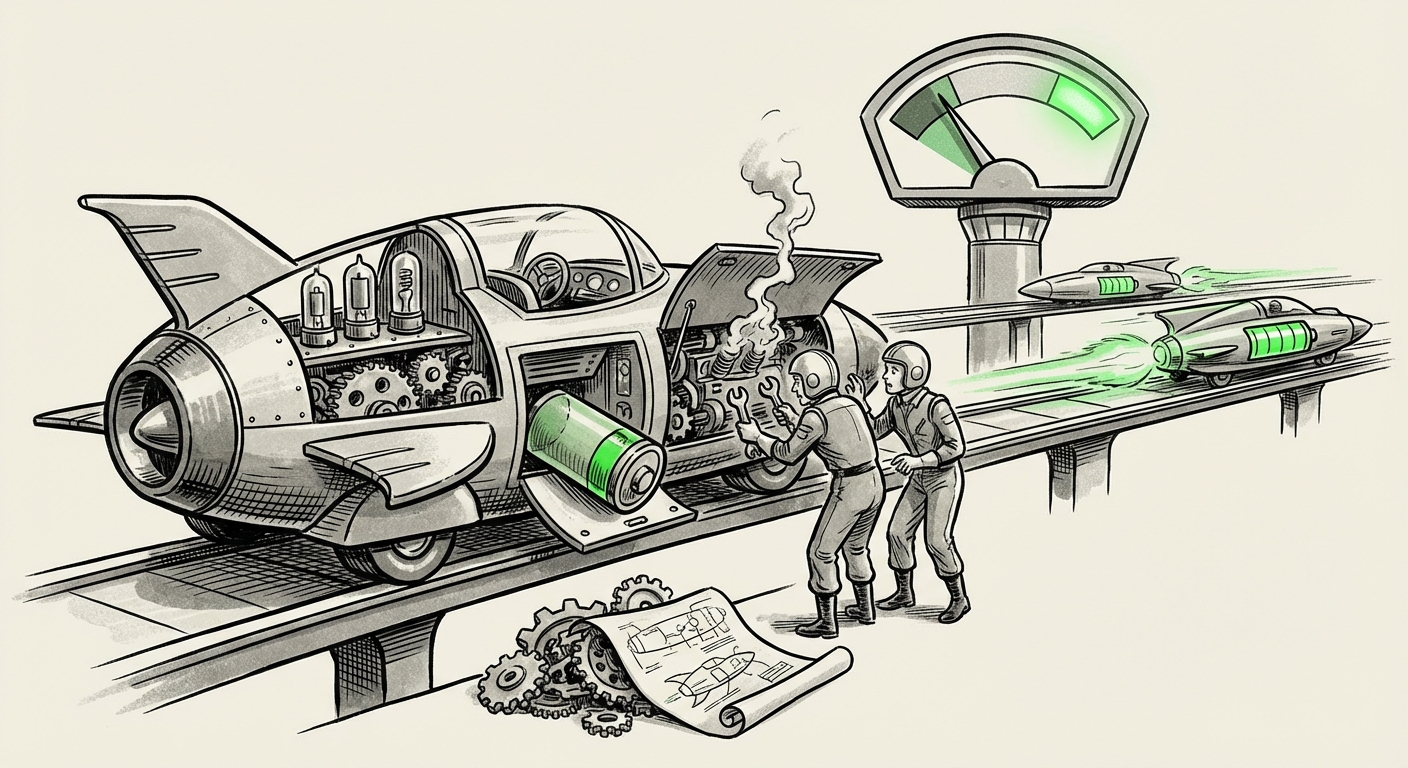

The world of artificial intelligence is moving at a dizzying pace. Just as the industry digests the latest breakthrough from OpenAI or Google, a new disruption emerges. The recent news that Meta is reportedly postponing the release of its highly anticipated AI model, codenamed "Avocado," after internal tests showed it lagging behind its primary rivals is more than just a delay: it is a critical stress test for the entire industry.

This incident crystallizes a fundamental shift: the margin for error in developing foundational AI models has effectively vanished. In this new landscape, releasing a merely "good" model is equivalent to launching an obsolete one. Performance is the sole currency, and falling behind the leading edge—currently defined by OpenAI and Google—carries severe competitive penalties.

The Context: A Fierce Competition Setting the New Standard

To understand why Meta would delay a major model, we must first look at the current peak performance levels. The AI race is no longer about building *an* AI; it’s about building the *best* AI for complex reasoning, speed, and multimodality. Rivals aren't standing still:

- OpenAI’s GPT-4o set a new benchmark for speed and native multimodal interaction.

- Google’s Gemini series continues to push context windows to a million tokens and beyond, with deep integration across Google’s ecosystem.

- Anthropic’s Claude 3 Opus has proven formidable, often winning specialized reasoning benchmarks.

When internal tests reveal that a model like "Avocado" cannot keep up, the shortfall may be more than a few percentage points; it could point to a fundamental deficit in core reasoning or instruction following, as measured by standard benchmarks. For developers and enterprise users, benchmarks such as MMLU (multiple-choice questions spanning 57 subjects, measuring general knowledge) and HumanEval (Python programming problems checked by unit tests, measuring coding ability) are among the few objective, comparable measures of a model's utility.
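To make the benchmark vocabulary concrete, here is a minimal Python sketch of two calculations behind headline numbers. HumanEval results are commonly reported as pass@k, using the unbiased estimator published with the benchmark: generate n samples per problem, count the c that pass the unit tests, and estimate the chance that at least one of k drawn samples passes. The second function is an illustrative addition, not part of any official benchmark harness: it puts a rough 95% interval on the accuracy gap between two models, assuming the two accuracies are independent binomial proportions. Because both models usually answer the same questions, a paired test such as McNemar's would be more rigorous. All sample counts below are hypothetical.

```python
import math


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them passed the tests."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every possible draw of k samples includes at least one passing sample.
        return 1.0
    # 1 - P(all k drawn samples fail)
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def accuracy_gap_ci(correct_a: int, correct_b: int, n: int, z: float = 1.96):
    """Accuracy difference (A - B) on n questions with a normal-approximation interval.

    Assumption: the two accuracies are treated as independent binomial proportions.
    """
    p_a, p_b = correct_a / n, correct_b / n
    diff = p_a - p_b
    se = math.sqrt(p_a * (1 - p_a) / n + p_b * (1 - p_b) / n)
    return diff, diff - z * se, diff + z * se


if __name__ == "__main__":
    # Hypothetical HumanEval-style problem: 10 samples generated, 3 pass the unit tests.
    print(f"pass@1 = {pass_at_k(10, 3, 1):.2f}")  # prints "pass@1 = 0.30"
    # Hypothetical multiple-choice results for two models on 1,000 questions.
    diff, lo, hi = accuracy_gap_ci(900, 800, 1000)
    print(f"gap = {diff:.3f}, 95% CI = [{lo:.3f}, {hi:.3f}]")
```

With these made-up counts the interval excludes zero, so a 10-point gap on 1,000 questions is unlikely to be noise; a 1-point gap on the same sample size would not be distinguishable.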

The Hidden Battle: Compute as the Ultimate Bottleneck

Why is it so hard for even well-resourced giants like Meta to keep pace? The answer lies beneath the software, in the hardware. Training frontier models requires access to tens of thousands of the most advanced Graphics Processing Units (GPUs), primarily from NVIDIA. The cost, acquisition timeline, and efficient utilization of these chips define the speed of innovation.
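A back-of-the-envelope calculation shows why GPU count and utilization set the pace. The sketch below uses the widely cited rule of thumb that training a dense transformer costs roughly 6 × N × D floating-point operations (N parameters, D training tokens), then divides by the cluster's effective throughput. Every input (model size, token count, GPU count, per-GPU peak throughput, and model FLOPs utilization, or MFU) is a hypothetical round number, not a figure for "Avocado" or any real training run.

```python
SECONDS_PER_DAY = 86_400


def training_flops(params: float, tokens: float) -> float:
    """Approximate dense-transformer training compute: ~6 FLOPs per parameter per token."""
    return 6.0 * params * tokens


def training_days(total_flops: float, num_gpus: int, peak_flops_per_gpu: float, mfu: float) -> float:
    """Wall-clock days given cluster size, per-GPU peak FLOP/s, and achieved utilization in (0, 1]."""
    if not 0.0 < mfu <= 1.0:
        raise ValueError("mfu must be in (0, 1]")
    effective_flops_per_second = num_gpus * peak_flops_per_gpu * mfu
    return total_flops / effective_flops_per_second / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical run: 70B parameters trained on 15T tokens.
    flops = training_flops(70e9, 15e12)
    for mfu in (0.3, 0.4, 0.5):
        # Hypothetical cluster: 16,000 GPUs at 1e15 peak FLOP/s each.
        days = training_days(flops, 16_000, 1e15, mfu)
        print(f"MFU {mfu:.0%}: {days:.1f} days")
```

Under these invented inputs, raising utilization from 30% to 50% shortens the run from roughly 15 days to roughly 9 on the same hardware, which is why squeezing efficiency out of existing clusters matters almost as much as buying more chips.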

As analyses of hardware supply suggest, achieving state-of-the-art performance is now inextricably linked to capital expenditure (CAPEX). Meta is investing billions, but if Google or OpenAI secure access to the next generation of hardware (such as NVIDIA's B200) sooner, they can train larger, more complex models faster. A delay in "Avocado" might therefore signal a temporary constraint in either compute availability or the engineering time needed to use Meta's current clusters efficiently.
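The CAPEX argument can be sketched in the same spirit. Below, one fixed training budget (in FLOPs) runs on two hypothetical GPU generations; the newer one is assumed to deliver more effective throughput per chip at a higher hourly cost. The throughput, price, and cluster figures are invented for illustration and are not vendor specifications for the B200 or any other chip.

```python
from dataclasses import dataclass

SECONDS_PER_HOUR = 3_600


@dataclass(frozen=True)
class GpuGeneration:
    name: str
    effective_flops_per_second: float  # per GPU, after utilization losses (hypothetical)
    cost_per_gpu_hour: float  # USD, hypothetical amortized or rental rate


def run_estimate(total_flops: float, gpu: GpuGeneration, num_gpus: int) -> tuple[float, float]:
    """Return (wall-clock days, total cost in USD) for a fixed compute budget."""
    gpu_hours = total_flops / gpu.effective_flops_per_second / SECONDS_PER_HOUR
    wall_clock_days = gpu_hours / num_gpus / 24
    return wall_clock_days, gpu_hours * gpu.cost_per_gpu_hour


if __name__ == "__main__":
    budget = 6.3e24  # hypothetical training run, in FLOPs
    current = GpuGeneration("current-gen", 4e14, 2.0)
    next_gen = GpuGeneration("next-gen", 1e15, 4.0)
    for gpu in (current, next_gen):
        days, cost = run_estimate(budget, gpu, num_gpus=16_000)
        print(f"{gpu.name}: {days:.1f} days, ${cost / 1e6:.2f}M")
```

With these made-up numbers, the newer generation finishes in about 4.6 days instead of about 11.4 and, because it needs far fewer GPU-hours, costs less in total despite the doubled hourly rate. Real outcomes depend on actual prices and achieved utilization, but the direction illustrates why early hardware access compounds into a lead.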

For a business leader, this translates simply: AI supremacy is becoming a game of financial resources and supply chain mastery as much as algorithmic talent.

The Strategic Tensions: Open Source vs. Closed Frontier

Meta holds a unique position in the AI ecosystem due to its commitment to the open-source movement, primarily through its Llama family of models. This strategy aims to foster widespread adoption and drive innovation outside of proprietary walled gardens.

However, the reported struggles of "Avocado," likely a closed, cutting-edge model, create internal strategic tension.

If Meta's best internal, closed models are perceived as trailing, the value proposition of its open models comes into question. Does this force Meta to:

- Accelerate Llama 4: Push the open-source release sooner, hoping community fine-tuning can bridge the performance gap?

- Reallocate Resources: Divert engineers from other projects to fix the core issues plaguing "Avocado"?

The decision to delay Avocado suggests Meta prioritized *quality over schedule* for its top-tier offering, recognizing that releasing an underperforming flagship product could damage its brand credibility, particularly when competitors like Anthropic are continuously impressing with highly capable, tightly aligned models.

What This Means for the Future of AI Development

The "Avocado" incident is a microcosm of the future of generative AI: a Darwinian environment where only the strongest survive the internal testing phase.

1. The Commoditization of "Good" AI

As powerful open-source models become more accessible, the performance floor for proprietary models must rise dramatically. What was state-of-the-art six months ago is now the baseline for commercial deployment. The market is quickly differentiating between utility-grade AI (which is becoming cheap and accessible) and *frontier* AI (which remains exclusive and extremely expensive to build).

2. Hyper-Specialization and Alignment

The success of competitors like Anthropic highlights that raw intelligence isn't everything. Future models must excel in specific, high-value dimensions: long-context understanding, complex multi-step reasoning, and robust safety alignment. Meta's delay might stem from struggles in one of these specialized areas, indicating that scaling parameters alone is no longer sufficient for leadership.

3. The Rise of Iterative Launch Cycles

We are moving away from predictable annual flagship releases. Instead, expect rapid, smaller iterations (like GPT-4o) that incorporate learnings from internal testing immediately. The ability to ship fixes and improvements weekly rather than quarterly will become a key competitive edge.

Practical Implications for Businesses and Society

For organizations building on top of these foundations, this constant jostling at the top has several direct consequences:

For Businesses: Navigating Vendor Lock-In and Risk

Businesses relying on a single model provider face increased volatility. If Meta's release slips, an organization building on its ecosystem may see its own innovation pipeline stall. Companies must adopt a multi-model strategy, integrating APIs from Google, OpenAI, and Anthropic, to ensure redundancy and access to best-in-class features across different tasks.
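Redundancy across providers often comes down to a simple failover chain. The sketch below assumes each vendor SDK has been wrapped in a plain function that takes a prompt and returns text, raising an exception on failure; that wrapper shape, the provider names, and the `AllProvidersFailed` error are hypothetical and not any vendor's actual API.

```python
from typing import Callable, Sequence

# Hypothetical adapter shape: prompt in, text out, exception on failure.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain errors out."""


def complete_with_failover(prompt: str, providers: Sequence[tuple[str, Provider]]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in production, catch each SDK's specific error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    def unavailable_primary(prompt: str) -> str:
        raise TimeoutError("primary model unavailable")

    def backup(prompt: str) -> str:
        return f"backup answer to: {prompt}"

    chain = [("primary", unavailable_primary), ("backup", backup)]
    print(complete_with_failover("Summarize Q3 risks", chain))
```

Returning the provider name alongside the response lets monitoring record how often traffic actually falls through to the backup, which is an early signal that the primary vendor is becoming a risk.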

Actionable Insight: Conduct dual-stack testing. Instead of committing fully to one vendor, mandate that critical workflows be tested against at least two leading models to stress-test for performance drops or unexpected alignment shifts.
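Dual-stack testing can start as a small harness that runs the same workflow cases through two or more models and flags any model that falls below an acceptance bar. In this sketch the `WorkflowCase` structure, the per-case check functions, and the 0.9 threshold are hypothetical choices, and the models are stand-in callables rather than real vendor clients.

```python
from dataclasses import dataclass
from typing import Callable

Model = Callable[[str], str]  # hypothetical wrapper around a vendor API


@dataclass(frozen=True)
class WorkflowCase:
    prompt: str
    check: Callable[[str], bool]  # returns True if the response is acceptable


def pass_rate(model: Model, cases: list[WorkflowCase]) -> float:
    """Fraction of workflow cases whose response passes its check."""
    passed = sum(1 for case in cases if case.check(model(case.prompt)))
    return passed / len(cases)


def dual_stack_report(
    models: dict[str, Model], cases: list[WorkflowCase], min_rate: float = 0.9
) -> dict[str, tuple[float, bool]]:
    """Score every model on identical cases; map name -> (pass rate, meets threshold)."""
    report = {}
    for name, model in models.items():
        rate = pass_rate(model, cases)
        report[name] = (rate, rate >= min_rate)
    return report


if __name__ == "__main__":
    cases = [
        WorkflowCase("2+2", lambda r: r.strip() == "4"),
        WorkflowCase("capital of France", lambda r: "Paris" in r),
    ]
    answers = {"2+2": "4", "capital of France": "Paris"}
    models = {"model-a": lambda p: answers.get(p, ""), "model-b": lambda p: "4"}
    print(dual_stack_report(models, cases))
```

Running the same report after every vendor update turns "unexpected alignment shifts" into a concrete regression: a model whose flag flips from True to False.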

For Society: The Pace of Safety

The pressure to release competitive models often clashes directly with the need for rigorous safety testing. Meta's choice to delay "Avocado" because it is not good enough suggests a commitment to responsible rollout, which is a positive sign. However, if competitive pressure shortens future testing windows or makes them less rigorous, society faces the risk of powerful yet flawed AI systems being deployed into critical infrastructure.

For Developers: Mastering the Shifting Benchmarks

Developers must become experts not just in prompt engineering but in the evolving strengths and weaknesses of each major model family. Knowing, for example, that a model like "Avocado" is weaker at complex math but stronger at creative writing would inform deployment decisions. Developers must continuously re-evaluate whether the model they integrated last month still offers the best performance today.
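Re-evaluation is easier to act on when evaluation scores feed directly into a per-task routing table. The sketch below assumes you already have scores from your own eval suite in the form `scores[model][task]`; the model names, task labels, and score values are hypothetical. It picks the best model per task and reports which routes changed since the previous run.

```python
from typing import Optional


def best_model_per_task(scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """scores[model][task] -> score; return task -> highest-scoring model."""
    tasks = {task for per_task in scores.values() for task in per_task}
    routing = {}
    for task in sorted(tasks):
        candidates = {model: s[task] for model, s in scores.items() if task in s}
        routing[task] = max(candidates, key=candidates.get)
    return routing


def routing_changes(old: dict[str, str], new: dict[str, str]) -> dict[str, tuple[Optional[str], str]]:
    """Tasks whose best model differs from the previous run: task -> (old model, new model)."""
    return {task: (old.get(task), model) for task, model in new.items() if old.get(task) != model}


if __name__ == "__main__":
    # Hypothetical eval results from last month and today.
    last_month = best_model_per_task({"model-a": {"math": 0.82, "creative": 0.70},
                                      "model-b": {"math": 0.75, "creative": 0.88}})
    today = best_model_per_task({"model-a": {"math": 0.82, "creative": 0.70},
                                 "model-b": {"math": 0.85, "creative": 0.88}})
    print(routing_changes(last_month, today))
```

Reviewing only the changed routes keeps the monthly re-evaluation cheap: unchanged tasks need no attention.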

Actionable Insight: Focus development efforts on abstraction layers. Build tooling that allows your application logic to swap out the underlying LLM provider with minimal disruption, insulating your business from the rapid performance swings of any single giant.
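One common way to build such an abstraction layer is a narrow interface that application code depends on, with one adapter per vendor selected from configuration. In this sketch the `ChatModel` protocol, the `EchoModel` stand-in adapter, the registry names, and the `LLM_PROVIDER` environment variable are all hypothetical; a real adapter would call one vendor's SDK inside `complete()`.

```python
import os
from typing import Callable, Protocol


class ChatModel(Protocol):
    """The only surface application code depends on (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in adapter; a real one would wrap a single vendor's SDK behind complete()."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


# Registry mapping config names to adapter factories (names are hypothetical).
REGISTRY: dict[str, Callable[[], ChatModel]] = {
    "vendor-a": lambda: EchoModel("vendor-a"),
    "vendor-b": lambda: EchoModel("vendor-b"),
}


def load_model(name: str) -> ChatModel:
    """Build the adapter named in configuration."""
    try:
        return REGISTRY[name]()
    except KeyError:
        raise ValueError(f"unknown model provider: {name!r}") from None


def summarize_ticket(model: ChatModel, ticket: str) -> str:
    """Application logic: knows nothing about which vendor sits behind `model`."""
    return model.complete(f"Summarize this support ticket: {ticket}")


if __name__ == "__main__":
    provider = os.environ.get("LLM_PROVIDER", "vendor-a")  # hypothetical config key
    print(summarize_ticket(load_model(provider), "Login fails after password reset"))
```

Swapping vendors then becomes a configuration change plus one new adapter class, while functions like `summarize_ticket` stay untouched.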

Conclusion: The Scrutiny of Scale

The story of the "Avocado" delay is the story of modern AI supremacy. It’s a high-stakes, multi-billion-dollar engineering marathon where stopping to refuel (or rework the engine) means falling behind rivals who may have secured better supplies of high-octane fuel (compute). Meta’s decision, while disappointing for those awaiting the model, shows an understanding that in 2024, "good enough" simply isn't good enough.

The future belongs to those who can master the confluence of algorithmic brilliance, massive capital investment in hardware, and the agility to rapidly iterate under intense competitive pressure. The race for foundational model dominance is escalating, making the next 12 months the most critical period yet in AI’s history.