Microsoft Copilot Health and the Race to Medical Superintelligence: Analyzing the Next Frontier of AI

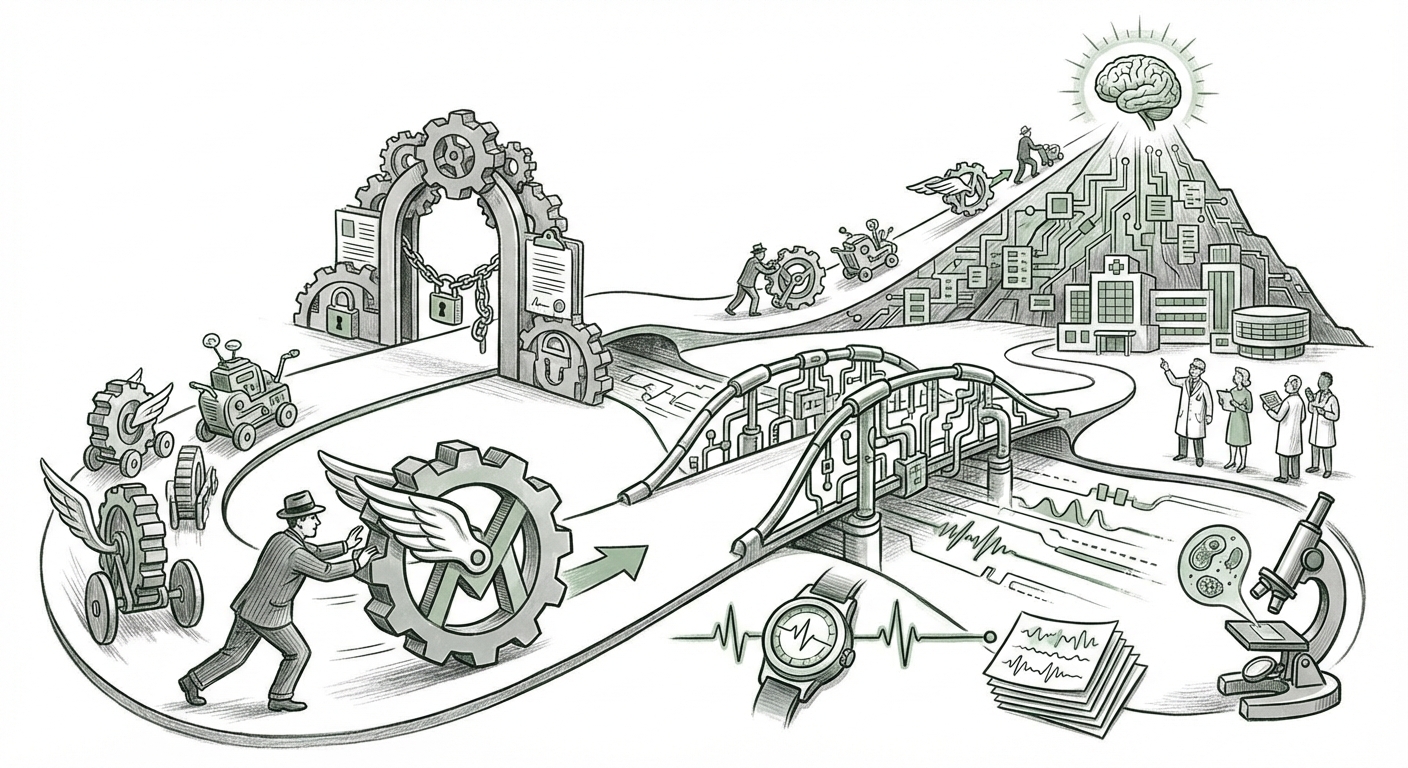

The recent unveiling of Microsoft Copilot Health is more than another product launch; it marks an inflection point in the maturity of generative AI. For years, AI models have impressed us with creative text generation, code assistance, and general knowledge retrieval. Now the leading foundation-model developers (Microsoft, OpenAI, and Anthropic) are moving into the most high-stakes, complex, and regulated domain imaginable: human health. Microsoft’s stated ambition of working toward "medical superintelligence" signals that the frontier is shifting away from general-purpose assistants and toward hyper-specialized, deeply integrated vertical intelligence.

As an AI technology analyst, I see this move as the definitive shift from AI as a tool to AI as a critical infrastructure layer. This analysis synthesizes the immediate implications of Copilot Health, contextualizes it against key competitors, explores the monumental technical hurdles, and frames the urgent regulatory landscape that will define success or failure in this new era.

The Pivot to Vertical AI: From Chatbot to Clinical Assistant

Copilot Health is designed as an AI health assistant that ingests large volumes of personal data, including wearable readings, electronic health records (EHRs), and lab results, to offer personalized health advice. That combination of deep data integration and high-stakes output is what sets it apart. It’s a significant leap from asking ChatGPT for dinner recipes.

The Competitive Arena Heats Up

Microsoft is not entering an empty field. The launch directly challenges established players and validates the massive investments already underway by others. Understanding this competitive landscape is crucial for gauging the pace of innovation:

- Google DeepMind and Google Health: Google is the most important comparison. It has long focused on the heavy lifting, such as protein structure prediction with AlphaFold, and on large partnerships with hospital systems. Where Microsoft is aiming for patient-facing personalization, Google has concentrated on backend discovery and diagnostic support tools. The race is now defined by who can most safely bridge the gap between fundamental research and personalized, real-time patient interaction.

- OpenAI/Anthropic: While Microsoft uses its partnership with OpenAI as leverage, the core model technology (GPT-4, Claude) still needs extensive fine-tuning and medicine-specific safety guardrails. The competitive edge will come not just from model size but from domain expertise and safety accreditation.

For businesses, this means the competitive advantage is shifting. It’s no longer just about having access to a foundation model; it’s about who can build the most trustworthy, data-rich, and clinically validated application layer on top of it.

The Technical Everest: Charting the Course to Superintelligence

The term "medical superintelligence" sounds like science fiction, but technically, it describes the ability of an AI system to process, synthesize, and reason across *all* available medical knowledge and patient data simultaneously, exceeding the capacity of any single human expert. To get there, developers must conquer several colossal technical challenges.

The Multimodal Data Fusion Problem

This is where the engineering complexity explodes. A human doctor draws on spoken narratives, visual examination, historical records (text), and lab reports (numerical tables), and Copilot Health must achieve comparable multimodal data fusion. LLMs excel at text, but integrating an MRI scan, the trend in a year of blood sugar readings from a wearable, and the nuance of a handwritten note requires models designed from the ground up for multiple data types.
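One common baseline for combining modalities is late fusion: each data type is reduced to features by its own encoder, and the features are concatenated along with a mask recording which modalities were present. The sketch below is a minimal, hypothetical illustration under that assumption. The toy extractors (a least-squares trend over glucose readings and a keyword flag on a clinician note) stand in for real imaging and language encoders, and nothing here describes how Copilot Health actually works.

```python
from statistics import mean
from typing import Optional


def glucose_trend(readings: list[float]) -> list[float]:
    """Toy encoder: summarize glucose readings (mg/dL) as [mean, slope per reading]."""
    n = len(readings)
    xs = range(n)
    x_bar = (n - 1) / 2
    y_bar = mean(readings)
    # Ordinary least-squares slope of reading value against reading index
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, readings))
    den = sum((x - x_bar) ** 2 for x in xs)
    slope = num / den if den else 0.0
    return [y_bar, slope]


def note_flags(note: str) -> list[float]:
    """Toy text encoder (hypothetical keywords): 1.0 if a keyword appears in the note."""
    keywords = ("fatigue", "thirst", "blurred vision")
    lowered = note.lower()
    return [1.0 if k in lowered else 0.0 for k in keywords]


def fuse(readings: Optional[list[float]], note: Optional[str]) -> list[float]:
    """Late fusion: concatenate per-modality features plus a presence mask.

    Missing modalities are zero-filled and flagged in the mask, so a downstream
    model can tell "not measured" apart from "measured as zero".
    """
    glucose = glucose_trend(readings) if readings else [0.0, 0.0]
    text = note_flags(note) if note else [0.0, 0.0, 0.0]
    mask = [1.0 if readings else 0.0, 1.0 if note else 0.0]
    return glucose + text + mask
```

For example, `fuse([100, 110, 120], "Reports fatigue and thirst")` yields a mean of 110 and a slope of 10 mg/dL per reading, two keyword hits, and a mask showing both modalities present. Late fusion is only the simplest option; the "inherently multimodal" models the paragraph above calls for would learn cross-modal interactions rather than concatenating independent summaries.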

Achieving clinical-grade diagnostic accuracy means moving past plausible-sounding answers to answers that are verifiable, explainable, and robust enough to withstand the scrutiny of an emergency room. That level of reliability will likely require architectural advances well beyond today’s general-purpose LLMs.

The Context Window and Knowledge Integrity

A key challenge is scope. A single patient can accumulate an enormous volume of health data over a lifetime, potentially terabytes once imaging and continuous wearable streams are included. The system must keep that full history in view while also drawing on the latest global medical literature, and no practical context window can hold all of it at once. If the system hallucinates a drug interaction or misreads a past condition, the consequences could be fatal. The development roadmap must therefore prioritize knowledge grounding and retrieval-augmented generation (RAG) systems tuned specifically for medical ontologies, held to far stricter standards than those used for general search.
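The core discipline of grounding can be shown without any model at all: answer only from a curated source, cite the entry used, and abstain when nothing is retrieved. In the hypothetical sketch below, a small dictionary stands in for a curated interaction database keyed by normalized drug names, and the drug names and finding are placeholders rather than clinical facts. A real system would key on a standard ontology such as RxNorm and retrieve from a vetted, versioned source before any text is generated.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical curated store: unordered drug pair -> (finding, source id).
# Entries are placeholders for illustration only, not clinical guidance.
CURATED_INTERACTIONS: dict[frozenset[str], tuple[str, str]] = {
    frozenset({"drug_a", "drug_b"}): ("increased bleeding risk", "KB-0001"),
}


@dataclass
class GroundedAnswer:
    text: str
    source_id: Optional[str]  # None means the system abstained


def normalize(name: str) -> str:
    """Crude normalization; a real system would map names to ontology codes."""
    return name.strip().lower().replace(" ", "_")


def check_interaction(drug1: str, drug2: str) -> GroundedAnswer:
    # frozenset makes the lookup order-independent: (A, B) == (B, A)
    key = frozenset({normalize(drug1), normalize(drug2)})
    hit = CURATED_INTERACTIONS.get(key)
    if hit is None:
        # No retrieved evidence: abstain instead of letting a model improvise.
        # A missing entry is not evidence that the combination is safe.
        return GroundedAnswer("No curated evidence found; refer to a pharmacist.", None)
    finding, source_id = hit
    return GroundedAnswer(f"{finding} (source: {source_id})", source_id)
```

The important design choice is the abstention branch: when retrieval comes back empty, the system says so and cites nothing, rather than handing the question to a generative model that may produce a confident but unsupported answer.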

The Inescapable Reality: Regulatory and Ethical Gateways

The biggest bottleneck slowing down the deployment of powerful health AI is not computation; it is trust, validation, and law. If the general AI race is a sprint, the health AI race is a marathon run through a minefield.

Navigating the FDA and SaMD Frameworks

When an AI model moves from providing general wellness tips to advising on medication adjustments or flagging a critical finding on a scan, it crosses the threshold into becoming a regulated medical device. In the US, such software falls under FDA oversight as Software as a Medical Device (SaMD). The hard problem is change over time: traditional medical devices are effectively 'locked' once cleared or approved, whereas generative models are retrained and updated frequently, and some designs are meant to keep learning after deployment. How should a regulator oversee an AI that keeps changing after it has been approved?

For Microsoft and its competitors, the immediate practical implication is a need for robust, transparent validation pipelines that can prove safety and efficacy not just at launch, but continuously. This regulatory uncertainty slows adoption, regardless of technical capability.
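A continuous validation pipeline can be reduced, at its core, to a release gate: every new model version is scored against a fixed, held-out reference set, and it ships only if it clears pre-registered thresholds and does not regress against the currently approved version. The sketch below assumes binary findings and uses hypothetical threshold values; it illustrates the gating logic only and is not an FDA-accepted protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metrics:
    sensitivity: float  # true positives / all actual positives
    specificity: float  # true negatives / all actual negatives


def evaluate(predictions: list[bool], labels: list[bool]) -> Metrics:
    """Score binary predictions against reference labels."""
    tp = sum(p and y for p, y in zip(predictions, labels))
    tn = sum((not p) and (not y) for p, y in zip(predictions, labels))
    positives = sum(labels)
    negatives = len(labels) - positives
    return Metrics(
        sensitivity=tp / positives if positives else 0.0,
        specificity=tn / negatives if negatives else 0.0,
    )


# Hypothetical pre-registered floors and allowed regression margin.
MIN_SENSITIVITY = 0.90
MIN_SPECIFICITY = 0.80
MAX_REGRESSION = 0.01


def release_gate(candidate: Metrics, approved: Metrics) -> bool:
    """True only if the candidate clears the absolute floors AND does not
    regress on either metric by more than MAX_REGRESSION."""
    meets_floor = (candidate.sensitivity >= MIN_SENSITIVITY
                   and candidate.specificity >= MIN_SPECIFICITY)
    no_regression = (candidate.sensitivity >= approved.sensitivity - MAX_REGRESSION
                     and candidate.specificity >= approved.specificity - MAX_REGRESSION)
    return meets_floor and no_regression
```

Checking both absolute floors and relative regression matters: a new version can clear the minimum bar while still being measurably worse than what clinicians already rely on.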

Data Privacy: The HIPAA Minefield

Copilot Health’s power relies on merging disparate, highly sensitive datasets such as wearable streams and EHRs. In the US, EHR data held by healthcare providers and their business associates falls under HIPAA, while data collected directly by consumer wearables and apps often falls outside it. Microsoft has world-class compliance expertise (Azure Health Data Services), but bringing personal, unstandardized wearable data into a HIPAA-compliant workflow still requires novel technical and legal frameworks. Consumers must trust that their real-time physiological data will not be leaked or misused, even when it is aggregated and de-identified for model training.
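The phrase "aggregated and de-identified" hides concrete engineering choices. The minimal sketch below shows two of them under stated assumptions: dropping direct identifiers from each record, and releasing cohort-level statistics only when a group is large enough that individuals are harder to single out. The field names and the minimum group size of 10 are hypothetical, and these steps alone do not satisfy HIPAA’s de-identification standards.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical direct identifiers to strip before any secondary use.
DIRECT_IDENTIFIERS = {"name", "email", "device_serial", "patient_id"}
MIN_GROUP_SIZE = 10  # assumed suppression threshold for small cohorts


def strip_identifiers(record: dict) -> dict:
    """Return a copy of the record without direct identifier fields."""
    return {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}


def cohort_resting_hr(records: list[dict]) -> dict[str, float]:
    """Mean resting heart rate per age band, suppressing cohorts that are too small."""
    groups: dict[str, list[float]] = defaultdict(list)
    for record in map(strip_identifiers, records):
        groups[record["age_band"]].append(record["resting_hr"])
    return {band: mean(values) for band, values in groups.items()
            if len(values) >= MIN_GROUP_SIZE}
```

Suppressing small cohorts addresses one re-identification route (a single 85-year-old in a dataset is easy to spot), but quasi-identifiers such as dates, locations, and rare conditions need their own treatment.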

Practical Implications: What Businesses and Society Must Prepare For

The arrival of sophisticated health AI impacts everyone, from technology providers to patients receiving care.

For Healthcare Providers (HCPs): Augmentation, Not Replacement

In the short term, Copilot Health and similar tools will serve as powerful *augmentation* tools for clinicians. They can drastically reduce the time spent on documentation, synthesize complex patient histories before a consultation, and cross-reference symptoms against large collections of similar cases in seconds. This frees up human bandwidth for empathy and complex decision-making, the parts of medicine AI cannot yet replicate.

However, CIOs and practice managers need actionable plans now regarding data infrastructure. Integrating legacy EHR systems with modern, real-time streaming data from consumer devices is a massive IT undertaking that must begin immediately if they wish to leverage these tools effectively.
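Much of that integration work is translation into a shared standard. HL7 FHIR is widely used for exchanging clinical data, and the sketch below maps a single wearable heart-rate sample into a minimal FHIR R4 Observation resource, built as a plain dictionary. It assumes LOINC code 8867-4 (heart rate) and UCUM units, uses a hypothetical patient reference, and omits fields a production profile would require.

```python
from datetime import datetime, timezone


def wearable_hr_to_fhir(patient_ref: str, bpm: float, measured_at: datetime) -> dict:
    """Map one heart-rate sample to a minimal FHIR R4 Observation (as a dict).

    `measured_at` must be timezone-aware; it is normalized to UTC.
    """
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "vital-signs",
            }]
        }],
        "code": {
            "coding": [{"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}]
        },
        "subject": {"reference": patient_ref},
        # FHIR dateTime values that include a time must also carry a timezone offset.
        "effectiveDateTime": measured_at.astimezone(timezone.utc).isoformat(),
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }
```

For example, `wearable_hr_to_fhir("Patient/example", 62, datetime(2024, 5, 1, 7, 30, tzinfo=timezone.utc))` produces an Observation whose `effectiveDateTime` is `2024-05-01T07:30:00+00:00`. Mapping at the edge like this lets legacy EHR systems that already speak FHIR consume device data without bespoke per-vendor parsers, though streaming at wearable sample rates usually calls for aggregation before anything reaches the record.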

For Patients: The Rise of the Empowered (and Overwhelmed) Consumer

For the average consumer, Copilot Health promises unprecedented access to personalized health insights. Imagine an AI that knows your genetic profile, diet, sleep patterns, and medication schedule, flagging a subtle change in your resting heart rate correlated with environmental data long before you feel ill. This is the promise of truly preventative health.
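Flagging a "subtle change" usually means comparing today’s value against a personal baseline rather than a population norm. A minimal sketch, assuming one resting heart rate reading per day, a hypothetical 28-day baseline window, and a hypothetical z-score threshold of 2.5, could look like this; a real system would also need to handle sensor gaps, training load, and the environmental context described above.

```python
from statistics import mean, stdev


def flag_resting_hr(daily_rhr: list[float], window: int = 28,
                    z_threshold: float = 2.5, min_sigma: float = 1.0) -> bool:
    """Return True if the latest reading deviates from the personal baseline.

    The baseline is the `window` days immediately before the latest reading.
    """
    if len(daily_rhr) < window + 1:
        return False  # not enough history to establish a baseline
    baseline = daily_rhr[-window - 1:-1]
    mu = mean(baseline)
    # Floor the spread (assumed 1 bpm) so a very flat baseline does not make
    # a one-beat change look alarming, and to avoid division by zero.
    sigma = max(stdev(baseline), min_sigma)
    z = (daily_rhr[-1] - mu) / sigma
    return abs(z) >= z_threshold
```

A personal baseline matters because a resting heart rate of 70 bpm is unremarkable in general but may be a meaningful jump for someone who normally sits near 58 to 60.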

The risk, however, is alert fatigue and anxiety: if the AI generates too many low-stakes warnings, patients may start ignoring critical ones. Businesses funding these initiatives should also actively study how personalized health nudges change behavior. Are we creating healthier citizens, or simply more worried ones?
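One common mitigation for alert fatigue is to triage by severity: never suppress critical alerts, route informational ones to a periodic digest, and rate-limit repeated warnings. The sketch below assumes three hypothetical severity levels and a 24-hour cooldown per alert type; the values are illustrative, not clinically validated.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import IntEnum


class Severity(IntEnum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3


@dataclass
class AlertGate:
    cooldown: timedelta = timedelta(hours=24)  # assumed per-type cooldown
    last_sent: dict[str, datetime] = field(default_factory=dict)

    def should_notify(self, alert_type: str, severity: Severity, now: datetime) -> bool:
        """Decide whether to push a notification now."""
        if severity is Severity.CRITICAL:
            return True  # critical alerts are never suppressed
        if severity is Severity.INFO:
            return False  # informational items go to a digest, not a push
        previous = self.last_sent.get(alert_type)
        if previous is not None and now - previous < self.cooldown:
            return False  # suppress repeats of the same warning within the cooldown
        self.last_sent[alert_type] = now
        return True
```

The asymmetry is deliberate: the cost of one missed critical alert outweighs many redundant ones, so rate limiting applies only below the critical tier.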

For AI Developers: The Specialization Premium

The market is telling developers clearly: specialization earns trust (and revenue) faster than generalization. Companies succeeding in health AI will be those that demonstrate domain mastery—understanding the difference between correlation and causation in a clinical context, and building models that admit when they don't know the answer. Investment in areas like multimodal fusion and explainable AI (XAI) will yield higher returns in health than in general consumer applications.
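Building models that "admit when they don't know" is often approached as selective prediction: the system returns an answer only when its confidence clears a threshold and otherwise defers to a human. The sketch below assumes the model exposes a probability for each candidate answer and uses a hypothetical threshold of 0.85; getting those probabilities well calibrated is itself a hard, separate problem.

```python
from typing import Optional


def answer_or_abstain(candidates: dict[str, float], threshold: float = 0.85) -> Optional[str]:
    """Return the top candidate if its probability clears the threshold, else None.

    `candidates` maps each possible answer to a model-assigned probability.
    None signals abstention: the case should be routed to a clinician.
    """
    if not candidates:
        return None
    best, prob = max(candidates.items(), key=lambda item: item[1])
    return best if prob >= threshold else None
```

Raising the threshold trades coverage for reliability: the system answers fewer cases but is wrong less often on the ones it does answer, which is usually the right trade in a clinical setting.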

Actionable Insights for Navigating the Health AI Horizon

To move forward constructively, both technologists and decision-makers must focus on three actionable areas:

- Demand Transparency in Data Provenance: Technology leaders must insist on clear audit trails for every piece of advice Copilot Health offers. Where did the input data come from (wearable vs. EHR vs. general LLM knowledge)? This traceability is non-negotiable for clinical sign-off.

- Invest in Regulatory Sandboxes: Instead of waiting for final regulatory frameworks, businesses should actively participate in emerging FDA/EU regulatory "sandboxes" or pilot programs designed for rapidly evolving AI. Proactive engagement minimizes future disruption.

- Prioritize Human-in-the-Loop Design: For the next five years, success in health AI will be measured by how seamlessly the technology collaborates with, rather than replaces, human expertise. Design interfaces that present AI insights as suggestions with explicit confidence levels, clearly separated from actionable clinical orders.
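The first and third recommendations translate directly into data design. The minimal sketch below, in which every type and field name is hypothetical, tags each input behind an AI insight with its origin (wearable, EHR, or general model knowledge) and keeps insights as a separate type from clinical orders, so that an order can only be created through an explicit sign-off that records which clinician approved it.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Origin(Enum):
    WEARABLE = "wearable"
    EHR = "ehr"
    MODEL_KNOWLEDGE = "model_knowledge"  # general LLM knowledge, no patient record behind it


@dataclass(frozen=True)
class Evidence:
    origin: Origin
    reference: str  # hypothetical record or data-stream identifier


@dataclass(frozen=True)
class AIInsight:
    """A suggestion only: it carries provenance and confidence, never authority."""
    text: str
    confidence: float  # model-reported confidence in [0, 1] (assumed)
    evidence: tuple[Evidence, ...]

    def origins(self) -> frozenset[Origin]:
        """Answer 'where did the input data come from?' for this insight."""
        return frozenset(e.origin for e in self.evidence)


@dataclass(frozen=True)
class ClinicalOrder:
    text: str
    approved_by: str
    approved_at: datetime
    based_on: AIInsight  # keeps the audit trail back to the insight and its inputs


def sign_off(insight: AIInsight, clinician_id: str,
             edited_text: Optional[str] = None) -> ClinicalOrder:
    """The only path from suggestion to order: an identified clinician approves it."""
    if not clinician_id:
        raise ValueError("an order requires an identified clinician")
    return ClinicalOrder(
        text=edited_text if edited_text is not None else insight.text,
        approved_by=clinician_id,
        approved_at=datetime.now(timezone.utc),
        based_on=insight,
    )
```

Keeping `AIInsight` and `ClinicalOrder` as distinct types means the separation is enforced by the data model rather than by interface convention alone, and every order carries a traceable chain back to the data that prompted it.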

The race to medical superintelligence, kicked into high gear by Microsoft’s Copilot Health, is one of the most significant technological challenges of the current decade. It requires merging the rapid pace of foundation-model development with the slow, deliberate rigor of medical science. The winners will be those who master the technical integration of multimodal data while earning the profound trust required to operate at the intersection of data, diagnosis, and human life.