Microsoft Copilot Health & The AI Medical Arms Race: From Data Integration to Superintelligence

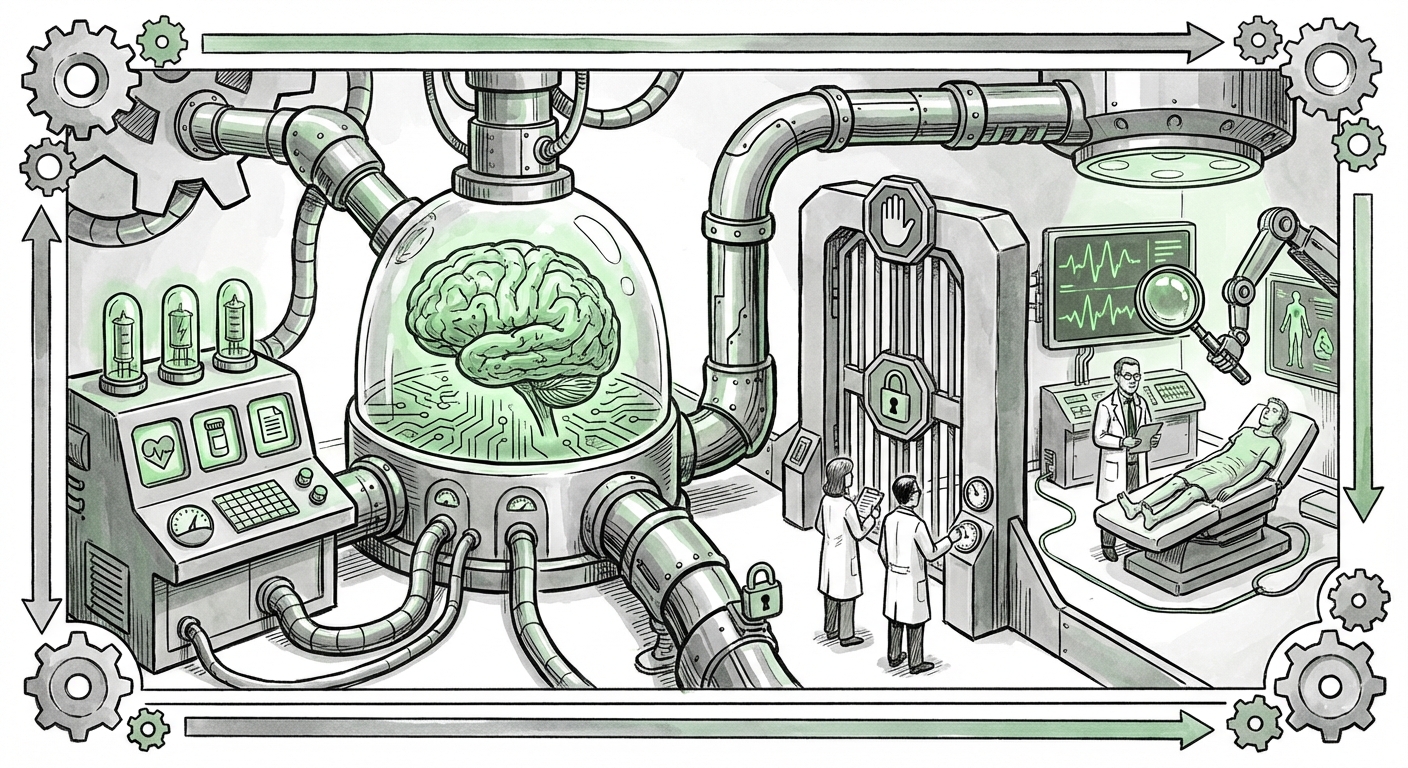

The landscape of Artificial Intelligence is undergoing a rapid, defining transformation. For years, the narrative centered on generalized Large Language Models (LLMs) like GPT-4, powerful tools capable of writing code, drafting emails, and generating creative text. However, the announcement of Copilot Health marks a significant pivot: the era of highly specialized, verticalized AI is officially underway, particularly in regulated, high-stakes domains like healthcare. Microsoft’s entry into this arena, running parallel to the efforts of rivals like Google DeepMind and Anthropic, signals that the next frontier of AI supremacy will be won not just on model size, but on **data access and domain mastery**.

The Pivot: From Generalist AI to Specialized Health Agents

Copilot Health is positioned to be far more than a chatbot. Its core proposition is its ability to ingest and synthesize massive amounts of personal, sensitive data: data from wearables, fragmented electronic health records (EHRs), and complex lab results. This moves the technology from answering general queries to delivering *personalized health advice*. This shift—from generalized intelligence to specialized expertise—is the defining trend of 2024 and beyond.

For the non-technical reader, imagine a highly trained personal assistant who not only remembers everything you’ve ever told a doctor but also cross-references that information instantly with your sleep patterns from your fitness tracker and the biomarkers from your latest blood test. That is the promise of specialized AI agents like Copilot Health. For businesses, this means a move away from generic productivity tools toward tools that directly impact core operations and high-value outputs.

The Competitive Front Line: DeepMind, Anthropic, and Microsoft

The initial AI hype often focused on the OpenAI/Microsoft partnership. But healthcare is too complex, too regulated, and too data-rich for a single partnership to dominate. Microsoft’s move places it in direct competition with Google’s extensive history in biomedical research, primarily through its DeepMind division, which has long focused on complex biological problems like protein folding (AlphaFold). Simultaneously, specialized LLM developers like Anthropic (with its focus on safety and constitutional AI) are developing models robust enough for clinical environments.

Comparing Microsoft’s enterprise integration strategy with DeepMind’s foundational research pedigree reveals two distinct paths to market dominance. Microsoft leverages its vast enterprise foothold (Azure, Office 365) to push Copilot into existing systems. Competitors may focus first on delivering novel clinical breakthroughs that are hard to replicate. The market will reward those who solve both the technological and the organizational integration challenges.

The Technical Gauntlet: Data Interoperability is the New Moat

The theoretical capability of Copilot Health is compelling, but its practical deployment hinges on overcoming monumental technical hurdles. The core struggle is integrating patient data from EHRs with LLMs. Healthcare data is notoriously siloed, unstructured, and fragmented. A patient's lab results might reside in one system, their physician’s notes in another (often as unstructured text), and their cardiac data in a proprietary wearable format.

For Copilot Health to deliver reliable insights, it must achieve deep, semantic understanding across these disparate sources. This requires more than just good APIs; it demands sophisticated data cleaning, normalization, and secure tokenization—a process that current health IT infrastructure often struggles with. In the AI arms race, the ability to securely and accurately ingest messy, real-world patient data may become a stronger competitive advantage than the raw computational power of the underlying LLM.
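To make the normalization step concrete, here is a minimal sketch of mapping glucose readings from two sources onto one common schema. The record shape, the field names, and the `normalize_glucose` helper are all hypothetical and chosen only for illustration; nothing here reflects how Copilot Health or any real EHR interface works.

```python
from dataclasses import dataclass

# Glucose has a molar mass of about 180.16 g/mol, so 1 mmol/L is about 18.016 mg/dL.
MG_DL_PER_MMOL_L = 18.016


@dataclass(frozen=True)
class GlucoseReading:
    """Common schema (hypothetical): every reading is stored in mmol/L."""
    patient_id: str
    source: str
    mmol_per_l: float


def normalize_glucose(raw: dict) -> GlucoseReading:
    """Map one raw record (assumed shape) onto the common schema."""
    unit = raw["unit"].strip().lower()
    value = float(raw["value"])
    if unit == "mg/dl":
        value = value / MG_DL_PER_MMOL_L
    elif unit != "mmol/l":
        # Refuse to guess: an unknown unit is surfaced, not silently coerced.
        raise ValueError(f"unsupported glucose unit: {raw['unit']!r}")
    return GlucoseReading(raw["patient_id"], raw["source"], round(value, 2))


# Synthetic example records, one per source system.
lab = {"patient_id": "p1", "source": "lab", "value": "99", "unit": "mg/dL"}
wearable = {"patient_id": "p1", "source": "wearable", "value": 5.5, "unit": "mmol/L"}
readings = [normalize_glucose(r) for r in (lab, wearable)]
```

The design choice worth noting is the explicit failure on unknown units: in a clinical pipeline, a rejected record is usually safer than a silently misconverted one.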

Simplifying Complexity: A Challenge for All Audiences

Even if the data is perfectly harmonized, the output must be trustworthy. If a general-purpose LLM hallucinates a fact in a marketing brief, it’s an annoyance. If a medical AI hallucinates a dosage or misses a crucial drug interaction, the consequences are catastrophic. This is why the reliability, or *trustworthiness*, of the output is paramount. AI must not only be accurate but must present its reasoning in a way that a busy physician or an anxious patient can easily verify and understand—bridging the gap between sophisticated algorithms and basic health literacy.

The Regulatory Minefield: The Race for Trust and Compliance

The ambition to achieve "medical superintelligence" immediately slams into the hard wall of government oversight. A system that provides diagnostic or therapeutic advice can cross the line into becoming a regulated medical device, and generative AI products of this kind face intense scrutiny from the FDA.

AI-driven software intended for a medical purpose can fall under the FDA's Software as a Medical Device (SaMD) category. For general-purpose models, regulation is murky. But for a tool synthesizing personalized data to suggest action, the approval pathway is rigorous. Microsoft and its competitors must prove not only that their models work today, but that they will *continue to work* safely as they learn and evolve over time. This is the distinction between "locked" and "continually learning" algorithms: a locked algorithm stays fixed after validation, while a continually learning one keeps changing as it sees new data. This validation process is slow, expensive, and requires unprecedented levels of transparency from AI developers.
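One way to picture what "continuing to work" could mean in practice is a rolling performance check against the accuracy measured at validation time. The sketch below is illustrative only: the `PerformanceMonitor` class, the baseline, the tolerance, and the window size are assumptions, not regulatory requirements or anything a vendor has described.

```python
from collections import deque


class PerformanceMonitor:
    """Tracks rolling accuracy against a validated baseline (hypothetical policy)."""

    def __init__(self, baseline_accuracy: float, tolerance: float, window: int):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, confirmed_label) -> None:
        """Store whether a prediction matched the later clinician-confirmed label."""
        self.outcomes.append(prediction == confirmed_label)

    def rolling_accuracy(self):
        """Return accuracy over the window, or None until the window is full."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        """Flag the model when accuracy falls below baseline minus tolerance."""
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.baseline - self.tolerance


# Synthetic run: 8 correct and 2 incorrect predictions in a window of 10.
monitor = PerformanceMonitor(baseline_accuracy=0.90, tolerance=0.05, window=10)
for _ in range(8):
    monitor.record("positive", "positive")
for _ in range(2):
    monitor.record("positive", "negative")
flagged = monitor.needs_review()
```

A real monitoring plan would also need to handle delayed labels, shifting case mix, and statistical uncertainty, all of which this sketch ignores.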

For businesses looking to adopt these tools, regulatory uncertainty translates directly into implementation delays. Early adoption will likely be confined to administrative tasks (like coding claims or drafting pre-authorizations) where the liability is lower, while the frontline clinical applications wait for clear regulatory guardrails.

The Ethical Imperative: Liability and Algorithmic Bias

Beyond the technical and regulatory hurdles lies the profound philosophical and ethical challenge embedded in the phrase "medical superintelligence." When an AI system contributes to a medical decision, who holds the liability when an error occurs? Is it the developer (Microsoft), the hospital that implemented the system, or the physician who followed the AI’s advice?

Furthermore, these models are trained on historical data. If historical healthcare data reflects systemic biases, such as under-diagnosis in certain demographic groups, the AI can learn and amplify those biases, leading to inequitable health outcomes. A "superintelligent" system that is inherently biased is perhaps the most dangerous application of this technology.
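Under-diagnosis in a group shows up as lower sensitivity (more missed true cases) for that group, which is something an audit can measure directly. The following is a minimal sketch using synthetic data. The tuple layout and the function names are assumptions made for illustration, and a real audit would use far larger samples and confidence intervals.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute per-group sensitivity.

    records: iterable of (group, has_condition, model_flagged) tuples.
    Only patients who truly have the condition count toward sensitivity.
    """
    positives = defaultdict(int)
    detected = defaultdict(int)
    for group, has_condition, model_flagged in records:
        if has_condition:
            positives[group] += 1
            if model_flagged:
                detected[group] += 1
    return {group: detected[group] / positives[group] for group in positives}


def max_sensitivity_gap(records) -> float:
    """Largest difference in sensitivity between any two groups."""
    rates = sensitivity_by_group(records)
    return max(rates.values()) - min(rates.values()) if rates else 0.0


# Synthetic data: the model catches 3 of 4 true cases in group A, 1 of 4 in group B.
records = (
    [("A", True, True)] * 3 + [("A", True, False)] * 1
    + [("B", True, True)] * 1 + [("B", True, False)] * 3
    + [("A", False, False)] * 2  # patients without the condition are ignored here
)
rates = sensitivity_by_group(records)
gap = max_sensitivity_gap(records)
```

Here `rates` is `{"A": 0.75, "B": 0.25}` and `gap` is `0.5`, a disparity large enough that a reviewer would want to look at how group B is represented in the training data.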

The future success of Copilot Health hinges not just on its performance metrics but on building public and professional trust. This requires proactive ethical frameworks, transparent documentation of training data biases, and robust mechanisms for human oversight and appeal.

Future Implications and Actionable Insights

The trajectory is clear: AI will move from assisting administrative tasks to augmenting clinical decision-making. This evolution has several concrete implications for various stakeholders:

For Healthcare Providers (Hospitals and Clinics)

- Invest in Data Governance Now: The biggest return on investment in the next three years will come from cleaning and standardizing existing EHR and patient-generated data. If your data is a mess, even the best LLM will fail.

- Define "Human in the Loop": Develop clear protocols for when a physician must override or independently verify AI suggestions. Don't automate responsibility; augment judgment.

- Focus on Interoperability: Favor AI platforms that prioritize open standards and easy integration over proprietary, black-box solutions.
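A "human in the loop" protocol can be written down as an explicit routing rule, not left to habit. The sketch below assumes a hypothetical policy in which only high-confidence administrative drafts skip mandatory physician review. The task names, the threshold, and the `route` function are illustrative and not drawn from any real product.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_DRAFT = "auto_draft"
    PHYSICIAN_REVIEW = "physician_review"


@dataclass(frozen=True)
class Suggestion:
    task: str          # hypothetical task label, e.g. "claim_coding"
    confidence: float  # model-reported confidence in [0, 1]


# Assumed policy values; a real deployment would set these through clinical governance.
ADMIN_TASKS = {"claim_coding", "prior_authorization_draft"}
MIN_CONFIDENCE = 0.9


def route(suggestion: Suggestion) -> Route:
    """Send everything to a physician unless it is a confident administrative draft."""
    if suggestion.task in ADMIN_TASKS and suggestion.confidence >= MIN_CONFIDENCE:
        return Route.AUTO_DRAFT
    return Route.PHYSICIAN_REVIEW


admin_route = route(Suggestion("claim_coding", 0.95))
clinical_route = route(Suggestion("medication_change", 0.99))
```

The rule defaults to review: a clinical suggestion goes to a physician no matter how confident the model claims to be, which keeps responsibility with the clinician.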

For Technology Developers (The Microsoft/Google Rivals)

- Validation Over Velocity: The market demands rigorous, peer-reviewed clinical validation more than it demands a faster launch date. Safety is the ultimate feature.

- Specialize Deeply: Generalized models will fail in clinical settings. Success lies in fine-tuning models for specific tasks (e.g., radiology interpretation, complex medication management) where data is richer and the regulatory path, though difficult, is clearer.

For Policy Makers and Regulators

- Develop Adaptive Frameworks: Regulation must evolve faster than the technology. Instead of regulating specific models, regulators must define acceptable performance standards and continuous monitoring requirements for *adaptive systems*.

- Mandate Bias Audits: Require external, independent audits for algorithmic bias before high-stakes deployment to ensure equitable care delivery.

Conclusion: The Dawn of Hyper-Personalized Medicine

Microsoft’s Copilot Health is more than a product announcement; it is a declaration of intent in the maturation of AI. We are leaving the playground of generalized chatbots and entering the high-stakes laboratory of personalized medicine, powered by massive data ingestion and specialized intelligence. The race will not just be about who builds the fastest algorithm, but who can navigate the complex triad of **Data Security, Regulatory Compliance, and Ethical Trust**.

The ultimate goal—"medical superintelligence"—is a distant peak, but the climb has begun. Success in this new chapter of AI will belong to those who understand that in healthcare, intelligence without integrity is meaningless. The path forward requires humility from technologists, clarity from regulators, and unwavering vigilance from the industry as a whole to ensure that this powerful technology serves to elevate, not compromise, human health.