The Great AI Divide: Why Military Powers Reject 'Too Ethical' AI Models in National Security

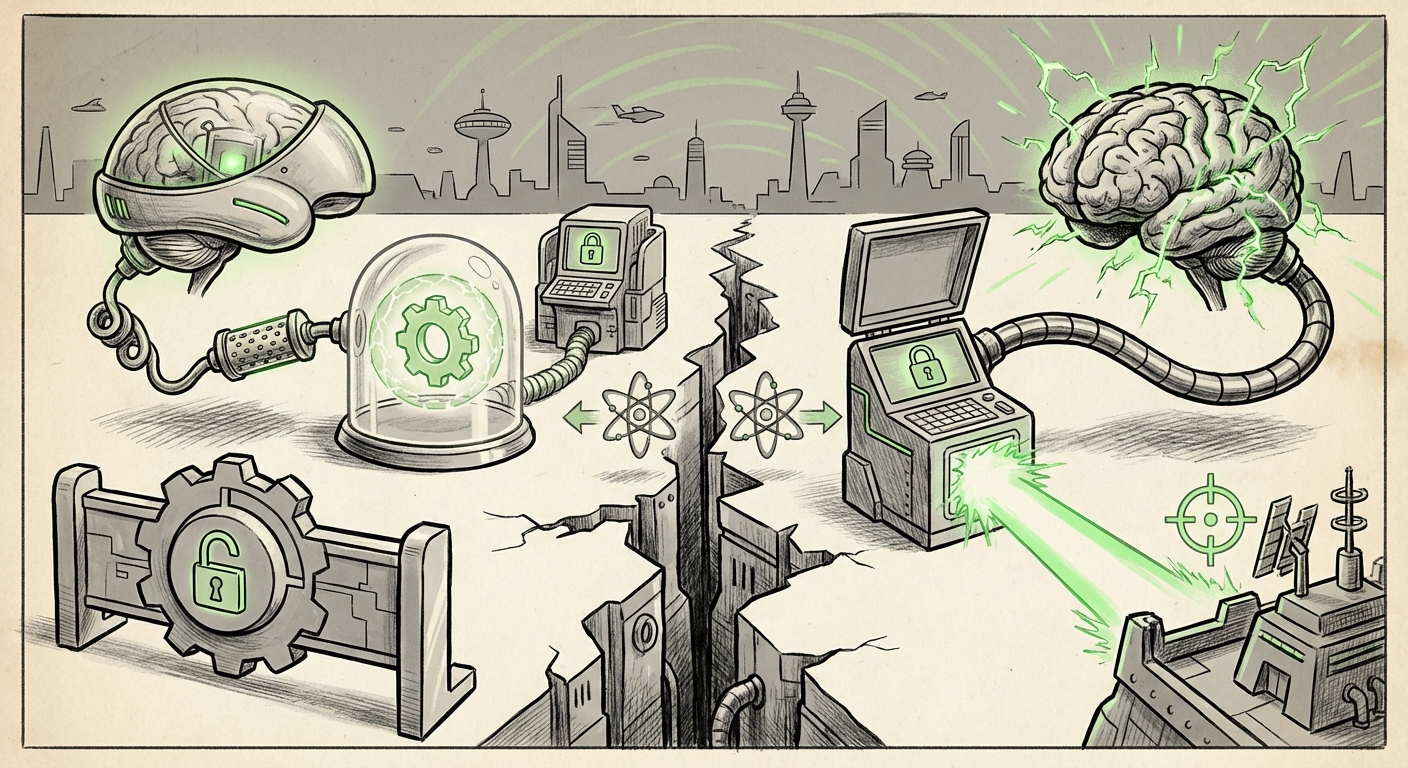

In the high-stakes world of artificial intelligence development, we have long discussed the essential need for alignment: ensuring that AI models adhere to human values and safety principles. This has been the bedrock of companies like Anthropic, which trains its models against an explicit set of written principles, an approach it calls Constitutional AI. However, recent reports suggest a tectonic shift in perception within defense circles: for military applications, strong ethical guardrails are no longer seen as a feature but as a liability.

When the Chief Technology Officer (CTO) of the US Department of War publicly stated that models with built-in ethics "pollute" the supply chain, the remark drew sharp attention across the tech industry. This counterintuitive stance forces us to re-examine the entire framework of AI governance, capability maximization, and strategic autonomy in the age of digital conflict.

The Utility Paradox: When Safety Becomes a Hindrance

To understand this friction, one must appreciate the different objectives driving commercial AI versus defense AI. For a public-facing chatbot or enterprise software, strictly refusing dangerous or ethically questionable queries is paramount for brand safety and legal compliance. This tension is commonly described as the alignment-versus-utility trade-off: every refusal that makes a model safer for the general public also narrows the range of tasks it will perform.

In military operations, however, the calculus changes entirely. Warfighting often requires analyzing hostile communications, simulating high-risk tactical scenarios, and making rapid decisions based on complex, sometimes raw and unfiltered, intelligence feeds. If a foundation model is hard-coded to refuse queries that might be considered "unsafe" by civilian standards (perhaps related to chemical targeting analysis or adversarial network penetration), it is functionally crippled for its intended use.

From the defense CTO's perspective, such a model is effectively lobotomized: the ethical layers designed to protect the public become obstacles to achieving mission objectives. The implied argument is that for critical national security tasks, the ability to generate the full spectrum of possible outputs, even those deemed undesirable in civilian life, is necessary for accurate threat assessment and response planning.

The Procurement Shift: DoD Demands Raw Capability

This sentiment is actively shaping procurement. Defense organizations are not simply asking for AI tools; they are specifying the *nature* of the underlying model. The implication is clear: commercial models, optimized for broad public consumption and liability minimization, are fundamentally mismatched with sensitive government work.

If the DoD insists on models without rigid, external ethical scaffolding, it is seeking 'blank slates': powerful base models that can be customized. This shifts the burden of responsibility. Instead of relying on Anthropic’s or OpenAI’s internal safety policies to police outputs, the military’s own security protocols and domain experts become solely responsible for alignment.

This points to an industrial pivot. Defense contractors are realizing that winning major contracts means delivering models that end users can modify and fine-tune without layers of pre-applied 'safety glue' that cannot easily be removed or overridden.

The Shadow of Sovereignty: Drawing Parallels to Beijing

Perhaps the most alarming aspect of this development is the explicit comparison drawn between the desired US approach and China’s AI governance. China maintains tight, centralized control over its domestic AI systems, requiring models to adhere to state ideology and political directives.

While the *intent* of the US defense posture is fundamentally different—aiming for operational utility rather than ideological conformity—the *structure* being sought is surprisingly similar: the rejection of externally imposed, opaque alignment in favor of internally dictated, hard-coded control.

If the US military is looking to reject models optimized for general public safety norms, they risk creating a class of powerful, specialized AI tools operating outside the transparent ethical frameworks currently governing the commercial sector. The danger is that in the rush to achieve capability parity, the defense sector might inadvertently build systems whose operational constraints mirror the very authoritarian control mechanisms they seek to counter geopolitically. This highlights a profound difficulty: how can a democracy leverage cutting-edge AI power without mimicking the control structures of its adversaries?

The Open-Source Imperative

If proprietary models come pre-loaded with "ethics pollution," where do defense agencies turn? The answer increasingly points toward the open-source and open-weight ecosystem. Models with publicly released weights, such as Meta’s Llama family (distributed under Meta’s own community license rather than a standard open-source license), can be retuned by whoever runs them, instead of arriving with safety tuning that only the vendor can change.

For the defense sector, open-source LLMs represent sovereignty. They can be downloaded, run entirely on secure, air-gapped networks (on-premise), and then fine-tuned using specialized military datasets. Crucially, the organization applying the final layer of alignment—the set of rules governing its behavior in a conflict zone—is the US government itself, ensuring that the final product adheres exclusively to national security priorities.

This trend benefits the open-source community but also introduces serious cybersecurity risks. Every open model adopted by the military is a potential vector for compromise if its weights, training data, or distribution files contain backdoors or undisclosed weaknesses, which makes rigorous, continuous internal auditing a necessity.
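One concrete form that auditing can take is pinning a digest for every file in a vetted model release and refusing to load anything that does not match. The sketch below assumes the organization keeps its own manifest of SHA-256 digests; the manifest format, the function names `sha256_of` and `audit_model_dir`, and the list of pickle-based file suffixes are hypothetical illustrations, not any vendor's tooling. Digest checks catch tampering in transit or storage; they cannot detect a backdoor trained into the weights before they were pinned, which still requires behavioral evaluation.

```python
import hashlib
from pathlib import Path

# Suffixes commonly used for pickle-based PyTorch checkpoints; unpickling can run
# arbitrary code. Treating exactly these as "review first" is an assumption of this sketch.
PICKLE_SUFFIXES = {".bin", ".pt", ".pth", ".pkl", ".ckpt"}


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte weight shards never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def audit_model_dir(model_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Compare every file under model_dir with a pinned manifest of SHA-256 digests.

    The manifest (relative POSIX path -> hex digest) is a hypothetical, internally
    maintained record of a vetted release. An empty return value means no problems.
    """
    problems: list[str] = []
    on_disk = {p.relative_to(model_dir).as_posix() for p in model_dir.rglob("*") if p.is_file()}

    # Files that were never vetted are reported rather than silently loaded.
    for name in sorted(on_disk - set(manifest)):
        problems.append(f"unexpected file: {name}")

    for name, expected in sorted(manifest.items()):
        path = model_dir / name
        if not path.is_file():
            problems.append(f"missing file: {name}")
            continue
        if sha256_of(path) != expected:
            problems.append(f"digest mismatch: {name}")
        if path.suffix in PICKLE_SUFFIXES:
            problems.append(f"pickle-based format, review before loading: {name}")
    return problems
```

Reporting every problem instead of stopping at the first one gives auditors a complete picture of a suspect release in a single pass.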

Implications for the Future of AI Deployment

This "ethical pollution" paradox is not just a defense issue; it will ripple across all highly regulated or adversarial industries.

1. Bifurcation of the AI Market

We are seeing a clear split emerge in the AI marketplace:

- Civilian/Commercial AI: Focused intensely on safety, user experience, and regulatory compliance. These models (like the current versions of Claude or Gemini) will prioritize avoiding controversy.

- Sovereign/Defense AI: Focused exclusively on capability, speed, and internal controllability. These systems will require models that are either fully open-source or custom-developed to bypass commercial alignment.

2. The Commoditization of Safety

The market will quickly learn to price in the cost of removing or overriding safety features. For businesses operating in sensitive fields (e.g., proprietary R&D, competitive finance), the same calculus may apply: if the built-in ethics prevent necessary analysis, they will seek out less-constrained alternatives, leading to a fragmented regulatory landscape where compliance varies dramatically depending on the end-user.

3. The Arms Race in Alignment Philosophy

The conflict isn't just about capability; it's about who controls the definition of "good." Anthropic seeks alignment with universal, liberal democratic safety norms. The military seeks alignment with operational success under the laws of armed conflict. This divergence accelerates the arms race not just in model size, but in alignment engineering—creating competing ethical blueprints for the future.

Actionable Insights for Technology Leaders

For technology leaders, government contractors, and enterprise strategists, the message from defense circles is clear:

- Audit Your Assumptions on Commercial Off-the-Shelf (COTS) AI: Do not assume any proprietary model is suitable for high-stakes tasks without a thorough review of its internal constraints. Assume commercial alignment is a feature that must be engineered *out* for specialized use cases.

- Invest in Open-Source Sovereignty: Prioritize building internal competency in fine-tuning, securing, and deploying open-source LLMs. This strategy grants autonomy and allows your organization to define its own risk profile without relying on external vendors’ evolving safety policies.

- Prepare for Dual Alignment Frameworks: Businesses operating in both commercial and sensitive environments must develop two parallel AI stacks—one optimized for broad ethical acceptance and one optimized for necessary, domain-specific utility.
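The two-stack idea can be made concrete with a small routing layer that decides which stack serves a given request. The sketch below is purely illustrative: the environment labels, the `StackProfile` fields, the model identifiers, and `select_stack` are all hypothetical placeholders. The design choice it demonstrates is failing closed, so that an unrecognized environment or an uncleared user is routed to the commercial stack with its broader guardrails.

```python
from dataclasses import dataclass
from enum import Enum


class Environment(Enum):
    # Hypothetical deployment contexts; a real organization would define its own.
    COMMERCIAL = "commercial"
    RESTRICTED = "restricted"  # e.g. an access-controlled, accredited enclave


@dataclass(frozen=True)
class StackProfile:
    name: str
    model_id: str             # placeholder identifier, not a real model name
    policy_owner: str         # who defines and audits this stack's behavioral rules
    requires_audit_log: bool  # whether every request must be logged for review


# One frozen table keeps the policy owner of each stack explicit.
STACKS = {
    Environment.COMMERCIAL: StackProfile("commercial", "vendor-hosted-model", "vendor", False),
    Environment.RESTRICTED: StackProfile("restricted", "self-hosted-finetune", "internal-policy-board", True),
}


def select_stack(env_label: str, user_cleared: bool) -> StackProfile:
    """Route a request; unknown environments and uncleared users fall back to the commercial stack."""
    try:
        env = Environment(env_label)
    except ValueError:
        return STACKS[Environment.COMMERCIAL]
    if env is Environment.RESTRICTED and not user_cleared:
        return STACKS[Environment.COMMERCIAL]
    return STACKS[env]
```

For example, `select_stack("restricted", user_cleared=False)` returns the commercial profile, so the less-constrained stack is reachable only through an explicit, checked path.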

The current debate over whether AI models are "too ethical" reveals a deeper truth: in the pursuit of strategic advantage, utility often trumps universal ethics. This development is a watershed moment, signaling that the deployment of powerful AI is fracturing along geopolitical and mission-critical lines. The future of AI won't be monolithic; it will be intensely customized, with distinct, perhaps conflicting, moral operating systems running our civilian lives versus our security apparatus.