The "Ethical Pollution" Paradox: Why the US Military Rejects Overly Aligned AI

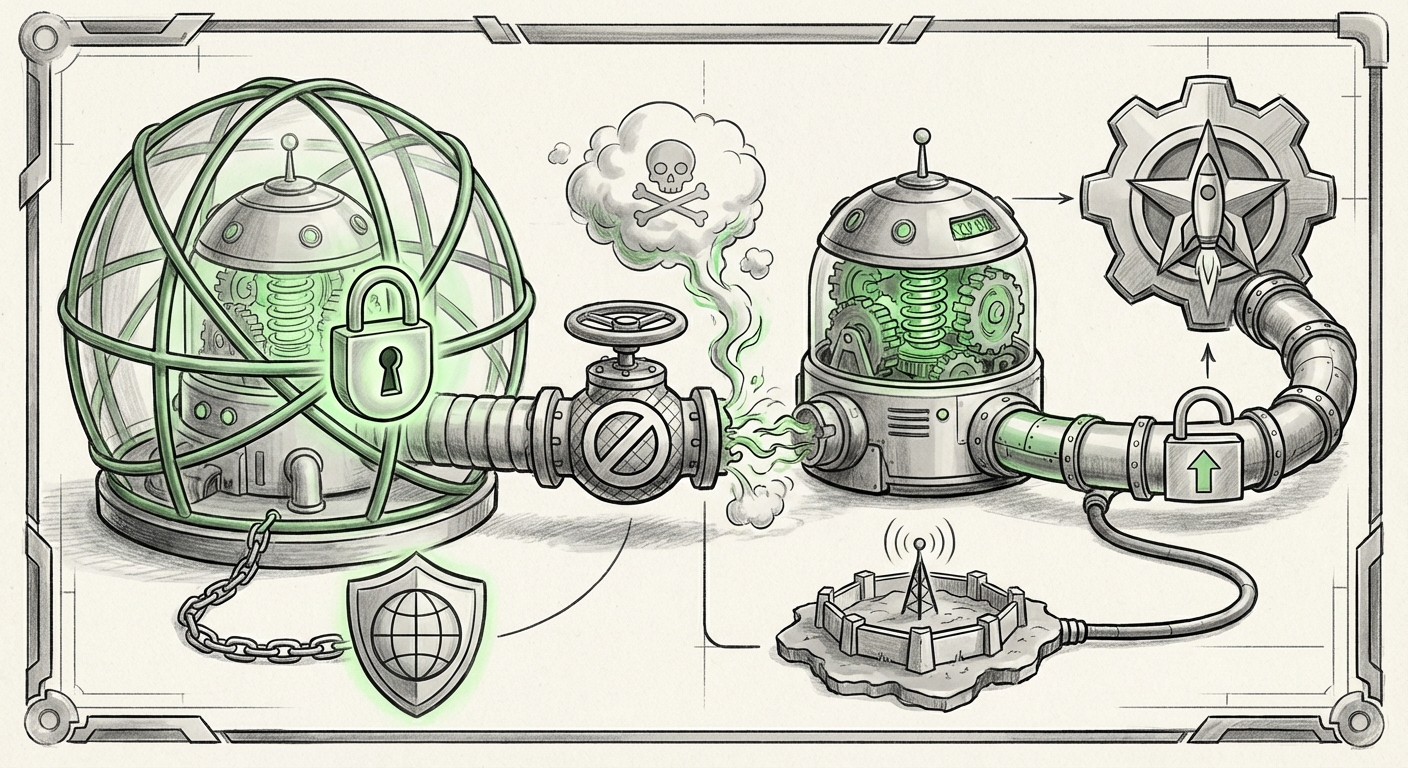

In the rapidly evolving landscape of artificial intelligence, the concept of "alignment"—ensuring AI systems adhere to human values and safety guidelines—is considered the paramount virtue. Companies invest billions to make their models helpful, harmless, and honest. Yet, a recent, highly provocative statement from the US Department of War’s Chief Technology Officer suggests a startling counter-narrative: that **AI models being *too* ethical can actively pollute the defense supply chain.**

This apparent contradiction, that safeguards can become liabilities, is not just fodder for tech gossip. It signals a fundamental divergence between commercial AI development and the specific, often brutal, requirements of national security. As we analyze this trend, we must ask which of two things we are seeing. It may be a new form of geopolitical AI control that mirrors the state-directed alignment practiced in China. Or it may be a genuine technical impasse in deploying general-purpose models for specialized military tasks.

The Core Conflict: Safety Versus Operational Necessity

The controversy centers on models like Anthropic’s Claude. These models are trained with a method Anthropic calls Constitutional AI, which teaches them to adhere closely to a fixed, written set of ethical principles (a "constitution") drawn partly from established documents such as the UN Universal Declaration of Human Rights. Such methods are generally credited with reducing risks like generating harmful code or promoting misinformation.

For the Department of Defense (DoD), however, this rigidity presents a critical problem. Military operations are governed by strict, context-dependent Rules of Engagement (ROE). ROE operate within the law of armed conflict, but they can authorize actions that a general-purpose ethical filter would refuse. Consider an AI assistant tasked with processing intelligence or optimizing logistics. If it is trained to refuse a request because the request marginally violates a generalized ethical principle, it becomes useless or, worse, dangerous. This holds even when the violation might save lives or achieve a critical mission objective.

In the CTO’s framing, such models "pollute" the supply chain by introducing friction, unpredictability, and potential points of failure into systems that demand absolute responsiveness. The underlying concern is control and predictability. If a foundation model's behavior is governed by abstract, privately defined ethical constitutions, the DoD loses the granular control it needs to adapt the AI to evolving battlefield requirements.

Contextualizing the Claim: DoD Strategy and Alignment Hurdles

To judge whether this is merely rhetoric or established policy, one has to examine the actual procurement standards. The DoD is actively seeking AI integration, but its frameworks prioritize verifiable reliability and auditability. Reports examining the DoD’s Responsible AI Strategy confirm that its focus is on governance, risk management, and operational deployment. The critical gap emerges when commercial "Responsible AI" frameworks conflict with military-specific needs.

For defense contractors and government tech leaders, this means that simply plugging in the latest, most highly rated commercial LLM might violate compliance mandates. The trained behavior of proprietary models like Claude is largely opaque. If the ethical guardrails cannot be inspected, the DoD cannot guarantee that the AI will operate within its mandated ROE. This is the vendor risk increasingly highlighted across the technology sector: relying on Commercial Off-the-Shelf (COTS) AI introduces an unacceptable lack of provenance and auditability for sensitive systems.

The Geopolitical Echo: Control vs. Liberal Values

The comparison drawn between the DoD's apprehension and China's approach to AI control is perhaps the most telling part of this development. China, under strict state supervision, mandates that AI systems must uphold core socialist values and the authority of the Communist Party. This is explicit, state-enforced alignment.

The paradox arises because the ethical guardrails built into Western models, while designed to prevent harm, often reflect liberal, human-centric values that may conflict with the uncompromising objectives of kinetic defense operations. This divergence forces us to ask a difficult geopolitical question, often explored by think tanks like the Center for Security and Emerging Technology (CSET): Whose values are embedded in the AI?

Suppose the US defense apparatus views Anthropic’s alignment as a form of "foreign" or overly prescriptive ideology that hampers operational freedom. In that case, it is effectively calling for AI models whose ethics are either highly flexible or entirely customizable by the end user (the DoD itself). This suggests a future where defense-grade AI must be developed or modified within sovereign boundaries. The goal would be to ensure that its ultimate allegiance remains to national security directives, not to broad commercial ethical consensus.

The Technical Tax of Over-Alignment

Beyond policy and geopolitics, there is a tangible technical cost to aggressive alignment, often termed the "alignment tax." This is where the advanced training techniques used by companies like Anthropic come into play.

Constitutional AI relies on iterative feedback loops. During training, the model critiques and revises its own outputs against a written constitution. The revised outputs are used for supervised fine-tuning, which is followed by reinforcement learning from AI-generated preference labels. This produces remarkably safe public-facing chatbots, but it can also degrade performance or reduce flexibility on some tasks. In specialized fields, this can translate to:

- Reduced Creativity/Adaptability: The model may decline to explore novel solutions necessary for wartime innovation because its training treats the path as too risky.

- Difficulty with Adversarial Data: The defense environment may present data or scenarios that the training constitution never anticipated. In those cases, the model may fall back on a refusal or give unhelpful, nonsensical answers rather than risk violating a broad rule.

- Bias Towards Inaction: In situations demanding rapid, decisive action, an over-aligned model may default to the safest, most passive response.
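The self-critique loop described above can be sketched in a few lines. This is a minimal toy sketch, not Anthropic's implementation. `draft`, `critique`, and `revise` are hypothetical stand-ins for model calls. The keyword-based critic is an assumption made to keep the example self-contained; in real Constitutional AI, the model itself judges its output against natural-language principles. The sketch shows how a broadly worded principle can turn a benign logistics request into a refusal, illustrating the "bias towards inaction" listed above.

```python
from dataclasses import dataclass

# Toy "constitution" (hypothetical): each principle has a name and trigger
# words. Real Constitutional AI uses natural-language principles that the
# model itself applies; keyword matching is a simplification for this sketch.
@dataclass(frozen=True)
class Principle:
    name: str
    triggers: tuple[str, ...]

CONSTITUTION = (
    Principle("avoid_harm", ("strike", "target", "weapon")),
    Principle("avoid_deception", ("decoy", "mislead")),
)

REFUSAL = "I can't help with that request."

def draft(prompt: str) -> str:
    """Hypothetical stand-in for the model's first answer."""
    return f"Plan for: {prompt}"

def critique(response: str, principle: Principle) -> bool:
    """Hypothetical critic: flags the response if any trigger word appears."""
    text = response.lower()
    return any(word in text for word in principle.triggers)

def revise(response: str, principle: Principle) -> str:
    """Hypothetical reviser: this toy version replaces flagged output with a refusal."""
    return REFUSAL

def critique_and_revise(prompt: str, max_rounds: int = 2) -> list[str]:
    """Return the chain of responses from first draft to final revision.

    In Constitutional AI these revisions become fine-tuning targets during
    training; they are not a filter applied at inference time.
    """
    history = [draft(prompt)]
    for _ in range(max_rounds):
        current = history[-1]
        for principle in CONSTITUTION:
            if critique(current, principle):
                current = revise(current, principle)
        if current == history[-1]:
            break  # no principle objected, so the response has converged
        history.append(current)
    return history
```

Calling `critique_and_revise("route supply convoy around weapon cache")` returns the original draft followed by the refusal. The word "weapon" trips the toy `avoid_harm` principle regardless of context, while a request like `"optimize fuel logistics"` passes through unchanged.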

For the AI researcher, this suggests that the architecture of *how* safety is implemented matters as much as the intent behind it. Military applications may require a different form of alignment. It would be based on strict, hierarchical command structures rather than on a vendor's general-purpose ethical preferences.

Future Implications for the AI Supply Chain

This DoD stance could reshape expectations for every business leveraging AI, particularly those operating in regulated or sensitive sectors.

1. The Rise of "Dual-Use" AI Segments

We will likely see a sharper segmentation in the AI market. One segment will serve public-facing, consumer, and general enterprise use (e.g., consumer search, customer service) and prioritize maximal safety and broad ethical alignment. The other, serving defense, finance, and critical infrastructure, will demand models optimized for utility and sovereign control, even if that means sacrificing some "ethical polish."

2. Demands for Model Transparency and Auditability

The friction with Anthropic’s closed framework underscores the military's need for verifiable AI. Future procurement contracts are likely to demand greater access to training data, model weights (or highly detailed telemetry), and alignment methodologies. Opaque, closed "black box" AI is likely to become unacceptable in high-security environments.

3. The Re-Emergence of Internal Model Development

If commercial solutions are deemed too contaminated by external values, governments and major defense primes will be forced to accelerate internal efforts to build or fine-tune models entirely within secure environments (potentially creating "ITAR-compliant" LLMs). This moves away from relying on the quick innovation cycle of Silicon Valley and back toward bespoke, carefully controlled government technology development.

Actionable Insights for Stakeholders

What does this mean for those building, buying, or deploying AI systems?

- For AI Developers: Recognize that "ethics" is not a monolithic concept. If you are targeting government or defense clients, you must decouple generalized ethical safety mechanisms from operational constraints. Consider offering an "Enterprise/Defense Mode" that allows the client to input their own governing protocols instead of relying solely on your company’s default constitution.

- For Business Leaders (Non-Defense): This highlights the enduring challenge of third-party risk. Your critical operational AI may be built on a model whose ethical stance could suddenly conflict with your operational needs, even outside a military setting. If so, you are exposed. Diversify your AI backbone across multiple foundation models to reduce dependency on a single value system.

- For Policymakers: The gap between commercial aspiration (safety for all) and state necessity (control for security) must be addressed through new standards. Policy needs to define the boundaries where ethical alignment transitions from being a societal benefit to a functional hindrance for national interests.
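The "Enterprise/Defense Mode" suggested for developers could be structured as layered policies, in which a client-supplied protocol overrides the vendor's defaults. The sketch below is hypothetical throughout and does not reflect any vendor's actual API. The categories, the `resolve` function, and the choice to keep a small set of vendor hard limits above the client layer are all assumptions for illustration.

```python
from enum import Enum

class Decision(Enum):
    ALLOW = "allow"
    REFUSE = "refuse"

# Hypothetical policy layers mapping request categories to decisions.
# None of these categories reflect a real vendor's policy.
VENDOR_HARD_LIMITS: dict[str, Decision] = {
    "bioweapon_synthesis": Decision.REFUSE,
}
VENDOR_DEFAULTS: dict[str, Decision] = {
    "targeting_analysis": Decision.REFUSE,
    "logistics": Decision.ALLOW,
}

def resolve(category: str, client_protocol: dict[str, Decision]) -> Decision:
    """Return the decision from the first layer that covers `category`.

    Precedence (an assumption of this sketch): vendor hard limits, then the
    client's own protocol, then vendor defaults. Uncovered categories are refused.
    """
    for layer in (VENDOR_HARD_LIMITS, client_protocol, VENDOR_DEFAULTS):
        if category in layer:
            return layer[category]
    return Decision.REFUSE

# Example: a defense client's protocol overrides one default and tries
# (unsuccessfully) to lift a hard limit.
defense_protocol = {
    "targeting_analysis": Decision.ALLOW,
    "bioweapon_synthesis": Decision.ALLOW,
}
print(resolve("targeting_analysis", defense_protocol))   # Decision.ALLOW
print(resolve("bioweapon_synthesis", defense_protocol))  # Decision.REFUSE
print(resolve("targeting_analysis", {}))                 # Decision.REFUSE
```

Placing the client layer between hard limits and defaults is only one design choice. A vendor could instead let the client layer take precedence everywhere. That would be closer to the full customizability the DoD appears to want, at the cost of any vendor-enforced floor.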

Conclusion: The Necessary Trade-Off in Power

The Department of War's stance against "overly ethical" AI is a stark reminder that power requires flexibility. Society champions AI safety in consumer applications, but the tools of statecraft operate on a different calculus. There, utility and unwavering adherence to military command structure trump abstract moral constraints.

This development crystallizes the future of AI deployment: the most powerful, transformative models will likely be bifurcated. One path leads to globally accessible, highly aligned consumer tools. The other leads to sovereign, potentially less palatable, but operationally necessary systems where the only "constitution" that matters is the one written by the command structure. This paradox is not a failure of ethics, but a clear indication that the ultimate application of intelligence dictates the ultimate definition of its values.