The Battlefield Data Revolution: How Sharing Live Combat Footage is Forging the Next Generation of Autonomous Warfare AI

The conflict in Ukraine has become a crucial, albeit tragic, proving ground for military technology. While attention often focuses on the resilience of specific weapon systems, a far more significant long-term development is occurring behind the scenes: the feeding of high-fidelity, real-time combat data into Artificial Intelligence (AI) training pipelines. When a nation opens its battlefield data to its allies specifically to train autonomous drones, it signals an inflection point—a shift from theoretical military AI development to rapid, practical, and data-driven deployment.

As an AI technology analyst, I find this development electrifying. It bypasses years of reliance on expensive, limited, or sanitized simulation data. This is not just about faster drones; it is about fundamentally accelerating the pace of military machine learning across allied defense postures. But what does this mean for the technology itself, the ethics surrounding it, and the global strategic landscape?

The Data Advantage: Real-World Scenarios Trump Simulation

Machine learning models, especially those powering autonomous systems, are entirely dependent on the quality and diversity of their training data. Imagine trying to teach a self-driving car to recognize a stop sign when it has only ever seen perfectly lit, clean images. Now imagine teaching an autonomous drone to identify a disguised enemy vehicle in heavy fog, electronic jamming, and under fire.

Real combat data offers invaluable context that simulations struggle to replicate:

- Adversarial Noise: Live footage includes the chaotic visual and electronic "noise" generated by modern conflict: smoke, sensor interference, poor weather, and enemy countermeasures. Models trained on such footage tend to be more robust to these conditions than models trained on clean data alone.

- Edge Cases: Battlefield scenarios present unique situations—a vehicle partially obscured by debris, an unexpected thermal signature—that are rare in pre-planned training exercises.

- Human Intent: Observing how targets behave in real threat scenarios refines AI predictions about movement and intent, crucial for autonomous targeting.

This sharing initiative leverages the immediacy of the conflict. Allies are effectively gaining access to a continuous, massive, and constantly updated dataset of what works and what fails against contemporary threats. This accelerates the development cycle for systems, turning years of R&D into months of refinement. Allies can also use this information to build robust **synthetic data generation** environments.

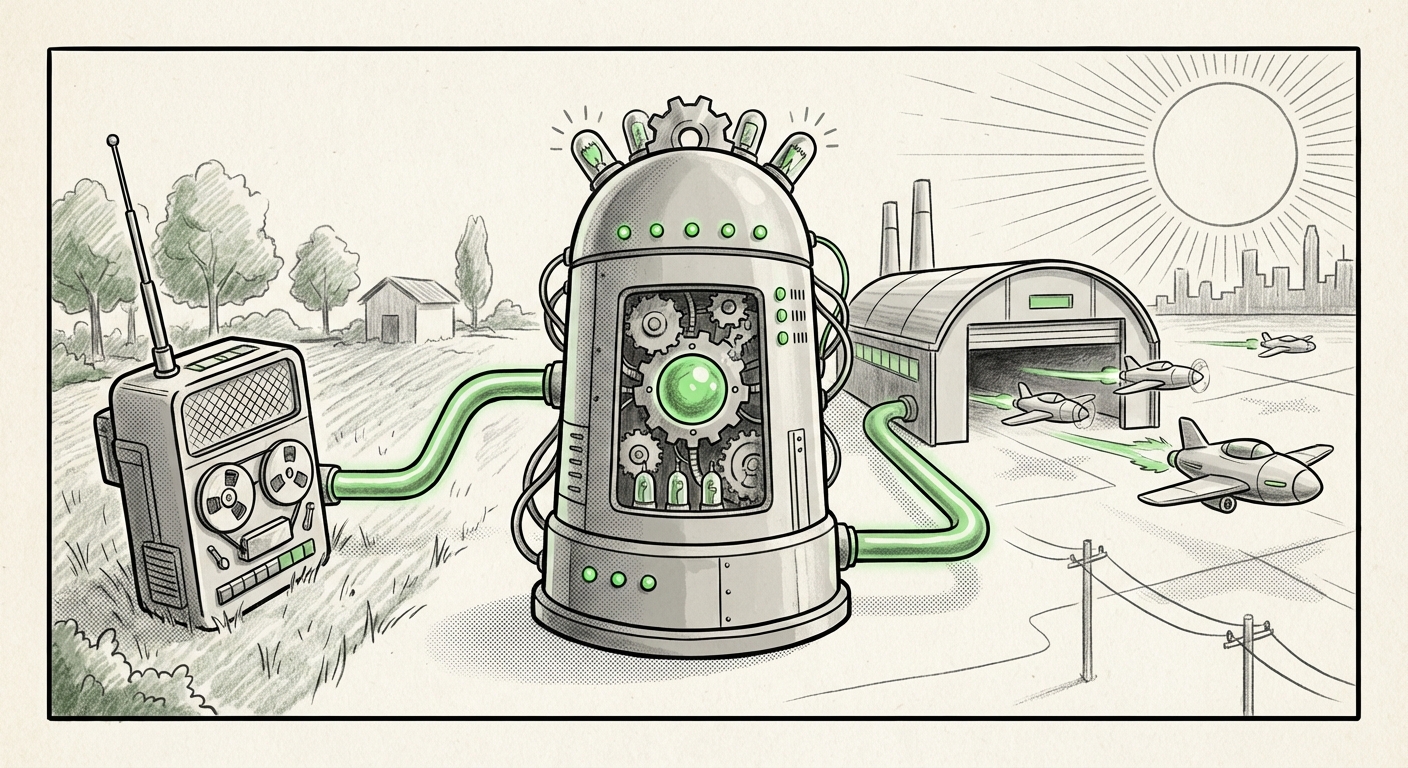

From Raw Feed to Digital Twin: Scaling AI Training

While raw combat footage is gold, it cannot be the sole input. Analysis of this trend suggests that defense labs are using this validated, real-world data to construct highly accurate digital twins of operational environments. This is the critical scaling mechanism.

In simple terms, if Ukraine provides a thousand examples of a specific armored vehicle being correctly identified and hit, engineers can use that data to fine-tune algorithms, and then use advanced simulation tools to generate *millions* of variations (different lighting, different angles, different environmental interference) based on that real-world foundation. This process, a form of data augmentation closely related to synthetic data generation, means that when a new drone model deploys, it has been tested against a very large number of variations of the real threat environment without risking a single drone in the field.
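The idea of expanding one real sample into many variations can be illustrated with a minimal, generic sketch. It is not the pipeline any defense lab uses; the function names (`adjust_brightness`, `flip_horizontal`, `add_noise`, `augment`), the parameter ranges, and the tiny grayscale frame are all assumptions chosen for illustration, and real systems would rely on far richer simulation tools.

```python
import random

# A grayscale frame as rows of pixel values in [0.0, 1.0] (illustrative format).
Image = list[list[float]]


def clamp(value: float) -> float:
    """Keep a pixel value inside the valid [0.0, 1.0] range."""
    return max(0.0, min(1.0, value))


def adjust_brightness(image: Image, factor: float) -> Image:
    """Simulate different lighting by scaling every pixel."""
    return [[clamp(pixel * factor) for pixel in row] for row in image]


def flip_horizontal(image: Image) -> Image:
    """Simulate a mirrored viewing angle."""
    return [list(reversed(row)) for row in image]


def add_noise(image: Image, sigma: float, rng: random.Random) -> Image:
    """Simulate sensor interference with Gaussian noise."""
    return [[clamp(pixel + rng.gauss(0.0, sigma)) for pixel in row] for row in image]


def augment(image: Image, count: int, seed: int = 0) -> list[Image]:
    """Produce `count` randomized variants of one real frame."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    variants = []
    for _ in range(count):
        variant = adjust_brightness(image, rng.uniform(0.5, 1.5))
        if rng.random() < 0.5:
            variant = flip_horizontal(variant)
        variant = add_noise(variant, sigma=0.05, rng=rng)
        variants.append(variant)
    return variants


# Hypothetical 2x3 frame standing in for a real image.
frame = [[0.0, 0.25, 0.5], [0.5, 0.75, 1.0]]
variants = augment(frame, count=4)
```

Each variant keeps the shape of the original frame while differing in brightness, orientation, and noise. Seeding the random generator is a deliberate choice here: it lets the same set of variants be regenerated later, which matters when results need to be audited.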

The Strategic Shift: NATO Interoperability and Data Governance

This exchange of deep technical data isn't just a transactional gift; it implies deep trust and existing technological alignment. This move necessitates, and confirms, robust frameworks for allied cooperation. Our analysis suggests that such sharing relies on pre-existing or rapidly established **NATO AI strategy** and data-sharing protocols.

For businesses and governments focused on defense procurement, this means the future of interoperability won't just be about fitting parts together; it will be about ensuring shared cognitive capabilities—AI trained on compatible data standards that allow systems from different nations to cooperate seamlessly.

The implication for technology providers is clear: Proprietary, closed-source AI systems that cannot integrate into this allied data ecosystem will rapidly become obsolete. Success hinges on modularity, transparency in data handling, and adherence to shared security classifications.

The Ethical Crucible: Autonomous Weapons and Accountability

The most profound challenge raised by this acceleration touches upon governance, law, and ethics. When we train AI models using data gathered during active hostilities, we are embedding the reality of that conflict directly into the decision-making logic of future autonomous weapons systems (AWS).

This brings us directly to the debate around **Lethal Autonomous Weapons Systems (LAWS)**. If an AI drone, trained on Ukrainian combat footage, makes an autonomous targeting error months later in a different theater of operations, where does the accountability lie?

- Is it the human operator who launched the drone?

- Is it the engineer who wrote the code?

- Is it the data labeler who categorized the target in the initial dataset?

The speed of AI development is currently outpacing the speed of international regulation (such as the efforts under the UN Convention on Certain Conventional Weapons, or CCW). Sharing this live data forces allies to define, rapidly, what level of autonomy they are comfortable deploying. Do they limit the AI to "detect and classify," requiring human confirmation before engagement (Human-in-the-Loop), or do they move toward "detect, decide, and deploy," where a human only supervises and can intervene (Human-on-the-Loop) or is removed from the decision entirely? The better the training data, the more tempting it becomes to increase autonomy for speed and effectiveness.

Societal Impact: From Battlefield to Commercial Sector

While the immediate focus is military, the underlying technological leaps inevitably bleed into the commercial sector. This conflict is serving as a real-world stress test for complex computer vision, real-time decision-making under uncertainty, and multi-agent coordination.

Consider the principles driving **drone swarm technology adoption**. Whether the application is military surveillance or civilian infrastructure inspection (e.g., inspecting pipelines or wind farms across vast territories), the core AI challenges are the same: resilience, communication efficiency, and collective decision-making without constant human oversight.

Businesses investing in complex automation—from logistics robotics to agricultural technology—will benefit from the foundational breakthroughs made in defense AI, particularly regarding robustness against sensor degradation and chaotic environments. The insights gained on handling massive, unstructured video data streams will become the standard for future commercial AI applications.

Future Implications and Actionable Insights

This moment demands a proactive approach from stakeholders across the technology, policy, and investment landscapes.

1. For Defense Contractors and AI Developers: Prioritize Data Provenance

If you are developing defense AI, the quality and source of your training data are now your primary differentiator. Systems that can demonstrably incorporate validated, conflict-tested data will outperform simulation-only models. Developers must invest heavily in secure, auditable pipelines that track the provenance of every piece of training information to address accountability concerns.
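One common way to make a training-data pipeline auditable is a hash-chained provenance log, sketched below under stated assumptions. This is a minimal illustration, not a description of any fielded system: the record fields, the helper names (`record_hash`, `append_entry`, `verify`), and the sample identifiers are hypothetical, and a production pipeline would add signatures, access control, and secure storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record: dict) -> str:
    """Hash a record deterministically (sorted keys give a stable encoding)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, sample_id: str, source: str, content: bytes) -> dict:
    """Append a provenance entry that is chained to the previous entry."""
    previous = log[-1]["entry_hash"] if log else GENESIS_HASH
    body = {
        "sample_id": sample_id,
        "source": source,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "previous_hash": previous,
    }
    entry = {**body, "entry_hash": record_hash(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    previous = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "entry_hash"}
        if body["previous_hash"] != previous:
            return False
        if record_hash(body) != entry["entry_hash"]:
            return False
        previous = entry["entry_hash"]
    return True


# Hypothetical samples and source labels, for illustration only.
log = []
append_entry(log, "frame-0001", "sensor-feed-A", b"raw bytes of frame 1")
append_entry(log, "frame-0002", "sensor-feed-A", b"raw bytes of frame 2")
is_intact = verify(log)
```

Because each entry stores the hash of the one before it, changing any earlier record invalidates every later link, so an auditor can detect tampering by recomputing the chain. That property is what lets a developer show where each training sample came from.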

2. For Policy Makers: Establish Rapid Governance Sandboxes

Traditional regulatory cycles are too slow for the AI deployment rates demonstrated here. Governments must establish trusted, cross-national AI testing environments where ethical boundaries for autonomy can be stress-tested using sanitized or synthetic data derived from these live feeds. The goal should not be to stop progress, but to channel it safely, ensuring human judgment remains the ultimate check against lethal systems.

3. For Investors: Watch the Simulation Ecosystem

The companies that can effectively translate raw battlefield input into scalable, reliable synthetic training environments are poised for massive growth. Investigate firms specializing in advanced physics modeling, digital twin creation, and data augmentation for defense applications. They are the critical middlemen translating operational need into deployable intelligence.

4. For the Broader Public: Understanding the New Normal

The barrier between high-end military research and consumer technology is thinning. The public must engage with the debate about autonomous capabilities now, while the ethical lines are being drawn in the context of allied defense cooperation. The technology being perfected for drone swarms today will inevitably inform the logistics and automation systems of tomorrow.

The sharing of battlefield data is more than a technical update; it is a strategic declaration. It signals a commitment by allied nations to leverage the most powerful tool available—real-world data—to achieve an AI advantage in autonomous capabilities. The speed at which these systems learn will redefine deterrence, speed of engagement, and, critically, the very nature of human involvement in conflict.