The Million-Token Revolution: How Anthropic’s Price Cut Unlocks True Enterprise Understanding in AI

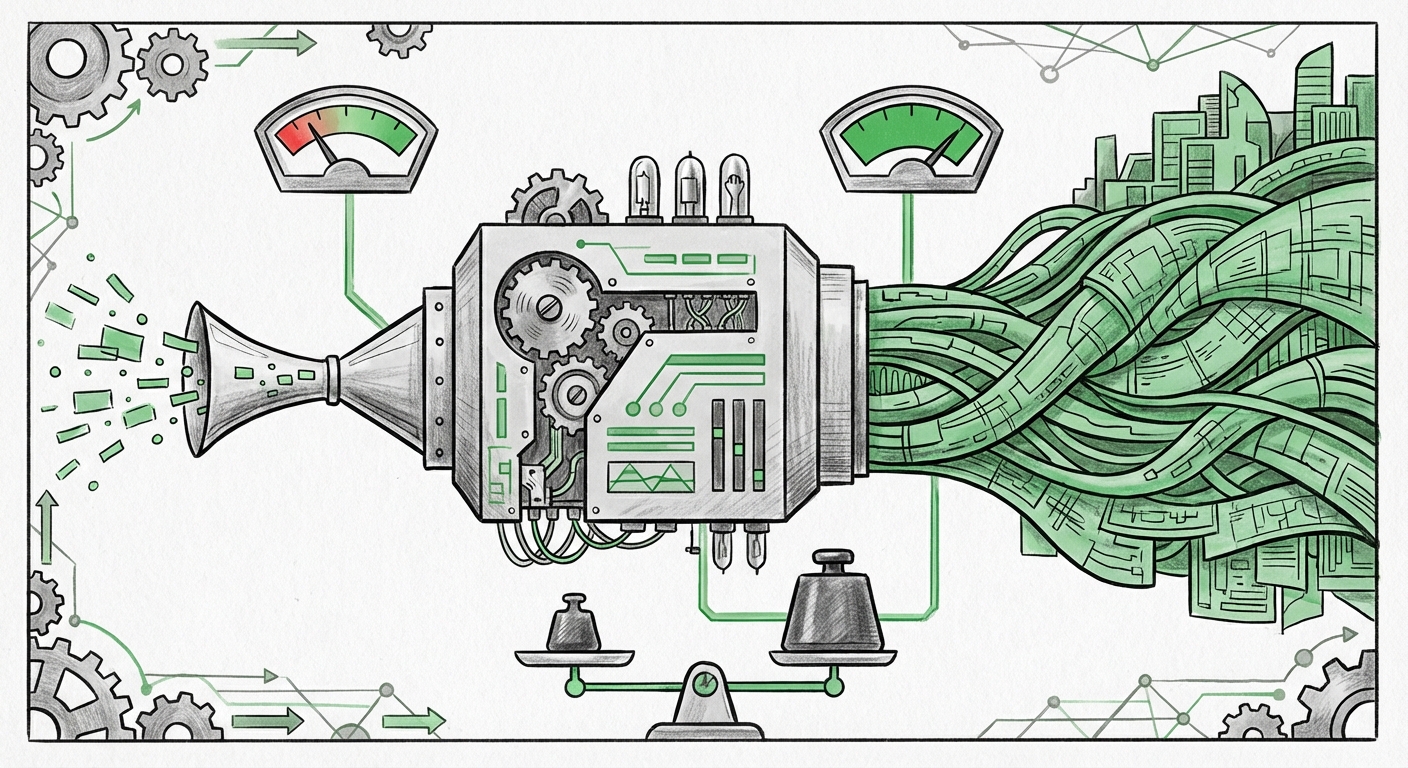

In the fast-paced world of Artificial Intelligence, milestones are often measured in sheer parameter counts or speed. However, a recent move by Anthropic signals a critical pivot: the focus is shifting from *how much* data the model sees to *how deeply* it can process it within a single interaction. Anthropic has dropped the surcharge for the 1-million-token context windows in Claude Opus 4.6 and Sonnet 4.6, making deep, document-heavy comprehension economically viable for mainstream enterprise use.

This development is more than a simple price adjustment; it’s an economic catalyst that changes the blueprint for complex AI deployments. For context, a million tokens is roughly equivalent to 1,500 pages of text or a substantial codebase, processed in a single request. Previously, pushing a prompt past 200,000 tokens often meant doubling the cost, creating a significant barrier to entry for deep analysis tasks.
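The arithmetic behind that barrier can be sketched in a few lines. This is a minimal illustration, not Anthropic's billing logic: the price of 5.00 dollars per million input tokens is a hypothetical placeholder, and the rule that the whole prompt is billed at a 2x rate once it passes 200,000 tokens is an assumption chosen to match the "doubling" described above.

```python
def request_cost(input_tokens: int, price_per_mtok: float,
                 threshold: int = 200_000,
                 surcharge_multiplier: float = 1.0) -> float:
    """Input cost in dollars for a single request.

    Assumed rule (for illustration only): once the prompt exceeds
    `threshold`, the entire prompt is billed at the multiplied rate.
    """
    rate = price_per_mtok
    if input_tokens > threshold:
        rate *= surcharge_multiplier
    return input_tokens / 1_000_000 * rate


PRICE = 5.0  # hypothetical dollars per million input tokens, not a quoted price

for tokens in (150_000, 500_000, 1_000_000):
    with_surcharge = request_cost(tokens, PRICE, surcharge_multiplier=2.0)
    flat = request_cost(tokens, PRICE)
    print(f"{tokens:>9,} tokens: with surcharge ${with_surcharge:.2f}, flat ${flat:.2f}")
```

Under these assumptions a prompt below the threshold costs the same either way, while a full million-token prompt costs half as much once the multiplier is removed.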

To understand the full gravity of this shift, we must analyze it through three critical lenses: the competitive pressure driving this decision, the likely technological maturity enabling the cost reduction, and the immediate, transformative implications for business applications.

Section 1: The Shifting Competitive Battlefield – From Speed to Depth

The AI ecosystem is currently defined by a fierce struggle for developer mindshare, most visibly between OpenAI and Anthropic. When one player moves the goalposts on capability, the others must follow suit or risk losing market share in high-value sectors.

The removal of the surcharge puts immediate pressure on competitors’ long-context pricing. The closest point of comparison is OpenAI.

If OpenAI’s GPT-4o, known for its speed and cost improvements, remains limited to a context window of about 128k tokens, or if comparable models charge a significant premium for longer prompts, Anthropic has established a clear advantage for tasks requiring true "read-it-all" comprehension. For CTOs and AI Strategists, this means the Total Cost of Ownership (TCO) calculation for large-scale data processing has tipped in favor of Claude for certain applications.

This isn't just about being cheaper; it's about price parity at a higher capability. By eliminating the surcharge, Anthropic suggests that serving one million tokens is no longer disproportionately more expensive than serving 200,000 tokens on their optimized models. This reframes the conversation from a feature battle (who has the longest window) to an economic viability contest (who can deliver the longest window affordably).

Section 2: The Unseen Engineering Breakthrough: Making Scale Affordable

Why would a company suddenly decide that servicing a massive context request costs the same as a moderate one? This strongly implies significant, often unannounced, engineering advances related to inference efficiency. Inference—the process of running the model to generate a response—is the primary operational cost for LLM providers.

The key bottleneck for long contexts is the attention mechanism within the Transformer architecture. Every token added to the context needs its own entries in the Key-Value (KV) cache, so cache memory grows linearly with context length, while the compute for standard attention grows quadratically because each token attends to every token before it. Feeding a million tokens strains GPU memory to its limit.
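A back-of-the-envelope estimate makes the two growth rates concrete. The sketch below uses a hypothetical model shape (80 layers, 8 key-value heads, head dimension 128, 16-bit cache values); none of these numbers describe Claude, whose architecture is not public.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for one sequence's KV cache.

    The leading factor of 2 covers keys and values; every layer stores
    n_kv_heads * head_dim values of each per token.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def causal_attention_pairs(seq_len: int) -> int:
    """Number of (query, key) pairs scored under causal attention:
    each token attends to itself and every earlier token."""
    return seq_len * (seq_len + 1) // 2


# Hypothetical model shape, chosen only for illustration.
SHAPE = dict(n_layers=80, n_kv_heads=8, head_dim=128)

for tokens in (200_000, 1_000_000):
    gib = kv_cache_bytes(tokens, **SHAPE) / 2**30
    pairs = causal_attention_pairs(tokens)
    print(f"{tokens:>9,} tokens: KV cache {gib:,.1f} GiB, attention pairs {pairs:.2e}")
```

With this assumed shape the cache needs about 61 GiB at 200,000 tokens and about 305 GiB at one million, a 5x increase, while the number of attention pairs grows roughly 25x. That gap is why long-context serving depends on the kinds of optimizations discussed next.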

The pricing change suggests real progress on these engineering challenges.

Potential breakthroughs could involve:

- Optimized KV Caching: Smarter ways to compress or discard less relevant parts of the context memory without losing accuracy.

- Hardware Co-Design: Better utilization of specialized AI accelerators (like Nvidia’s H100s or custom silicon) specifically tuned for the unique memory access patterns of long-context inference.

- Inference Quantization: Further refinement in running the model at lower precision (e.g., 4-bit or lower) during inference without catastrophic performance degradation, saving memory substantially.
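To illustrate the last item, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is a textbook scheme shown for intuition only; nothing in the reporting says which techniques Anthropic actually uses, and the sample values are made up.

```python
def quantize_symmetric(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


# Made-up sample values standing in for a slice of weights or cache entries.
weights = [0.82, -0.41, 0.05, -0.97, 0.33, 0.0]

codes, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print("max reconstruction error:", round(max_error, 4))
```

Each value now needs 4 bits instead of 16, a quarter of the storage before counting the shared scale, and the rounding error per value is bounded by half the scale. Production systems typically use finer-grained scales (per channel or per block) to keep that error small.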

For ML Engineers, this is validation that the industry is rapidly moving past the theoretical scaling limits that once plagued long-context models. The technology to handle massive context efficiently is now mature enough to be commoditized.

Section 3: Practical Implications – The RAG Revolution Matures

The most immediate and exciting beneficiary of this pricing shift is Retrieval-Augmented Generation (RAG). RAG systems typically work by retrieving small, relevant chunks of text from a large corpus, usually through vector similarity search, and inserting them into the LLM’s prompt. The context window length dictates how much retrieved information you can inject at once.

When context was expensive, developers had to be surgically precise with retrieval, often limiting the model to just the 'top 5' most relevant document snippets. This often led to missed context or overly narrow answers.

Cheaper long context changes that trade-off.

Businesses can now afford to implement "Full Context RAG" or "Deep Context Synthesis":

- Legal Discovery & Compliance: Instead of feeding 20 relevant clauses from a 500-page contract set, a firm can submit the entire contract set (roughly 330,000 tokens at the page-to-token ratio cited above) alongside the query. The model can then synthesize a response that accounts for cross-document dependencies, vastly reducing human review time.

- Codebase Analysis: Developers can submit the entirety of a legacy service repository (tens of thousands of lines of code) into the context window and ask the LLM to diagnose a bug or propose a refactor. This moves LLMs from simple code completion tools to true junior engineering partners.

- Financial Modeling: Feeding quarterly reports, analyst projections, and internal memos—all in one go—to derive holistic investment theses without relying on complex, multi-step chaining that loses context along the way.

For product managers, this means building more reliable, context-aware applications is now cheaper. The friction associated with context management (chunking, overlap, metadata stuffing) decreases when the context window is large enough to hold most working sets whole.
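One way to picture the change is a context builder that sends every document whole when the corpus fits the budget and falls back to chunked retrieval only when it does not. The sketch below is hypothetical throughout: `estimate_tokens` uses a rough four-characters-per-token heuristic rather than a real tokenizer, and the keyword-overlap scoring is a stand-in for vector similarity search.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; real tokenizers vary with language and content."""
    return int(len(text) / chars_per_token) + 1


def chunk(text: str, size: int, overlap: int) -> list[str]:
    """Split text into fixed-size character windows that overlap."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]


def build_context(documents, query, budget_tokens,
                  chunk_chars=2000, overlap_chars=200, top_k=5):
    """Return ("full", documents) if everything fits, else ("retrieved", top chunks)."""
    total = sum(estimate_tokens(d) for d in documents)
    if total <= budget_tokens:
        return "full", list(documents)

    # Fallback: naive keyword overlap as a placeholder for vector search.
    terms = set(query.lower().split())
    chunks = [c for d in documents for c in chunk(d, chunk_chars, overlap_chars)]
    ranked = sorted(chunks,
                    key=lambda c: len(terms & set(c.lower().split())),
                    reverse=True)
    return "retrieved", ranked[:top_k]


docs = ["alpha beta " * 100, "gamma delta " * 100]
mode, parts = build_context(docs, "gamma", budget_tokens=1_000_000)
print(mode, len(parts))
```

The design choice worth noting is that chunking becomes a fallback path rather than the default, so the tuning effort around chunk size and overlap only matters for corpora that exceed the window.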

Section 4: The Governance Hurdle – Data Privacy in Deep Contexts

While the capability rush is exhilarating, the expansion into massive context windows introduces unavoidable friction on the governance and security front. If it is now affordable to send a million tokens, what data are you sending?

When a model sees just a few snippets, the risk exposure is relatively contained. When it ingests entire proprietary documents, financial statements, or private customer service transcripts, the data governance responsibilities scale dramatically.

This raises serious questions for compliance officers:

- Data Residency and Transmission: Are the providers processing this massive influx of data within required geographic boundaries? For regulated industries (finance, healthcare), sending several megabytes of sensitive text across a border, even temporarily for processing, can trigger compliance failures.

- Prompt Injection Risk: A larger context window provides a larger attack surface. Sophisticated prompt injection attacks could potentially force the model to divulge information it synthesized from previously processed internal documents within the same session.

- Data Leakage via Fine-Tuning: Although Anthropic likely separates API input from future training data, the sheer volume of proprietary data being handled necessitates rigorous contractual assurances and audit trails regarding data handling policies.

For large enterprises, the actionable insight here is to treat any input exceeding 500,000 tokens as a high-risk data transmission event requiring explicit sign-off from legal and security teams, regardless of the API provider's stated privacy policy.
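A policy like this is simple to enforce in code before any request leaves the network. The sketch below is a hypothetical pre-flight check: the `Submission` type and `may_send` function are illustrative names, and the only value taken from the text is the 500,000-token threshold.

```python
from dataclasses import dataclass

HIGH_RISK_TOKEN_THRESHOLD = 500_000  # threshold proposed in the text above


@dataclass
class Submission:
    """Hypothetical record describing one outbound request."""
    estimated_tokens: int
    legal_approved: bool = False
    security_approved: bool = False


def may_send(sub: Submission, threshold: int = HIGH_RISK_TOKEN_THRESHOLD) -> bool:
    """Allow small requests; require both sign-offs above the threshold."""
    if sub.estimated_tokens <= threshold:
        return True
    return sub.legal_approved and sub.security_approved


print(may_send(Submission(estimated_tokens=120_000)))
print(may_send(Submission(estimated_tokens=800_000)))
print(may_send(Submission(estimated_tokens=800_000,
                          legal_approved=True, security_approved=True)))
```

In practice the check would sit in a shared API gateway or client wrapper so that individual teams cannot bypass it, and each decision would be logged for audit.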

Conclusion: The Age of Contextual Supremacy

Anthropic’s removal of the long-context surcharge is a declaration of confidence in their underlying architecture and a direct challenge to the status quo. It signals that the AI race is moving past simple access to models and into the era of Contextual Supremacy.

The future of AI application development will favor systems that can synthesize complex, distributed information in a single pass, rather than systems that rely on brittle, multi-step chaining across databases. This cost reduction democratizes true deep understanding, moving it from specialized research labs into the hands of everyday enterprise developers.

However, this powerful new capability demands increased responsibility. Businesses must balance the immense efficiency gains—from faster bug fixes to holistic legal analysis—with robust governance frameworks ensuring that the massive context they feed into these models remains secure, private, and compliant.

The cost of understanding has just plummeted. The cost of handling that understanding responsibly is now the next major barrier to master.

***

Reference Note: The basis for this analysis is the reported reduction in context window surcharges for Claude Opus 4.6 and Sonnet 4.6, as first reported by The Decoder in "Anthropic drops the surcharge for million-token context windows, making Opus 4.6 and Sonnet 4.6 far cheaper." Further insights regarding competitive positioning and enterprise architecture were drawn from standard industry analysis frameworks.