The End of the Context Tax: Why Anthropic’s 1M Token Price Drop Redefines Enterprise AI

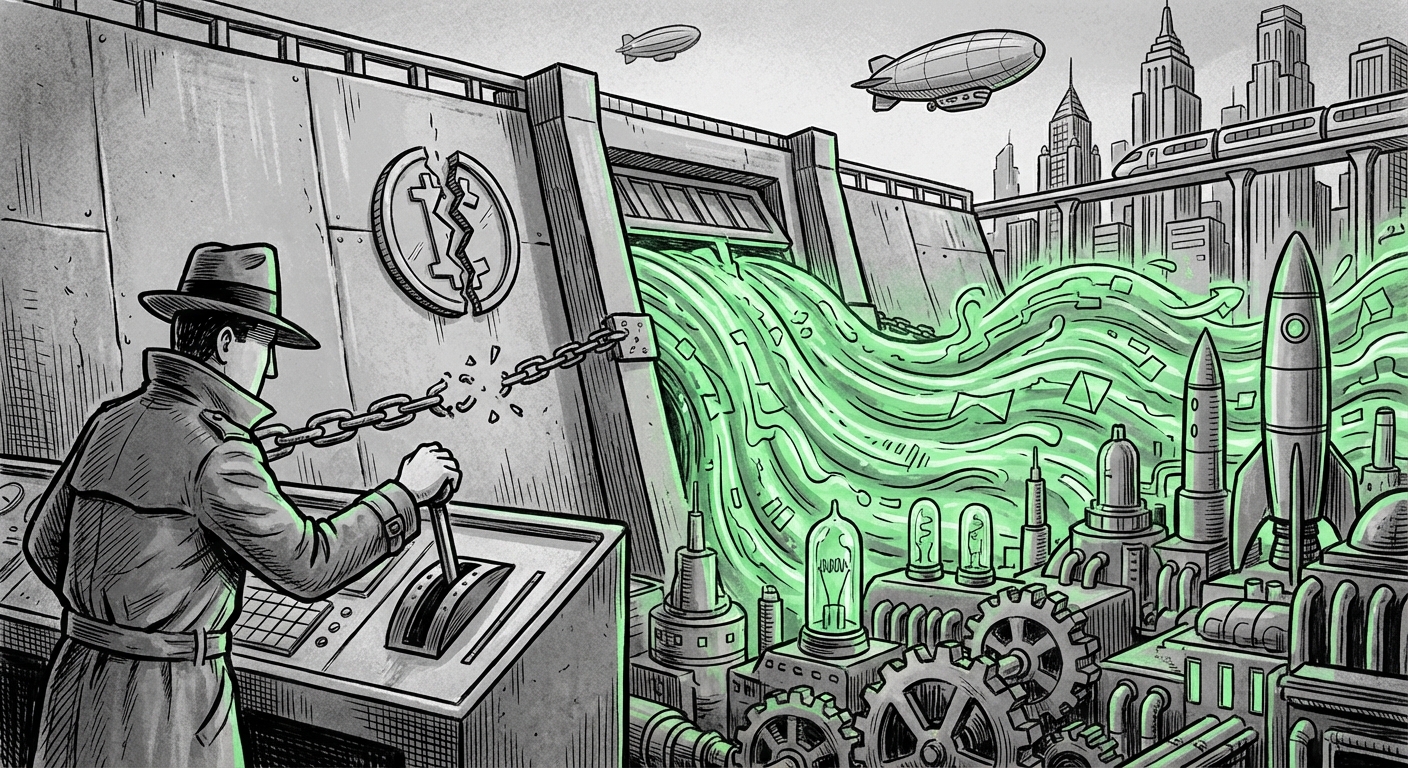

The landscape of Large Language Models (LLMs) is defined by a constant race for scale and efficiency. For years, the industry operated under an unspoken agreement: the bigger the context window (the amount of information a model can hold and analyze in a single prompt), the disproportionately higher the cost. This was the infamous "Context Tax."

Recently, Anthropic delivered a seismic shock to this status quo by dropping the surcharge for their million-token context windows on Claude Opus 4.6 and Sonnet 4.6. Requests exceeding 200,000 tokens no longer incur steep penalties. This move, initially reported by outlets like The Decoder (https://the-decoder.com/anthropic-drops-the-surcharge-for-million-token-context-windows-making-opus-4-6-and-sonnet-4-6-far-cheaper/), is not just a discount; it’s a declaration that massive context processing has hit an inflection point in operational viability.

The Three Pillars of Disruption: Context, Competition, and Cost

As AI analysts, we must look beyond the headline savings. This development sits at the intersection of three critical trends:

- Technical Breakthroughs: How did Anthropic achieve this efficiency?

- Competitive Warfare: What does this mean in the arms race against competitors like OpenAI?

- Enterprise Unlock: Which real-world applications suddenly become cheap enough to deploy widely?

1. The Technical Underpinnings: Making Long Context Cheap

Processing a million tokens (the equivalent of reading several large novels or complex legal filings) requires immense computational power, primarily because the work done by the attention mechanism in standard Transformer models scales quadratically with sequence length. Removing the surcharge suggests, though it does not prove, that Anthropic has substantially lowered the cost of serving long sequences.
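To make the quadratic scaling concrete, here is a minimal Python sketch that counts the query-key score entries dense self-attention computes for a given sequence length. It is back-of-the-envelope arithmetic under the textbook dense-attention assumption, not a description of how any Anthropic model is implemented, and the function names are illustrative.

```python
def attention_pairs(num_tokens: int) -> int:
    """Query-key score entries in dense self-attention (one per token pair)."""
    return num_tokens * num_tokens


def relative_attention_cost(long_tokens: int, short_tokens: int) -> float:
    """How many times more score entries the longer sequence needs."""
    return attention_pairs(long_tokens) / attention_pairs(short_tokens)


if __name__ == "__main__":
    # 5x more tokens means 5^2 = 25x more pairwise attention scores.
    ratio = relative_attention_cost(1_000_000, 200_000)
    print(f"1M vs 200K tokens: {ratio:.0f}x the attention score entries")
```

Causal masking roughly halves the count, but the growth stays quadratic, which is why a fivefold increase in prompt length is far more than a fivefold increase in attention work.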

Technical deep dives on long-context efficiency point toward two plausible sources of savings: **KV-caching optimization** and **sparse attention mechanisms**. Sparse attention, for instance, lets the model compute attention over a selected subset of token pairs rather than calculating relationships between every single pair. Optimizing how the Key/Value cache (which stores the keys and values of already-processed tokens so they need not be recomputed) is managed for massive inputs reduces memory and compute per request, and with them the serving costs that make up a large share of LLM deployment expense.
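The two ideas named above can be sized with simple arithmetic. The sketch below estimates Key/Value cache memory for a prompt and compares the number of attention pairs under dense causal attention with a sliding-window pattern, one common form of sparse attention. Everything here is an assumption for illustration: the layer count, head count, and head dimension are hypothetical, and nothing in the source confirms which techniques Anthropic actually uses.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for cached keys and values: 2 tensors (K and V) per layer."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


def causal_dense_pairs(n: int) -> int:
    """Token i attends to itself and all earlier tokens: 1 + 2 + ... + n."""
    return n * (n + 1) // 2


def causal_window_pairs(n: int, window: int) -> int:
    """Token i attends to at most `window` most recent tokens (itself included)."""
    w = min(window, n)
    # The first w tokens see 1..w tokens; each remaining token sees exactly w.
    return w * (w + 1) // 2 + (n - w) * w


if __name__ == "__main__":
    n = 1_000_000
    # Hypothetical model shape, chosen only to show the order of magnitude.
    cache = kv_cache_bytes(n, layers=64, kv_heads=8, head_dim=128)
    print(f"KV cache at {n:,} tokens: {cache / 1e9:.1f} GB")
    dense = causal_dense_pairs(n)
    sparse = causal_window_pairs(n, window=4096)
    print(f"dense/sliding-window pair ratio: {dense / sparse:.0f}x")
```

The cache grows linearly with prompt length while dense attention grows quadratically, so savings on either front compound at the million-token scale.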

For the Engineer: This signals a pivot from focusing solely on *model size* (parameter count) to focusing on *context architecture*. Efficiency in handling massive inputs is now as valuable as raw reasoning ability.

2. The Pricing War Escalates: A Direct Competitive Challenge

In the current market, where major players release new flagship models at a rapid pace, pricing and accessibility are key differentiators. Anthropic’s move is an unmistakable salvo aimed squarely at its main rival. Commentary on the competitive reaction reinforces that reading:

"Anthropic's aggressive pricing structure for Claude 3.5 Opus, specifically targeting the 1M token ceiling, is forcing a strategic re-evaluation at OpenAI. Sources suggest that the previous premium structure for massive context windows was not sustainable once an operator like Anthropic proved a path to efficiency. This move isn't just about adoption; it’s about making GPT-4 Turbo's long-context features look prohibitively expensive for routine, high-volume enterprise workloads..."

This forces competitors to either rapidly match the cost structure, sacrificing near-term margins, or cede the high-volume, long-context market segment. For tech investors and product managers, this means the AI capability gap is narrowing, and the decision point is shifting from "Can we afford the context?" to "Which model provides the best outcome at this new, lower baseline cost?"

3. Unlocking Enterprise Use Cases: Beyond Summarization

For the enterprise, massive context windows previously existed in a theoretical sandbox. They were powerful tools for summarizing a dense legal document but prohibitively expensive for daily, repetitive tasks. By removing the surcharge, Anthropic has made the 1M context window a practical default for tasks that genuinely require deep context comprehension.

A look at enterprise use cases for million-token models highlights the immediate gains:

- Codebase Analysis: Instead of feeding snippets, developers can input entire small to mid-sized repositories (on the order of tens of thousands of lines of code, which is roughly what a million tokens can hold) for comprehensive security audits, refactoring suggestions, or legacy migration planning.

- Regulatory Compliance: Analyzing years of financial transaction logs, policy documents, and internal communications simultaneously to flag specific compliance risks in a single, cohesive query.

- Medical Diagnostics: Ingesting a patient’s complete medical history—scans, doctor’s notes, lab results, and genetic data—to generate holistic diagnostic summaries.

For CIOs, CTOs, and enterprise software developers, the cost calculation fundamentally changes. Total cost still scales with the number of tokens processed, but if a token in a 900,000-token request is billed at the same rate as a token in a 100,000-token request, the path to automating complex workflows becomes significantly clearer.
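The change in the cost calculation can be sketched in a few lines of Python. The rate of 1.0 per million tokens and the 2x multiplier below are placeholders, not Anthropic's published prices, and the rule that the multiplier applies to the whole request once it crosses the 200,000-token threshold is an assumption made for illustration.

```python
def input_cost(tokens: int, rate_per_mtok: float,
               long_context_multiplier: float = 1.0,
               threshold: int = 200_000) -> float:
    """Input cost of one request.

    Assumption: when the request exceeds `threshold` tokens, every input
    token is billed at rate * long_context_multiplier. A multiplier of 1.0
    models flat pricing with no long-context surcharge.
    """
    multiplier = long_context_multiplier if tokens > threshold else 1.0
    return tokens / 1_000_000 * rate_per_mtok * multiplier


if __name__ == "__main__":
    RATE = 1.0        # hypothetical price per million input tokens
    SURCHARGE = 2.0   # hypothetical long-context multiplier
    for tokens in (100_000, 800_000):
        with_tax = input_cost(tokens, RATE, SURCHARGE)
        flat = input_cost(tokens, RATE)
        print(f"{tokens:>7,} tokens: surcharged={with_tax:.2f} flat={flat:.2f}")
```

Under flat pricing the total still grows in proportion to tokens processed; what disappears is the step up in the per-token rate for large requests, which is the part that made high-volume long-context workloads hard to budget.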

The Death of Simple RAG? Architectural Shifts Ahead

Perhaps the most profound implication for AI practitioners lies in the architecture of Retrieval-Augmented Generation (RAG) systems. RAG systems are designed to overcome a model’s context limit by retrieving small, relevant chunks of data from a massive external database and injecting them into the prompt.

However, RAG introduces complexity: retrieval must be accurate, chunking strategies must be optimized, and complex reasoning often requires multiple retrieval steps (multi-hop reasoning). Discussions of how cheaper long context affects RAG systems converge on one question:

When the context window can comfortably hold 100 documents, why spend engineering resources perfecting a system to retrieve the *best* 10?

This shift allows developers to simplify their AI stack. Instead of building a highly optimized vector database lookup service, engineers can now opt to load large, interconnected documents directly into the prompt. The LLM itself, possessing superior reasoning, can better synthesize the relationships between chunks that are *contextually adjacent* rather than relying on vector similarity scores.

This is a paradigm shift for Data Scientists and MLOps Engineers. It means prioritizing model capability over retrieval engineering for certain tasks. While RAG will remain essential for data sets far larger than any context window, the boundary for when to use context vs. retrieval has moved dramatically in favor of context.
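The context-versus-retrieval boundary can be expressed as a simple routing rule. The sketch below is a minimal illustration under stated assumptions: the four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, the 50,000-token reserve for instructions and output is arbitrary, and the names `estimate_tokens` and `choose_strategy` are hypothetical.

```python
from typing import Sequence

CHARS_PER_TOKEN = 4  # rough heuristic; use a real tokenizer in practice


def estimate_tokens(text: str) -> int:
    """Approximate token count by character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def choose_strategy(documents: Sequence[str],
                    context_limit: int = 1_000_000,
                    reserved_tokens: int = 50_000) -> str:
    """Return "full_context" if the whole corpus fits, else "retrieval"."""
    budget = context_limit - reserved_tokens
    total = sum(estimate_tokens(doc) for doc in documents)
    return "full_context" if total <= budget else "retrieval"


if __name__ == "__main__":
    small_corpus = ["contract text " * 1_000] * 20
    print(choose_strategy(small_corpus))
```

A check like this belongs at the front of a pipeline: corpora that fit go straight into the prompt, while larger ones continue to use retrieval.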

Actionable Insights: What Businesses Must Do Now

This moment demands proactive strategy rather than passive observation. Here are three actionable steps businesses must take:

- Audit Existing Context Workloads: Identify any critical business process currently relying on complex, multi-step RAG pipelines that handle datasets of up to roughly one million tokens. These processes are immediate candidates for migration to direct long-context processing using Claude Sonnet 4.6 or Opus 4.6.

- Re-evaluate Vendor Lock-in: If your current AI strategy is built entirely around optimizing data ingestion for a competitor with tighter context constraints, you must stress-test your dependency. Anthropic is clearly setting a new performance-to-cost standard.

- Invest in Prompt Engineering for Deep Synthesis: With vast context available, the skill shifts from *finding* information to *asking the right question* of the entirety of the information. Invest in training teams to structure meta-prompts that leverage the model's holistic view of the data.

The Societal Echo: Democratizing Knowledge Access

On a broader societal level, the cheapening of high-context processing democratizes access to high-level analytical power. Consider legal aid or academic research. A student or small non-profit can now analyze thousands of pages of relevant case law or primary sources at a cost that was previously within reach only of major law firms.

This technology begins to level the playing field in information-intensive fields. It lowers the barrier to entry for complex tasks, suggesting a future where deep, data-driven insights are no longer solely the domain of organizations with massive computational budgets.

The context window is evolving from a memory constraint into a *workspace*. The ability to bring all relevant data—the complete context—into that workspace without incurring punishing fees is the next frontier of generative AI utility. Anthropic’s move signals that the industry has just stepped across that threshold.