The Context Revolution: How Anthropic's Price Cut Just Unlocked the Next Era of Enterprise AI

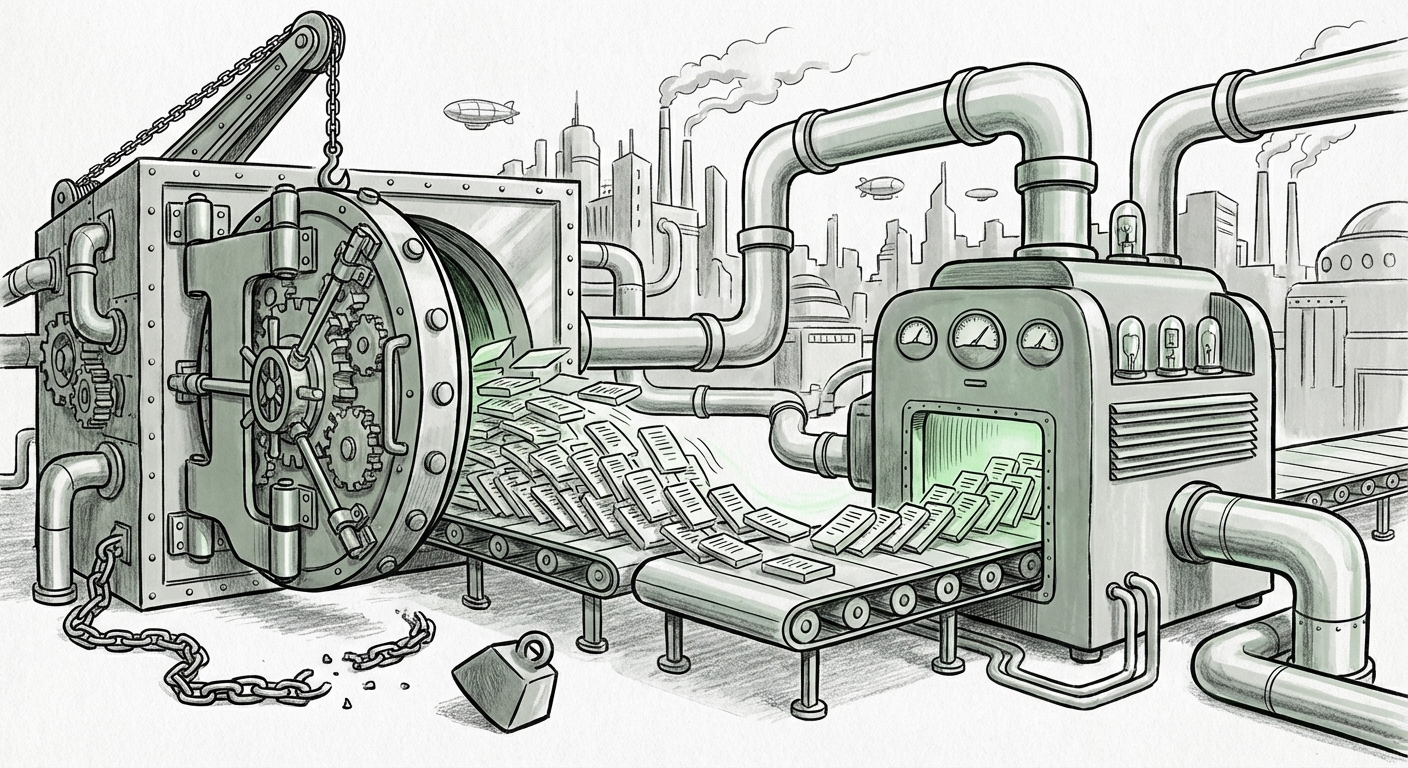

The race for better Artificial Intelligence isn't just about bigger models; it’s increasingly about memory. For months, the amount of information a Large Language Model (LLM) can process in a single request, known as its context window, has been held hostage by prohibitive pricing. Handling one million tokens (roughly 750,000 words) carried a premium that often doubled the price of a request and acted as an invisible barrier to true enterprise adoption.

That barrier just crumbled. Anthropic’s recent decision to drop the surcharge for long contexts on its top-tier Claude Opus 4.6 and Sonnet 4.6 models is far more than a simple price adjustment. It is a declaration of infrastructural maturity and a strategic maneuver that signals the commoditization of massive context. This move forces the entire industry to recalibrate its expectations for what complex AI applications can be.

The Million-Token Hurdle: Why Context Cost Was a Bottleneck

To understand the magnitude of Anthropic’s move, we must first understand the engineering challenge of long context. Think of a model’s context window as its short-term memory. If you ask a model to summarize a 5-page article, it’s easy. If you ask it to review the entire charter and all associated documents for a merger worth billions, you need massive context.

Historically, LLMs struggled with long inputs because of the attention mechanism at the core of the Transformer architecture. In standard self-attention, every token is compared with every other token, so when the input length ($N$) doubles, the attention computation roughly quadruples (the dreaded $O(N^2)$ complexity). On top of that, the KV Cache, which stores the keys and values for every token already processed, grows linearly with $N$ and occupies expensive GPU memory for the duration of the request. Together, these costs are what led providers to charge a significant surcharge for context windows exceeding 100k or 200k tokens.
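To make the scaling concrete, here is a minimal Python sketch that counts the query-key scores a single attention head computes over a sequence. It assumes plain, unoptimized self-attention and ignores every trick a production serving stack would apply; the function name is illustrative only.

```python
def attention_score_count(seq_len: int) -> int:
    """Query-key scores computed by one attention head when every
    token attends to every token (plain, unoptimized self-attention)."""
    return seq_len * seq_len


for n in (100_000, 200_000, 400_000):
    print(f"{n:>7} tokens -> {attention_score_count(n):.2e} scores per head")

# Doubling the input length quadruples the number of scores.
ratio = attention_score_count(200_000) / attention_score_count(100_000)
print(ratio)  # 4.0
```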

Anthropic’s removal of this surcharge means one of two things, or likely both:

- Infrastructural Mastery: They have implemented significant internal efficiencies, perhaps better memory management or novel inference algorithms, that dramatically lower the *marginal cost* of serving an extra token in a very long sequence.

- Strategic Market Aggression: They are deliberately trying to corner the market share requiring deep document understanding by making their offering undeniably the best value proposition for complex, context-heavy tasks.

For the developer audience, this is a license to build without a pricing penalty for crossing a length threshold. For the CIO, this means the financial barrier to entry for truly transformative AI projects, like synthesizing decades of institutional knowledge, has dropped sharply.

The Ripple Effect: Triggering the LLM Pricing Wars

The AI landscape is intensely competitive. When one major player makes a move that fundamentally alters the cost structure of a key feature, rivals are forced to respond. This development directly feeds into the ongoing **"context window pricing wars."**

If you are running an application that processes massive user manuals or deeply analyzes research papers, switching costs are high, but so is the cost of overpaying for tokens. Anthropic’s move effectively resets the baseline that rivals have to match. Competitors like OpenAI (with GPT-4 Turbo) and Google (with Gemini 1.5 Pro) must now evaluate their own long-context pricing tiers.

For practitioners evaluating APIs, the difference is stark. A single legal discovery process could involve 500,000 input tokens. If a competitor charges double the rate for those last 300,000 tokens, the application’s operating cost skyrockets unnecessarily. By eliminating the surcharge, Anthropic presents a unified, predictable cost structure for even the most demanding tasks. This quantitative advantage forces competitors to either match the price or find an equivalent functional advantage elsewhere, accelerating the overall pace of innovation.
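The arithmetic behind that comparison can be sketched in a few lines of Python. The base price of $3.00 per million input tokens is a placeholder chosen for illustration, not a quoted rate from any provider, and the 200,000-token threshold is inferred from the "last 300,000 tokens" in the example above. The sketch follows the text's framing, in which only the tokens past the threshold are surcharged; a provider that reprices the whole request once the threshold is crossed would cost more.

```python
from typing import Optional


def input_cost(tokens: int, price_per_million: float,
               threshold: Optional[int] = None,
               multiplier: float = 1.0) -> float:
    """Dollar cost of `tokens` input tokens.

    With a `threshold`, tokens above it are billed at
    price_per_million * multiplier; without one, pricing is flat.
    """
    if threshold is None or tokens <= threshold:
        return tokens * price_per_million / 1_000_000
    base = threshold * price_per_million
    extra = (tokens - threshold) * price_per_million * multiplier
    return (base + extra) / 1_000_000


PRICE = 3.00        # hypothetical USD per million input tokens
TOKENS = 500_000    # the legal discovery example from the text

flat = input_cost(TOKENS, PRICE)
tiered = input_cost(TOKENS, PRICE, threshold=200_000, multiplier=2.0)

print(f"flat:   ${flat:.2f}")            # flat:   $1.50
print(f"tiered: ${tiered:.2f}")          # tiered: $2.40
print(f"ratio:  {tiered / flat:.1f}x")   # ratio:  1.6x
```

Under these assumptions the surcharged request costs 60% more than the flat one, and the gap widens as the request grows past the threshold.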

Unlocking New Enterprise Paradigms

The most profound implication of cheap, massive context is the shift from relying on Retrieval-Augmented Generation (RAG) to **native context ingestion.**

RAG is the current dominant technique, in which a retrieval system (typically a vector database) pulls small, relevant chunks of data from a massive knowledge base and hands them to the LLM *before* it answers a query. It works, but it's imperfect: if the required information is spread across three disconnected documents, RAG might miss the critical link.

With million-token windows, organizations can bypass much of the complexity of RAG development for many use cases:

- Codebase Analysis: Imagine feeding an entire legacy codebase (hundreds of thousands of lines) into Opus and asking it to identify every instance where a specific, deprecated function call is used, or to draft a migration path for a new framework. This was often cost-prohibitive before.

- Regulatory Compliance & Due Diligence: Legal and financial firms can ingest years of internal compliance reports, meeting transcripts, and contractual agreements in a single prompt to spot subtle patterns of risk that chunking might obscure.

- Medical and Scientific Synthesis: Researchers can load dozens of full-text academic papers and ask the model to synthesize novel connections between disparate findings—a task that requires deep, contiguous memory.
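Before committing to direct ingestion for use cases like these, it helps to check whether the material plausibly fits in the window at all. The sketch below is a rough pre-check, assuming the rule of thumb quoted earlier (one million tokens is roughly 750,000 words) and a whitespace word count. The function names and the 50,000-token reserve for instructions and output are hypothetical choices; a real tokenizer will give different counts, especially for code.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb: 1M tokens is roughly 750k words


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a whitespace word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def fits_in_window(documents: list[str], window: int = 1_000_000,
                   reserved: int = 50_000) -> bool:
    """True if the documents plausibly fit in `window` tokens while
    leaving `reserved` tokens for instructions and the answer."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total <= window - reserved


report = "word " * 300_000               # stand-in for a 300,000-word document
print(estimate_tokens(report))           # 400000
print(fits_in_window([report, report]))  # True  (about 800k tokens)
print(fits_in_window([report] * 3))      # False (about 1.2M tokens)
```

Workloads that fail this check are still candidates for retrieval, or for a hybrid in which retrieval narrows the corpus to something that fits.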

This democratizes sophisticated intelligence. It moves AI from being a highly specialized tool requiring expert prompt engineering and complex system architecture (RAG) to being a powerful, general-purpose analysis engine that simply needs the relevant data fed directly to it.

The Technical Backbone: Efficiency at Scale

Why is Anthropic able to drop the surcharge when others hesitated? The answer most likely lies under the hood, in **efficiency improvements for serving long contexts.**

The secret sauce for serving these massive contexts affordably often involves optimizing how the model stores the intermediate calculations for attention: the aforementioned KV Cache. This cache eats up precious, fast GPU memory. Techniques that compress, offload, or dynamically manage this cache are what allow serving hardware to handle a 1M-token request without timing out or becoming prohibitively expensive.
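A back-of-the-envelope estimate shows why this cache matters. The standard sizing formula multiplies two tensors (keys and values) by the number of layers, key-value heads, head dimension, sequence length, and bytes per stored value. The model dimensions below are hypothetical, picked only to be in the range of a large open model; they are not Claude's architecture, which is not public.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for the keys and values of one sequence.

    The leading 2 accounts for storing both a key and a value tensor.
    """
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape, for illustration only.
SHAPE = dict(n_layers=80, n_kv_heads=8, head_dim=128)

fp16 = kv_cache_bytes(1_000_000, **SHAPE, bytes_per_value=2)
int8 = kv_cache_bytes(1_000_000, **SHAPE, bytes_per_value=1)

print(f"16-bit cache: {fp16 / 1e9:.1f} GB")  # 16-bit cache: 327.7 GB
print(f" 8-bit cache: {int8 / 1e9:.1f} GB")  #  8-bit cache: 163.8 GB
```

Under these assumed dimensions, a single one-million-token request needs hundreds of gigabytes of cache at 16-bit precision, and storing values at 8 bits (one simple form of compression) halves that. The cache grows linearly with sequence length, so every reduction in per-token footprint carries through to the longest requests.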

When we see a price drop like this, we are likely witnessing the successful industrialization of recent ML research breakthroughs. It suggests that the serving stack behind the current Claude models handles the cost of long sequences significantly better than previous generations did.

This technical validation is crucial. It tells the industry that long context is not a fleeting feature reliant on massive, unsustainable hardware clusters; it is becoming a sustainable, engineered capability. This pushes the entire ecosystem—from chip makers to framework developers—to focus on scaling these efficiency techniques further.

Actionable Insights for Leaders and Builders

The landscape has fundamentally changed. Here are the immediate implications for different stakeholders:

For AI Developers and Practitioners:

- Revisit your RAG Strategy: If your current RAG implementation is overly complex, see if you can transition parts of your workload directly into the context window. Test the performance of direct ingestion versus retrieval. You might find that a simpler, direct prompt yields higher quality and lower overall cost.

- Embrace Complexity: Start prototyping the "impossible" applications you shelved due to token limits. Analyzing entire user manuals, legal contracts, or multi-day meeting logs is now within budget for serious testing.

For Business Strategists and CIOs:

- Budget Reallocation: The high cost of context was an OPEX drain. Reallocate those savings toward expanding the *scope* of AI projects, not just paying for the same old tasks. Invest in training your teams on how to formulate prompts that utilize this newfound deep memory.

- Demand Parity: Engage your current LLM vendors aggressively. This move sets a new industry baseline. You should be asking not just *if* your current provider can match this, but *when* and *how* they will surpass it. The competitive pressure is immense.

The Future is Contextually Aware

The transition from models that remember a few paragraphs to models that can ingest entire libraries is the next great inflection point in generative AI. It shifts the primary utility of the LLM from being a sophisticated text generator to becoming a powerful, comprehensive reasoning engine.

When an AI can truly hold all the relevant facts of a situation in its mind simultaneously—be it a 300-page government report or a decade of customer service transcripts—its outputs move from being generalized answers to highly specific, actionable business intelligence. Anthropic’s aggressive pricing strategy is the catalyst pushing this future from the research lab into the mainstream enterprise environment. We are entering the age of the LLM as the ultimate institutional memory.