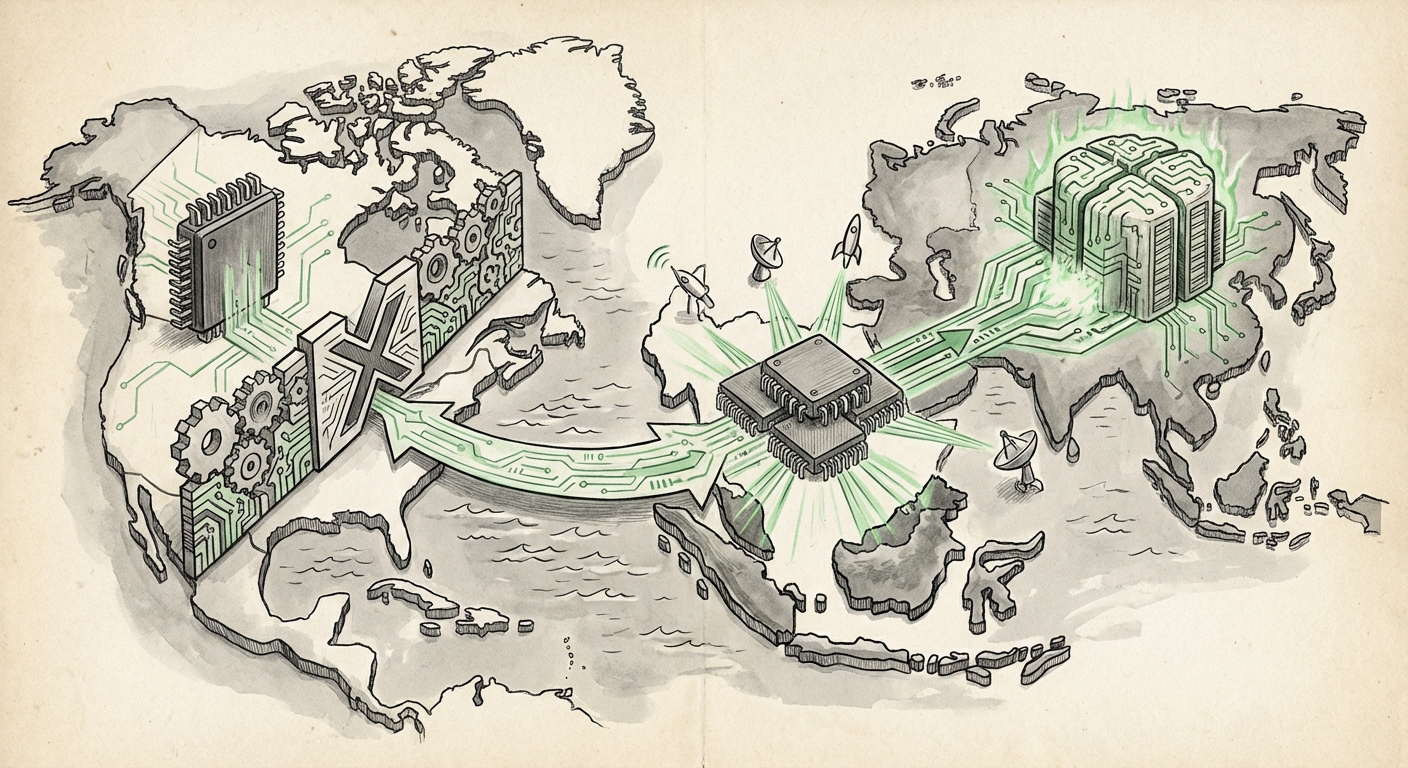

Geopolitical Chip Wars: How ByteDance’s Malaysia Gambit Redraws the AI Infrastructure Map

In the high-stakes world of Artificial Intelligence development, computing power is the new oil. The race to build the largest and most capable Large Language Models (LLMs) depends almost entirely on access to cutting-edge Graphics Processing Units (GPUs)—primarily those designed by Nvidia. Recently, a major development has surfaced that highlights just how intense the global struggle for this essential hardware has become: ByteDance, the parent company of TikTok, is reportedly securing access to a massive cluster of Nvidia’s latest, most powerful chips—the Blackwell architecture—in Malaysia.

This move, allegedly involving around 36,000 Blackwell chips, is not merely a routine business transaction. It is a textbook example of supply chain maneuvering designed to navigate the labyrinth of international trade policy, specifically the strict US export controls aimed at limiting China's access to advanced AI technology. To assess it properly, we need to dissect the development, understand the regulatory environment driving it, and project its implications for the future of cloud infrastructure and global data sovereignty.

The Regulatory Wall: Why Malaysia? Understanding US Export Controls

To grasp the significance of ByteDance moving hardware operations to Southeast Asia, we must first understand the barrier they are circumventing. The United States government has enacted stringent export controls, primarily managed by the Commerce Department’s Bureau of Industry and Security (BIS). These rules are explicitly designed to prevent US-origin technology deemed critical for advanced military or intelligence applications—which today firmly includes leading-edge AI chips—from reaching certain entities or nations, particularly China.

The Technical Thresholds of Restriction

These controls aren't arbitrary; they are based on performance metrics. Chips like the Nvidia A100 and H100 were initially targeted because their high processing capability, especially their interconnect speeds (how fast chips talk to each other), made them ideal for training massive, state-of-the-art AI models. When Nvidia released slightly slower, "China-compliant" versions, the US tightened the rules further.
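To make the idea of a performance-based threshold concrete, here is a minimal Python sketch. It is not the BIS rule text: the chip figures, the cutoff values, and the function names are hypothetical assumptions chosen for illustration. It shows how a slower-interconnect variant could pass a rule that requires both high compute and high interconnect bandwidth, and then fail once the rule is tightened to look at compute alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    name: str
    dense_tops: float         # peak dense throughput, tera-operations per second
    bit_length: int           # operand width that the throughput figure refers to
    interconnect_gbps: float  # chip-to-chip bandwidth in GB/s


# Hypothetical cutoffs for illustration only; consult the actual rule text
# for the real metrics and values.
COMPUTE_SCORE_LIMIT = 4800.0
INTERCONNECT_LIMIT_GBPS = 600.0


def compute_score(chip: Chip) -> float:
    """Throughput weighted by operand width (an assumed scoring scheme)."""
    return chip.dense_tops * chip.bit_length


def restricted_original(chip: Chip) -> bool:
    """Assumed first-generation rule: compute AND interconnect must both hit the limit."""
    return (
        compute_score(chip) >= COMPUTE_SCORE_LIMIT
        and chip.interconnect_gbps >= INTERCONNECT_LIMIT_GBPS
    )


def restricted_tightened(chip: Chip) -> bool:
    """Assumed tightened rule: the compute score alone triggers the restriction."""
    return compute_score(chip) >= COMPUTE_SCORE_LIMIT


# Two made-up chips: a flagship and a variant with a throttled interconnect.
FLAGSHIP = Chip("flagship", dense_tops=600.0, bit_length=8, interconnect_gbps=600.0)
EXPORT_VARIANT = Chip("export-variant", dense_tops=600.0, bit_length=8, interconnect_gbps=400.0)
```

Under these assumed numbers, `restricted_original(EXPORT_VARIANT)` is false while `restricted_tightened(EXPORT_VARIANT)` is true, which mirrors the compliant-variant-then-tightening sequence described above.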

The Blackwell generation, which succeeds the Hopper architecture behind the H100, represents an even greater leap in performance, and it is this generation that the reported Malaysian cluster would use. Even recent, minor relaxations by the Trump administration have explicitly excluded these next-generation, high-performance chips. For a company like ByteDance, whose main rivals (such as OpenAI and Microsoft) operate freely within the US sphere, restricted access risks leaving it years behind in core AI capability. This creates a critical performance gap that can only be bridged through infrastructure that sits outside the direct reach of those restrictions.

For technical audiences, this means the Malaysian deployment isn't about saving money; it’s about accessing *power*. It’s the difference between training on restricted, older-generation GPUs and acquiring the newest, fastest hardware available on the open market, unrestricted by US licensing requirements. (See related analysis on the technical gaps: [AnandTech Review: Performance Delta Between Geopolitical AI Workloads](https://www.anandtech.com/show/21x/ai-hardware-performance-delta))

Corroboration and the Malaysian Hub Strategy

While the initial report originates from the Wall Street Journal, critical analysis requires independent verification and contextual sourcing. Even unconfirmed, a reported cluster of 36,000 Blackwell chips is consistent with a growing trend across the industry:

- Industry Validation: If other major financial news outlets confirm the scale of the deployment, the event moves from speculation to a significant strategic move, and its investment timeline and market impact become easier to assess.

- Southeast Asia as a Neutral Zone: ByteDance is leveraging countries like Malaysia, which have robust cloud infrastructure but are not subject to the same level of US export restrictions as mainland China. This fits a broader pattern of Chinese tech companies investing in Southeast Asian AI infrastructure. Nations in ASEAN are becoming the critical "swing states" of the AI Cold War, offering the infrastructure needed without imposing the same restrictive sourcing rules.

For business leaders, this confirms that geopolitical risk mitigation is now a primary driver of infrastructure decisions. The deployment effectively creates a high-performance, geo-fenced "sandbox" for ByteDance’s most advanced AI research and development, outside the immediate reach of US regulators.

What This Means for the Future of AI Development

A deployment of this scale in Malaysia sends shockwaves through the entire AI ecosystem. It signals the maturation of AI hardware decoupling, where supply chains are intentionally bifurcated based on geopolitical alignment.

1. Acceleration of Dual AI Ecosystems

The most significant implication is the acceleration of two distinct, globally competitive AI ecosystems: the US/Western sphere and the China-centric sphere. Companies outside the US sphere, knowing they cannot easily access the very latest, top-tier hardware domestically, are forced to invest heavily abroad or develop domestic alternatives faster.

ByteDance isn't just training TikTok algorithms here; it is building foundational models. If these models, trained on cutting-edge Blackwell hardware in Malaysia, match or exceed Western counterparts, it would suggest that geopolitical restrictions mostly redirect innovation onto alternative paths rather than stop it, often speeding up development in other jurisdictions. That would accelerate the decentralization of AI capability.

2. The Sovereignty of Compute

Data sovereignty, the principle that data is subject to the laws of the country where it is stored, is complicated by compute sovereignty: the question of who controls the hardware on which models are trained. If massive training runs occur in Malaysia for a Chinese-owned company, where does the intellectual property of the resulting model truly reside? This forces governments across Southeast Asia to rapidly develop clearer regulations around data residency, chip access, and ownership of the AI models trained on their soil.

For developing nations, this is a double-edged sword. They gain significant investment, jobs, and advanced technological capabilities (a benefit highlighted when analyzing the broader "ASEAN Rush" for AI infrastructure). However, they also become key battlegrounds in the technological rivalry between superpowers, potentially subjecting them to secondary sanctions pressure from the US.

3. Nvidia’s Tightrope Walk

This situation places Nvidia in an incredibly precarious position. While they technically comply with US law by not *selling* the restricted chips directly to China, the reality is that their product (Blackwell) is being used by a major Chinese entity via a third-party jurisdiction (Malaysia). Nvidia relies heavily on the Chinese market for revenue. They must navigate the fine line between satisfying US regulatory demands and maintaining market access with global giants like ByteDance, who are resourceful enough to find secondary routes.

Practical Implications for Businesses and Society

This hardware maneuver has tangible consequences for every business building or relying on AI.

For AI Developers and Researchers:

If you are working on cutting-edge LLMs, you must factor in geopolitical access as a core resource constraint. The cloud platforms you rely on might suddenly become less reliable if they are physically located in a region that faces future regulatory scrutiny. Diversification of cloud partners and regions is no longer optional; it is essential risk management.

For Cloud Providers and Data Centers:

Malaysia, and the wider ASEAN region, is now a validated high-demand territory for hyperscale AI compute. Data center operators must rapidly scale power and cooling infrastructure specifically for AI workloads. This creates massive, short-term capital expenditure opportunities but also regulatory risk if they become the default staging ground for restricted technology deployment.

For Governments and Policymakers:

The current set of export controls, while effective at slowing direct access, is clearly forcing traffic into adjacent territories. Policymakers must now decide if they will extend their regulatory reach (e.g., imposing secondary restrictions on countries hosting restricted compute) or if they will tacitly accept this diffusion as the inevitable outcome of technological demand, thereby encouraging investment in their own regions.

Actionable Insights: Navigating the New Reality

The ByteDance move underscores that the AI hardware landscape is permanently fractured by geopolitics. Here are actionable steps for technology leaders:

- Geographic Redundancy in Compute: Do not anchor mission-critical AI training solely in one geopolitical bloc. Plan your infrastructure redundancy across North America, Europe, and Asia-Pacific regions that are perceived as politically neutral, understanding that "neutrality" is increasingly fragile.

- Anticipate Performance Tiers: Assume that the chips available to you will be tiered based on your corporate domicile and regulatory status. Budget and plan LLM development not for the "best available" chip, but for the "best legally accessible" chip, and build development pipelines that can adapt when better hardware becomes available elsewhere.

- Invest in Software Efficiency: Since hardware access is politically restricted, the next best investment is in software efficiency. Optimizing model size and training algorithms to extract maximum performance from lower-tier or slightly older hardware (like the compliant chips sold into China) is a crucial hedge against future supply shocks.
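The first two points above can be sketched as a small planning helper. This is a minimal illustration, not a procurement tool: the chip tiers, performance scores, region labels, and the `accessible` flag (which stands in for your own legal and compliance review) are all hypothetical assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChipOption:
    name: str
    relative_performance: float  # hypothetical score, higher is faster
    region: str                  # where the capacity is hosted
    accessible: bool             # outcome of your own compliance review


def best_accessible(options: list[ChipOption]) -> Optional[ChipOption]:
    """Return the fastest option that is legally accessible, or None if there is none."""
    candidates = [o for o in options if o.accessible]
    return max(candidates, key=lambda o: o.relative_performance, default=None)


def regions_covered(options: list[ChipOption]) -> set[str]:
    """Regions in which at least one accessible option exists."""
    return {o.region for o in options if o.accessible}


# Made-up catalog: the fastest tier is not accessible to this organization.
CATALOG = [
    ChipOption("tier-1", relative_performance=10.0, region="north-america", accessible=False),
    ChipOption("tier-2", relative_performance=6.0, region="asia-pacific", accessible=True),
    ChipOption("tier-3", relative_performance=3.0, region="europe", accessible=True),
]

PLANNING_TARGET = best_accessible(CATALOG)
COVERED_REGIONS = regions_covered(CATALOG)
```

With this catalog, planning targets `tier-2` rather than `tier-1`, and the covered regions show whether training capacity is spread across more than one bloc. Re-running the helper when a tier's `accessible` flag changes is one simple way to keep a pipeline adaptable.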

Whether or not every reported detail holds up, the Blackwell deployment in Malaysia is a clear signal. It suggests that while the US controls the *design and export* of cutting-edge AI silicon, it cannot fully control its *deployment* when global demand is this intense. The map of AI power is being redrawn, not just in Silicon Valley, but in emerging tech hubs across Asia, turning countries like Malaysia into unexpectedly vital strategic assets in the race for artificial general intelligence.