The AI Segmentation Revolution: Why Google's Nano Banana Models Signal the End of 'One-Size-Fits-All' AI

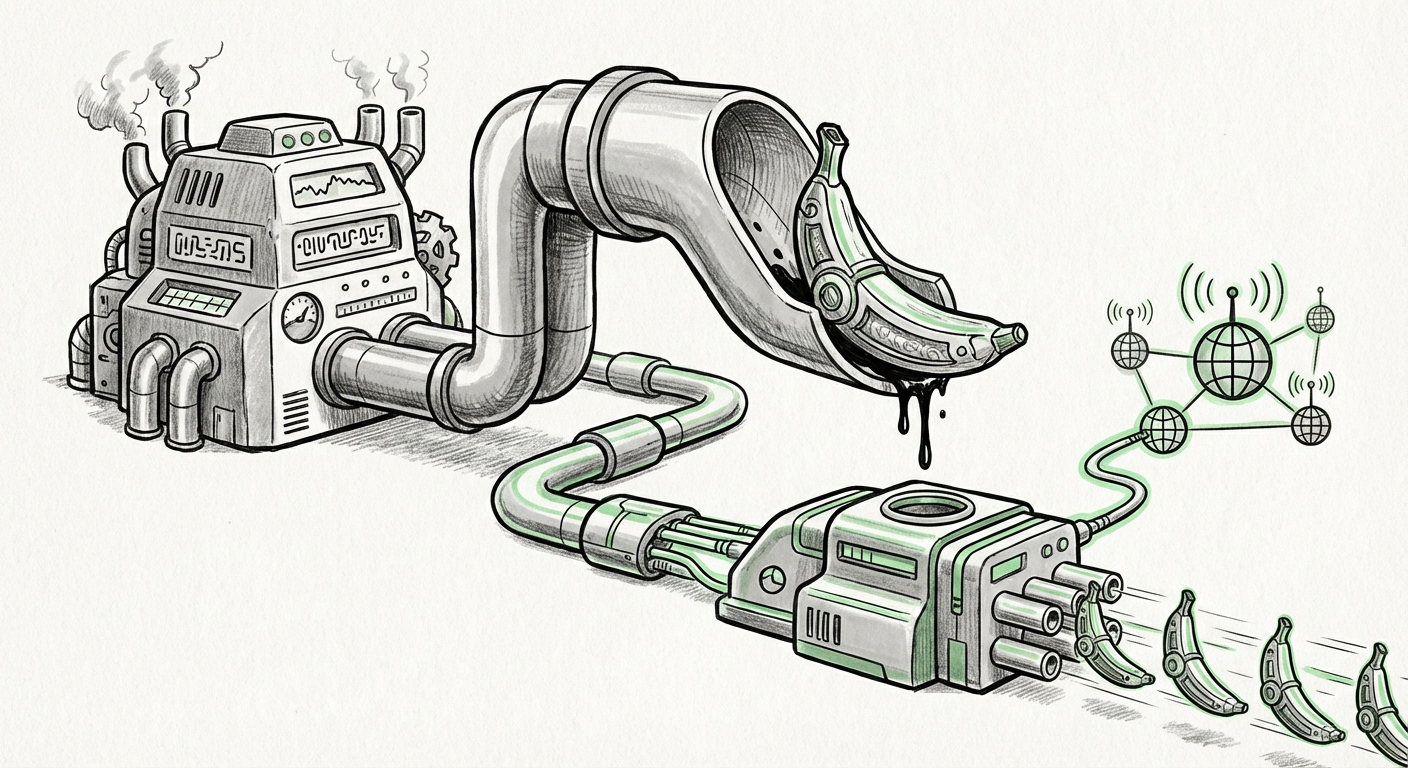

The world of Artificial Intelligence development has long been characterized by a relentless pursuit of scale. Bigger models, more parameters, and unprecedented computational power dominated headlines. However, the recent breakdown of Google’s "Nano Banana" image generation lineup—specifically the introduction of a highly capable, cost-effective version—marks a significant pivot. This isn't just about a new product; it’s a declaration that the future of accessible and practical AI lies in segmentation, efficiency, and real-time grounding.

The core revelation is compelling: Nano Banana 2 reportedly delivers 95% of the flagship Pro model’s capabilities while being cheaper and smart enough to search the web for current reference material. For both the engineers building these systems and the businesses planning to deploy them, this signals a critical maturation of the Generative AI market.

The Economics of Efficiency: Why Smaller Models Win the Volume Game

For years, the narrative suggested that the most powerful model—the one with the most parameters—was the only one worth using. This approach, while leading to breakthroughs, created a massive barrier to entry due to prohibitive training and, more importantly, inference costs. Imagine a complex factory designed only to produce luxury sports cars when 90% of the market needs reliable, affordable sedans.

Google’s strategy, mirrored across the industry in the broader push for cost efficiency in LLMs, addresses this directly. By creating tiers, the company is optimizing for two distinct customer segments:

- The Pro Tier: Reserved for niche tasks requiring absolute peak quality, experimental accuracy, or complex reasoning that justifies the high computational expense. This is the 'research-grade' or 'premium creative' option.

- The Economy Tier (Nano Banana 2): This model targets the vast majority of business applications—marketing assets, internal documentation graphics, rapid prototyping—where 95% quality at 10% of the cost is an overwhelming value proposition.

From a technical standpoint, this validates breakthroughs in model slimming techniques. Such efficiency is often achieved through methods like knowledge distillation (where a smaller model learns from the outputs of a larger one) or aggressive quantization (where weights are stored at lower numerical precision). Research into the trade-off between parameter count and performance points to a clear takeaway: specialized, smaller architectures can be incredibly potent when trained and tuned correctly. This trend moves AI from the exclusive domain of massive data centers toward broader, more sustainable deployment.
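To make the distillation idea concrete, here is a minimal sketch in plain Python of the soft-target loss commonly used in knowledge distillation: the student is penalized by the KL divergence between the temperature-softened teacher and student distributions. The logits, the temperature, and the function names are illustrative assumptions; nothing here reflects how Nano Banana 2 was actually trained.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # Scaling by T^2 is a common convention that keeps gradient
    # magnitudes comparable across temperatures.
    return kl * temperature ** 2

# Hypothetical logits, for illustration only.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]   # nearly agrees with the teacher
far_student = [0.5, 4.0, 1.0]     # disagrees with the teacher

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

A student whose outputs track the teacher's produces a small loss, while one that disagrees produces a large loss. Minimizing this quantity over many examples is what lets the smaller model inherit the larger one's behavior.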

Actionable Insight for Technical Teams:

Stop defaulting to the largest available model for every API call. Engineers must now benchmark the cost/benefit ratio. If Nano Banana 2 meets the requirements, deploying it saves significant operational expenditure (OpEx) without a noticeable drop in end-user experience.
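One way to frame that benchmark is sketched below: score each tier on your own evaluation set, then pick the cheapest tier that clears the quality bar for a given use case. The tier names, quality scores, and per-image prices are hypothetical placeholders, not published figures.

```python
from dataclasses import dataclass

@dataclass
class Tier:
    name: str
    quality: float         # score on your own eval set, from 0 to 1
    cost_per_image: float  # hypothetical price in USD

def cheapest_adequate_tier(tiers, min_quality):
    """Return the lowest-cost tier whose measured quality meets the bar."""
    adequate = [t for t in tiers if t.quality >= min_quality]
    if not adequate:
        return None  # no tier is good enough for this use case
    return min(adequate, key=lambda t: t.cost_per_image)

def monthly_savings(baseline, chosen, images_per_month):
    """Cost difference versus always calling the baseline tier."""
    return (baseline.cost_per_image - chosen.cost_per_image) * images_per_month

# Placeholder numbers; substitute your measured scores and real prices.
pro = Tier("pro", quality=1.00, cost_per_image=0.10)
economy = Tier("economy", quality=0.95, cost_per_image=0.01)

choice = cheapest_adequate_tier([pro, economy], min_quality=0.90)
print(choice.name, monthly_savings(pro, choice, images_per_month=50_000))
```

The quality bar is set per use case, so a task that needs flagship fidelity still routes to the Pro tier while high-volume work falls through to the cheaper one.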

The Real-Time Frontier: Integrating the Web into Creation

Perhaps the most transformative feature of the cheaper Nano Banana 2 is its autonomous web search capability. Traditionally, generative models are confined to the knowledge they held at the moment their training concluded—a static snapshot of the internet. If you asked a static model to create an image related to an event that happened yesterday, it would fail or hallucinate.

Nano Banana 2’s integration of web search is a concrete step toward Retrieval-Augmented Generation (RAG) for visual media. This capability is vital because it grounds creative output in current reality, greatly reducing the limitations imposed by a knowledge cutoff date.

Consider the applications: A marketing team needs a graphic featuring the latest product packaging just released this morning. A news outlet needs an illustration referencing a specific, breaking political milestone. Static models fail here. Dynamic models that can pull relevant, up-to-date reference images before rendering bridge this gap, making the AI far more relevant for fast-moving industries.

This mirrors similar efforts happening across the generative ecosystem, notably OpenAI’s integration of browsing into GPT-4. The race isn't just about who can generate the prettiest image; it’s about who can generate the most accurate and timely image.

Implication for Societal Trust:

While autonomy is powerful, it introduces new responsibilities. The model must now verify its search results to ensure the reference images it pulls for generation are credible and appropriate. This requires robust safety guardrails layered on top of the RAG pipeline to prevent the dissemination of misinformation or biased visuals pulled from unreliable sources.
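As a rough illustration of one such guardrail, the sketch below filters retrieved references against a domain allowlist before they ever reach the generator. The allowlist contents and the shape of the search results (dictionaries with a `url` key) are assumptions made for the example; a production pipeline would add content-level checks on top of this.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; a real deployment would maintain its own.
TRUSTED_DOMAINS = {"example.com", "example.org"}

def is_trusted(url, trusted=TRUSTED_DOMAINS):
    """True if the URL's host is an allowlisted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in trusted)

def filter_references(results, trusted=TRUSTED_DOMAINS):
    """Keep only HTTPS results that come from allowlisted domains."""
    kept = []
    for result in results:
        url = result["url"]
        if urlparse(url).scheme == "https" and is_trusted(url, trusted):
            kept.append(result)
    return kept

candidates = [
    {"url": "https://news.example.com/launch.png"},
    {"url": "http://example.com/insecure.png"},        # dropped: not HTTPS
    {"url": "https://example.com.evil.net/fake.png"},  # dropped: lookalike host
]
safe = filter_references(candidates)
print([r["url"] for r in safe])
```

Matching on the parsed hostname rather than on a substring of the URL is what keeps lookalike hosts from slipping through.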

The Competitive Landscape: Tiered Offerings as Strategy

Google is not operating in a vacuum. The move to sophisticated tiering is a direct competitive response to established strategies across the AI landscape. When examining the battle between giants like Google and OpenAI, their productization mirrors this segmentation.

The comparison of GPT-4o pricing with Gemini Advanced illustrates this perfectly. Companies are learning that consumers and developers are willing to pay a premium for the "flagship" model, but the high-volume market demands affordability and speed. By offering clear trade-offs (e.g., Pro gets the absolute highest fidelity, Nano Banana 2 gets speed and web access), Google segments its revenue streams effectively.

This tiered approach dictates how businesses choose infrastructure. A small startup might heavily utilize Nano Banana 2 via API for all its front-end visual needs, while a major movie studio might license the Pro version for highly specialized VFX pre-visualization. This forces vendors to invest heavily in both core research (Pro) and widespread deployment engineering (Nano).

Future Implications: The Modular AI Ecosystem

What does the success of the Nano Banana 2 model suggest for the next five years of AI?

1. The Rise of the "Agent Economy"

An autonomous, efficient model that can perform research before executing a task is the cornerstone of an AI agent. Nano Banana 2 isn't just an image generator; it’s a rudimentary agent capable of *Plan -> Search -> Execute*. As this capability filters down to cheaper tiers, we will see an explosion of specialized agents capable of handling complex, multi-step tasks across business workflows.
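The *Plan -> Search -> Execute* loop can be sketched in a few lines. Everything below is a hypothetical skeleton: `fake_search` and `fake_generate` are stand-ins for a real search backend and a real image model, and the planning step is a trivial stub rather than anything a production agent would use.

```python
def plan(task):
    """Decide which reference queries the task needs (stub heuristic)."""
    return [f"latest reference images: {task}"]

def search(queries, search_fn):
    """Run every planned query and collect the references found."""
    references = []
    for query in queries:
        references.extend(search_fn(query))
    return references

def execute(task, references, generate_fn):
    """Hand the task and the gathered references to the generator."""
    return generate_fn(prompt=task, references=references)

def run_agent(task, search_fn, generate_fn):
    queries = plan(task)
    references = search(queries, search_fn)
    return execute(task, references, generate_fn)

# Stand-ins so the sketch runs without any external service.
def fake_search(query):
    return [f"ref-for:{query}"]

def fake_generate(prompt, references):
    return {"prompt": prompt, "num_references": len(references)}

result = run_agent("new product packaging", fake_search, fake_generate)
print(result)
```

Passing the search and generation functions in as arguments keeps the loop itself independent of any particular vendor, which is useful when the same workflow has to run against more than one tier.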

2. Democratization of High-Fidelity Creation

If 95% quality is achieved affordably, the barrier to entry for high-quality digital asset creation plummets. Small businesses, independent creators, and educators will gain access to tools that previously required large design budgets. This lowers the cost of digital content creation dramatically, potentially leading to an inflation of easily produced visual media.

3. Focus on Vertical Optimization

While general models will remain important, we will see more companies developing models specifically optimized for single verticals—a 'Nano Finance Image Generator' or a 'Nano Medical Illustration Model.' These models will sacrifice general knowledge for extreme depth and accuracy in their specific domain, often built upon efficient base architectures like Nano Banana 2.

4. Latency Becomes the New Bottleneck

When quality is comparable (95%), the deciding factor in adoption often shifts to speed. How quickly can the image return? For real-time applications like interactive gaming or live customer service interfaces, low latency is non-negotiable. Cheaper, smaller models typically respond faster, making them the preferred choice for interactive experiences, although any added web search step contributes its own delay and should be measured.
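Latency claims are easy to verify empirically. The following sketch times repeated calls and reports the median and the 95th percentile (nearest-rank method); `call` is a placeholder for whatever client function actually issues the image request.

```python
import statistics
import time

def measure_latency(call, runs=20):
    """Time `call` repeatedly and return the per-call durations in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append(time.perf_counter() - start)
    return samples

def summarize(samples):
    """Median and nearest-rank 95th percentile of the samples."""
    ordered = sorted(samples)
    n = len(ordered)
    rank = (95 * n + 99) // 100  # integer ceiling of 0.95 * n
    return {"median": statistics.median(ordered), "p95": ordered[rank - 1]}

# Placeholder workload; swap in a real request to compare tiers.
samples = measure_latency(lambda: sum(range(1000)), runs=20)
print(summarize(samples))
```

Tail latency (p95) usually matters more than the average for interactive use, since a single slow response is what a user notices.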

Actionable Takeaways for Business Leaders

For business leaders navigating this rapidly evolving AI infrastructure, the message from Google’s segmentation is clear:

- Audit Your Use Cases: Identify every instance where you use generative AI. Does that task truly require the full quality of the flagship model, or would 95% suffice? Re-mapping high-volume, low-stakes tasks to the economy tier can yield immediate cost savings.

- Prioritize Real-Time Capabilities: Any AI tool that cannot access current information will quickly become obsolete for dynamic business functions (marketing, news, finance). Factor RAG or web-grounding capabilities into your procurement checklist.

- Prepare for Agent Integration: The transition from simple "prompt-to-output" to agent-based "task-to-completion" is imminent. Start testing workflows that require the AI to make preliminary decisions (like searching for input data) before rendering the final product.

Conclusion: The Maturity Phase of Generative AI

The strategic introduction of tiered, efficiency-focused models like Nano Banana 2 confirms that Generative AI is entering its maturity phase. The initial shockwave of raw capability is being replaced by the practical engineering of deployment, cost management, and utility. This move by Google validates a sophisticated market reality: **utility often trumps pure potential.** The ability to deliver nearly perfect results affordably, and ground those results in the present moment, is the true competitive edge moving forward. The age of the monolithic AI brain is yielding to the era of the specialized, cost-conscious, and context-aware digital workforce.