The Age of Tiered AI: How Google's Nano Banana Strategy Signals a Shift to Efficient, Grounded Generative Models

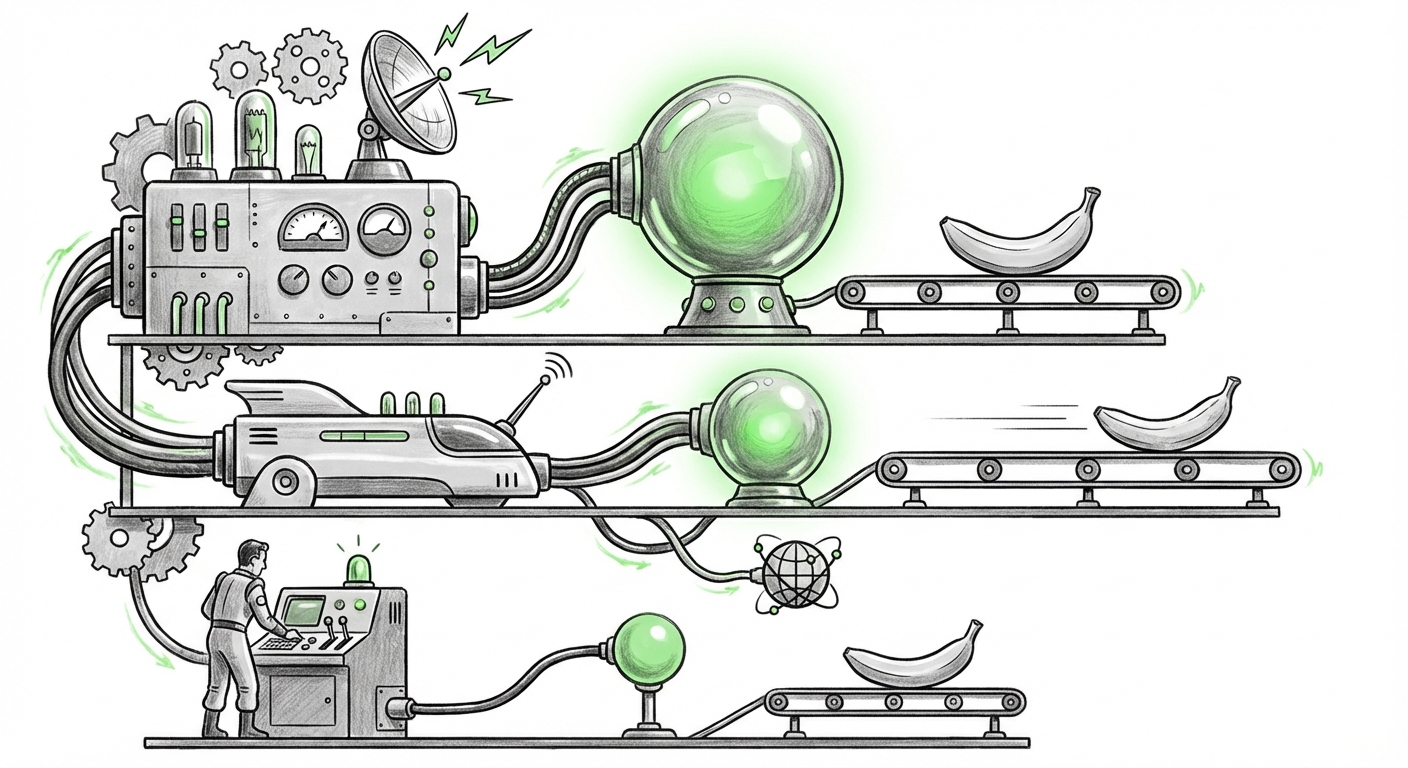

The generative AI landscape is often defined by massive, headline-grabbing launches of ever-larger foundation models. However, recent news detailing Google’s three "Nano Banana" image generation models suggests a far more nuanced, and perhaps more commercially significant, shift is underway. The key takeaway isn't just the new model, but the strategic breakdown: a cheaper version, Nano Banana 2 (NB2), reportedly achieves 95% of the flagship "Pro" model’s capability while crucially gaining the ability to search the web for reference images autonomously.

From an analyst's perspective, this revelation signals a move away from the pure pursuit of scale and toward the three priorities that will define the next era of AI deployment: Efficiency, Democratization, and Grounding. This tiered approach is not just smart product management; it is a blueprint for how scalable, real-world AI systems will be built and consumed across industries.

The Efficiency Imperative: Why 95% is the New 100%

For years, the AI race felt like a brute-force competition: the bigger the model (more parameters), the better the results. This required immense computational power (GPUs) and astronomical training costs. Google’s NB2, achieving near-parity performance at a lower cost, directly challenges this paradigm. This trend is resonating throughout the sector.

The Rise of Small, Mighty Models

Across the industry, the tradeoff between efficient models and flagship models is getting close attention: companies are actively seeking the 'sweet spot' beyond which further performance gains no longer justify the added cost. Techniques like distillation, pruning, and quantization allow developers to shrink powerful models without collapsing their core competency.
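As a rough illustration of one of these techniques, the sketch below applies symmetric 8-bit quantization to a handful of made-up weights. It is a toy under stated assumptions, not a description of how NB2 was built: the function names and the weight values are hypothetical, and production systems quantize whole tensors with specialized libraries.

```python
# Toy sketch of symmetric int8 quantization (illustrative values only).

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.40, -0.63]  # hypothetical weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The worst-case rounding error is about half the scale.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("int8 values:", q)
print("max reconstruction error:", max_error)
```

Each weight now fits in one byte instead of four, at the cost of a small, bounded rounding error. That is the same kind of trade the article describes: a little quality given up for a large saving in memory and compute.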

For the CTO and the infrastructure engineer, this is revolutionary. Running a full-fat Pro model for every user request is financially unsustainable for high-volume applications. If NB2 delivers 95% quality, the 5% performance sacrifice is easily outweighed by the massive reduction in inference costs. This efficiency fuels true model democratization—making powerful AI accessible to startups, smaller enterprises, and high-volume consumer applications that simply cannot afford the flagship price tag.

This tiered structure allows Google (and any competitor adopting this strategy) to cater to different user tiers:

- Pro Users: Need the absolute cutting edge, perhaps for highly specialized art or regulatory compliance where every pixel matters. They pay a premium.

- NB2 Users: Need high-quality, fast, and affordable results for marketing visuals, rapid prototyping, or internal tools. They benefit from the massive cost savings.

In simple terms: Why pay for a high-performance race car (Pro) to drive to the grocery store when a highly efficient, cost-effective sedan (NB2) gets you there 95% as well?

The Grounding Revolution: AI That Checks Its Sources

The second, and perhaps most impactful, feature of Nano Banana 2 is its ability to "search the web for reference images on its own before generating output." This is the industry’s answer to the perennial problem of AI hallucination and stagnation.

Moving Beyond Static Knowledge

Traditional generative models are inherently limited by the date their training data was collected. They cannot reference current events, new product designs, or the latest cultural memes. They generate based on what the world *was* like at the time of their last training cut-off.

By integrating real-time web search—a feature being aggressively explored across text and image AI—the model becomes grounded. When asked to generate an image of "the current popular social media interface," NB2 can query current search results to pull visual references, ensuring the output is timely and relevant.

Integrating real-time data into generative AI is essential for enterprise adoption. For businesses, outdated AI outputs are liabilities. If a marketing team asks for an image based on a competitor’s latest product launch, a static model will fail. A grounded model succeeds.

Furthermore, grounding vastly improves reliability and trust. When an image generation tool can show *why* it made certain design choices by referencing external sources, it moves from being a black box to a transparent co-creator. This transparency is non-negotiable for professional workflows.
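The retrieve-then-generate pattern described in this section can be sketched in a few lines. The reporting does not say how NB2 implements its search step, so everything here is an assumption made for illustration: `Reference`, `grounded_generate`, `fake_search`, and `fake_generate` are hypothetical names, and the two stubs stand in for a real search backend and a real image model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Reference:
    url: str
    caption: str


def grounded_generate(
    prompt: str,
    search: Callable[[str], List[Reference]],
    generate: Callable[[str, List[Reference]], str],
    max_refs: int = 3,
) -> Dict[str, object]:
    """Fetch references first, then generate, and report the sources used."""
    refs = search(prompt)[:max_refs]
    image = generate(prompt, refs)
    return {"image": image, "sources": [r.url for r in refs]}


# Stubs standing in for a real search service and image model (hypothetical).
def fake_search(query: str) -> List[Reference]:
    return [
        Reference("https://example.com/ref-1", f"result 1 for {query}"),
        Reference("https://example.com/ref-2", f"result 2 for {query}"),
        Reference("https://example.com/ref-3", f"result 3 for {query}"),
        Reference("https://example.com/ref-4", f"result 4 for {query}"),
    ]


def fake_generate(prompt: str, refs: List[Reference]) -> str:
    return f"<image for {prompt!r} using {len(refs)} references>"


result = grounded_generate(
    "the current popular social media interface", fake_search, fake_generate
)
print(result["image"])
print(result["sources"])
```

Returning the source URLs alongside the image is what makes the transparency argument concrete: the caller can show which references informed the output.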

Implications for Business Strategy and Adoption

The Nano Banana structure forces businesses to rethink their AI procurement and deployment strategies. The focus shifts from "which model is the best?" to "which model tier is the most economical for this specific job?"

The Economics of Adoption: Cost Reduction Strategies

Among cost reduction strategies for enterprise AI image generation, efficient, tiered models are the primary driver of wider adoption. Previously, deploying AI internally meant large, fixed infrastructure costs. Now, companies can rely on usage-based pricing that scales with demand:

- High-Volume, Low-Stakes Tasks: Use NB2 for internal documentation, early-stage mood boarding, or employee training materials where marginal quality differences are irrelevant.

- High-Stakes, Creative Tasks: Reserve the Pro model for final advertising campaigns or highly specific branding assets.

This granular cost control unlocks ROI for mid-market companies. AI moves from being a specialized laboratory expense to an integrated operational tool.

Actionable Insight: Auditing Your AI Workloads

Businesses should immediately audit their planned or existing AI workloads based on this tiered structure:

- Performance Threshold Mapping: For every task (e.g., generating social media images vs. generating concept art for a new car model), determine the minimum acceptable quality level. Is 95% sufficient?

- Grounding Needs Assessment: How often do your generation requests require knowledge of events from the last six months? If frequently, grounding capability (like NB2’s search feature) is more important than raw creative polish.

- Cost Modeling: Model the cost difference between running 10,000 requests per day on the Pro model versus NB2. The savings will dictate which tier gets deployed across the organization.
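The cost-modeling step in the audit above can be sketched as a few lines of arithmetic. The per-image prices below are placeholders invented for illustration, not published rates for either tier; only the 10,000 requests per day figure comes from the audit. Substitute real numbers from your provider's pricing page.

```python
REQUESTS_PER_DAY = 10_000

# Hypothetical per-image prices in USD; replace with actual rates.
PRICE_PER_IMAGE_USD = {"pro": 0.12, "nb2": 0.04}


def daily_cost(tier: str, requests: int = REQUESTS_PER_DAY) -> float:
    """Cost of sending `requests` images per day to a single tier."""
    return PRICE_PER_IMAGE_USD[tier] * requests


def blended_daily_cost(pro_share: float, requests: int = REQUESTS_PER_DAY) -> float:
    """Cost when only `pro_share` of requests go to Pro and the rest to NB2."""
    pro_requests = round(requests * pro_share)
    nb2_requests = requests - pro_requests
    return daily_cost("pro", pro_requests) + daily_cost("nb2", nb2_requests)


all_pro = daily_cost("pro")
all_nb2 = daily_cost("nb2")
mostly_nb2 = blended_daily_cost(pro_share=0.10)

print(f"All Pro:        ${all_pro:,.2f} per day")
print(f"All NB2:        ${all_nb2:,.2f} per day")
print(f"10% Pro blend:  ${mostly_nb2:,.2f} per day")
print(f"Annual savings, blend vs. all Pro: ${(all_pro - mostly_nb2) * 365:,.2f}")
```

The blended case matters most in practice, because few organizations will move every workload to one tier. Varying `pro_share` shows how quickly costs rise as more tasks are classified as high-stakes.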

The Future Landscape: Personalization and Specialization

Google’s move validates a broader trend away from the "one-size-fits-all" model. The future of AI is modular and specialized, built upon efficient foundations.

The Rise of Verticalized AI Ecosystems

Imagine the future of AI deployment:

A fashion retailer won't subscribe to one massive image model. Instead, they might use a highly specialized, fine-tuned version of the NB2 architecture for creating quick mockups of seasonal clothing on diverse models (efficiency + grounding for current trends). Simultaneously, their top design team might access a proprietary Pro-level model layered with internal, private design datasets, ensuring brand consistency.

This ecosystem means that AI providers will increasingly become platform operators, offering a "menu" of model capabilities rather than just a single API endpoint. This flexibility encourages deeper integration into existing software stacks, lowering the barrier to entry for developers.

The Simplicity for the End User

Crucially, while the backend architecture becomes more complex (managing three models), the user experience must remain simple. The user should ideally select a "Quality Profile" (e.g., Standard, Premium) and the platform handles the routing to NB2 or Pro seamlessly. This abstraction shields non-technical users from the underlying computational trade-offs, delivering the right quality at the right price without friction.
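A minimal sketch of that routing layer might look like the following. The profile names come from the example above; the tier identifiers `"nb2"` and `"pro"`, the `route_request` function, and the rule that requests needing web references go to NB2 are assumptions made for illustration, not a documented Google API.

```python
from enum import Enum


class QualityProfile(Enum):
    STANDARD = "standard"
    PREMIUM = "premium"


# Hypothetical tier identifiers, not real API model names.
TIER_FOR_PROFILE = {
    QualityProfile.STANDARD: "nb2",
    QualityProfile.PREMIUM: "pro",
}


def route_request(profile: QualityProfile, needs_web_references: bool = False) -> str:
    """Pick a model tier from the user's profile, hiding the trade-off from them."""
    # Assumption: only the NB2 tier can fetch web references,
    # so any request that needs them is sent there.
    if needs_web_references:
        return "nb2"
    return TIER_FOR_PROFILE[profile]


print(route_request(QualityProfile.STANDARD))
print(route_request(QualityProfile.PREMIUM))
print(route_request(QualityProfile.PREMIUM, needs_web_references=True))
```

Keeping the mapping in one table means the platform can retune which tier backs each profile without changing anything the end user sees.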

Analyzing the Competitive Landscape

This announcement puts pressure on competitors like OpenAI and Stability AI. If Google can offer a cost-effective, grounded solution, competitors must rapidly match this capability or risk ceding the high-volume, cost-sensitive market segment.

The race is no longer just about who has the most parameters, but who has the best-calibrated portfolio of models that meet real-world operational demands. The integration of search capabilities into generative outputs—often referred to as retrieval-augmented generation (RAG) in text models—is now proving essential for visual tools, pushing every major player to connect their models to the live internet.

Conclusion: Intelligence at Scale Requires Smarter Economics

The Nano Banana revelation is a crucial marker on the roadmap for mature AI deployment. It confirms that the industry is pivoting from a Phase 1 focus on proving possibility (Can AI create images?) to a Phase 2 focus on proving viability (Can AI create images affordably, reliably, and in real time?).

The future belongs to the platforms that master the art of the trade-off. By providing tiers that balance cost against near-perfect performance and by grounding their outputs in current reality, Google is signaling that the next wave of AI success will be measured not by the size of the model, but by the intelligence embedded in its economic structure and its ability to connect to the living world.