Hume AI's TADA: Zero Hallucination Speech, 5x Speed, and the Open-Source Revolution in Generative Audio

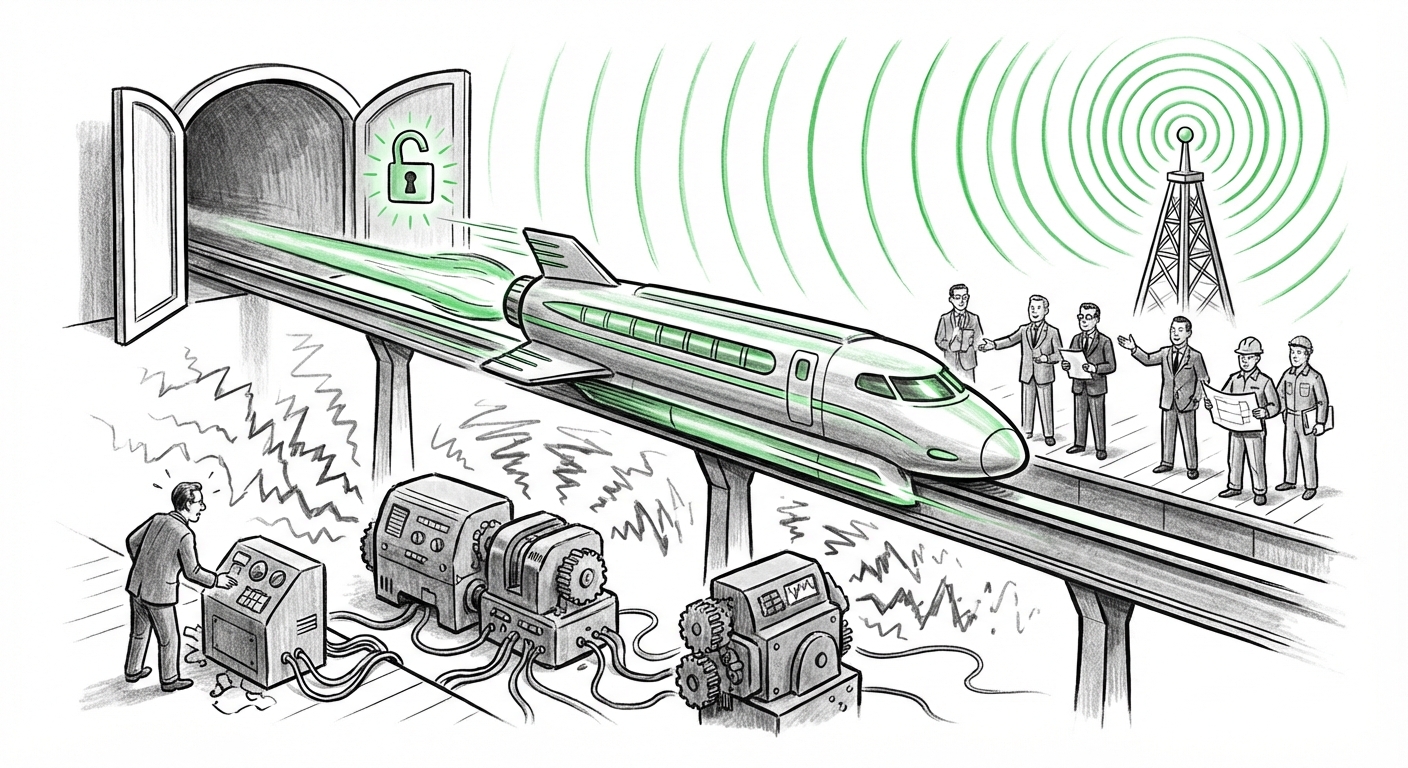

The landscape of generative AI is constantly reshaped by new breakthroughs, but few recent developments strike at the core necessities of enterprise adoption quite like the open-sourcing of Hume AI’s TADA speech model. Touted as being up to five times faster than its proprietary rivals while achieving a perfect record of zero hallucinated words in testing, TADA is not just another voice synthesizer; it represents a crucial pivot point toward **reliable, high-throughput synthetic media**.

As an AI technology analyst, I see this move—releasing a leading-edge model under the permissive MIT license—as a triple threat: it advances model architecture, addresses the critical issue of AI trust, and significantly shifts the competitive dynamics in the generative audio market.

The Architectural Leap: Synchronous Multimodal Processing

To appreciate TADA's speed and reliability, we must look under the hood at its architecture. Traditional text-to-speech (TTS) systems often involve multiple, sequential components: one model generates phonetic symbols, another predicts acoustic features, and a third synthesizes the raw waveform. This pipeline is inherently slow and prone to compounding errors.

TADA, however, takes a different route: **multimodal AI that processes text and audio in sync**.

Think of it like this: If an older system needed to read a recipe (text), pause, figure out the oven temperature (acoustic prediction), pause again, and finally start cooking (waveform generation), TADA reads the recipe, determines the heat, and starts baking all at once. This synchronous handling means the model works with integrated context from the start. Removing the hand-offs between stages cuts latency, which would account for the reported speedup of up to five times over proprietary rivals.

For researchers and ML engineers, this validates the industry move toward unified foundation models capable of handling different data types natively. When a model understands text and audio simultaneously, its outputs become richer, more natural, and, crucially, more tightly controlled, which is a prerequisite for keeping skipped, altered, or invented words out of generated speech.

The Trust Imperative: Why "Zero Hallucination" Matters

In the world of large language models (LLMs), "hallucination" means the AI confidently states something false. While embarrassing in a chatbot, this is catastrophic in commercial applications.

When you apply the concept of hallucination to speech synthesis, it means the model might confidently say the wrong number, misquote a policy, or invent a crucial piece of information during a customer interaction. This is precisely where the development of **zero-hallucination models** becomes a game-changer. While TADA’s specific implementation likely focuses on ensuring the generated speech perfectly matches the *input text* (preventing the model from injecting external, incorrect information into the spoken output), the symbolic victory of a "zero hallucination" claim in the audio domain signals a maturation of the entire generative pipeline.
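
If hallucination here means spoken output that departs from the input text, one rough way to check for it is to transcribe the generated audio with any speech recognizer and align the transcript against the script. The sketch below is only that comparison step, using Python's standard `difflib`; the function names are hypothetical, the transcript is assumed to come from an ASR system of your choice, and this is not how Hume AI measured its result.

```python
import re
from difflib import SequenceMatcher


def normalize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def compare_to_script(script: str, transcript: str) -> dict[str, list[str]]:
    """Align a transcript of generated speech against the script it was meant to read.

    'inserted' holds words that were spoken but are not in the script,
    'skipped' holds script words that were never spoken, and
    'substituted' holds script words that were replaced by something else.
    """
    ref = normalize(script)
    hyp = normalize(transcript)
    report: dict[str, list[str]] = {"inserted": [], "skipped": [], "substituted": []}

    # autojunk=False keeps difflib from treating frequent words as noise.
    matcher = SequenceMatcher(a=ref, b=hyp, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "insert":
            report["inserted"].extend(hyp[j1:j2])
        elif tag == "delete":
            report["skipped"].extend(ref[i1:i2])
        elif tag == "replace":
            report["substituted"].append(
                f"{' '.join(ref[i1:i2])} -> {' '.join(hyp[j1:j2])}"
            )
    return report
```

A clean run returns three empty lists. Because the recognizer can mishear correct audio, a non-empty report is a prompt to listen to the clip, not proof that the speech model made a mistake.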

This focus on **reliability is the key to unlocking enterprise AI adoption.** Business leaders, especially those in regulated sectors like banking, legal, or telecommunications, cannot deploy systems they cannot audit for truthfulness. A system that guarantees the spoken word is precisely what the script dictates is a system they can trust for automated compliance, high-stakes customer support, and internal training.

The Enterprise Applications of Reliable Speed

The combination of high speed and guaranteed fidelity opens up several immediate use cases:

- Real-Time Interactive Voice Response (IVR): Many current IVR systems sound robotic and respond slowly. A fast, faithful model like TADA could let phone support bots sound far more natural while responding with much lower latency, improving customer experience and reducing wait times.

- Dynamic Content Localization: Companies could generate high-quality voiceovers for global content (training videos, marketing material) in each language the model supports, keeping pace with rapid content iteration.

- Accessibility Tools: Faster, more natural speech generation makes screen readers and assistive technologies more pleasant and less taxing for daily use, enhancing accessibility across the board.

The Open-Source Challenger: Reshaping Market Dynamics

The decision by Hume AI to release TADA under the permissive MIT license alongside a performance claim that rivals closed-source leaders is perhaps the most disruptive element of this news. This pits open innovation directly against proprietary ecosystems.

The prevailing trend has seen the most powerful models locked behind APIs, controlled by a few well-funded tech giants. This limits customization, creates dependency, and often incurs steep per-use costs.

When a model that is reportedly faster and highly reliable is released under a permissive open-source license, the impact is immediate:

- Democratization of Performance: Smaller startups and academic labs can now build cutting-edge applications without needing multi-million dollar compute budgets or licensing fees.

- Rapid Iteration and Security: The open-source community can quickly find and patch vulnerabilities, optimize the model for specific hardware, and build specialized forks—accelerating improvement far beyond what a single company can manage.

- Competitive Pressure: Competitors must now match TADA’s baseline speed and accuracy while offering superior features or better pricing if they wish to keep their closed-source offerings attractive. This directly challenges the business models built on scarcity of top-tier performance.

This aligns with growing calls for greater transparency and auditability in AI. By opening the model, Hume AI signals a belief that the next wave of innovation will come from collaboration, not just closed competition.

The Ethical Horizon: Speed, Accuracy, and Synthetic Reality

As we contextualize this technology, we cannot ignore the inherent ethical responsibilities tied to highly advanced speech generation.

The very features that make TADA commercially viable—high speed, high accuracy, and natural output—also make it a powerful tool for misuse. The capability for **real-time voice cloning and synthetic media** demands proactive governance.

The speed of TADA means that a bad actor could generate convincing, hard-to-trace audio content almost instantly. This places immediate pressure on regulators and platform providers. The push for media authenticity standards, digital watermarking, and clear labeling of synthetic content will only intensify as the quality gap between real and generated audio narrows.

For businesses deploying TADA-like technology, ethical deployment is non-negotiable. This includes rigorous internal policies on preventing unauthorized voice cloning and mandatory clear disclosure when end-users are interacting with an AI voice rather than a human. The ability to eliminate speech hallucinations is a technical win; the responsibility to prevent malicious audio generation is a societal mandate.
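
To make the labeling and disclosure idea concrete, here is a minimal sketch of a provenance record stored alongside each generated clip. It is an assumption-laden illustration, not a watermark: the field names and both functions are hypothetical, and the hash only identifies the exact file, so it will not survive re-encoding. Production systems would more likely build on an established standard such as C2PA.

```python
import hashlib
from datetime import datetime, timezone


def provenance_record(audio_bytes: bytes, model_name: str, script: str) -> dict:
    """Build a sidecar record that labels a clip as synthetic (hypothetical schema)."""
    return {
        "synthetic": True,
        "model": model_name,
        # Fingerprint of the exact audio file that was produced.
        "audio_sha256": hashlib.sha256(audio_bytes).hexdigest(),
        # Fingerprint of the script, so the text can be audited without storing it here.
        "script_sha256": hashlib.sha256(script.encode("utf-8")).hexdigest(),
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }


def matches_record(audio_bytes: bytes, record: dict) -> bool:
    """Return True if the audio is byte-for-byte the clip described by the record."""
    return hashlib.sha256(audio_bytes).hexdigest() == record["audio_sha256"]
```

A record like this supports internal audits ("which clips did we generate, from which script?"), while detection of audio that has been edited or re-recorded needs an in-signal watermark.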

Actionable Insights for Leaders and Developers

The TADA announcement is a clear signal: the era of slow, inaccurate generative audio is drawing to a close. Here is what leaders and builders should be focusing on now:

For Business Leaders and Decision Makers:

- Audit Current Roadmaps: Review any planned deployment of TTS or voice AI. If it relies on older, sequential architectures, TADA or similar synchronous models could offer massive cost and latency improvements immediately.

- Prioritize Trust Over Novelty: Focus procurement and development efforts on models whose reliability claims (like zero hallucinations) can be verified on your own data, over models that merely offer interesting, but potentially unreliable, creative flair.

- Develop AI Governance Frameworks: Assume highly realistic synthetic audio will become common. Create clear policies now on when and how synthetic voices can be used internally and externally to maintain customer trust and ensure legal compliance.

For Developers and Researchers:

- Explore the Multimodal Paradigm: Dive deep into the TADA architecture to understand how integrated context leads to performance gains. The same approach may well carry over to vision and text-to-video generation.

- Benchmark Open Source: Rigorously test the open-sourced TADA against proprietary APIs on your own texts and hardware. The MIT license permits commercial use, modification, and redistribution as long as the license notice is retained, which could enable low-resource or edge-device deployments that API-only models cannot serve.

- Focus on Watermarking & Provenance: Given the open nature of the model, invest time in building robust detection and provenance tools to tag and track AI-generated audio, building the necessary security infrastructure around this powerful new tool.
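
For the benchmarking step, a common speed metric is the real-time factor (RTF): synthesis time divided by the duration of the audio produced, where values below 1 mean faster than real time. The harness below is a sketch under stated assumptions: `synthesize` is a hypothetical callable that takes text and returns a sequence of audio samples, and `fake_synthesize` is a stand-in that returns silence so the code runs without any model installed. It does not use TADA's actual API.

```python
import time
from statistics import median
from typing import Callable, Sequence


def real_time_factor(synthesis_seconds: float, audio_seconds: float) -> float:
    """Synthesis time divided by audio duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return synthesis_seconds / audio_seconds


def benchmark(
    synthesize: Callable[[str], Sequence[float]],
    texts: Sequence[str],
    sample_rate: int,
) -> dict[str, float]:
    """Time each synthesis call and summarize the real-time factors."""
    rtfs = []
    for text in texts:
        start = time.perf_counter()
        samples = synthesize(text)
        elapsed = time.perf_counter() - start
        rtfs.append(real_time_factor(elapsed, len(samples) / sample_rate))
    return {"median_rtf": median(rtfs), "worst_rtf": max(rtfs)}


def fake_synthesize(text: str) -> list[float]:
    """Stand-in model: returns 1600 silent samples (0.1 s at 16 kHz) per word."""
    return [0.0] * (1600 * max(1, len(text.split())))
```

Calling `benchmark(fake_synthesize, ["hello world"], 16_000)` exercises the harness; swapping in wrappers around an open model and a proprietary API, with the same texts on the same hardware, gives comparable numbers. For interactive use cases, time to first audio chunk matters as much as RTF and would need a streaming-aware variant.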

Conclusion: The Dawn of Reliable, Instantaneous Sound

Hume AI’s TADA announcement is far more than a press release about a faster model. It is a declaration that the engineering focus in generative AI is shifting from simply *making* content to making content that is *fast, accurate, and verifiable*.

By merging architectural efficiency (synchronous processing), reliability (zero hallucinations), and commitment to community (open-sourcing), TADA sets a challenging new standard. We are moving swiftly past the novelty phase of generative AI and entering a phase where these tools must prove their industrial utility. Generating accurate, low-latency speech at scale is no longer a futuristic dream; it is now a tangible, accessible technology ready to reshape human-computer interaction across nearly every digital touchpoint.