Why Did Meta Delay Avocado? The Real State of the AI Arms Race and the Shifting Sands of Leadership

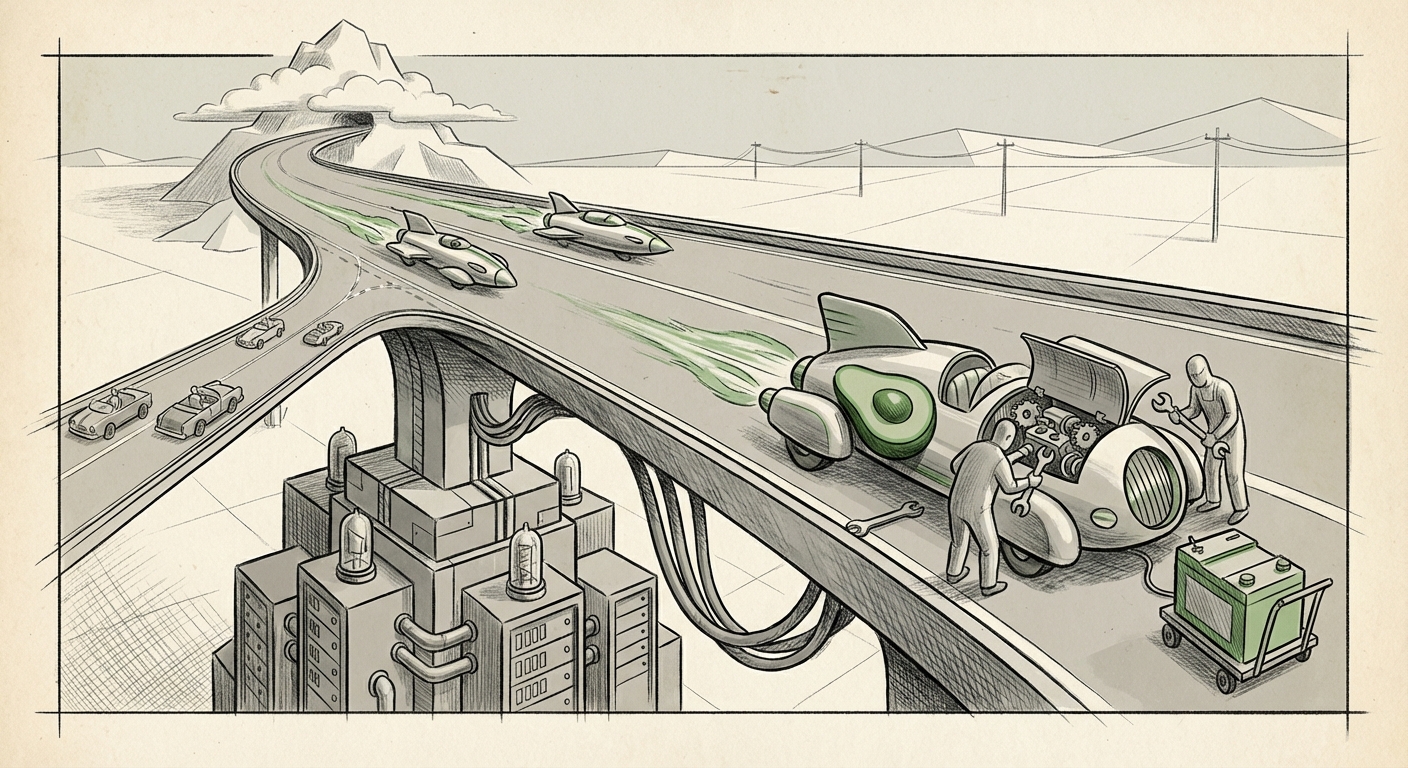

The world of Artificial Intelligence moves at breakneck speed. Yesterday’s state-of-the-art is today’s baseline. This rapid evolution was recently highlighted by reports suggesting Meta has postponed the release of its next flagship AI model, internally dubbed "Avocado," because it simply couldn't keep pace with the performance of rivals like Google and OpenAI. For those tracking the tech giants, this isn't just a product delay; it’s a vital signal about the true cost, complexity, and competitive dynamics defining the current frontier of AI development.

As an AI technology analyst, I view this setback not as a defeat for Meta, but as a critical data point illustrating the sheer difficulty of maintaining the lead. The competition is no longer about making *a* good model; it's about building models that push the boundaries of intelligence, safety, and efficiency simultaneously. To understand the implications of Avocado’s pause, we must look beyond the headlines and analyze the foundational challenges driving this intense arms race.

The Moving Goalposts: Benchmarks Define the Battlefield

When an internal test shows a model "can't keep up," it means the performance metrics—the benchmarks—have moved further and faster than expected. Think of benchmarks as standardized exams for AI. If your student studies diligently, but the difficulty of the exam suddenly doubles, they will score lower, despite their hard work.

The primary battleground is defined by models like GPT-4o, Claude 3, and Google’s Gemini family. These models are setting increasingly high bars in complex reasoning, multimodal understanding (handling text, images, and audio seamlessly), and coding ability. A delay in Avocado suggests that Meta’s engineers hit bottlenecks where their architecture or training data could not close the gap with the proprietary advances made by competitors.

Technical readers will recognize that even small percentage gains on standardized tests (like MMLU or HumanEval) can require massive, often unpredictable, increases in compute and novel architectural tweaks. **What this means for the future:** The gap between the top tier (OpenAI/Google) and the rest of the field is currently defined by access to superior research insight, optimized training pipelines, and sheer computational capital. Unless Meta can deliver a breakthrough architecture, catching up simply means spending more money, faster, in a race that is becoming increasingly expensive for everyone.

The Strategic Schism: Open Source vs. Proprietary Power

Meta has long championed an open approach, exemplified by its incredibly successful Llama family of models. Open-sourcing allows Meta to distribute its technology widely, fostering community innovation and rapidly testing its models in diverse real-world scenarios. This is a powerful strategic moat built on widespread adoption.

However, the rumored "Avocado" model suggests Meta is also striving for a closed, frontier-level system capable of matching GPT-4 or Gemini Ultra directly. This creates an internal tension:

- Talent Allocation: Are Meta’s best research teams divided between polishing the highly scalable, but slightly older, Llama architecture, and developing the cutting-edge, often secretive, "Avocado" pipeline?

- Philosophy Clash: If the leading proprietary models (like those from OpenAI) are consistently ahead, it calls into question whether an open approach can *ever* capture the absolute bleeding edge first.

This conflict, frequently discussed in strategy circles, forces Meta to choose where to place its primary bets. If Avocado stalls, it reinforces the narrative that closed ecosystems, fueled by massive centralized investment, retain the advantage for achieving peak performance.

Actionable Insight for Businesses: Companies relying on Meta’s Llama for deployment must watch closely. If Meta shifts substantial resources away from open models to exclusively chase proprietary performance, the pace of innovation in the open-source ecosystem might temporarily slow, impacting customization timelines for smaller enterprises.

Anthropic’s Quiet Ascent: Safety as a Performance Vector

Anthropic’s place among the leaders is not accidental. While Meta and Google often compete on raw capability, Anthropic (backed by Amazon and Google) has successfully positioned itself as the standard-bearer for safety and alignment: the practice of ensuring AI acts as intended.

Anthropic’s Claude models have consistently punched above their perceived weight class, often leading in complex ethical reasoning tasks and, increasingly, matching competitors in raw benchmark scores. This suggests that their focus on safety engineering is not a brake on performance, but perhaps an accelerator.

What this means for the future: Performance differentiation is moving beyond parameter count. If Anthropic’s approach—integrating safety constraints directly into the training loop (Constitutional AI)—yields robust, reliable systems that organizations trust more readily, then Meta needs to integrate similar "trust engineering" into Avocado. Trust is becoming a crucial, unquantified benchmark.

The Crushing Cost of Compute and the Hardware Bottleneck

Ultimately, even the most brilliant algorithm is useless without the hardware to run it. The delay of Avocado likely has a significant infrastructure component tied to the global scarcity and staggering expense of frontier AI training.

Training a state-of-the-art Large Language Model (LLM) can cost hundreds of millions of dollars in specialized hardware (primarily Nvidia GPUs), energy, and cooling. The continuous demand for these chips has led to shortages and skyrocketing costs. When Meta tests "Avocado" internally and finds it lacking, the easiest solution (more training time on more chips) is also the most financially punishing.

For the Future of AI Development: This hardware crunch is creating an elite club of AI developers. Only those with the deepest pockets (Microsoft/OpenAI, Google, Meta, and increasingly well-funded startups like Anthropic) can afford to stay in the game of training true frontier models from scratch. This centralization of training power poses a risk, potentially stifling diversity in architectural innovation outside the labs that can afford continent-sized data centers.

We are seeing fierce competition not just over algorithms, but over access to the supply chain. Future competitive advantage might hinge more on strategic deals with Nvidia or building custom AI silicon (like Google’s TPUs or Amazon’s Trainium) than on pure academic breakthroughs.

Future Implications: What Does This Mean for Your Business?

The pause on Avocado serves as a clear reminder that the AI landscape is highly volatile. Here are the practical implications:

1. Increased Reliance on Optimized Open-Source

If Meta’s closed efforts are temporarily struggling, businesses will continue to rely heavily on the *currently* available open models (Llama 3 variants). This makes efficiency and fine-tuning paramount. Instead of waiting for a model that might be 5% better next year, organizations must become experts at squeezing 95% of current potential out of existing, reliable architectures today.

2. The Rise of Specialized Fine-Tuning Services

As the frontier models (GPT-4o, Claude 3 Opus) become more expensive or restrictive, the value shifts to companies that can take a capable open model and train it specifically on proprietary industry data (legal, medical, engineering). The moat is moving from the base model itself to the unique, specialized data applied on top of it.

3. The Importance of Multimodality and Integration

Competitors are likely pulling ahead because of superior integration of different data types (vision, audio). For businesses, this means that the next wave of adoption will focus less on chatbots and more on AI systems that can interpret documents, videos, and spoken commands simultaneously. Any new Meta model must deliver this seamless integration to be relevant.

4. A Potential Opening for Second-Tier Players

While Meta pauses, competitors who are less beholden to the "must beat OpenAI" pressure might use this window. Companies that focus on niche AI optimization—perhaps specializing in energy-efficient inference or unique vertical applications—can gain traction while the giants are busy recalibrating their flagship releases.

Actionable Insights for Navigating the Uncertainty

For technologists and business leaders, uncertainty is the new normal. Here is how to prepare:

- Diversify Your AI Portfolio: Do not anchor your entire strategy to the next version of one company's model. Test applications against the current top models from OpenAI, Google, and Anthropic. Build abstraction layers in your software so you can swap out the underlying LLM provider with minimal friction if performance or pricing shifts.

- Invest in Inference Efficiency: Since training is prohibitively expensive for most organizations, focus development efforts on *running* models efficiently (inference). Can you use a smaller, faster model (like Llama 3 8B or 70B) for 80% of your tasks, reserving the behemoths for the remaining 20%? Because smaller models are typically far cheaper per request, this kind of split can cut costs substantially.

- Monitor Hardware Trends: Keep a close watch on announcements from Nvidia, AMD, and cloud providers regarding custom AI accelerators. The next major performance leap might come from a new chip architecture that lowers the barrier to entry for training smaller, highly optimized frontier models.

- Embrace Iterative Deployment: The era of the massive, multi-year model release is fading. Assume models will require constant re-training and updating. Build continuous integration/continuous deployment (CI/CD) pipelines specifically designed for AI models, allowing for rapid iteration when a competitor drops a surprise release.
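The first two recommendations above (an abstraction layer over providers, and sending most traffic to a smaller model) can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `ModelEntry` and `ModelRouter` names, the stub providers, the `looks_hard` heuristic, and the per-token prices are all hypothetical, and a real system would wrap actual vendor SDK calls behind the same callable signature.

```python
from dataclasses import dataclass
from typing import Callable

# A "provider" is any callable that maps a prompt to a completion.
# Real SDK calls would be wrapped behind this signature; the stubs
# further down are placeholders and contact no external service.
Provider = Callable[[str], str]


@dataclass
class ModelEntry:
    name: str
    call: Provider
    cost_per_1k_tokens: float  # hypothetical price, for illustration only


class ModelRouter:
    """Routes each prompt to a small or large model behind one interface."""

    def __init__(self, small: ModelEntry, large: ModelEntry,
                 is_hard: Callable[[str], bool]) -> None:
        self.small = small
        self.large = large
        self.is_hard = is_hard

    def pick(self, prompt: str) -> ModelEntry:
        # Only prompts flagged as hard go to the expensive model.
        return self.large if self.is_hard(prompt) else self.small

    def complete(self, prompt: str) -> str:
        return self.pick(prompt).call(prompt)


def blended_cost(small_cost: float, large_cost: float,
                 small_share: float) -> float:
    """Average cost per 1k tokens when `small_share` of traffic uses the small model."""
    return small_share * small_cost + (1.0 - small_share) * large_cost


# --- Placeholder providers and a deliberately naive difficulty heuristic ---
def small_stub(prompt: str) -> str:
    return f"[small] {prompt}"


def large_stub(prompt: str) -> str:
    return f"[large] {prompt}"


def looks_hard(prompt: str) -> bool:
    # Assumption: long prompts or proof-style requests need the larger model.
    return len(prompt.split()) > 50 or "prove" in prompt.lower()


router = ModelRouter(
    small=ModelEntry("small-open-model", small_stub, 0.10),
    large=ModelEntry("large-frontier-model", large_stub, 1.00),
    is_hard=looks_hard,
)

print(router.complete("Summarize this memo"))
print(router.pick("Prove the lemma").name)
print(round(blended_cost(0.10, 1.00, 0.8), 2))
```

Because application code only ever talks to `router.complete`, swapping a vendor means replacing one `ModelEntry`, and the 80/20 split becomes a tunable property of `is_hard` rather than something hard-coded throughout the codebase.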

The delay of Meta’s "Avocado" model is a clear indication that the AI race is entering a phase of sharply rising difficulty. Progress at the frontier is becoming rarer, more expensive, and more dependent on foundational infrastructure than ever before. For Meta, this is a moment to re-evaluate resources and perhaps double down on its open-source ecosystem as its most resilient strategic advantage. For the rest of the industry, it confirms that leadership in AI is a marathon requiring sustained, multi-front investment, not just in algorithms, but in capital, hardware, and strategic clarity.