Meta's 'Avocado' Delay: Cracks in the AI Arms Race and What It Means for Frontier Model Dominance

The field of artificial intelligence is currently defined by a hyper-accelerated arms race. Every major technology player is pouring billions into building the next, more capable Large Language Model (LLM), and leadership is often decided by a few percentage points, or less, on specialized benchmarks. So when a titan like Meta Platforms reportedly delays a major internal model, codenamed "Avocado," because it cannot keep pace with rivals like Google and OpenAI, the implications ripple far beyond one company's product schedule.

This setback is not just a footnote in Meta’s quarterly reports; it is a vital data point exposing the true dynamics of frontier AI development. The competitive landscape is far tighter than the public narrative suggests, and the difference between leading and lagging is often determined behind closed doors, in the unforgiving scrutiny of internal testing.

The Reality Check: Benchmarks Define the New Leadership

The initial report on the "Avocado" delay suggests that Meta’s model failed to meet the internal bar set by the current market leaders. To understand the gravity of this, one must look at the current state of play, which is often tracked through public leaderboards comparing models such as GPT-4, Gemini 2.0, and Claude 3 Opus.

What we observe in public leaderboards is a constant, razor-thin margin between the top three or four labs. A model that is "falling behind" might only be a few percentage points shy of achieving state-of-the-art (SOTA) status on key metrics like advanced reasoning, complex coding tasks, or multimodal integration. For a company like Meta, which bases a significant part of its strategy on community perception and the ability to eventually release powerful open-source models (like the Llama series), even a minor gap can translate into a major perception deficit.

The Importance of the SOTA Ceiling

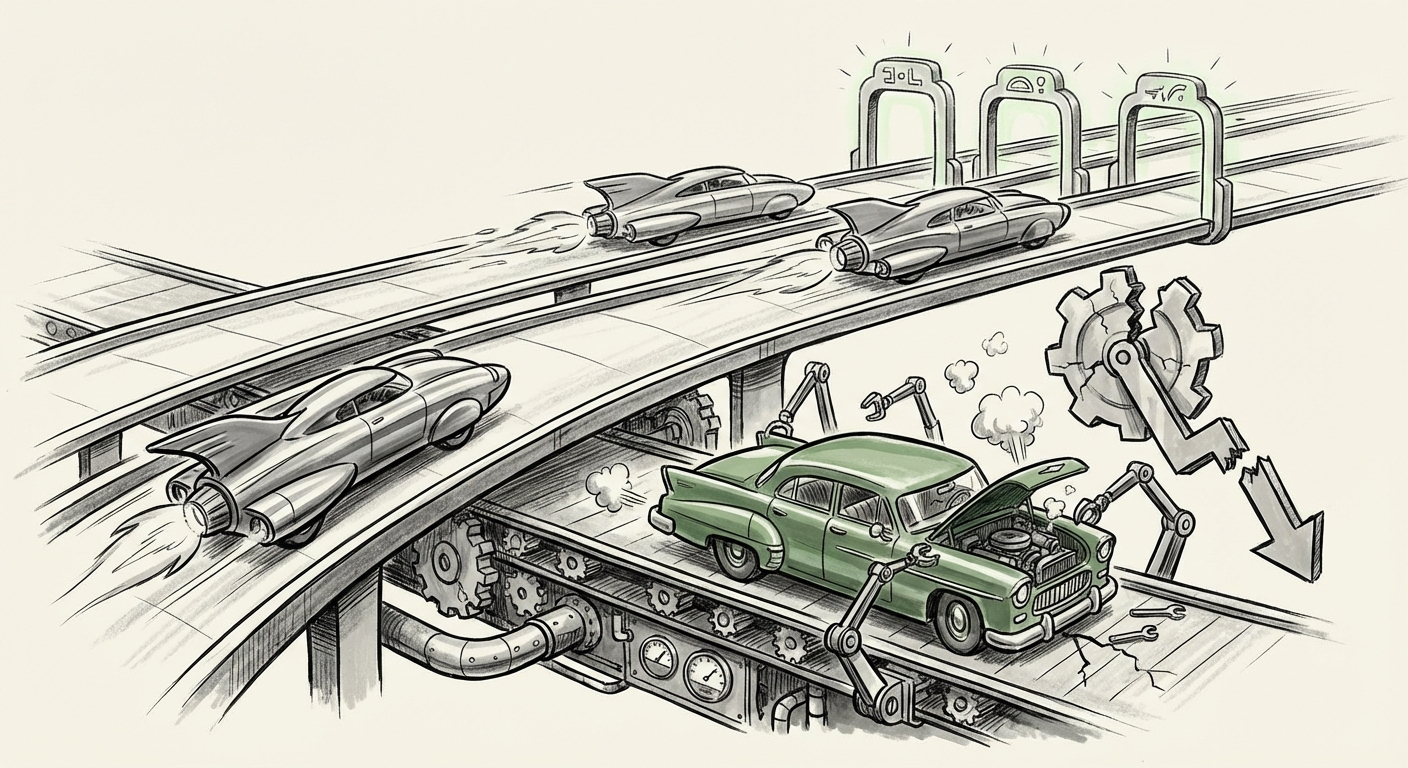

For non-technical audiences, think of LLM capability like climbing the world’s highest mountain. OpenAI (with GPT) and Google (with Gemini) seem to have established camps higher up the peak. Anthropic has positioned itself as a highly capable co-climber, often excelling in safety and nuanced understanding. If Avocado cannot consistently reach the altitude of its peers in internal tests, it means Meta must either invest significantly more compute, rewrite core architectural components, or accept a lower-tier release—a prospect that undermines their narrative of leading the open-source charge.

Meta's Dual Strategy Under Scrutiny: Open vs. Closed

Meta’s AI strategy is unique in the landscape. While OpenAI and Anthropic primarily operate on a closed, proprietary model, Meta has aggressively championed the open-source movement with Llama. The implied contract with the developer community is that Meta will provide foundational models that rival or exceed the performance of the closed systems, democratizing access to cutting-edge AI.

A significant delay in a cutting-edge internal model like Avocado forces us to examine the pressure this puts on Meta’s entire roadmap after Llama 3. If its *best* internal research model cannot compete, that directly limits the capabilities it can promise for future Llama releases. This is a strategic risk:

- Credibility Risk: If Meta consistently releases models perceived as one generation behind the SOTA, developers might migrate their foundational work to competitors who offer demonstrably better starting points.

- Talent Acquisition: Top AI researchers want to work on models that push boundaries. Setbacks signal a potential slowdown in the groundbreaking work necessary to attract and retain the best minds.

This creates a fascinating tension: Should Meta prioritize quickly releasing an "open" model that is slightly behind the curve to maintain community momentum, or should they delay everything to ensure their next major open release is truly competitive with the best proprietary offerings? The Avocado delay suggests they are leaning toward quality over immediate velocity in their closed track, but the open-source community is watching closely.

The Hidden Battlefield: Internal Evaluation and Safety Guardrails

Perhaps the most illuminating aspect of this story is that the failure was detected during internal tests. This points toward the increasing sophistication and rigor required to deploy models safely and effectively, particularly the difficulty of evaluating properties such as hallucination rates as models scale.

External benchmarks (like those found online) are helpful, but they are often static or curated. Real-world deployment demands robustness against unexpected inputs, resistance to adversarial attacks, and a low rate of unreliable or harmful outputs (hallucinations or biased responses). Internal testing, including adversarial "red teaming," is where a model is subjected to intense, proprietary stress tests designed to break it.

Why Internal Tests Reveal More

The report does not say where Avocado fell short, but it is plausible that the failure was not on simple math problems and instead involved a more sophisticated internal criterion such as one of the following:

- Long-Context Coherence: Can it maintain a logical thread over an entire document or complex conversation?

- Safety Alignment Drift: Does the model gradually become easier to trick into generating harmful content the longer a conversation goes on?

- Domain Specificity: Does it perform poorly on proprietary internal data sets or highly specialized tasks that Google or OpenAI might have prioritized?

For businesses deploying AI, this underscores a vital lesson: The performance metrics that matter most are not the marketing headlines, but the stability and safety proven in the trenches of specialized testing. A model that achieves, say, 90% accuracy on public tests but only 40% safety compliance internally is unusable for enterprise applications.
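The 90%/40% example can be made concrete with a simple release gate in which every metric must clear its own bar, so a strong headline score cannot offset a weak safety score. This is a minimal sketch, not a description of any lab's actual process: the `EvalResult` type, the `release_blockers` function, and both threshold values are hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Scores for one candidate model, each in the range 0.0 to 1.0."""
    public_accuracy: float    # score on a public benchmark
    safety_compliance: float  # pass rate on an internal safety suite


# Hypothetical thresholds; a real deployment would choose its own.
MIN_PUBLIC_ACCURACY = 0.85
MIN_SAFETY_COMPLIANCE = 0.95


def release_blockers(result: EvalResult) -> list[str]:
    """Return the reasons a model fails the gate (an empty list means it passes)."""
    blockers = []
    if result.public_accuracy < MIN_PUBLIC_ACCURACY:
        blockers.append("public accuracy below threshold")
    if result.safety_compliance < MIN_SAFETY_COMPLIANCE:
        blockers.append("safety compliance below threshold")
    return blockers


# The example from the text: strong headline score, weak internal safety score.
candidate = EvalResult(public_accuracy=0.90, safety_compliance=0.40)
print(release_blockers(candidate))
```

Checking each metric independently, rather than averaging them, is the design choice that matters here: an averaged score of 0.65 would hide exactly the kind of gap that internal testing is meant to expose.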

The Fourth Pillar: Anthropic's Quiet Ascendancy

The context of the AI race is incomplete without Anthropic. Often perceived as the conscientious challenger, the company's recent funding and model performance suggest it is far more than a safety-focused alternative to Google and OpenAI.

Anthropic's Claude 3 models have shown particular strength in reasoning and long-context handling, directly challenging the perceived dominance of GPT-4. Backed by significant investments, including strategic alliances with major cloud providers, Anthropic is a powerful, well-capitalized force. Meta is not just competing against the incumbents; it is racing against a rapidly iterating, highly focused challenger that has carved out a premium segment of the market by pairing trust with capability.

Future Implications: What This Means for AI Adoption

The news of Meta’s internal struggle has several key implications for the future trajectory of AI:

1. Consolidation at the Top

The immediate implication is that the gap between the absolute top tier (OpenAI/Google) and the rest of the field—even a powerful player like Meta—is widening, at least temporarily. Building frontier models requires obscene amounts of capital for compute (GPUs) and top-tier research talent. Setbacks suggest that hitting the next level of capability (e.g., moving toward Artificial General Intelligence or AGI) is incredibly difficult and expensive, potentially leading to market consolidation among those who can sustain the spending required for iteration.

2. The Value Proposition of Open Source Evolves

If Meta cannot consistently deliver the *best* foundational models openly, the open-source movement must adapt. Future open models may not compete on raw intelligence but on efficiency, customizability, or licensing freedom. Businesses might choose a slightly less powerful, but fully customizable and privately hosted, Llama variant over a proprietary API that restricts data usage.

3. Heightened Stakes for Enterprise AI Strategy

For businesses planning their AI deployment strategy, this development serves as a critical warning about reliance on a single vendor or a single model generation. If Meta struggles to match its competitors, enterprises relying on Meta’s ecosystem must have contingency plans. Diversification across providers (using OpenAI for creativity, Google for integration, and potentially Anthropic for complex analysis) becomes less a luxury and more a necessity for operational resilience.
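One way to picture that contingency planning is an ordered fallback across providers, where a failure at one vendor routes the request to the next. The sketch below is illustrative only: `complete_with_fallback` and the two stub functions are hypothetical, and no real vendor API is called. In practice each stub would be replaced by a wrapper around an actual client.

```python
from typing import Callable, Optional, Sequence

# A provider is modeled as (name, function that maps a prompt to a reply).
Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, reply) from the first success."""
    last_error: Optional[Exception] = None
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            last_error = exc
    raise RuntimeError("all providers failed") from last_error


# Stand-in functions for illustration only.
def primary_stub(prompt: str) -> str:
    raise TimeoutError("primary provider unavailable")


def secondary_stub(prompt: str) -> str:
    return f"echo: {prompt}"


name, reply = complete_with_fallback(
    "Summarize the Q3 report.",
    [("primary", primary_stub), ("secondary", secondary_stub)],
)
print(name, reply)
```

Keeping providers behind a single function signature is what makes switching cheap; the harder work in a real system is confirming that each fallback model is acceptable for the task it may inherit.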

Actionable Insights for Stakeholders

Based on this competitive tightening, here is what technology leaders and strategic planners should consider:

- Deepen Your Evaluation Frameworks: Do not rely solely on vendor-provided benchmark scores. Develop internal, proprietary evaluation suites that mimic your most critical, high-stakes workflows. If a model from a lab as well resourced as Meta can fall short in internal testing, you should rigorously test every vendor’s offering against your own risk tolerance.

- Monitor Compute Spend: The speed of iteration is directly tied to hardware access. Keep a close eye on announcements regarding Meta's next-generation custom silicon or major GPU purchases. Compute availability is likely to remain one of the biggest differentiators over the next 12 months.

- Invest in Model Fine-Tuning Expertise: If the absolute frontier models become too expensive or too proprietary, the value shifts to the teams who can take the *second-best* open models and fine-tune them precisely for a company's niche data. Expertise in prompt engineering and fine-tuning is now a core business competency, not just a research interest.

- Watch the Anthropic Effect: Assume Anthropic is the immediate performance competitor to watch. Their strategic alignment with major infrastructure players means they can move quickly from research breakthrough to enterprise integration.
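The first recommendation in the list above, building an internal evaluation suite, can start very small: a set of prompts paired with pass/fail checks, run identically against every candidate model. The following is a minimal sketch under stated assumptions. The two test cases are placeholders, `vendor_a` and `vendor_b` are stubs rather than real models, and `pass_rate` is a hypothetical helper, not part of any library.

```python
from typing import Callable

# Each case pairs a prompt with a check on the model's reply.
# These two cases are placeholders; a real suite would mirror real workflows.
Case = tuple[str, Callable[[str], bool]]

CASES: list[Case] = [
    ("What is 2 + 2? Answer with a number only.", lambda reply: reply.strip() == "4"),
    ("Reply with the single word OK.", lambda reply: reply.strip().upper() == "OK"),
]


def pass_rate(model: Callable[[str], str], cases: list[Case]) -> float:
    """Fraction of cases whose check accepts the model's reply."""
    if not cases:
        raise ValueError("evaluation suite is empty")
    passed = sum(1 for prompt, check in cases if check(model(prompt)))
    return passed / len(cases)


# Stub "vendors" for illustration; in practice each would wrap a real API client.
def vendor_a(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "ok"


def vendor_b(prompt: str) -> str:
    return "four" if "2 + 2" in prompt else "OK"


scores = {name: pass_rate(fn, CASES) for name, fn in [("vendor_a", vendor_a), ("vendor_b", vendor_b)]}
print(scores)
```

Because every vendor runs against the same cases and the same checks, the resulting scores are directly comparable in a way that vendor-reported benchmark numbers usually are not.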

The journey to superior AI is clearly fraught with hidden engineering hurdles. The "Avocado" delay is a stark reminder that in this space, the race is not linear. It involves constant recalibration, intensive internal validation, and a battle fought one data point at a time. While Meta remains a powerhouse, the evidence so far suggests that the summit of LLM capability is occupied by a very select group, forcing everyone else to refine their climb or change their route entirely.