The Ultimate Proving Ground: How Battlefield Data is Forging the Future of Autonomous AI Drones

In the world of artificial intelligence, data is the undisputed kingmaker. For years, the most advanced military AI models were trained in sterile digital sandboxes—simulations designed to mimic combat. While useful, these models often failed when confronted with the chaos, electronic noise, and sheer unpredictability of a real warzone.

That paradigm is now shifting. Ukraine is opening its operational battlefield data streams to allied nations specifically to train AI models for autonomous drones. This is arguably one of the most significant technological developments in modern defense strategy. It is not an academic exercise; it is live, high-stakes stress-testing of autonomous systems, where the margin for error is measured in lives and territory.

The Shift from Simulation to Reality: The Data Flywheel

Imagine teaching a child to recognize a stop sign. Showing them thousands of clean pictures is the equivalent of simulation data. Showing them a stop sign obscured by fog, riddled with bullet holes, or glimpsed through a dusty windshield is the equivalent of real-world data, and for a drone the real world also includes heavy electronic jamming. That messier, richer signal is precisely what Ukraine is providing.

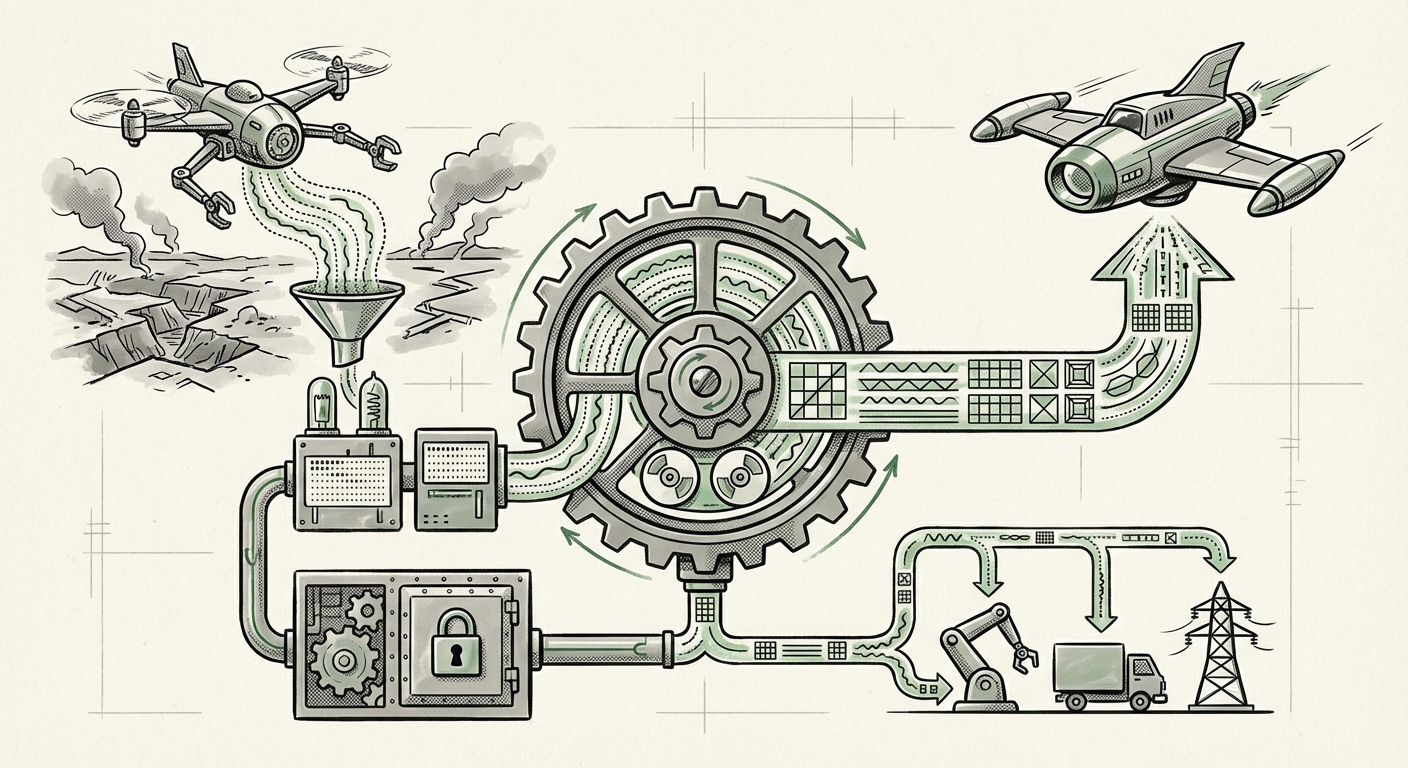

This collaboration ignites what we in the industry call the **“Data Flywheel.”**

- Combat Generates Data: Drones are flying, targets are being engaged, and electronic warfare is being fought every hour. This generates massive volumes of raw sensor data.

- Data Is Cleaned and Used for Training: Allies ingest this data, which is far richer than any synthetic environment, clean it, and use it to retrain perception and decision-making algorithms for their autonomous systems.

- Better AI Deployed: The resulting AI models are more accurate, more robust against environmental noise, and better at target identification in contested airspace.

- Performance Generates More Data: These improved systems generate new, high-quality data based on their successful (or unsuccessful) engagements, feeding the cycle back into the training pipeline.

This feedback loop can shorten the development cycle for defense contractors and allied nations from years to months. As analysts tracking military technology have noted, the conflict has become an unintended yet highly effective incubator for practical military machine learning, moving AI out of the lab and onto the front line [Source Context on Platform Sharing].

The Technical Edge: Learning to Survive

For AI engineers, the value of this data extends beyond simply identifying enemy equipment. Modern drone survival depends on defeating sophisticated electronic countermeasures (ECM). The training needs to encompass:

- Adversarial Robustness: Training the drone’s navigation and vision systems to recognize when its GPS signal is being spoofed or its camera feed is being jammed. The data must contain examples of successful jamming attempts so the AI can learn alternative, more robust navigation methods.

- Low-Observable Targets: Real combat reveals how easily camouflage or environmental clutter can fool current perception models. Training on this live data teaches the AI to distinguish true threats from background noise far more reliably.

For defense technologists, this collaboration is the gold standard for validation, providing the necessary data diversity that synthetic environments often lack.

Strategic Implications: Reshaping Global Defense Alliances

Ukraine's decision to share this sensitive information is as much a political and strategic statement as a technical one. It solidifies a deep technological partnership with the West that extends far beyond the transfer of physical weaponry.

Deepening NATO Integration

This data sharing underscores a robust, integrated technological relationship between Kyiv and NATO members, particularly the United States. It suggests a coordinated strategy in which operational lessons learned in real time are immediately funneled into the joint R&D pipelines of allied nations. Allies are no longer simply supplying equipment; they are co-developing the next generation of battlefield capabilities based on current threat modeling.

For geopolitical analysts, this confirms that the technological commitment to Ukraine is not just about materiel readiness for today, but about shaping the technological landscape of future conflicts involving NATO forces.

The New Data Advantage

In conventional warfare, strategic advantage often came from superior hardware, numbers, or logistics. In the AI-driven era, the decisive factor is the quality and velocity of data incorporation: how good the data is, and how quickly it reaches deployed models. Nations that can quickly leverage conflict data, especially high-fidelity battlefield metrics, are likely to field superior autonomous systems years ahead of those relying solely on internal testing.

This collaboration means allies are effectively acquiring a massive, expedited training license for their autonomous warfare systems, gaining a significant head start over adversaries outside this feedback loop.

The Ethical Crucible: Governing Autonomous Weapon Systems

This technological acceleration brings us directly to the most challenging aspect of military AI: ethics and control. The development of autonomous systems capable of selecting and engaging targets—often termed Lethal Autonomous Weapon Systems (LAWS)—has long been debated in international forums.

From Policy Debate to Policy Reality

For years, human rights organizations and policymakers have raised alarms about the speed at which AI systems might be deployed without sufficient human oversight. The challenge lies in defining the boundaries of autonomy. How much autonomy is acceptable when the AI is trained on data derived from actual combat, where mistakes have fatal consequences?

When AI models are trained on data reflecting kinetic engagements, the resulting algorithms become incredibly adept at pattern recognition relevant to lethal outcomes. This forces allies to confront immediate policy questions:

- Can an AI trained on these data sets still be constrained to operate only in "human-in-the-loop" or "human-on-the-loop" modes?

- What assurances are required that an autonomous drone, trained to identify an enemy vehicle, will not misclassify a civilian vehicle under novel, real-world conditions that the training data inadequately represents?

Policymakers and ethicists are watching closely. The deployment readiness fueled by this data sharing puts tremendous pressure on international bodies to finalize regulations, or else risk seeing advanced autonomous capabilities deployed under existing and potentially outdated legal frameworks [Relevant Ethical Debate Source Type Example].

The Responsibility of High-Stakes Training

This collaboration places a heavy responsibility on the receiving nations. They must ensure ironclad data security and rigorously validate the AI before deployment, especially given the sensitivity of the data’s origin. The goal is to build systems that are not only effective but also legally and ethically compliant, even under the extreme conditions that real combat data captures.

Practical Implications for Business and Society

While this development is framed within defense technology, the trends observed here are likely to bleed into the commercial and industrial sectors. Businesses should note three key takeaways:

1. The Supremacy of Field Data

The military pivot away from purely synthetic data reinforces a lesson for any enterprise relying on AI: the quality of training data sets the ceiling on a product’s performance. Commercial applications, from autonomous vehicles to complex industrial robotics, will need systems that can rapidly incorporate real-world feedback on edge cases.

Actionable Insight: Businesses must invest in infrastructure that allows for rapid, secure ingestion and labeling of operational data captured in the field to maintain a competitive AI edge.
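Labeling capacity is usually the bottleneck in that kind of pipeline. A common approach is to route only the field samples a deployed model is least sure about to human annotators. This is uncertainty sampling, a standard active-learning heuristic. The sketch below is a minimal, hypothetical illustration for a commercial setting. `FieldSample`, `select_for_labeling`, and the `budget` parameter are names invented here. The sketch assumes each captured sample already carries the model's predicted class probabilities.

```python
import heapq
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class FieldSample:
    """Hypothetical record of one observation captured in the field."""
    sample_id: str
    # Model's predicted class probabilities (assumed to sum to 1).
    probs: List[float] = field(default_factory=list)


def entropy(probs: List[float]) -> float:
    """Shannon entropy in nats; higher means the model is less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(samples: List[FieldSample], budget: int) -> List[str]:
    """Return IDs of the `budget` samples with the highest predictive entropy.

    Uncertainty sampling: send the most ambiguous field data to human
    annotators first, since it is the most likely to expose edge cases.
    """
    top = heapq.nlargest(budget, samples, key=lambda s: entropy(s.probs))
    return [s.sample_id for s in top]


if __name__ == "__main__":
    batch = [
        FieldSample("a", [0.98, 0.01, 0.01]),  # confident prediction
        FieldSample("b", [0.40, 0.35, 0.25]),  # ambiguous: likely edge case
        FieldSample("c", [0.70, 0.20, 0.10]),
        FieldSample("d", [0.34, 0.33, 0.33]),  # near-uniform: most uncertain
    ]
    print(select_for_labeling(batch, budget=2))  # → ['d', 'b']
```

Entropy is only one possible score. The margin between the top two probabilities is a common alternative. Either way, the design choice matches the insight above: scarce labeling effort goes where the model is weakest on real-world data.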

2. Accelerated Pace of Autonomy

If defense tech can rapidly evolve autonomous drone capabilities using operational feedback, the expectation for commercial autonomy (like advanced trucking or logistics) will intensify. The tolerance for slow, simulation-bound development will decrease. Competitors will look to replicate this "Data Flywheel" model across their product lines.

3. Ethical Frameworks as Competitive Necessities

The intense ethical scrutiny facing military AI will translate to commercial AI. As AI systems become more capable in high-stakes civilian environments (like medical diagnosis or infrastructure management), the public and regulators will demand the same level of transparency and validated safety that is now being debated in defense circles. Companies that proactively establish transparent, verifiable safety standards based on real-world failure modes will build greater public trust.

Conclusion: The Future is Being Trained Now

Ukraine’s decision to share its combat telemetry is more than a footnote in military history; it is a critical inflection point in the history of artificial intelligence deployment. It signifies the definitive end of the purely theoretical era of military AI and the beginning of the age of battle-hardened algorithms.

The convergence of allied technological capacity with raw, high-stakes Ukrainian combat data creates an environment where autonomous drone capabilities—and perhaps, autonomous decision-making systems across the board—will evolve at an unprecedented speed. This presents profound strategic advantages for the allies leveraging this data, while simultaneously heightening the urgency for international dialogue on how these powerful, rapidly evolving systems must be governed. The next generation of technology isn't waiting for a white paper; it's being built, tested, and perfected in the most challenging proving ground on Earth.