The Ultimate AI Weaponization: How Battlefield Data Is Forging Autonomous Drones and Reshaping Global Security

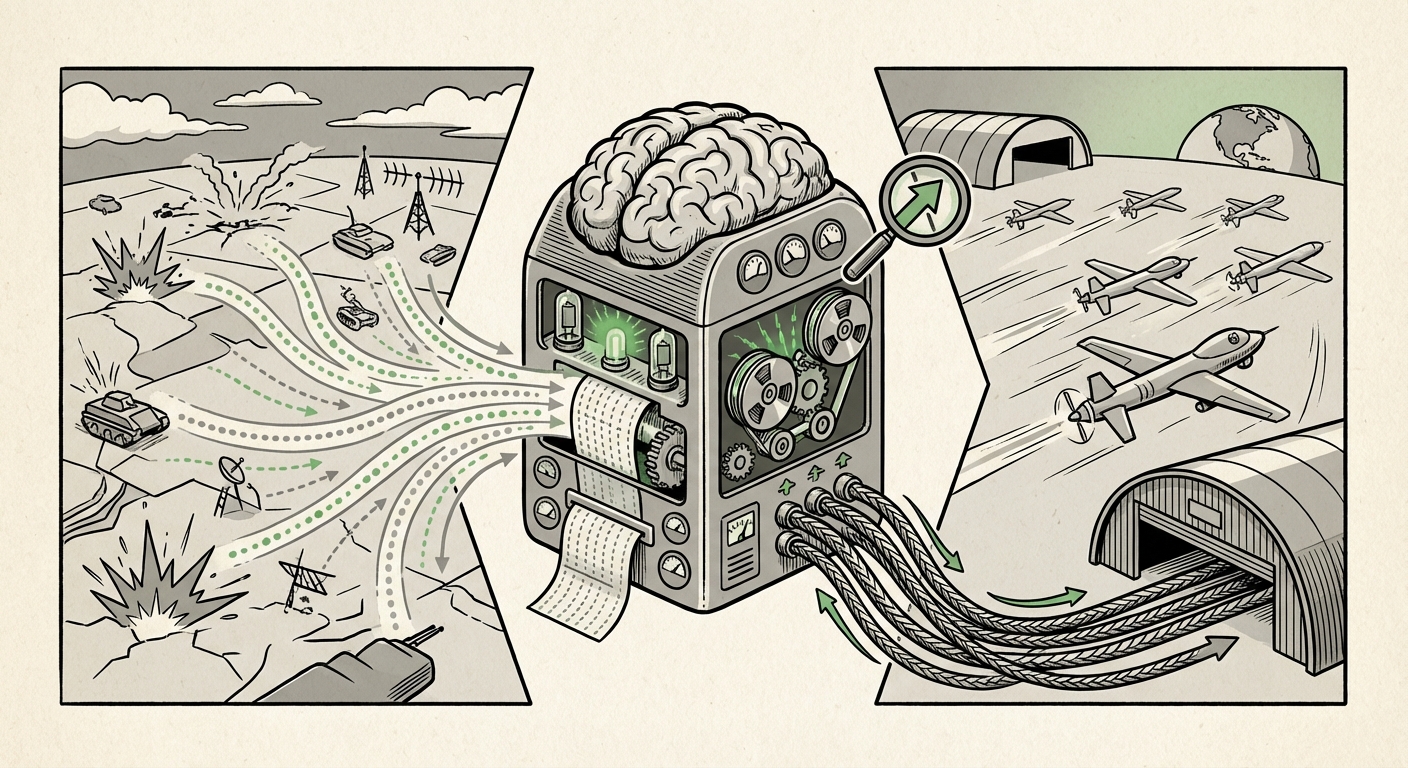

The modern battlefield is no longer defined solely by hardware—it is defined by data. A recent, pivotal development confirms this paradigm shift: Ukraine is actively sharing raw, high-fidelity combat data with allied nations specifically to train Artificial Intelligence (AI) models underpinning autonomous drone systems. This is not a simulation; this is real-world, high-stakes learning deployed at an unprecedented scale.

This move transitions AI from a topic confined to defense white papers and corporate labs into the operational core of contemporary conflict. For observers of technology, defense strategy, and ethics, this moment demands deep analysis. What does this data-sharing initiative truly unlock, and what are the immediate and long-term implications for the future of AI?

The Data Pipeline: From Conflict Zone to Cloud Server

To understand the significance, we must first understand the value of the data itself. Training robust AI, especially for complex tasks like target identification, evasion, and coordinated group maneuvers (swarming), requires massive, diverse, and *validated* datasets. In machine learning, the quality of a model's output is bounded by the quality of its input data: mislabeled or unrepresentative examples produce unreliable models, however sophisticated the algorithm.
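One common, general-purpose way to check whether a labeled dataset is "validated" is to have two annotators label the same samples and measure how far their agreement exceeds chance. The sketch below computes Cohen's kappa for that purpose. It is an illustration only: the function name, the generic labels, and the idea that any party in this arrangement uses this particular metric are assumptions, not details from the reporting.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance.

    Returns 1.0 for perfect agreement and 0.0 when agreement is no
    better than chance. Hypothetical helper for illustration.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and equal in length")

    n = len(labels_a)
    # Fraction of samples on which both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Agreement expected by chance, from each annotator's label frequencies.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Generic placeholder labels; real label sets would be domain-specific.
annotator_1 = ["x", "x", "y", "y"]
annotator_2 = ["x", "y", "x", "y"]
print(cohens_kappa(annotator_1, annotator_2))
```

A low kappa on a sample of the data is a signal that the labels need review before they are used for training.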

Why Real-World Data Trumps Simulation

While defense organizations have long used sophisticated simulators, these environments struggle to replicate the inherent chaos, electronic interference, lighting variations, and unpredictable adversarial behavior found in an active warzone. The data Ukraine provides offers:

- Ground Truth Validation: Verified successes and failures of current drone operations against sophisticated defenses.

- Adversarial Examples: Data capturing how enemy systems attempt to jam or spoof Ukrainian drones, offering immediate lessons on defensive AI programming.

- Rapid Iteration: Instead of years of phased testing, allies can integrate these lessons into their own models within months, creating smarter, faster-reacting autonomous platforms.

This process aligns with the growing strategic emphasis on **Data-Centric Warfare**. If an allied nation's strategy centers on deploying AI-driven systems, its greatest weakness is a lack of access to validated training material. Ukraine, by opening its platform, has become a crucial data provider for advanced drone autonomy.

Corroborating the Trend: Strategic Alignment and Necessity

This initiative does not exist in a vacuum. It is the practical application of broader strategic goals already being articulated by major military powers. To fully grasp the scope, we must look at the strategic environment underpinning this data transfer.

1. The Allied Strategic Imperative: Data-Centric Warfare

Major defense ministries, particularly the Pentagon, have explicitly stated that future military dominance will be built on the ability to rapidly process and act upon data better and faster than any adversary. This requires massive data infrastructure and sharing protocols.

To contextualize this, one must examine existing frameworks: public strategy documents on autonomous systems and data sharing give a sense of how NATO allies are attempting to structure these relationships. This data-sharing initiative serves as a crucial, immediate stress test for those frameworks. It forces allies to quickly harmonize their AI standards, security protocols, and data ingestion pipelines to handle this influx of sensitive, operational intelligence.

[Hypothetical Reference Point]: Analysis of DoD frameworks suggests that while policy exists for sharing intelligence, operational combat data for *training* algorithms requires rapid, bespoke agreements, which the Ukrainian arrangement appears to be. (See: [Read more on the DoD's AI Strategy Framework and data requirements](https://www.defensenews.com/artificial-intelligence/2023/10/dod-ai-data-framework/))

2. The Technical Hurdle: Training in the Trenches

The *how* is almost as important as the *what*. Training AI on live combat data is technically fraught. Errors in data labeling or interpretation can lead to catastrophic operational failure or, worse, unintended escalation.

Two technical issues stand out. Allies must manage 'data drift', where the conditions a deployed model encounters diverge from the data it was trained on, often faster than the model can be retrained, and must counter 'adversarial attacks' specifically designed to trick AI recognition systems. The Ukrainian data helps refine the very algorithms used to filter noise and validate the "ground truth" of what a machine identifies as a legitimate target or threat.
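Data drift in the general machine-learning sense can be monitored by comparing the distribution of a feature in the training data against the same feature in recent inputs. The sketch below uses the two-sample Kolmogorov-Smirnov statistic for a single numeric feature (for example, average image brightness). The function names and the 0.3 threshold are hypothetical choices for illustration, not values taken from any real system.

```python
from bisect import bisect_right


def ks_statistic(reference, live):
    """Two-sample Kolmogorov-Smirnov statistic.

    Returns the largest gap between the empirical CDFs of the two
    samples: 0.0 means identical distributions, 1.0 means the samples
    do not overlap at all.
    """
    ref = sorted(reference)
    cur = sorted(live)
    if not ref or not cur:
        raise ValueError("both samples must be non-empty")

    max_gap = 0.0
    # Empirical CDFs only change at sample points, so checking those suffices.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        max_gap = max(max_gap, abs(cdf_ref - cdf_cur))
    return max_gap


def has_drifted(reference, live, threshold=0.3):
    """Flag drift when the KS statistic exceeds an assumed threshold."""
    return ks_statistic(reference, live) > threshold


# Illustrative values: a training-time feature vs. a shifted live feature.
training_feature = [0.1 * i for i in range(100)]
live_feature = [0.1 * i + 5.0 for i in range(100)]
print(has_drifted(training_feature, live_feature))
```

In practice a check like this would run per feature on a schedule, with a drift flag triggering human review or retraining rather than any automatic action.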

3. The COTS-to-Military Acceleration

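Much of the drone technology currently deployed is based on rapidly adapted commercial off-the-shelf (COTS) hardware and software. This allows for quick deployment but creates a massive training debt for high-level autonomy: the platforms are available long before the data and models needed to operate them autonomously.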

Seen through the lens of commercial-to-military technology transfer, this data sharing is about closing the gap between consumer-grade capabilities and dedicated military AI. The data allows allies to take robust COTS platforms and rapidly equip them with battle-tested decision-making logic, bypassing years of internal, purely simulation-based development.

Future Implications: The AI Arms Race Shifts Gears

This data exchange has profound implications, moving beyond the current conflict and setting precedents for future technological competition.

Acceleration of the Autonomy Timeline

The most immediate implication is the dramatic compression of the timeline for achieving meaningful autonomy. For decades, integrating AI into kinetic systems was a slow process, hobbled by regulatory review and the inability to obtain truly relevant data. Ukraine is effectively providing a massive, international beta test, accelerating the deployment of next-generation systems by years.

This means that the next generation of autonomous drones fielded by NATO members—capable of complex missions with minimal human oversight—will be informed by experience gained on the front lines of the world's most intense current conflict. This is a transition from theoretical capability to proven operational effectiveness.

The New Standard of Data Sovereignty

This arrangement highlights a crucial pivot: data is becoming one of the most valuable military assets. Nations that possess large stores of unique, high-quality data (whether medical records, cyber activity logs, or combat sensor data) will hold immense strategic leverage. For businesses, this reinforces the lesson that proprietary, real-world datasets are a durable moat against competition, a lesson that carries even more weight in the defense sector.

The Escalating Ethical Firebreak: LAWS

Perhaps the most significant long-term challenge arises at the intersection of real-world training and ethical oversight. The deployment of autonomous systems trained on combat data directly pressures the international community regarding Lethal Autonomous Weapons Systems (LAWS).

As allies integrate these improved models, the threshold for systems making life-and-death decisions without a human "in the loop" lowers significantly. Global governance bodies working on LAWS regulation, meanwhile, are struggling to keep pace. When an autonomous drone, trained on verified combat data, correctly identifies and engages a target, it builds confidence in the technology, making calls for outright bans or moratoriums harder to enforce internationally.

The argument shifts from "Can AI make this decision?" to "The AI trained on real combat made this decision successfully; should we restrict its ability to defend our troops?" This tactical utility often trumps abstract ethical concerns in moments of immediate security need.

Actionable Insights for Business and Policy

What lessons can technology leaders, investors, and policymakers draw from this battlefield data exchange?

For Technology & Business Leaders: Embrace 'Operational AI'

If your sector relies on predictive modeling or automation, understand that the benchmark for performance is now being set by military AI operating under extreme duress. Businesses must move beyond siloed, historical datasets and invest in infrastructure that allows for continuous, secure ingestion and validation of live operational data.

Actionable Insight: Prioritize data governance frameworks that can handle rapid, high-stakes feedback loops. If you can secure and validate data at the speed of conflict, you can optimize any complex system.
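For a business pipeline, one concrete piece of such a governance framework is a validation gate that every incoming batch must pass before it reaches a model. The sketch below is a minimal version under stated assumptions: the schema in `REQUIRED_FIELDS`, the field names, and the staleness rule are all hypothetical and would differ in any real system.

```python
from dataclasses import dataclass, field

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"source_id": str, "timestamp": float, "value": float}


@dataclass
class ValidationResult:
    accepted: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def first_problem(record, max_age_seconds, now):
    """Return a reason string for the first failed check, or None."""
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in record:
            return f"missing field: {name}"
        if not isinstance(record[name], expected_type):
            return f"wrong type for {name}"
    if now - record["timestamp"] > max_age_seconds:
        return "stale record"
    return None


def validate_batch(records, max_age_seconds, now):
    """Split a batch into accepted records and rejected ones with reasons."""
    result = ValidationResult()
    for record in records:
        reason = first_problem(record, max_age_seconds, now)
        if reason is None:
            result.accepted.append(record)
        else:
            result.rejected.append((record, reason))
    return result


batch = [
    {"source_id": "sensor-1", "timestamp": 990.0, "value": 3.2},
    {"source_id": "sensor-2", "timestamp": 100.0, "value": 1.1},
    {"source_id": "sensor-3", "value": 0.5},
]
outcome = validate_batch(batch, max_age_seconds=60.0, now=1000.0)
print(len(outcome.accepted), [reason for _, reason in outcome.rejected])
```

Keeping the rejection reason alongside each record is the design choice that matters here: it turns the gate into a feedback loop, since recurring reasons point at the upstream source that needs fixing.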

For Policymakers: Bridge the Governance Gap Now

The speed of military AI deployment is now dictated by the velocity of the conflict, not the velocity of bureaucracy. Policy surrounding acceptable risk, transparency in autonomous decision-making, and international norms must be urgently updated.

Actionable Insight: Policymakers must engage deeply with the engineers developing these systems to create agile regulatory "sandboxes" that can evolve as fast as the technology itself, or risk being permanently behind the curve on critical security matters.

For Defense Contractors: The Data-First Approach

The value proposition is shifting. Contractors who provide merely hardware or standard software will be rapidly outpaced by those who offer comprehensive, end-to-end data pipelines capable of integrating and refining allied training data into superior autonomy engines. The winners in the next decade of defense procurement will be the *data integrators*, not just the equipment manufacturers.

Conclusion: The Era of Empirically Proven Autonomy

The sharing of Ukrainian battlefield data for AI training is a landmark moment. It signifies the formal beginning of the era of Empirically Proven Autonomy in warfare. This convergence of necessity, cutting-edge technology, and geopolitical urgency has forged a path toward highly capable autonomous systems far quicker than any peacetime projection suggested.

As these allies refine their AI models, the landscape of aerial reconnaissance, electronic warfare, and precision targeting will irrevocably change. The challenge ahead for global society is to ensure that the ethical guardrails, which often lag behind innovation, are erected with sufficient speed and strength to manage a future where the machines learning on the battlefield today will become the defining tools of tomorrow’s security calculus.