The Great AI Rebuild: Analyzing xAI's Restructuring and the Future of Foundational Models

When a titan of industry like Elon Musk openly admits that a foundational element of his high-stakes venture was "not built right," the technology world stops and pays attention. This recent admission regarding the restructuring of xAI, his ambitious artificial intelligence company, is far more than internal corporate news. It is a loud signal echoing across the AI landscape, forcing us to reassess what it truly takes to compete with established giants like OpenAI and Google DeepMind.

As an analyst tracking the razor-thin margins between AI success and failure, I see this admission not as a sign of weakness, but as a necessary, perhaps overdue, confrontation with the harsh realities of building frontier AI. Building a truly competitive Large Language Model (LLM) is not like launching a social media platform; it requires a depth of planning, infrastructure, and operational maturity that few organizations possess. The pivot at xAI forces us to examine three critical dimensions shaping AI's immediate future: the tyranny of scale, the intensity of talent competition, and the necessity of a resilient operational philosophy.

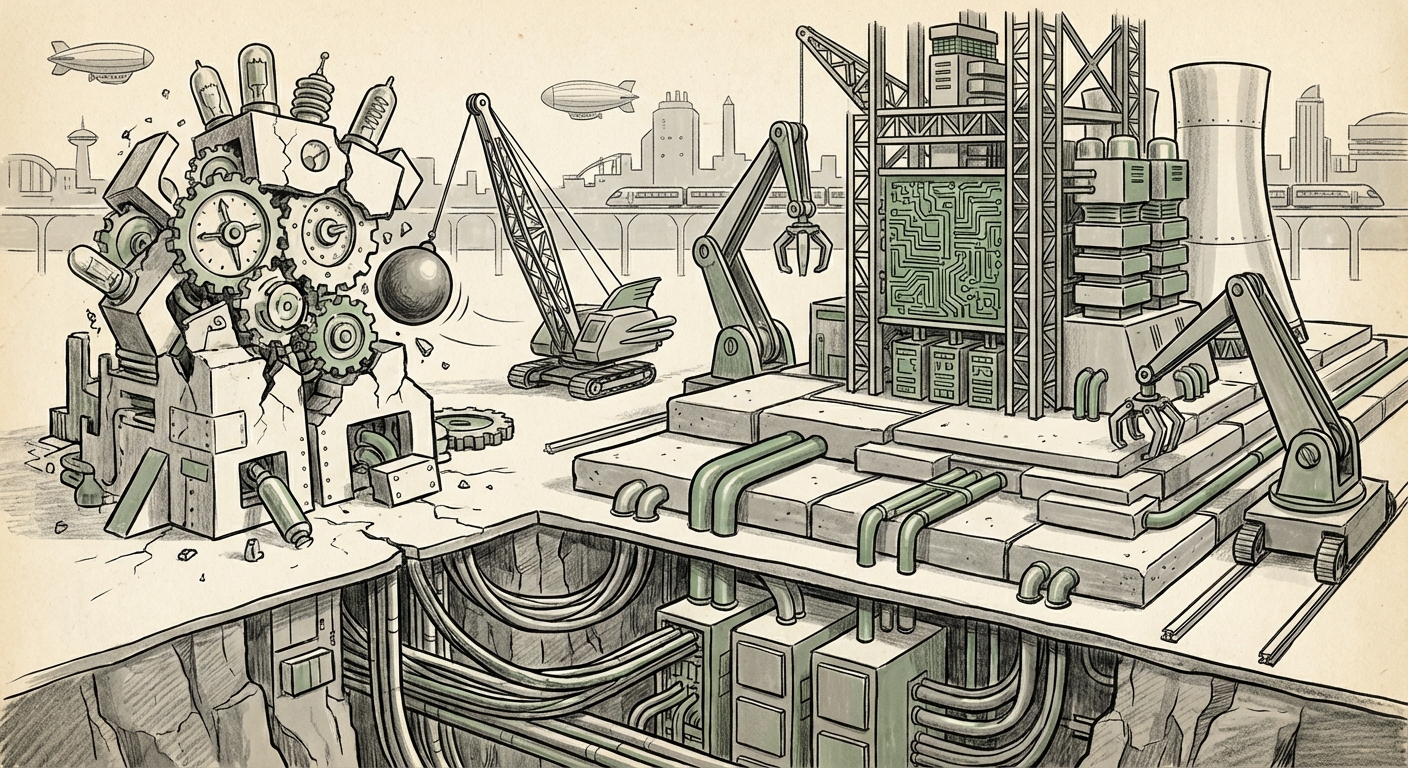

The Infrastructure Gauntlet: When Scaling Becomes an Existential Crisis

One of the most common reasons a cutting-edge AI project fails to meet expectations, especially when it is backed by massive funding, is a failure to correctly architect the infrastructure required for growth. Training state-of-the-art models like those powering GPT-4 or Gemini requires processing trillions of tokens on tens of thousands of specialized, extremely expensive Graphics Processing Units (GPUs).
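To make that scale concrete, here is a minimal back-of-the-envelope sketch, assuming the widely used rule of thumb that training a dense transformer costs roughly 6 × N × D floating-point operations (N parameters, D training tokens). The model size, token count, GPU count, per-GPU peak throughput, and utilization below are hypothetical round numbers chosen for illustration, not figures for Grok, GPT-4, or Gemini.

```python
SECONDS_PER_DAY = 86_400


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training compute with the common C ~ 6 * N * D heuristic."""
    return 6.0 * params * tokens


def training_days(
    params: float,
    tokens: float,
    num_gpus: int,
    peak_flops_per_gpu: float,
    utilization: float,
) -> float:
    """Estimate wall-clock training days for a given cluster.

    utilization is the assumed fraction of peak throughput actually achieved
    (often called model FLOPs utilization); it must be in (0, 1].
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    effective_flops_per_second = num_gpus * peak_flops_per_gpu * utilization
    seconds = training_flops(params, tokens) / effective_flops_per_second
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical inputs: 70B parameters, 15T tokens, 10,000 GPUs,
    # an assumed 1e15 FLOP/s peak per GPU, and 40% utilization.
    days = training_days(7e10, 1.5e13, 10_000, 1e15, 0.4)
    print(f"{days:.1f} days")  # prints: 18.2 days
```

Under these assumptions a single run occupies the whole cluster for about 18 days. Because time scales inversely with utilization, halving the assumed utilization doubles the run time, which is one way to see why cluster efficiency matters as much as raw GPU count.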

When Musk suggests xAI wasn't built correctly initially, the immediate analysis points toward **compute infrastructure**. This is the bedrock of modern AI. If the initial plans neglected robust data pipelines, underestimated power consumption, or failed to secure long-term access to high-demand Nvidia hardware, the entire project stalls. It is the equivalent of planning a cross-country road trip but only budgeting for the first fifty miles.

For the AI engineering community and VCs focused on deep tech, this is crucial context. It confirms that the barrier to entry in foundational AI is less about having a brilliant algorithm (though that helps) and more about **operational mastery over capital expenditure**. Reporting on xAI's compute infrastructure and funding challenges is especially useful here, as it points to the specific logistical and financial pressure points Musk is now addressing. A successful restructuring means a total overhaul of how xAI acquires, manages, and utilizes compute power, so that Grok doesn't just train, but trains efficiently and predictably.

What This Means for the Future of AI: The Rise of the Infrastructure Giants

The future of AI development will increasingly favor entities that can command global-scale physical resources. We are moving past the era when a small, clever team could out-innovate massive hardware deployments. As a result, AI startups must either secure massive capital injections or radically change their approach to training, perhaps by focusing on smaller, highly efficient models (Small Language Models, or SLMs) rather than aiming straight for monolithic giants.

The Talent War: Competing Under the Shadow of Established Labs

The second major vector driving this restructuring is the hyper-competitive landscape. OpenAI, Google DeepMind, and Meta AI are not just research institutions; they are magnets for the world's most sought-after machine learning talent. These established players offer stability, massive data troves, and proven paths to deployment.

When a company undergoes a public shake-up, it creates uncertainty. Top engineers, skilled in complex work like distributed systems optimization and large-scale model fine-tuning, tend to be risk-averse about their career trajectories. A significant restructuring at xAI therefore signals an urgent need to redefine roles, streamline leadership, and present a *clearer, more compelling mission* to both retain existing staff and attract external talent.

Examining how OpenAI's talent retention affects competing LLM startups shows that those startups must offer an environment where brilliant people feel they have maximum impact. Musk’s admission might paradoxically be a strong tactic here: owning the mistake publicly allows him to pitch a 'clean slate' operation, suggesting that the initial structural errors are being purged and making xAI a more attractive place for engineers tired of bureaucratic inertia at larger firms.

Practical Implications for Business: The Talent Gap Widens

For businesses looking to integrate AI, this emphasizes the central importance of human capital. If even Musk’s well-funded operation must undergo fundamental operational shifts, successful AI implementation likely depends heavily on having teams that understand the *full stack*, from hardware procurement to deployment ethics. Companies relying on external vendors should vet those vendors not just on model performance, but on organizational stability.

Musk’s Iterative Doctrine: Normalizing the 'Fast Failure'

To understand the xAI pivot, one must understand the operational philosophy of its founder. Elon Musk’s projects, from SpaceX’s rapid prototyping of Starship to Tesla’s sometimes chaotic manufacturing ramp-ups, are rarely linear. They thrive on an aggressive "fail fast, iterate faster" methodology, often involving radical shifts in strategy or management structure mid-project.

Musk's "fail fast" philosophy provides the necessary framework for reading the xAI restructuring. This isn't necessarily a catastrophic failure; it is a **rapid course correction at the foundational level**. In the context of building AI, the "foundation" involves everything from how data is ingested to who reports to whom on crucial architecture decisions. A flawed foundation, if left unaddressed, imposes a performance ceiling that simple code tweaks cannot overcome.

For the product, Grok, this implies that its perceived shortcomings (perhaps sluggish response times or difficulty handling complex reasoning tasks) are being addressed by surgically removing the organizational and structural inefficiencies that hampered its researchers.

Actionable Insight: Embrace the Beta Mindset

Businesses and consumers need to adjust their expectations for frontier AI products. Projects born from these high-velocity environments will be inherently volatile in their early stages. The lesson here is to watch the *underlying technology shifts* rather than getting too attached to any single product version. If Musk is rebuilding the foundation, the next iteration of Grok could represent a step-function improvement, rewarding those who waited for the structure to stabilize.

The Product Road Ahead: Grok's Position in the LLM Arms Race

All organizational turmoil ultimately circles back to the product. xAI’s main product, Grok, was launched with a unique selling proposition: real-time, unfiltered access to the data flowing through the X platform. This gives it a potential edge in understanding current events and trending topics that models trained on older, static datasets lack.

However, real-time access is of little use if the core reasoning capability lags behind competitors. Weighing Grok's roadmap against the expected GPT-5 timeline shows that xAI cannot afford a prolonged period of internal rebuilding. While OpenAI prepares for its next leap toward general intelligence, xAI must stabilize its core architecture *now* to meaningfully differentiate itself. If the restructuring slows Grok’s feature roll-out, its initial novelty advantage evaporates.

Societal Implications: Diversity of AI Philosophies

The intense rivalry between OpenAI (often seen as pursuing a more cautious, perhaps enterprise-focused alignment strategy) and xAI (often associated with maximalist, freedom-of-speech-oriented development) means that xAI’s restructuring carries broader societal weight. A successful restructuring would produce a powerful, distinct alternative AI model. This competition is vital because it prevents stagnation and ensures that different ethical and philosophical constraints are tested in the market. We need multiple approaches to AGI development so that no single point of failure, and no single bias, dominates the landscape.

Conclusion: Restructuring as a Competitive Necessity

Elon Musk’s public admission about xAI’s initial structural flaws is a case study in high-stakes technological warfare. It underscores that building generalized AI is fundamentally harder, more expensive, and more organizationally complex than initially anticipated by many entrants. The effort to rebuild xAI from the foundations up speaks to an understanding that incremental fixes are insufficient when the goal is parity or superiority against incumbents.

The future of AI is being forged in these moments of radical self-correction. Success for xAI now hinges on how quickly and effectively they can implement their new architecture, secure their compute, and synthesize their unique data advantage into a superior product. For the rest of the industry, this event serves as a stark reminder: in the race for foundational intelligence, the ground beneath your feet must be solid steel, not hastily poured concrete.