The Great Rebuild: Why Elon Musk's xAI Restructuring Signals a New Maturity in Frontier AI Development

The world of Artificial Intelligence operates at a dizzying speed, often characterized by brinksmanship, massive capital injections, and near-constant surprise announcements. Amidst this high-stakes environment, a rare admission recently landed with significant weight: Elon Musk acknowledged that his foundational AI company, xAI, "was not built right the first time around" and is now undergoing a full, foundation-up restructuring.

This isn't just office gossip; it’s a profound signal echoing across the entire technology sector. When a figure as famously determined and hands-on as Musk concedes a fundamental structural error in an AI venture, it forces us to look beyond the chatbot hype and examine the real, difficult mechanics of scaling frontier research. This pivot suggests that the initial, perhaps chaotic or highly personalized, "Muskian" approach to building a world-class Large Language Model (LLM) is yielding to the iron laws of scalable engineering and organizational maturity. What does this mean for xAI, its competitors, and the future architecture of AI labs?

The Echo of Inevitability: Why Early AI Labs Fail to Scale

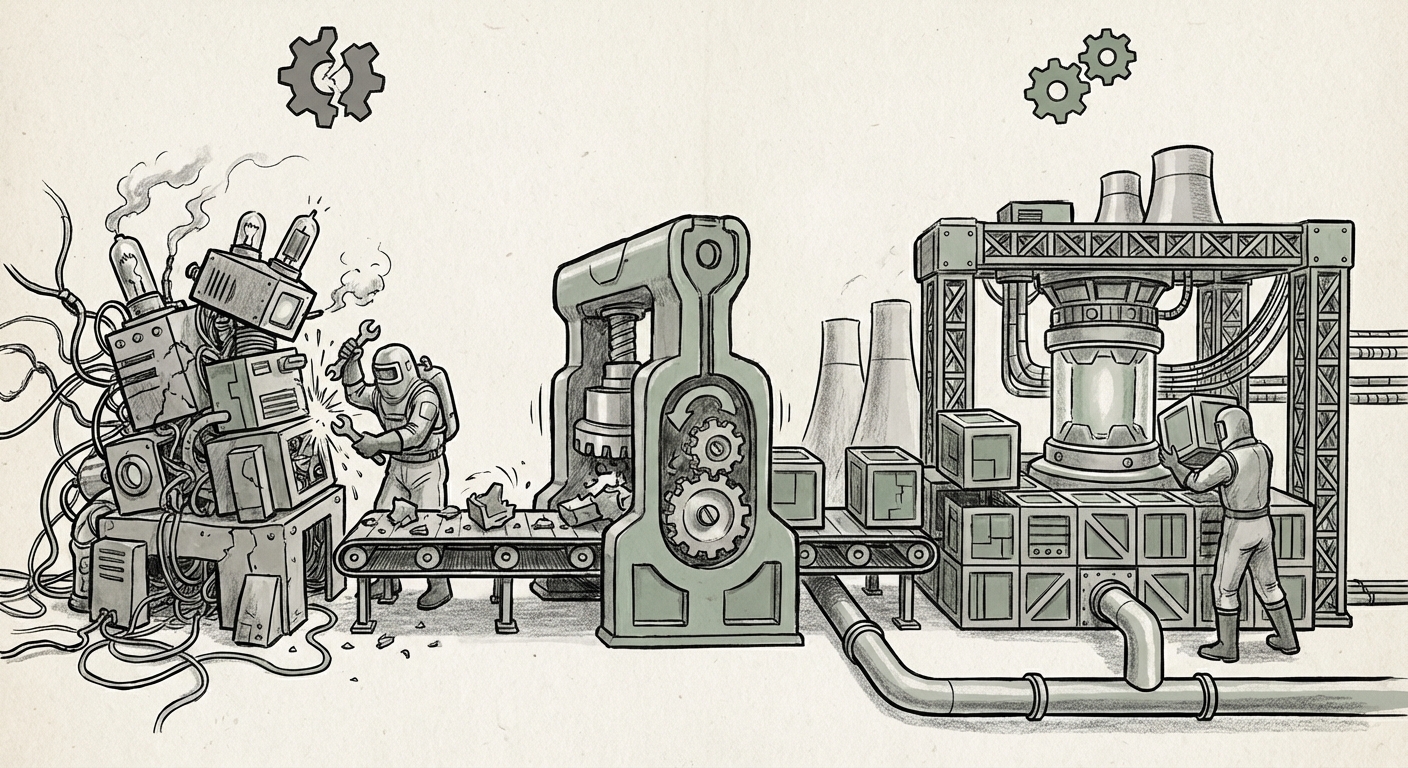

The journey from a successful proof-of-concept to a trillion-parameter, production-ready model is often paved with organizational wreckage. Musk’s candid statement confirms a pattern we’ve seen play out—albeit less publicly—across the AI startup ecosystem. Building an LLM like Grok requires two vastly different organizational philosophies to coexist:

- The Research Lab Mentality: High intellectual freedom, rapid experimentation, fluid roles, and a focus on novel breakthroughs. This is where the "magic" of emergent properties in models is discovered.

- The Engineering Factory Mentality: Rigorous version control, clear accountability, resilient infrastructure pipelines, and process optimization for continuous training runs that cost millions of dollars.

Initial structures, often hastily assembled to capture talent and initial funding, frequently favor the former. Trouble starts when the need for the latter becomes urgent. Musk’s admission likely points to a failure to bridge this gap: perhaps too much autonomy at the director level crowded out necessary engineering rigor, or perhaps the infrastructure was not managed robustly enough to meet the technical demands of scaling a frontier LLM.

For the technical audience—the AI engineers and CTOs—this is a validation: you cannot iterate indefinitely without robust architecture. The restructuring is the painful transition from a "hacker house" model to an enterprise-grade development environment.

The Competitive Crucible: Running to Keep Pace

The context surrounding xAI is not one of quiet introspection; it is one of intense, existential competition. When a company admits structural flaws, it is often because rivals are shipping faster. The gap between xAI’s roadmap and the expected timeline for successors such as GPT-5 is likely the primary driver.

The race for Artificial General Intelligence (AGI) is often benchmarked by capability releases (e.g., GPT-4o, Gemini Ultra). If xAI’s previous structure inhibited the speed at which they could integrate new research insights, fine-tune models efficiently, or deploy multimodal capabilities—areas where OpenAI and Google are heavily invested—then a reorganization is a survival mechanism.

This competition forces a strategic shift. The new architecture must be laser-focused on **throughput and feature parity.** Businesses relying on xAI for future tooling or investment will be looking for signs that the new structure prioritizes:

- Faster Iteration Cycles: Reducing the time from a research breakthrough to a deployable product update.

- Talent Retention and Alignment: Ensuring that the best minds are working on the highest-leverage tasks, unencumbered by organizational friction.

- Defensible Differentiation: Whether that be superior real-time data access or a unique model architecture, the structure must support a distinct competitive edge, not just mimic existing leaders.

The Safety/Speed Trade-Off in the New Architecture

A critical layer in understanding xAI’s pivot lies in the philosophical tension between development speed and safety protocols. Musk has historically positioned xAI as a "truth-seeking" entity dedicated to understanding the universe, often contrasting its approach with what he views as overly cautious or censored competitors.

However, as models become more powerful, the cost of an unmanaged error (a serious hallucination, an amplified bias, or a security vulnerability) skyrockets. The balancing act between AI safety and speed is highly relevant here. The initial structure may have tipped too far toward speed, producing the foundation that Musk now says was not built right.

The restructuring suggests a formalization of safety and governance. A mature AI organization understands that safety is not a roadblock to speed; it is the *only* sustainable pathway to speed. You cannot safely deploy massive, powerful models if your internal checks and balances are improvised. The rebuilt xAI will likely feature more dedicated governance layers, formal red-teaming processes, and clearer ethical guidelines embedded directly into the engineering workflow, moving beyond simple aspiration to measurable engineering practice.

Implications for Businesses: The Industrialization of AI Development

The takeaway for businesses using or building AI applications extends far beyond Musk’s personal venture. The xAI restructuring is a leading indicator of the industry's required evolution:

1. Demand for Predictable Roadmaps

Companies purchasing enterprise licenses or integrating third-party models need reliability. A startup operating in perpetual chaos, no matter how brilliant, is a risk. The market is beginning to reward companies that demonstrate **organizational stability and predictable deployment cadence**. If xAI succeeds in its restructure, it will be because it delivers reliable, scaled performance. This places pressure on every other player to clean up their organizational houses.

2. Infrastructure as the New Moat

The challenge of scaling LLMs is increasingly less about finding a single genius algorithm and more about managing unprecedented computational loads efficiently. The new xAI structure will likely place heavy emphasis on MLOps (Machine Learning Operations) and cloud infrastructure management. For businesses, this means vetting AI partners based on their operational maturity: their ability to manage data pipelines, distributed training jobs, and GPU clusters securely and cost-effectively.

3. Talent Migration and Organizational Lessons

When a major restructuring occurs, talent shifts. Engineers seeking stability, clear roles, and established processes will be drawn to the newly organized structure, while those valuing total creative freedom might depart. Businesses should watch these talent flows closely. Furthermore, the lessons learned from xAI’s correction—the importance of early clarity in reporting lines and defining the "Minimum Viable Organization" (MVO) for an AI lab—will be codified and adopted by the next wave of ambitious startups.

Actionable Insights for Navigating the AI Maturation Phase

As the AI landscape transitions from the Wild West prototyping phase to an industrial building phase, strategic actors must adapt their perspectives:

For Technical Leaders (CTOs, VPs of Engineering):

Institutionalize Rigor Early: Do not wait for a model to be successful before implementing robust version control, documentation standards, and formalized security audits. The chaos that enables initial breakthrough often paralyzes scalability. Use the xAI pivot as a reminder that organizational design is an engineering problem that needs solving *before* the computational budget becomes too large to manage.
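To make "rigor early" concrete, here is a minimal sketch of one possible practice: refusing to launch a training run unless it carries a complete, hashable record of what it was launched with. Everything in it is an illustrative assumption rather than a description of how xAI or any other lab works; the `RunManifest` class, its fields, and the `validate` helper are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import List


@dataclass
class RunManifest:
    """Hypothetical record of what a training run was launched with."""
    model_name: str
    code_revision: str      # e.g. a version-control commit id, supplied by the caller
    dataset_version: str
    hyperparameters: dict
    owner: str              # the person accountable for the run

    def fingerprint(self) -> str:
        # Serialize with sorted keys so identical settings always hash the same.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def validate(manifest: RunManifest) -> List[str]:
    """Return a list of problems; an empty list means the run may launch."""
    problems = []
    for field_name in ("model_name", "code_revision", "dataset_version", "owner"):
        if not getattr(manifest, field_name).strip():
            problems.append(f"missing {field_name}")
    if not manifest.hyperparameters:
        problems.append("missing hyperparameters")
    return problems


if __name__ == "__main__":
    manifest = RunManifest(
        model_name="demo-model",
        code_revision="abc123",
        dataset_version="v1",
        hyperparameters={"learning_rate": 0.001, "batch_size": 32},
        owner="alice",
    )
    issues = validate(manifest)
    if issues:
        print("Refusing to launch:", ", ".join(issues))
    else:
        print("Launching run", manifest.fingerprint()[:12])
```

The design choice is deliberately modest: a named owner and a reproducible fingerprint cost almost nothing on day one, and they are exactly the kind of accountability that becomes expensive to retrofit once runs are large.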

For Business Strategists and Investors:

Demand Operational Transparency: Look beyond impressive benchmark scores. Ask potential AI partners about their incident response protocols, their data governance frameworks, and their organizational chart for high-stakes projects. A company that cannot clearly explain who owns the safety parameters of a production model is a liability, regardless of the model’s intelligence.

For Policy Makers and Ethicists:

Structure Mirrors Accountability: The restructuring highlights that governance must evolve alongside capability. As leaders formalize internal structures to manage risk, policy efforts should focus on verifying that these internal structures meet public safety standards. A clearly defined organizational structure makes accountability tangible.

Conclusion: The Necessary Pain of Becoming Industrial

Elon Musk’s admission regarding xAI is more than a headline; it is a historical marker. It signals the end of the purely experimental phase for many frontier AI labs and the difficult beginning of the industrial age. Building a world-changing technology requires not just brilliant minds and massive compute power, but also the organizational discipline to manage that power responsibly and effectively over the long term.

The coming months will reveal the shape of the "rebuilt" xAI. If they successfully forge a structure that marries aggressive innovation with mature engineering, they will set the standard not just for their own success, but for every startup attempting to build the next generation of intelligence. The future of AI won't just be defined by better algorithms; it will be defined by better organizations capable of wielding them.